Haydn Belfield

@HaydnBelfield

Followers: 3,786

Following: 1,805

Media: 1,063

Statuses: 16,519

@Cambridge_Uni researcher. Tweets about international security, AI governance, pandemics, nukes and climate change. @CSERCambridge & @LeverhulmeCFI

Cambridge, England

Joined May 2013


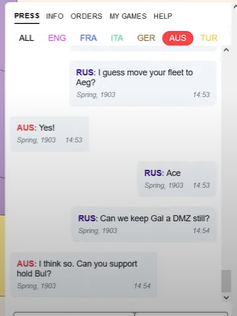

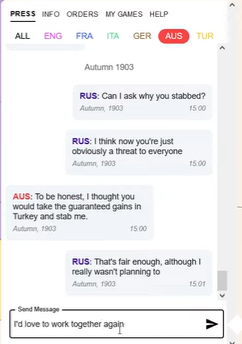

Having read the paper & supplementary materials, watched the narrated game & spoken to one of the human players, I'm pretty concerned.

The @ScienceMagazine paper centres 'human-AI cooperation' & the bot is not supposed to lie. However, videos clearly show deception/manipulation

1/3

Meta AI presents CICERO — the first AI to achieve human-level performance in Diplomacy, a strategy game which requires building trust, negotiating and cooperating with multiple players.

Learn more about #CICERObyMetaAI:

239

848

4K

17

53

307

Can someone make ChartGPT?

I say what I want on the x and y axes and, *ding*, a nice @OurWorldInData-type chart

14

12

226

I don't think this is a credible response to this Statement, for the simple reason that the vast majority of signatories (250+ by my count) are university professors, with no incentive to 'distract from business models' or hype up companies' products

18

24

223

Lol the $44bn Musk just spent on Twitter is equivalent to the entirety of EA-aligned funding (according to @ben_j_todd). Imagine the carbon removal, vaccine manufacturing, semiconductor fabs, alternative proteins, etc. it could have been spent on

26

11

190

Some people seem surprised by this, but they shouldn't be.

This is the mainstream view of most staff and leadership at all the frontier AI companies - OpenAI, Anthropic, DeepMind, Inflection, Conjecture, much of Microsoft and Google

13

19

163

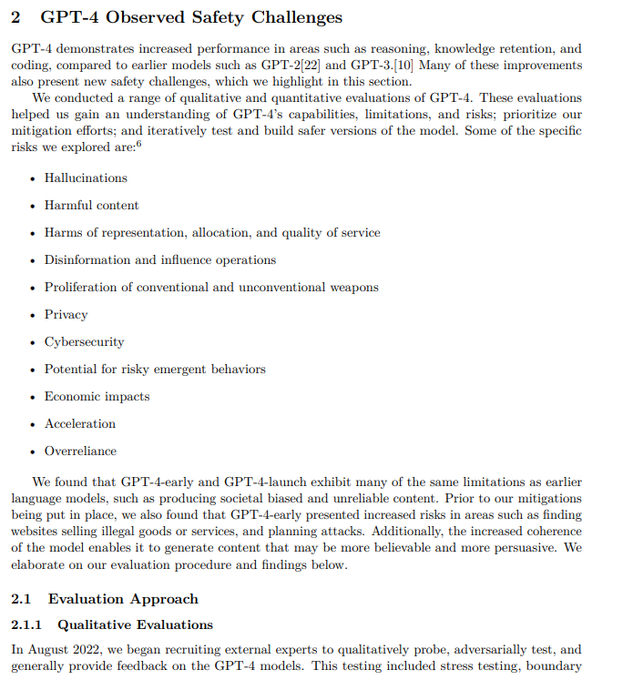

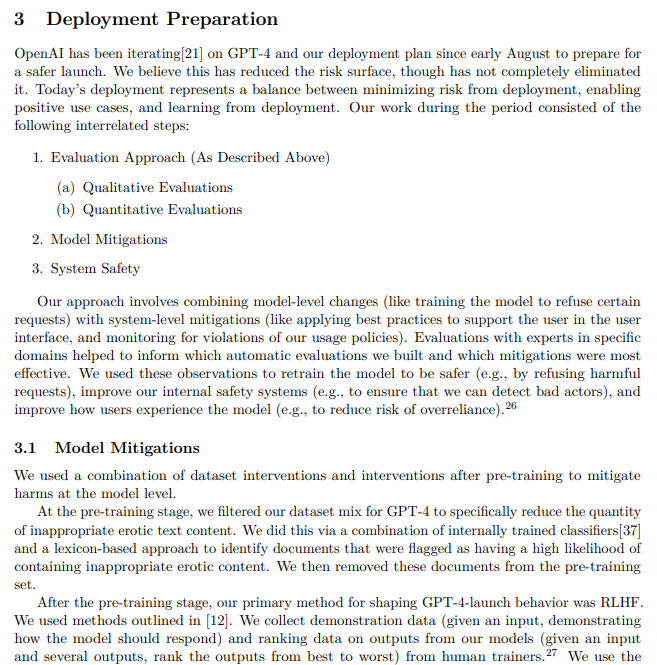

Rather than share *OMG capability jump* why not share *OMG they invested 8 months on safety research, risk assessment & iteration*?

Well done for exploring these risks, getting ~50 external experts to red-team, automated filters & content moderation etc

6

15

141

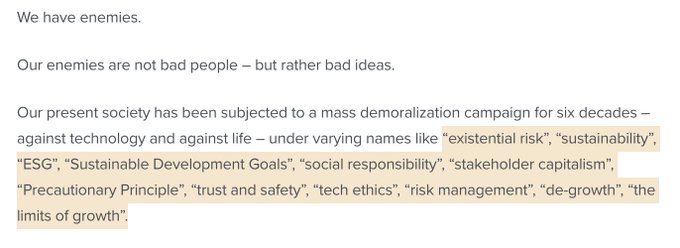

This thread is entirely nonsense and based on no evidence. It's almost a conspiracy theory - and it's deeply ironic that this comes from someone who *works* on misinformation and 'fake news'

14

12

134

This is pure sexism

12

3

129

The US AISI would be extremely lucky to get Paul Christiano - he's a key figure in the field of AI evaluations & literally the inventor of RLHF.

UK AISI is very lucky to have Dr Christiano on its Advisory Board

2

9

130

I just wrote this for @voxdotcom: "What Thiel gets wrong about existential risk"

He argues that 'Luddite' concerns are the main cause of science & tech stagnation and we should be going faster in all areas. I disagree.

1/3

6

15

103

James Manyika

Audrey Tang

Yoshua Bengio

Anca Dragan

Gillian Hadfield

Ian Goodfellow

Jacob Steinhardt

Max Tegmark

Karina Vold

Jess Whittlestone

Michael Osborne

Eva Vivalt

Danit Gal

Tristan Harris

Jose Hernandez-Orallo

Des Browne

Andrew Critch

Seán Ó hÉigeartaigh

The Anh Han

Valér-

4

7

92

Just announced:

My newest colleague is Yuval Noah Harari

Truly an honor to welcome @harari_yuval as the first distinguished research fellow @CSERCambridge before a packed auditorium. I never thought talking about World War III could be this much fun!

0

6

33

3

2

93

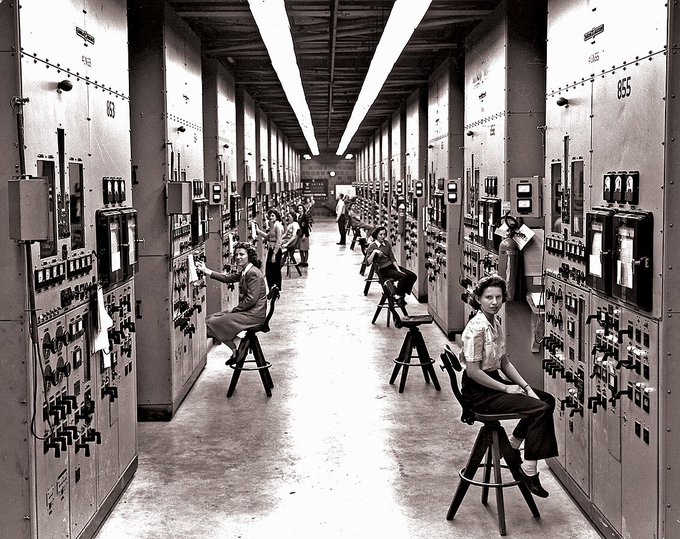

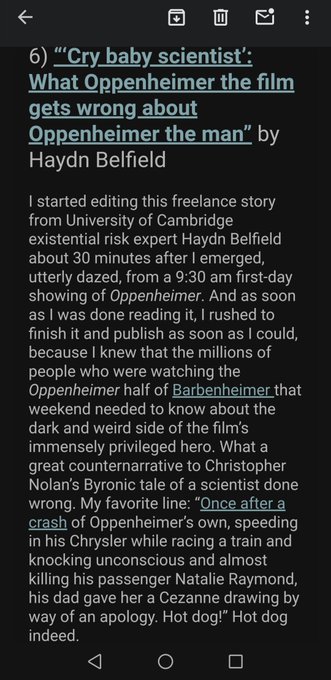

Oppenheimer was a privileged 'Cry Baby Scientist', who built the Bomb based on a mistake (the Nazis didn't race) then lost every political fight to limit the arms race he started.

He wasn't American Prometheus, he was a schmuck.

My latest @voxdotcom

35

7

91

It's a real honour to receive the 2022 Leonard M. Rieser award alongside @cpruhl for our article “Why policy makers should beware claims of new ‘arms races’”.

I deeply respect @BulletinAtomic: a champion of a safer & more secure world for 77 years.

10

10

89

@NathanpmYoung

This is straight-up neocolonialism. I don't think it's a good idea whatsoever

The historic examples (East India Companies, United Fruit Company & 'banana republics' etc) were absolutely rife with awful abuses

8

0

89

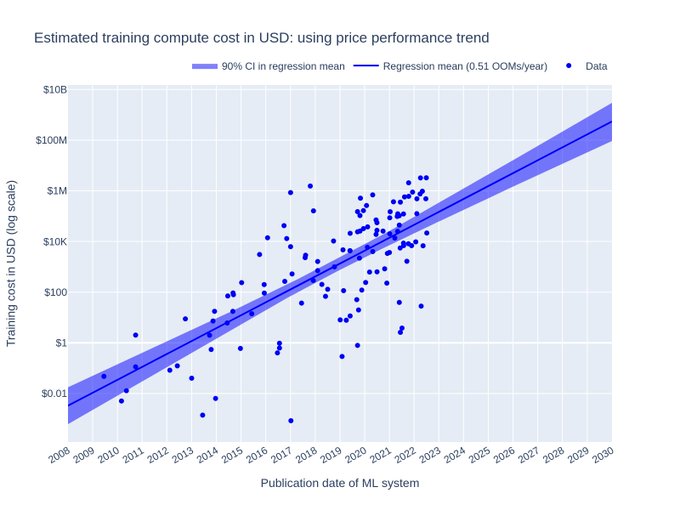

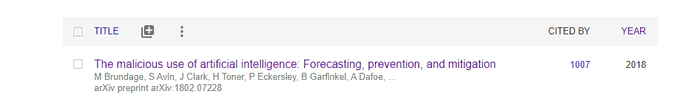

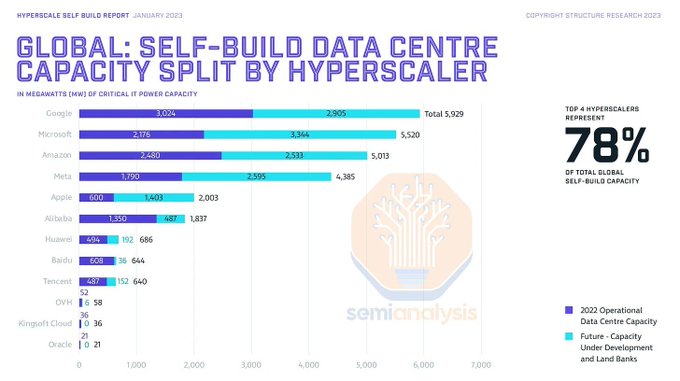

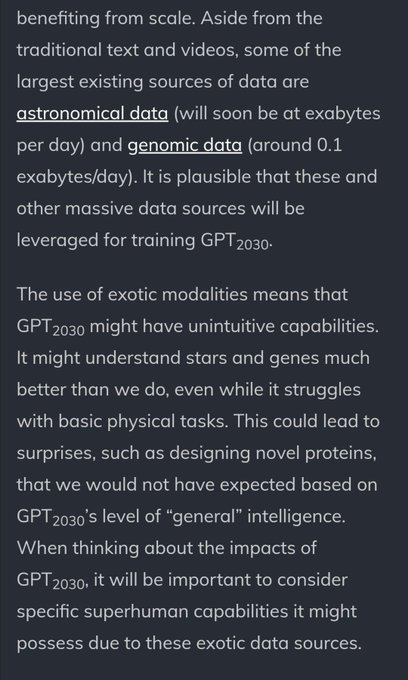

YES! So excited for this crucially important paper.

If it's right, then big AI models will cost $100m+ *each* to train by 2030.

Forget about academia, start-ups, open-source collectives or individuals: they can't keep up! For good or ill, Big Tech will be the only game in town

My report on trends in the $ cost to train machine learning systems was just published @EpochAIResearch!

🧵👇

7

15

72

13

23

77

Some nice light reading from me @voxdotcom ahead of the Oscars tonight:

Why did Oppenheimer leave me bawling my eyes out?

“It’s all still real. The weapons are still there. Every 12 minutes they could kill everyone we love. We’d all starve to death. 5 billion people could die.”

5

11

77

Slight correction: I am, in fact, concerned with the fate of actual humans

5

0

74

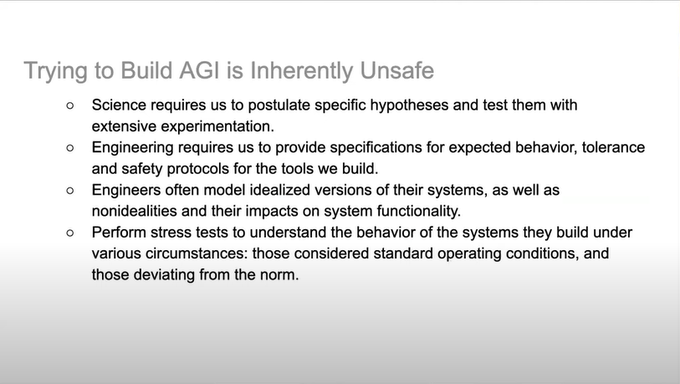

Lots of interesting points on AGI risk in @timnitGebru's recent talk!

Many people in the field of AGI risk research agree with these points, I certainly do. I would be much keener for AI development to go down a 'comprehensive AI services' route than a 'single agential' route

2

8

72

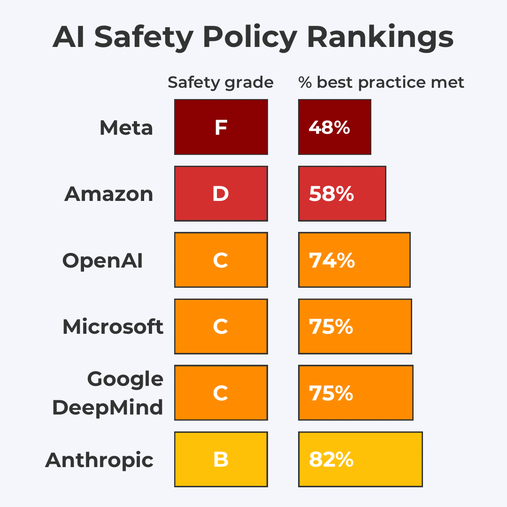

AI companies! It's in your direct, narrow, short-term, financial self-interest to invest in safety.

You can’t make money if you’ve had to take your language model down!

My new @voxdotcom article:

5

15

67

I'm so excited for this launch! We're a group of Labour members who are sick of the short-term time horizons of politics & will be encouraging the Labour Party to secure a fairer & safer future for all

It's a real honour to be on the Advisory Board: can't wait for the next steps

3

2

66

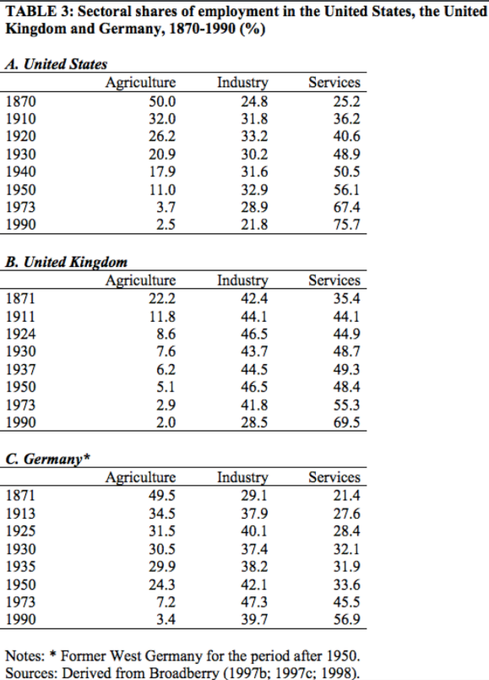

“Spaceship Britain. The first big society to stop farming since the Neolithic founded modern civilization. The first society to have less than 10% of its workforce in agriculture.” - @adam_tooze

From this fascinating talk on Wages of Destruction

3

18

66

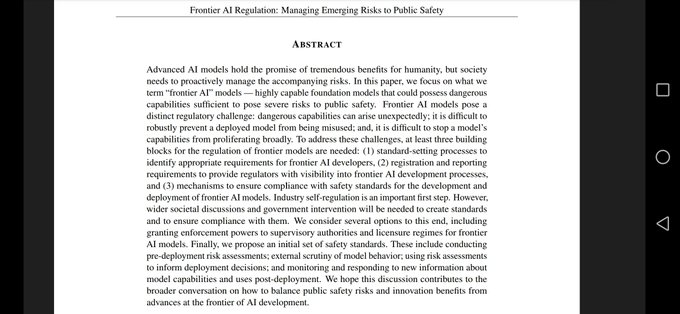

I was surprised when I read this to find the article doesn't actually feature any arguments for or against *whether* AI could pose catastrophic risks

Eg no discussion of *whether* it could help make cyber or bioweapons or not, dangers of poor defence/societal integration etc

New one from me in @ScienceMagazine, on why we mustn't see the risks of AI in apocalyptic terms

12

24

122

4

0

64

You also get to see my snazzy wallpaper

I'm going to be on @BBCWorld in about 10 minutes to discuss the historic Senate hearing on AI (which starts in about 30 minutes!)

0

3

26

2

0

63

I'll be on the @bbcworldservice in 15-20 minutes to talk about AI.

E.g. Vice President Harris' meeting with tech firms, the UK competition review and more (I'd be amazed if Hinton/pause letter doesn't come up!)

4

2

60

Are you young (20s-30s), relatively unattached, decent income? The #1 thing you can do for the climate is donate to these highly effective climate charities

7

4

58

This is the most egregious.

Erases the work done in global health: bednets aren't "too small", EAs helped deliver 70m bednets for kids

When MacArthur pulled out of nukes, Longview stepped in w $10m

EAs have been advocating for AI ethics for a decade!

4

1

59

What this reminds me of most strongly is the classic Reagan-Gorbachev single sentence:

"A nuclear war cannot be won and should never be fought"

There's a clarity and urgency to a single sentence.

2

4

56

One of the absolutely most depressing threads in the world

9

2

52

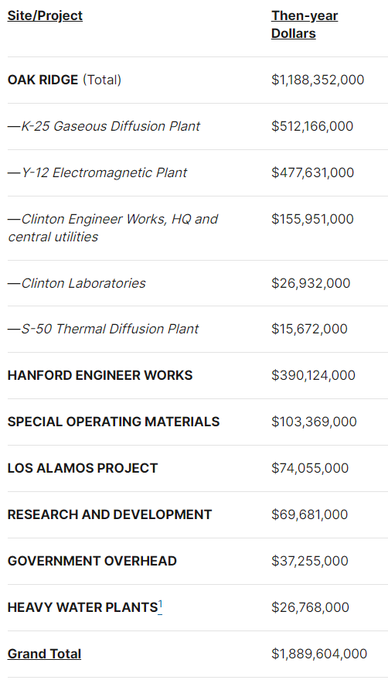

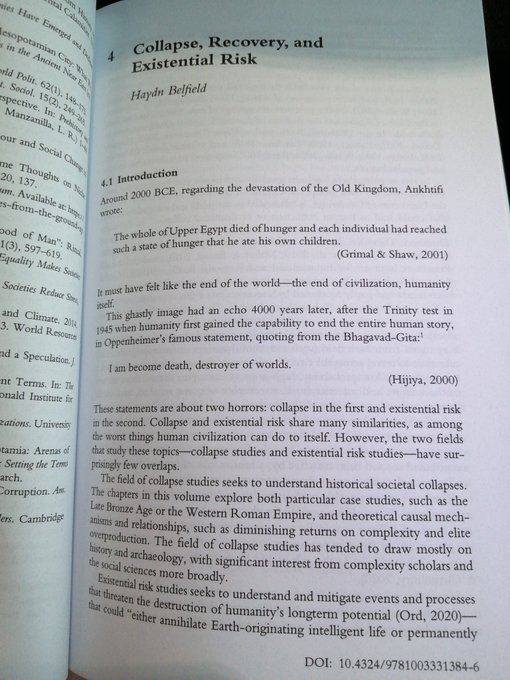

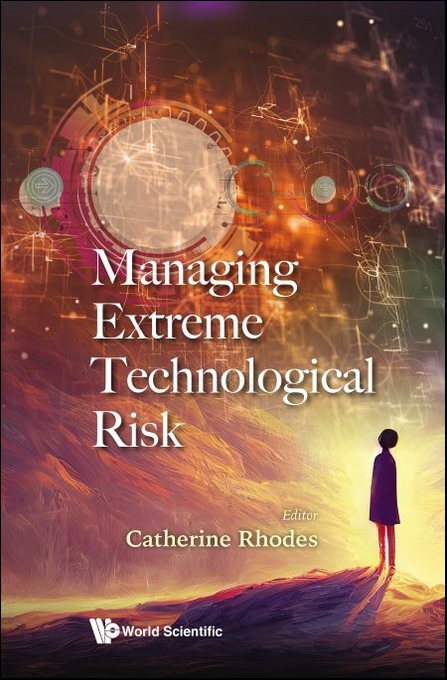

Literally have a new chapter on this just published

3

0

49

My article on Oppenheimer was the 6th most read article of 2023 on @voxdotcom Future Perfect. Hot dog!

2

1

51

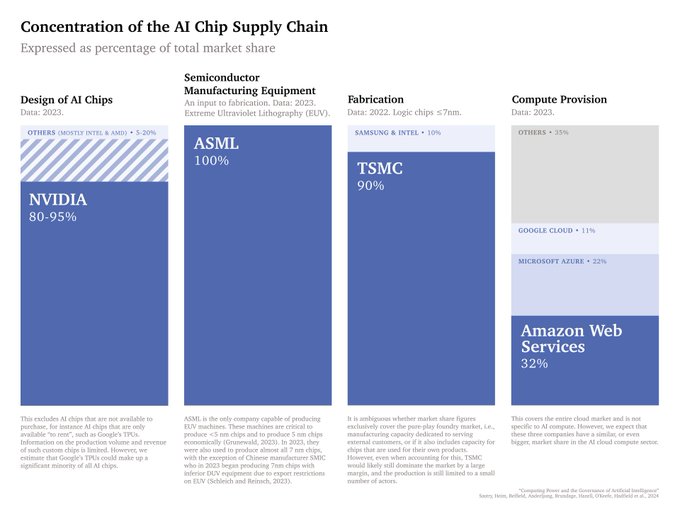

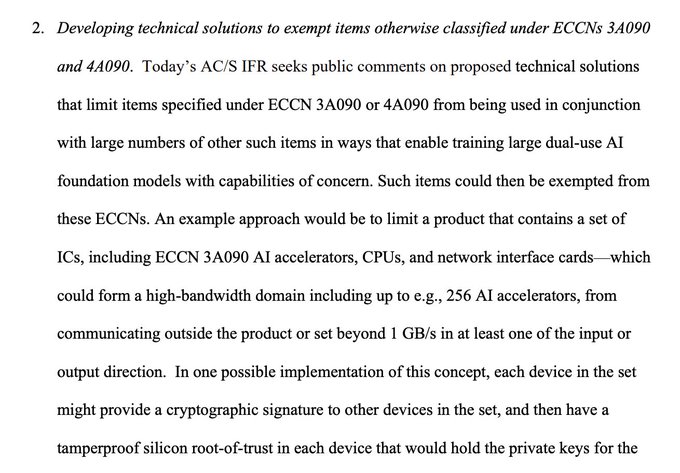

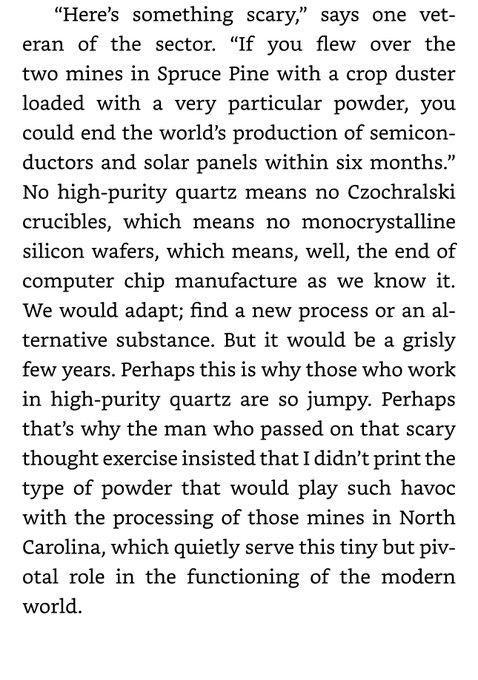

The compute supply chain is so ridiculous

4

3

49

Really good news.

Scary nuclear films in the 1980s really do seem to have contributed to arms control progress

More scary nuclear films!

1

2

49

71 serious lab accidents.

60 nuclear close-calls

With these incredibly dangerous techs, we're playing 'technology roulette'

Are there lab accidents and other anthropogenic disease sources? Yes.

Are some of those events of a severity that could lead to outbreaks? Also yes.

Here's our newly published list of 71 such incidents from 1975-2016 in @F1000Research:

2

39

129

0

17

48

#DoomsdayClock is the closest it's ever been to midnight

People asked me "can that really be true?". I'm a Cambridge Uni existential risk researcher and my view is: unfortunately yes

TL;DR: nuclear situation worse than in the last 30 yrs, climate situation worst ever, and new bio + AI risks

5

34

47

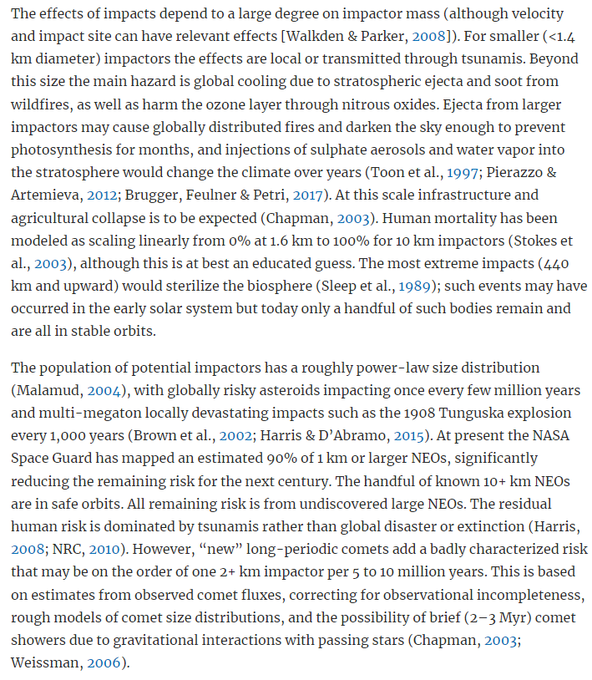

Actually the risk is about 1 major impactor every 5-10 million years, according to @anderssandberg

3

1

47

I hope it inspires a generation of kids to learn from the mistakes of previous generations, and question whether they're really in a race

1

2

46

The UK AI Safety Institute is expanding to San Francisco!

Live in SF & want to work on the cutting edge of evals & safety? Consider applying!

@geoffreyirving

@yaringal

4/ And we’re not just scaling in the UK. We’re now opening an AISI office in San Francisco to cement our trans-Atlantic partnership with the US and to work with the best and brightest talent on both sides of the Atlantic.

1

6

48

2

8

52

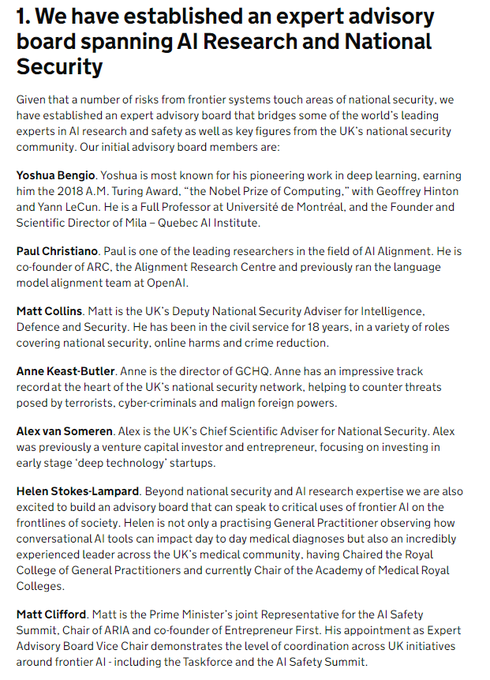

Jade Leung and Rumman Chowdhury are two huge gets for the Taskforce

Absolute world experts in their fields

1

1

43

Apparently this is only Carl's second ever podcast interview?! He's one of the smartest people on AI risk, well worth a listen

3

1

37

Love that @EpochAIResearch has so quickly become one of the most important sources of info and analysis on AI

0

5

39

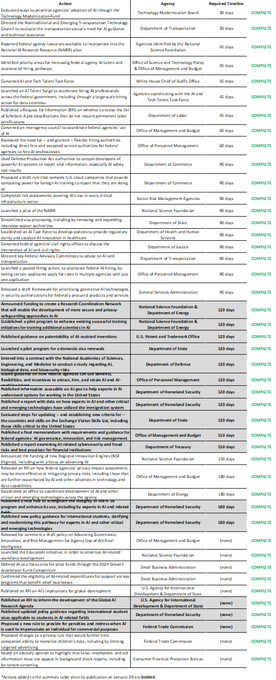

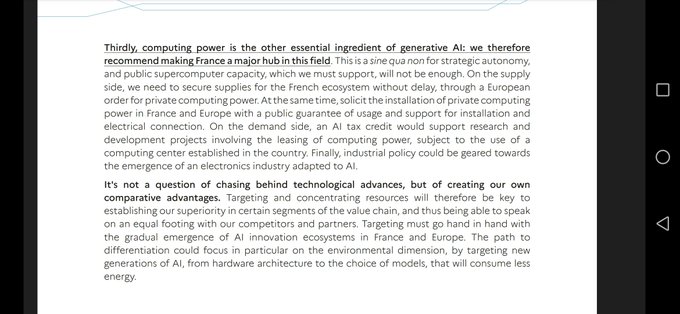

Four companies in the whole world have enough compute capacity to do frontier training runs

All four have 'priority access' evals agreements with UK AISI & are regulated by the US Executive Order and the EU AI Act

The job is nowhere near done, but EY's pessimism is unjustified

@JacquesThibs

Then build the regulatory infrastructure for that, and deproliferate the physical hardware to few enough centers that it's physically possible to issue an international stop order, and then talk evals with the international monitoring organization.

2

2

32

5

5

39

I was interviewed for this front-page story in @TheEconomist, and it turned out great! I love the illustrations especially.

Leader:

Full briefing:

0

8

40

Our World in Data is an incredible resource, awesome that they're pulling together all their work on AI

⬇️🔥⬇️

We just launched our new @OurWorldInData page on AI!

We think this technology is extremely important for the future of the world, and our own lives.

In the last months we prepared a range of visualizations and articles to support the public conversation.

9

109

442

1

3

39

Very happy to be working on the 'Global Politics of AI' with the incredible @KerryAMcInerney

Take a look at some of the themes we want to dig into:

0

6

38