Rajesh / Raj

@rkarmani

Followers: 1,135

Following: 762

Media: 180

Statuses: 5,603

Working until AGI. Playing forever. 42% normie. Equity: @askwhai @farmersfridge @gritdotio Minor: @gumroad Kunduz @replit @maybe @getLinen $BTC $ETH

Silicon Valley 🌄

Joined March 2009




@aSciEnthusiast

@Grady_Booch

Sorry sir, science has always been slower at explaining things than religion. Once again you are late 😜

37

9

254

Chollet with a master understanding of understanding and intelligence.

Remember, his proposed benchmark ARC came well before the GPT hype and has stood the test of time.

2

31

88

Intriguing question: can humans theoretically create a machine that can reason, learn & understand like humans, without humans first understanding how they reason, learn & understand themselves? @ShlomoArgamon @GaryMarcus @Grady_Booch @danieldennett

6

15

30

Thank you @GaryMarcus @Montreal_AI for giving me a chance to listen to AI luminaries, incl. @yudapearl and @kahneman_daniel, who inspired me to get into AI & start AskWhai two yrs ago.

0

7

29

Combining the mysterious magic of deep learning with symbolic search (aka neuro-symbolic or hybrid AI models) is something that @GaryMarcus, @Grady_Booch, and others have predicted for a long time.

Q* & GB's work hint at it as the future of large models.

Here's my conversation with Gary Marcus (@GaryMarcus) about the future of AI systems that may achieve common-sense reasoning with a hybrid of deep learning and symbolic AI.

7

43

217

4

4

27

@zachtratar

If there is something, here are the clues:

Nov 1: SamA says LLMs are not enough. We need a big breakthrough for AGI.

Nov 6: SamA “everything today will seem quaint next year”

Nov 16: SamA “4 times pushed the frontier of discovery, last one within last couple of weeks”

Nov 17:

0

0

25

@garybasin

Rumour is that it's intentionally nerfed for reasons (possibly cost, time, doomerism/regulation, also forcing OAI's hand to show some cards soon).

3

0

24

@SteveStuWill

Aww so awesome. I still wish they could wash dishes, do laundry and fetch water on demand

3

0

15

@RVAwonk

@AdamParkhomenko

Is he only under investigation now because Facebook desperately needs a scapegoat?

1

2

10

@sterlingcrispin

@peterthiel

Could you help by actually articulating the business or human problem that could be solved with even one of the data sets?

That will help AI founders and techies rally around it.

1

0

10

@PedanticRomantc

I see why you’d feel that way initially. Here, one could 1) choose never to sign up for @LambdaSchool, 2) choose to make less than $50k after the program, or 3) if you get lucky, just pay off $30k. Not sure how that’s as atrocious as slavery.

CC @paulg

0

0

11

@pt

@bresslernation

@mattbilinsky

Isn’t this how?

Target ads (by demographics & psychographics) -> UTM params -> for visitors with UTM params, add a cookie (& an account profile if they sign up) -> retarget/drip campaign for the rest of the visitors

Repeat for all subsets/target segments. Now I've got user data in my system.

1

0

11

@Carnage4Life

Hard disagree. It was a culture straight from the heart of the two founders, put together in the post-dot-com recession.

0

0

10

Deep Emissions with Deep Learning!

Clearly, there is an efficiency gap between DL and human cognition. There are better models. “Need a Newton of AI.” @GaryMarcus

3

5

10

@rishmishra

@jamesrapoport

@Superhuman

Someone went to an Ashram for 2 or 3 years and came back with Superhuman.

0

1

9

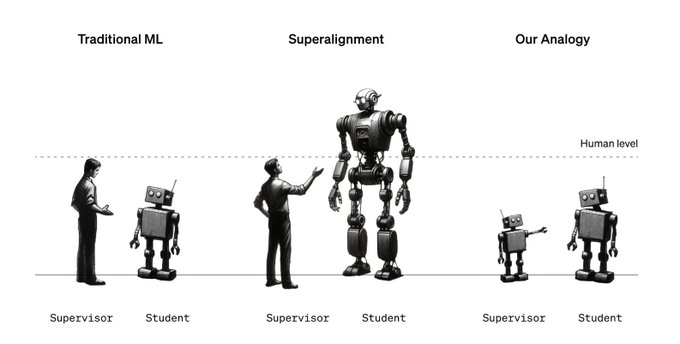

This is an important read, irrespective of any specific company or architecture.

0

2

9

Excellent debate initiated by @GaryMarcus.

What you just did is exactly what GPT-3 cannot do without "understanding", i.e., identify gaps in its own knowledge and then:

1) ask questions to fill those gaps, OR

2) say "I screwed up" with any conviction

It matters; otherwise, you can't rely on it.

2

3

9

If you believe in OpenAI's thesis on AGI, 1,000 random people will determine the fate of humanity from here on.

4

2

9

Notice how he said next-step prediction instead of next-token prediction

2

0

8

@SparklinPM

@paulg

People want:

Sugar & salt ~ McDs

To quench their thirst for info ~ Google

To hoard stuff ~ Amazon

To contribute back ~ Microsoft

Group acceptance/validation ~ FB/LI

Yet to be different ~ Apple

1

0

8

@robfindlay

@ikeadrift

"Archeologists discover a generic plastic chair in the desert, excavating and dusting it with great care."

1

0

8

Today we officially "throw our hat into the ring" and take on the challenge of bringing ethics, transparency and fairness to probably the most crucial human development, i.e., artificial intelligence. I am proud to join an incredible team on this journey.

1

3

7

@GaryMarcus

@karaswisher

He wants everyone to trust him as the AGI prophet.

Looks like he has trust issues within his own team.

1

0

7

Such an honor 🙏🏼

Meet our members' #Grist50 pick: @rkarmani built @zeropercent to solve our massive food waste problem.

1

7

17

0

1

7

@Afinetheorem

At its core, this is about economic harm to copyright creators and holders, not about how the technology works.

1

0

8

@sarah_cone

Funding pullback by NSF, DoD and DARPA from fundamental, long-term technologies over the last 20-30 years.

Overindexing by VCs on safe and familiar bets such as B2B SaaS and social media apps. The US is already behind China on real-time AI and risks falling behind on chip production.

0

0

7

Masters of the Fleets 👨‍✈️👩‍✈️: These folks are always top of mind, hmm, top of the feed @chriscantino @hunterwalk @ljin18 @nbashaw

It’s a thing, Master of the Fleets 🚢

0

0

7

For our supporters, advisors and the team, who believed we could build Zero Percent in Chicago.

#MoxieAwards

0

1

7

@andrewchen

Just came to the Bay Area from Chicago to:

1. Escape winter and get warmer weather/outdoors for the toddler (trigger)

2. Remote work for my spouse (enabler)

3. Build moon (chasing dream)

Sorry, causality is complex 😊

0

0

6

If you'd prefer a hype-free, troll-free, influence-free (afaik) pulse on AI development, follow these accounts:

@fchollet

@Grady_Booch

@MelMitchell1

@rao2z

@GaryMarcus

@karpathy

@chipro

@dileeplearning

Any others?

2

1

6

On my way to the NY @nationswell summit. You can still vote for @zeropercent at . Let's bring the Tech Impact award to Chicago.

0

2

6

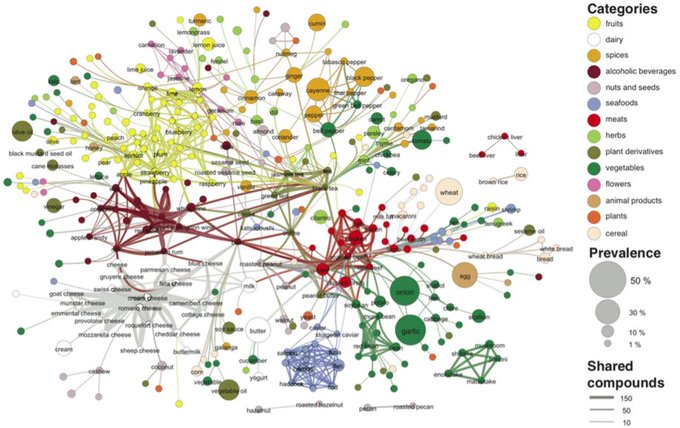

Interesting "AI-based" recipe builder

Hi! We dig the #FoodScience of #Foodpairing. Please go and play with our algorithm on and give us feedback. Thanks!

0

4

8

0

1

6

@AnnaArchivist

Why exclusive access? Give access to every LLM company that contributes their OCR results, so you get the best rather than the first.

0

0

5

@garybasin

Like the Turing Test, AGI is being co-opted away from its core essence. Soon we will realize it's a bad term. Of course, there is a lot of "general" and "intelligence" in GPT-4, but it's not close to biological/human intelligence. Multiple flaws in his arguments.

0

0

5

@satyanutella_

Also, he seems to be idealistic about broad outcomes despite quoting the Gita/Krishna. Even the most powerful/willful humans had far less control over broader outcomes than expected.

0

0

5

@Grady_Booch

Lol, true, except none of the presenters wanted it for themselves. Tim Cook was happy with his glasses.

0

0

5

@garybasin

That's fair. This is a company that learned by putting billions into self-driving with no return so far.

GenAI may turn out to be a money pit. ROI from GPT-4 -> GPT-5 is quite speculative, and might be negative for Google given their core business.

1

0

5

@elonmusk

@tszzl

@Alwaleed_Talal

@Twitter

@Kingdom_KHC

One question, if I may?

Why not buy @Clubhouse? It may be a cheaper and faster path to a free-speech arena. You were on it last year, and if you bring your 80M followers, that’s almost all of Twitter's DAUs 😀.

3

0

5

@benedictevans

Some form of “I’m saving the species”, or no, “I’m saving civilization” (completely different things, btw)

0

0

4

Time to step back and see what the deal is. LLMs are probabilistic & compressed generators of their training data. They do NOT see all inputs/outputs (and permutations) for arbitrary math problems -> hopeless. Can we instead trade inference time?

1

1

4

@ShlomoArgamon

@AOC

I read that nuclear was not even in the original plan. What else should be in the plan?

1

0

2