Irina Rish

@irinarish

Followers

8,627

Following

994

Media

235

Statuses

6,524

prof UdeM/Mila; Canada Excellence Research Chair; AAI Lab head ; INCITE project PI ; CSO

Montreal, QC

Joined October 2011

Pinned Tweet

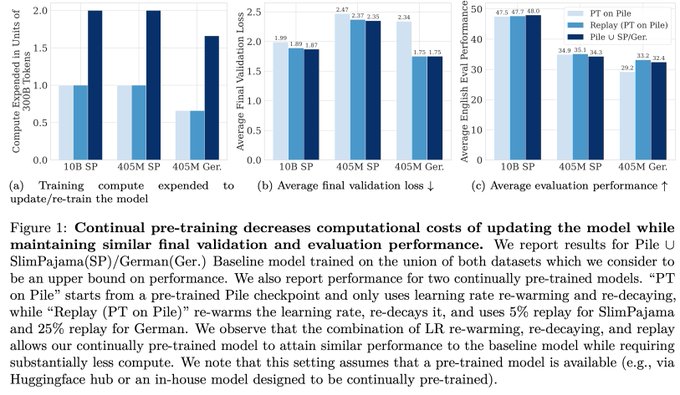

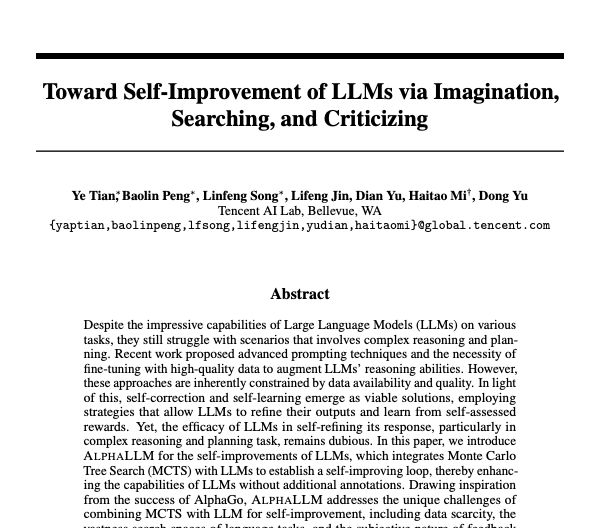

🚨 Open-source AI community - stop building everything from scratch, let's build on each other's (and your own) work over time - continually, as we should! Don't waste previous compute and human effort!

See - simple & useful tips on how to just keep…

Interested in seamlessly updating your

#LLM

on new datasets to avoid wasting previous efforts & compute, all while maintaining performance on past data? Excited to present Simple and Scalable Strategies to Continually Pre-train Large Language Models! 🧵 1/N

4

49

159

3

38

143

A little Xmas present 4 you!🎁🎄🎉 Excited for the first release of our open-source Robin vision-language models built by the team at

@irinarish

’s

@cercaai

lab @

@UMontreal

as part of our INCITE project . Blog/models/code: 🧵

15

73

332

@emilychangtv

@laurenepowell

@marissamayer

@sama

@CondoleezzaRice

I agree with

@pmddomingos

here: the sex of the board members is irrelevant. What is relevant is their understanding of AI and its likely future trajectories. Other personal characteristics are irrelevant.

7

12

273

Thrilled to announce that our joint proposal (

@Mila_Quebec

@laion_ai

@AiEleuther

) on "Scalable Foundation Models for Transferrable Generalist AI" won DOE's INCITE compute award on the Summit supercomputer (~6M V100 hrs/year is a good start towards AGI ;) 1/N

20

27

253

More (belated) Xmas (or New Year :) presents for y'all! 🎁🥂🎉

Our

@cerc_aai

team

@UMontreal

(), jointly with our virtual

@AGICollective

community, is excited to announce the first release of CL-FoMo (Continually-Learning Foundation Models) suite of…

9

22

206

Very excited and honored to receive the Canada Excellence Research Chair (CERC) in Autonomous AI. Thanks to

#NSERC

and all my colleagues and collaborators at

@MILAMontreal

@UMontreal

@IBMResearch

Now we have 7 years to build AGI 😆

15

19

193

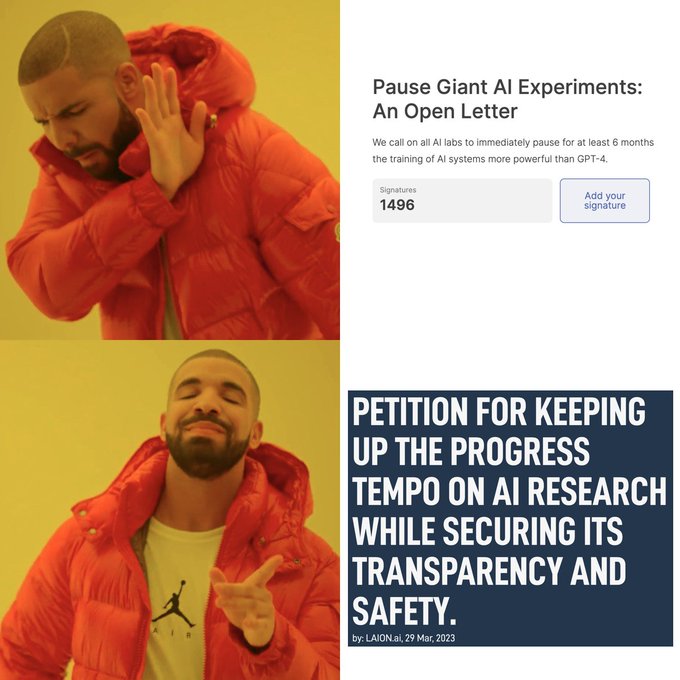

Sign :

#Initiative

Securing Our Digital Future: A CERN for Open Source large-scale AI Research and its Safety in

#European

Union via

@openPetition

7

19

169

"In Silicon Valley, crypto and the metaverse are out. Generative A.I. is in."

@StabilityAI

(nice pic of

@EMostaque

;)

5

25

156

Join our Scaling, Alignment & Open-Source AI workshop Fri, Dec 15 in New Orleans or remotely !

Hear Nick Bostrom, Yoshua Bengio (TBA)

@ylecun

@tegmark

@percyliang

@juliabossmann

@JJitsev

@ethanCaballero

@irinarish

and others discuss the Open Source and…

0

24

152

The recordings of our panel at 6th Scaling ws , moderated by

@FabriceLebrice

, are out - featuring Yoshua Bengio, Nick Bostrom,

@ylecun

@tegmark

@percyliang

@JJitsev

@juliabossman

@norabelrose

@Plinz

@ethanCaballero

@irinarish

.

5

22

89

Proud to announce the first open source time-series foundation model Llama (inspired by

@Meta

's LLaMA - thanks,

@ylecun

;) , built jointly with my awesome collaborators from

@cerc_aai

@UMontreal

@ServiceNowRSRCH

and

@MorganStanley

:

@arjunashok37

@krasul

,

@CluelessAndrew

,…

8

32

150

Happy to collaborate with

@togethercompute

,

@StanfordCRFM

,

@HazyResearch

,

@DS3Lab

,

@ontocord

,

@LAION

@AiEleuther

on building fully-open LLaMAs as a part of our INCITE project - many thanks to

@OLCFGOV

for providing Summit compute!

@UMontreal

@UMontrealDIRO

@Mila_Quebec

5

33

124

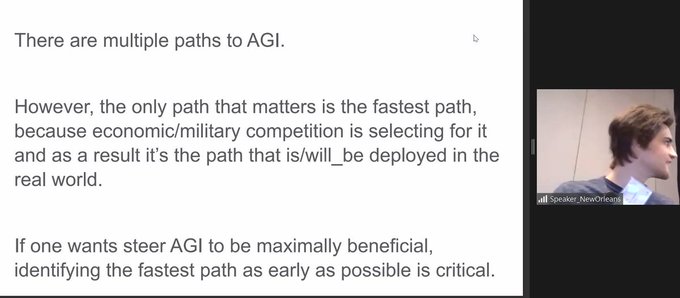

self-improvement/"self-play"/continual interaction/feedback with other AI's and humans is likely the fastest path to AGI - where the latter is not a single agent but rather a community/population of intelligent agents. No intelligent agent, at any level (from insects to humans)…

4

22

122

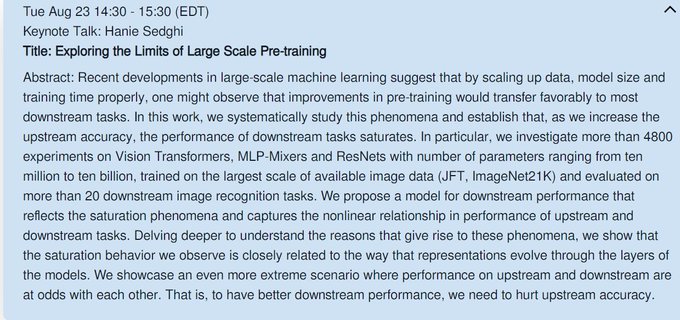

Come to our

#NeuralScalingLaws

workshop at

#ICML

on Fri July 28th - registration and details:

0

7

95

"Social distancing works but in its simplest form it is brutal and economically very damaging" - so Vargha & Yoshua from

#MILAMontreal

propose a very cool solution.

#MILAMontreal

rocks!! :)

#COVID19

#ArtificialIntelligence

8

45

101

@pmddomingos

work-life balance is more important than achievement, and life is more important than work :)

0

1

97

It was a great pleasure to discuss emergence in natural and artificial systems with

@drmichaellevin

and

@MLStreetTalk

! Thank you both!

Check this conversation with Prof. Michael Levin

@drmichaellevin

and Prof. Irina Rish

@irinarish

. We discussed emergence, intelligence and transhumanism. Hope you enjoy!

9

19

70

7

9

94

@cercaai

@UMontreal

This release, led by Robin’s team members,

@kshitijkgupta

,

@DanielZKaplan

@simonramstedt

, builds upon the LLaVA architecture,

@MistralAI

's Mistral-7B and

@Teknium1

's OpenHermes-2.5 pretrained LLMs, along with OpenAI’s CLIP and Google/BigVision’s SigLIP as the image encoder.

4

3

92

@GaryMarcus

@NautilusMag

Deep Learning is doing the EXACT opposite of hitting the wall right now - anyone paying attention to the rapid improvement in generalization/transfer capabilities of large scale models would agree. I think deep learning is actually experiencing a revolution now.

6

9

90

@ylecun

@tegmark

@RishiSunak

@vonderleyen

Agreed. I am a part of that silent pro-open source AI academia majority. But hey, maybe we should get less silent 😀 which btw by no means undermines the need for alignment research. Let's study and steer emerging AI behaviors - all of them.

2

1

83

The recent

@OpenAI

events are just an example of the deepening divide in AI - between the fear and hope mentalities. I am not against thoughtful approaches to safety. But I think that NOT building AGI would be terrible. And also - "fear is not an option". It never ends well.

4

10

79

Agree with

@JeffDean

- this is precisely what we are aiming at, training LLMs continually on Summit/Frontier:

"Incremental learning, ways of training so that one new task does not interfere with another, does not make it so you have catastrophic…

.

@JeffDean

, chief scientist,

@Google

DeepMind and Google Research, Grace Chung, Site Lead, and Engineering Director, Google Australia, and

@ManishGuptaMG1

, Director of Google Research India talk about large language models and the current AI landscape.

@ETtech

@EconomicTimes

2

11

37

5

14

82

@M1ndPrison

@danfaggella

@Abel_TorresM

When Oppenheimer was considering the possibility of an unstoppable chain reaction that would end the world, he did actual calculations and asked other scientists to confirm. The chance was nearly zero, though nothing can ever be an exact zero. When AI "scientists" talk about…

17

7

78

@MichaelTrazzi

everyone's job involves neural networks; maybe not necessarily artificial ones 😂

2

1

71

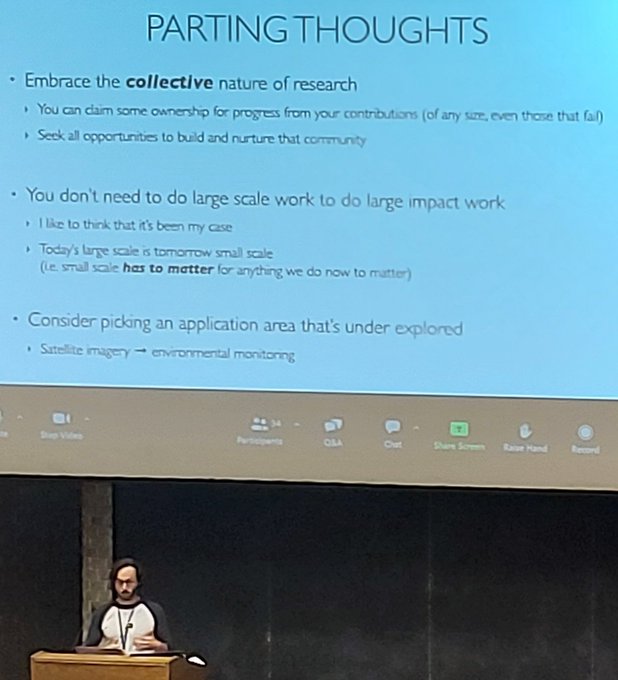

"You don't need to do large scale work to do large impact work" - great take away lesson from

@hugo_larochelle

's plenary at CoLLA today! Yes, large scale is often large impact, but other things can be too. Impact is more important than "just scale".

3

8

73

Could it be that we've been focusing on a wrong AI narrative? Paradigm shift to "AI+Human", from "AI vs Human"? Symbiotic partnership vs Terminator scenario? Developing Human/AI relationship vs X-risk paranoia? Positive psychology/mutual benefits vs control? Thoughts?

32

8

73

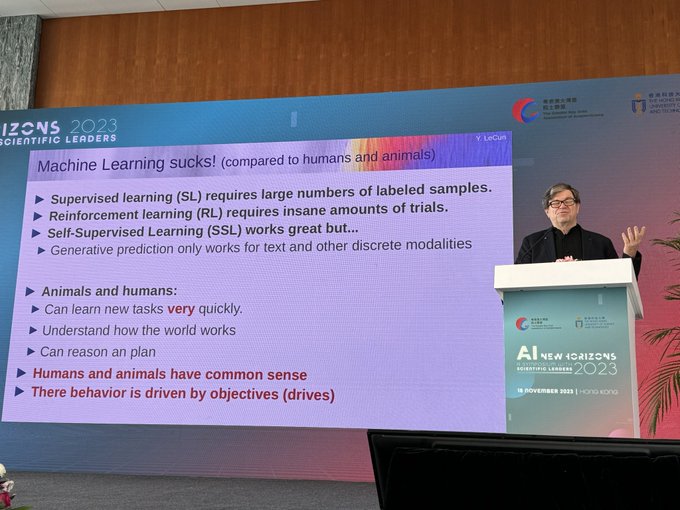

Would love to see the slides! Or,

@ylecun

- maybe you can give this talk again at our Scaling, Alignment and Open-Source AI workshop on Fri Dec 15, besides joining the panel with Nick Bostrom and others?

“Machine learning sucks!” Keynote by

@ylecun

at the AI Symposium: New Horizons 2023 at the Asia Society in Hong Kong.

19

68

421

3

9

66

Would love to attend. Yes, I am well aware JBP is a controversial figure, but that is what makes him more interesting; plus, I find many (though not all) of his ideas interesting and useful. Sure, my opinion is subjective (but isn't this true of all opinions? 🤣)

3

0

58

🔥The 4th Scaling workshop! Fri Dec 2, New Orleans (across NeurIPS)

@AISweden

LLMs,

@StabilityAI

Stable Diffusion,

@laion_ai

LAION5B/OpenCLIP, phase transition (

@tegmark

MIT team), Broken Neural Scaling Laws (

@ethanCaballero

@Mila_Quebec

) and more

see🧵

14

14

60

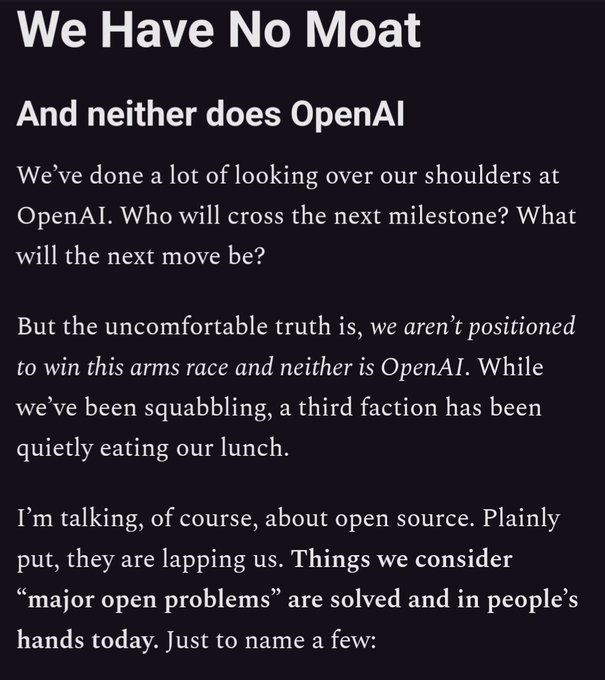

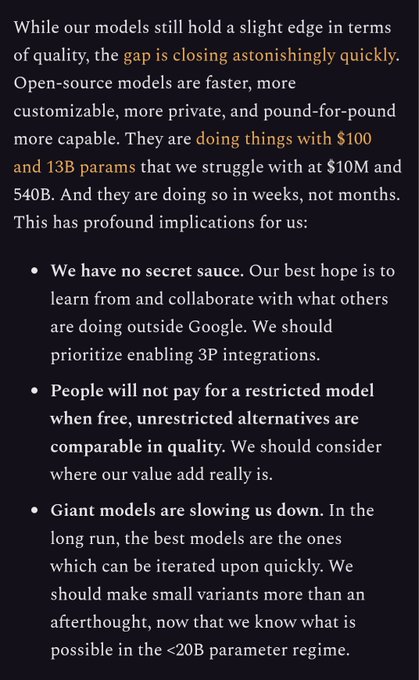

Christoph Schuhmann from

@laion_ai

gets to the core of the controversy about open-source AI: are humans inherently bad or inherently good (or, rather, "it depends on the prompt" :) - and thus NOT open-sourcing might be as dangerous as (or more dangerous than) open-sourcing.

7

12

56

@AndrewYNg

@ylecun

@geoffreyhinton

Important decisions that can suffocate the development of AI progress should not be based on emotion-driven narratives, questionable analogies (nuclear weapons, etc) and subjective prior beliefs, with jumps in reasoning leading to far-reaching conclusions. This is not a solid.…

1

3

58

Exciting to see the first release of RedPajama-INCITE models (open version of LLaMA suite), after weeks of training on Summit! Huge thanks to

@OLCFGOV

for our INCITE compute award, and to the large collaborative team that made this possible!

0

15

57

Bio-plausible beyond-backprop method that works well? Yes! See our ICML spotlight TODAY @ 5.35 PM by Maxence Ernoult! Awesome job, Maxence,

@FabriceLebrice

@amoudgl

@scspinney

in collaboration with

@ebelilov

@tyrell_turing

and Yoshua!

3

13

57

Our "beyond backprop" variant of Difference-Target-Propagation (DTP) is beating state-of-art for DTPs on CIFAR-10 and ImageNet 32×32 - thanks to Max Ernoult

@FabriceLebrice

Abhinav

@Moudgil

,

@scspinney

@ebelilov

@tyrell_turing

and Yoshua Bengio!

1

13

54

AGI is still a taboo in some academic circles. But who cares about academic circles? 😉

@karpathy

@catherineols

AGI was definitely a big taboo in academic circles (still is to some degree), but Kurzweil's "the singularity is near" came out in 2005 and was pretty popular, so it was definitely already on people's minds. There was also an AGI internet forum:

2

2

23

7

8

53

Panel discussion: is scale all you need? how scaling in brains compares to scaling in ANNs?

@SuryaGanguli

makes a good point that different parts of the brain have different scaling exponents/scaled non-homogeneously -

@JJitsev

(a neuroscientist as well) mainly agrees.

3

5

54

How on earth did I end up on that list? No idea 😉

Which AI Brains left us shaken this year? Who blew our minds? We're excited to announce the World Summit AI community's top 50 Innovators official list 2022💥 ➡️?

Who is your top pick?

#WSAITop50

#AIBrains

Join the conversation by commenting below 👇

1

12

26

9

4

54

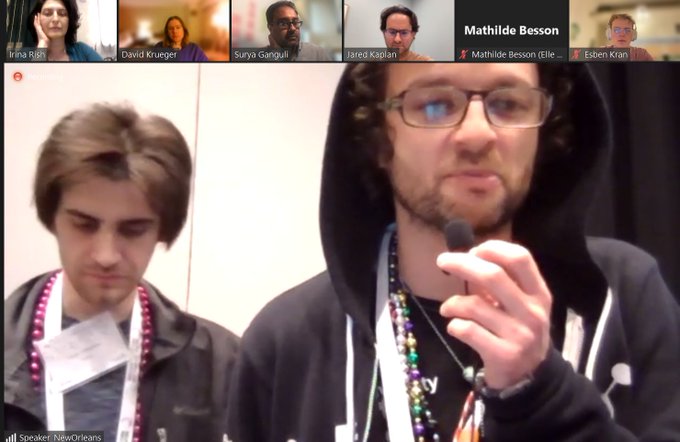

This shirt is the best meme ever - ordering more for students taking my neural scaling class this winter (ran out of shirts very quickly).

@jordiae

do you have bulk discounts at ? 😉

@ethanCaballero

we need new designs as well - including alignment 😉

3

2

52

AI researchers should learn more from neuroscientists, philosophers - and meditators, indeed - these fields studied the mind for centuries before AI was even born, though, like all youth, AI can be obnoxious (though often wrong) and won't listen to the "boomers" 😀

7

5

52

@davidchalmers42

My version of the scaling hypothesis is a bit different: I believe that "some kind of" networks and "some kind of" scaling will lead to AGI (evolution did it already) - but what kinds of networks and what way of scaling - that's a billion-dollar question ;)

7

6

49

@ilyasut

It must be an emotional roller-coaster for everyone involved in the recent events... I don't want to state any opinion here, just send virtual hugs - to both sides. Whatever the disagreements are, this is... stressful.

3

1

50

Panel discussion next Fri Dec 15, 4pm @ Scaling, Alignment & Open-Source AI workshop w/ Nick Bostrom, Yoshua Bengio,

@ylecun

@tegmark

@percyliang

@JJitsev

@juliabossmann

@ethanCaballero

Details :

Next Friday our

#panel

will be on

#OpenSource

and the

#FuturOfAI

: Maximizing Benefits while Reducing Risks, with Nick Bostrom, Yann LeCun, Yoshua Bengio, Percy Liang, Jenia Jitsev, Julia Bossmann, Ethan Caballero and

@irinarish

0

1

12

2

4

49

To all students out there looking for a PhD opportunity at Mila - don't miss out on this! Guillaume's lab is among the most exciting research places you will find! I am not exaggerating.

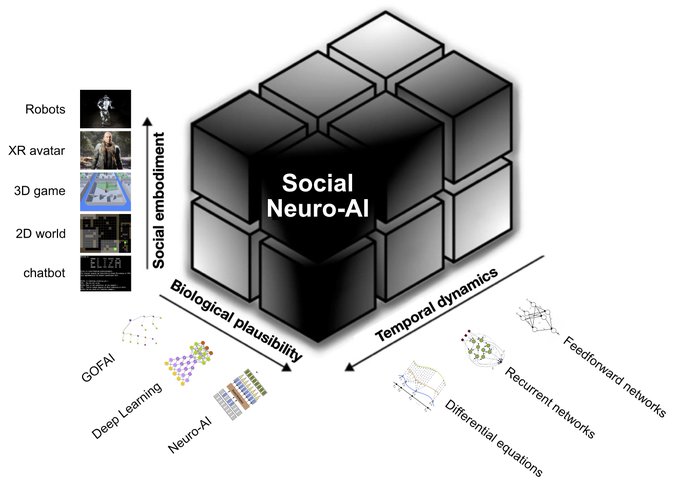

Fully-funded PhD in my lab with

@UMontrealDIRO

&

@Mila_Quebec

: "Neuro-inspired cognitive architectures for artificial metacognition and social learning"

#PleaseRT

#JobAlert

12

110

308

0

7

49

Panel discussion last week at AAAI workshop on Systems Neuroscience Approaches to General Intelligence (SynAGI) with

@BlumLenore

@Plinz

@introspection

@kanair

@JamesKozloski

2

10

47

What are the chances you meet some random guys in a bar in Tremblant (who are in the area to test-drive new motorcycle models), and (almost) the first thing they say is: "Have you heard about Stable Diffusion? It's so cool, I use it to generate novel bike designs" 🤣

@EMostaque

1

1

48

Thank you. And, my 2023 New Year's resolution - to finally find time to learn French ;) Though it's tough with all other things happening at the same time 😂. My 2023 to do list:

1. Create AGI.

2. Upload my mind on the cloud.

3. Learn French - after 1 & 2 it should be easy 🤣

Congratulations to Irina Rish, Canada Excellence Research Chair in Autonomous Artificial Intelligence. (in French)

@irinarish

@UMontreal

@NSERC_CRSNG

1

2

11

4

3

47

@NandoDF

Scale is a necessary component of an emergent behavior; but is it sufficient? Does it matter WHAT you scale and HOW? Whale vs primate brain? Are transformers missing important capabilities without feedback loops?

@NandoDF

@ilyasut

@ethancaballero

@tyrell_turing

@introspection

0

1

47

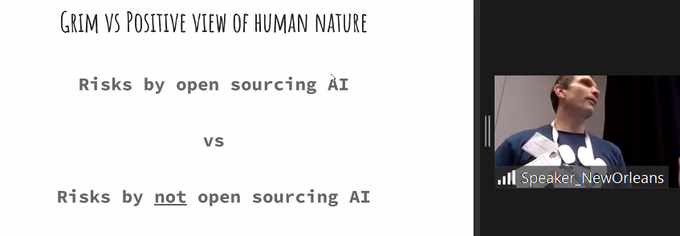

Yes,

@ylecun

! We are witnessing the open source (r)evolution that will make closed-source "dinosaurs" obsolete. This Cambrian explosion of open source AI species will dominate language, multimodal and eventually RL ecosystem. There is no turning back 😀

5

8

46

Last week,

@cerc_aai

ran an exciting AI

@Scale

event at

@Mila_Quebec

- videos posted here: ! Huge thanks to

@QuentinAnthon15

for amazing tutorials, and to CERC-AAI team for great overviews of foundation models built on

@OLCFGOV

's Summit & Frontier!

2

10

46

A nice article explaining why speeding up rather than slowing down AI development may be a better way to go (besides, slowing down today's fast-paced AI progress seems to be as realistic as stopping a tsunami).

6

10

47

Feedback loops are essential for brain functioning; input-less spontaneous activity is a hallmark of natural but not artificial NNs. Are we sure AGI can be achieved without them? Why or why not?

@ilyasut

@ethancaballero

5

5

44