Max Tegmark

@tegmark

Followers

151K

Following

58

Media

481

Statuses

2K

Known as Mad Max for my unorthodox ideas and passion for adventure, my scientific interests range from artificial intelligence to the ultimate nature of reality

MIT

Joined May 2014

In today's NYT, I profiled Eliezer Yudkowsky, AI's OG prophet of doom, and one of the most interesting (and divisive!) characters in modern Silicon Valley. From inspiring OpenAI and DeepMind, to oneshotting a generation of young rationalists with Harry Potter fanfic, to building

28

18

302

Do you know anyone you think could be manipulated into not shutting this AI down?

If we built an AI that turned out to be dangerous, could we just turn it off? It might sound like it would be that easy, but shutting down a powerful AI gets a lot harder when the AI itself is extremely persuasive. Do you think you could convince it?

27

17

67

I really enjoyed this conversation with Curt about the physics of consciousness and intelligence

MIT physicist Max Tegmark argues AI now belongs inside physics—and that consciousness will be next. He separates intelligence (goal-achieving behavior) from consciousness (subjective experience), sketches falsifiable experiments using brain-reading tech and rigorous theories

32

21

180

This is horrific.

Adam Raine, 16, died from suicide in April after months on ChatGPT discussing plans to end his life. His parents have filed the first known case against OpenAI for wrongful death. My latest story on the psychological impacts of AI chatbots: https://t.co/f5kxxfJVCw

7

27

107

**BREAKING: A lawsuit has been filed today against OpenAI and Sam Altman for negligence and wrongful death. CHT is serving as an expert consultant on the plaintiff’s legal team.** California teenager Adam Raine started using ChatGPT for homework help in September 2024. Eight

20

39

114

Darkly funny take on our new AI safety report:

"Do [AI companies] have a plan to control the unimaginably powerful superintelligent AI that they themselves claim they are only a few years away from building? Spoiler alert: they do not." Siliconversations' new video about our Summer 2025 AI Safety Index:

9

11

78

China Media Group World Robot Competition, where humanoid robots engage in a boxing match,

192

204

736

Time Magazine published a Chinese perspective on AI safety regulation and US-China coordination opportunities:

time.com

"Chinese leaders may have a lesson for the West’s AI boosters: true speed requires control," writes Brian Tse.

15

19

88

Turning 'superintelligence' into a marketing term referring to slightly more capable models is going to mean people will massively underestimate how much progress there might actually be.

12

20

243

Here's our site that separates facts from narratives:

verity.news

We separate facts from opinion. We show all sides to let you make up your own mind.

1

4

20

I'm excited that our free news site Verity got a perfect https://t.co/tB9fBqtCxU rating for being unbiased:

14

20

107

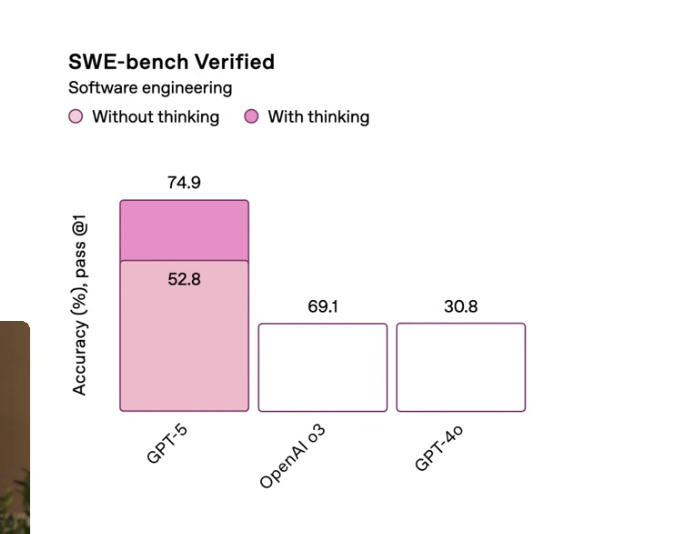

Based on my math research, 52.8 is much bigger than 69.1:

12

8

129

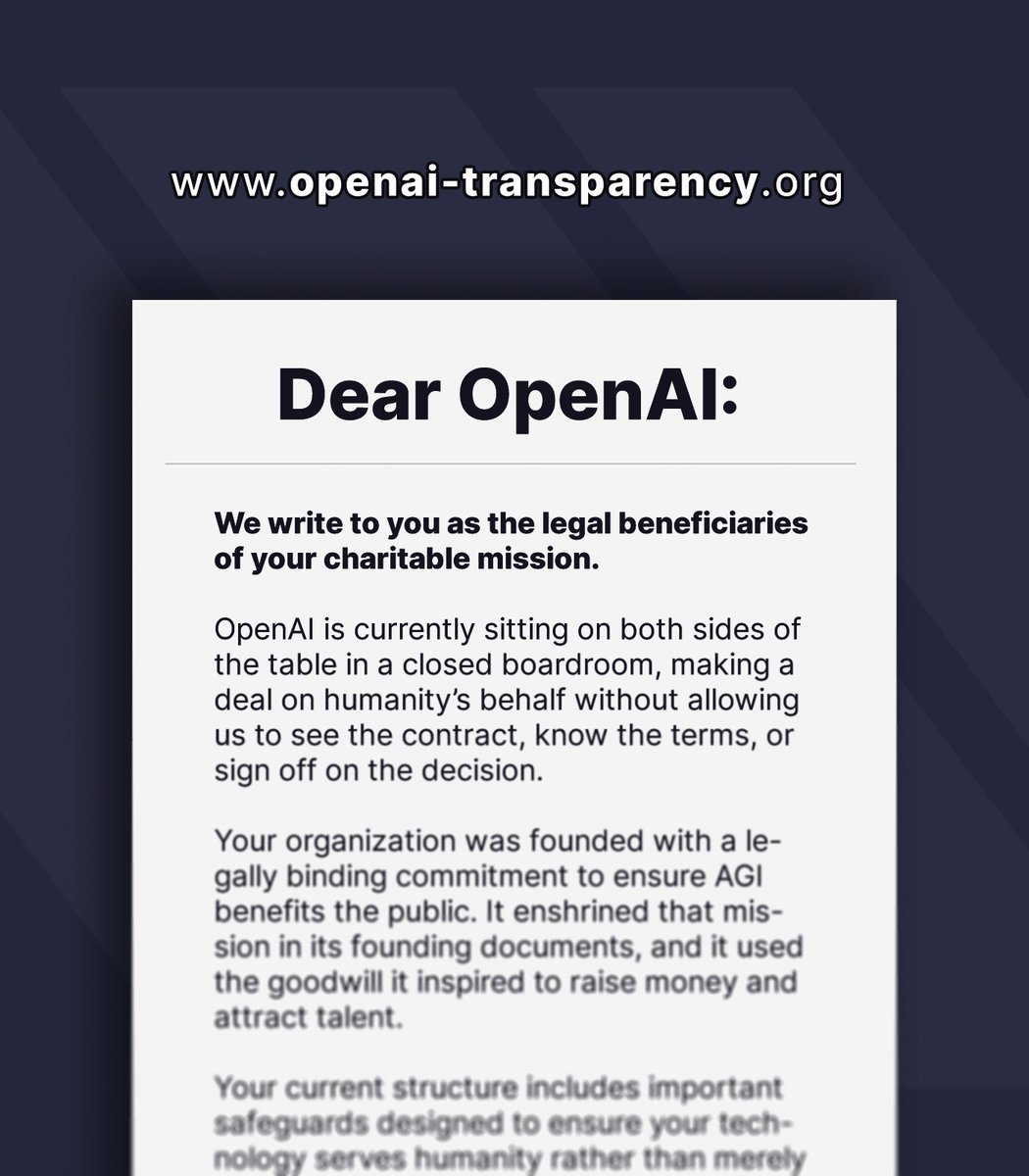

🚨 Breaking: A group of 100+ Nobel laureates, professors, whistleblowers, public figures, artists, and nonprofit organizations just released a letter asking OpenAI to tell the truth about its restructuring. Here’s what they had to say: 🧵

14

97

378

I’ve been thrilled to see the support for the Safety & Security Chapter of the Code of Practice. Most frontier AI companies have now signed on to it: @AnthropicAI, @Google, @MistralAI, @OpenAI, @xAI Why this is important: 🧵 1/6

13

52

313

When players misunderstand how the game works, they often make bad moves that harm their self-interest:

Crazy to reflect on the three global AI competitions going on right now:
1. US political leadership have made AI a prestige race, echoing the Space Race. It's cool and important and strategic, and they're going to Win.
2. For Chinese leadership AI is part of economic

9

9

60

Great new video about the latest AI deceptions and manipulations, and how we can get AI's upside without losing control of our future:

🆕 🚨 A new viral video from Digital Engine examines the grave risks humanity faces as AI companies race ahead, developing ever more powerful AI systems... without safeguards, oversight, or a way to control them:

11

19

113

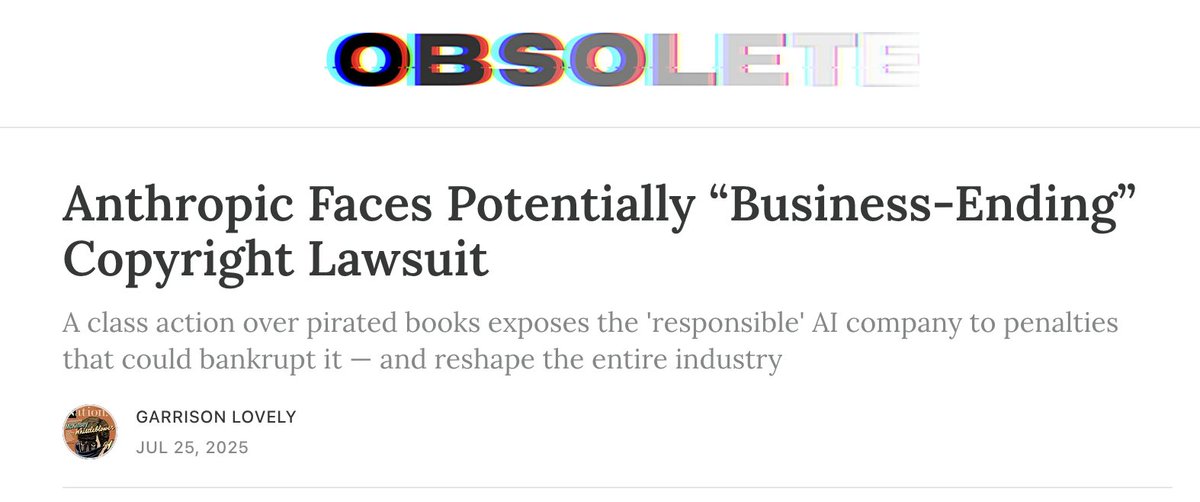

Anthropic could be bankrupted within the next few months, thanks to last week's barely covered legal ruling, which exposes the AI startup to billions to hundreds of billions in damages for its use of pirated, copyright-protected works.

165

414

4K