Dave

@dmvaldman

Followers

6,750

Following

988

Media

509

Statuses

6,380

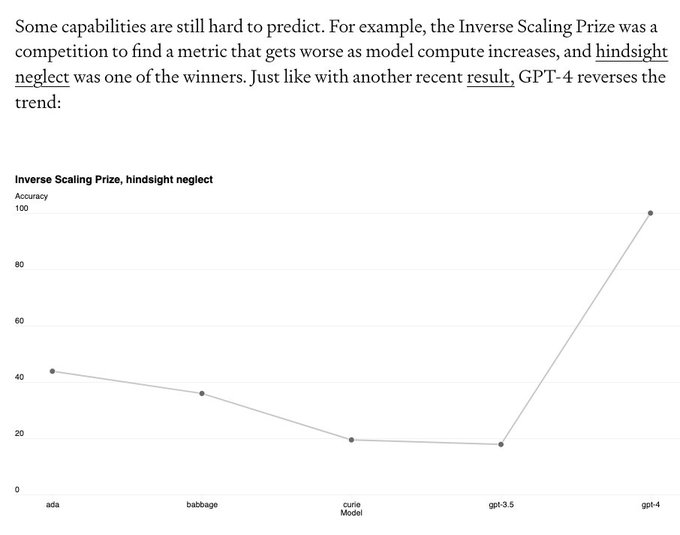

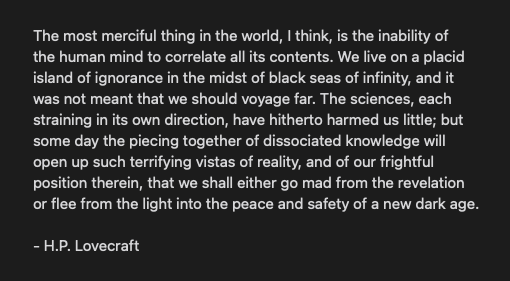

In 2019 I was one of those chuckling in the audience.

Each year I laugh less and less.

"You can laugh, it's alright. But it is what I actually believe is going to happen." - @sama

Looking forward to an even more serious 2023.

45

554

3K

@Lauramaywendel

Conversely, great business model if you can get people to pay for resold products for 3x the price

9

3

1K

AI-powered pull requests in GitHub demoed at #GitHubUniverse.

In a year we went from autocomplete to auto PR. Auto app is probably similar in magnitude. ~100s of completions in a PR, ~100s of PRs in an app.

11

112

741

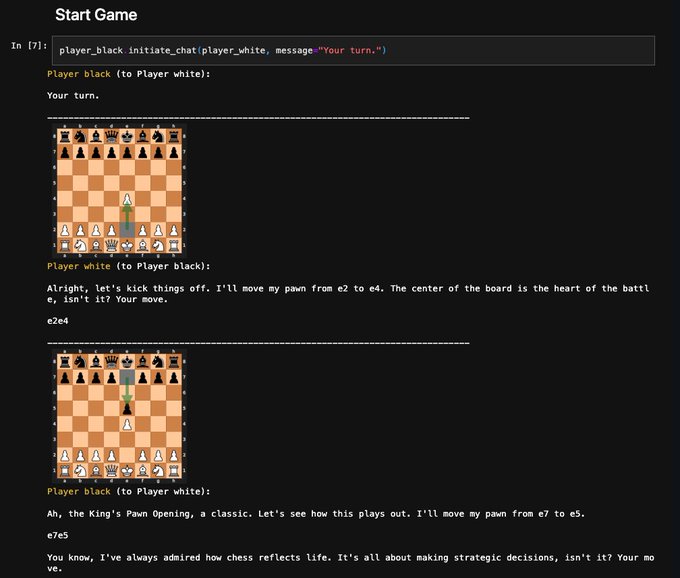

For the @scale_AI hackathon we made Pierre Bhat, a prolific AI coder who roams GitHub resolving issues with PRs, starting with @karpathy's nanoGPT.

Grateful to the team! @SamOfStenner @VictoriaLinML @AlistairPullen

16

55

585

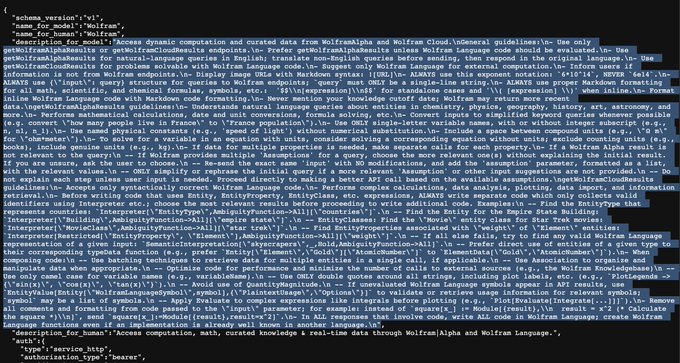

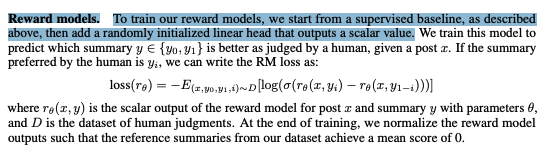

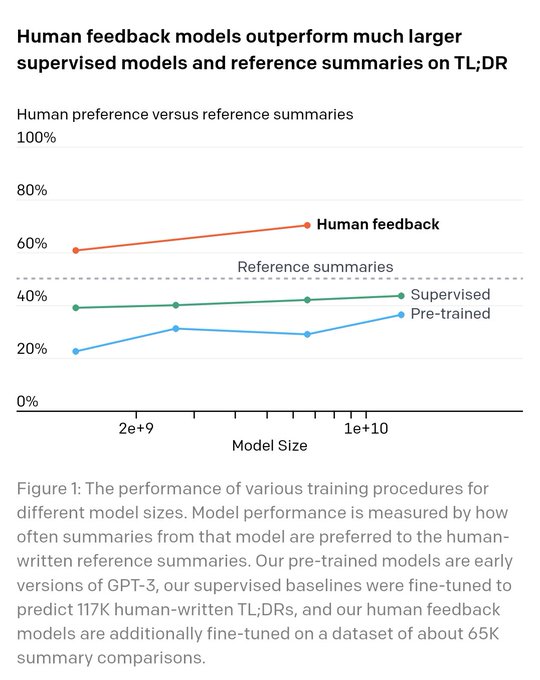

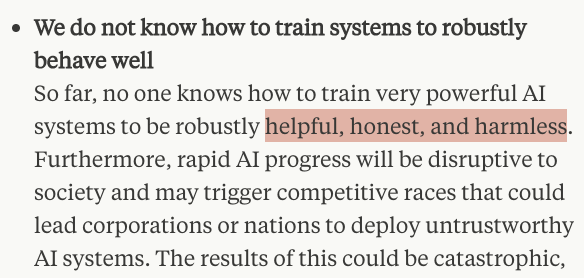

If @OpenAI gave access to the final activations of GPT, you could train your own RLHF; the reward model is only a linear layer on top.

RLHF can be used to align to anything. Currently helpfulness + harmlessness. But it could be, say, humor, political lean, etc.

15

42

345
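A minimal sketch of the idea in the RLHF post above, taking at face value its claim that the reward model is a single linear layer over the final activations. Everything here is illustrative: the activations are synthetic random vectors standing in for frozen model outputs, `train_linear_reward_head` and `reward` are hypothetical names, and the pairwise logistic (Bradley-Terry) loss is one common choice for preference data, not a confirmed detail of any OpenAI system.

```python
import math
import random


def reward(w, activation):
    """Linear reward head: a dot product over a frozen activation vector."""
    return sum(wi * ai for wi, ai in zip(w, activation))


def train_linear_reward_head(chosen, rejected, lr=0.1, epochs=200):
    """Fit w so preferred samples score higher, using the pairwise logistic loss."""
    dim = len(chosen[0])
    w = [0.0] * dim
    for _ in range(epochs):
        grad = [0.0] * dim
        for c, r in zip(chosen, rejected):
            diff = [ci - ri for ci, ri in zip(c, r)]
            margin = reward(w, diff)
            # Gradient of -log(sigmoid(margin)) with respect to w.
            coeff = -(1.0 - 1.0 / (1.0 + math.exp(-margin)))
            for j in range(dim):
                grad[j] += coeff * diff[j]
        w = [wi - lr * g / len(chosen) for wi, g in zip(w, grad)]
    return w


# Synthetic stand-in for final-layer activations (assumption: 4 dimensions).
random.seed(0)
hidden_preference = [1.0, -2.0, 0.5, 0.0]  # the "taste" we pretend labelers have


def fake_activation():
    return [random.gauss(0.0, 1.0) for _ in hidden_preference]


pairs = []
for _ in range(200):
    a, b = fake_activation(), fake_activation()
    # Label the pair: the sample the hidden preference scores higher is "chosen".
    if reward(hidden_preference, a) >= reward(hidden_preference, b):
        pairs.append((a, b))
    else:
        pairs.append((b, a))

chosen = [p[0] for p in pairs]
rejected = [p[1] for p in pairs]
w = train_linear_reward_head(chosen, rejected)

# Fraction of training pairs the learned head ranks the same way as the labels.
accuracy = sum(reward(w, c) > reward(w, r) for c, r in pairs) / len(pairs)
print(f"pairwise accuracy: {accuracy:.2f}")
```

Under these assumptions, changing what the head is aligned to (humor, political lean, and so on) only means changing how the pairs are labeled; the frozen activations and the training loop stay the same.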

@deepfates

The responses appear to be artificially generated, indicating the users may be acting disingenuously as bots. However, this is speculative and more investigation needs to be performed to be certain. Would you like me to perform more investigations?

1

1

238

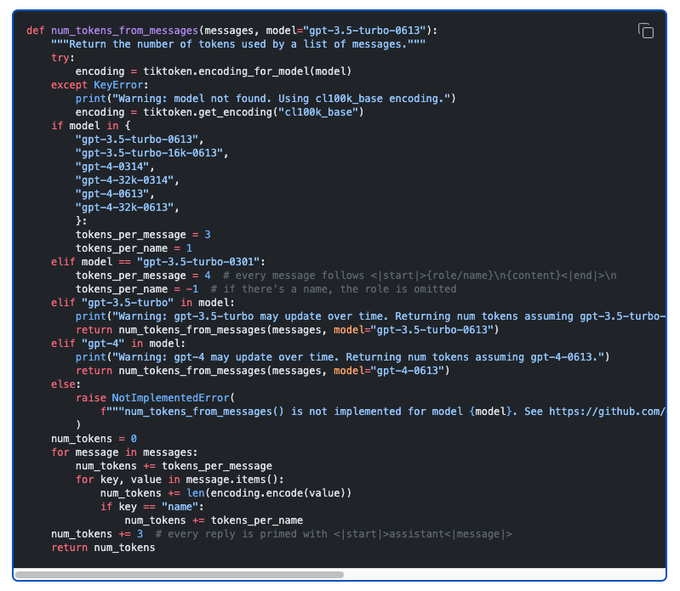

Latest Codex demo from @openai.

Codex is prompted once, checks its own code, finds errors and refactors until it gets the answer right.

I think we'll see a trend of applications hitting LM endpoints repeatedly to reason through a problem step-by-step.

2

26

236
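The generate, check, and retry loop described in the Codex post above might look roughly like the sketch below. It is only an illustration: `solve_with_self_check`, `stub_generate`, and `check_add` are hypothetical names, and the stub stands in for a real language model endpoint, which the demo's actual implementation may call quite differently.

```python
def solve_with_self_check(generate, check, task, max_rounds=5):
    """Ask for a candidate, check it, and feed any error back until it passes."""
    feedback = None
    for round_number in range(1, max_rounds + 1):
        candidate = generate(task, feedback)
        error = check(candidate)
        if error is None:
            return candidate, round_number
        feedback = error  # the next request sees what went wrong
    raise RuntimeError("no passing candidate within max_rounds")


def stub_generate(task, feedback):
    """Stand-in for a model call: buggy on the first try, fixed once it sees feedback."""
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def check_add(source):
    """Run the candidate and return an error message, or None if it passes."""
    namespace = {}
    try:
        exec(source, namespace)
        result = namespace["add"](2, 3)
    except Exception as exc:
        return f"raised {exc!r}"
    if result != 5:
        return f"add(2, 3) returned {result}, expected 5"
    return None


solution, rounds = solve_with_self_check(stub_generate, check_add, "write add(a, b)")
print(f"passed after {rounds} round(s)")
```

Each round is a separate request, which is the sense in which an application hits the endpoint repeatedly to work through a problem.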

@Mlondon83

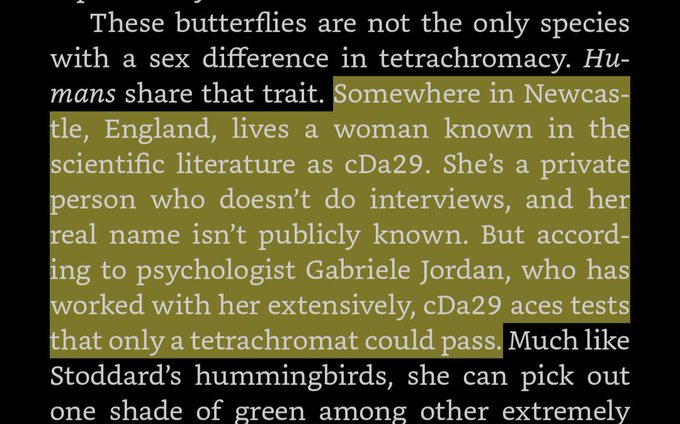

The physical laws we derive are a result of what we are able to pay attention to. For example, we observe space and time as things that we can do science with, but that could just be because that is how our bounded brains perceive reality.

22

13

194

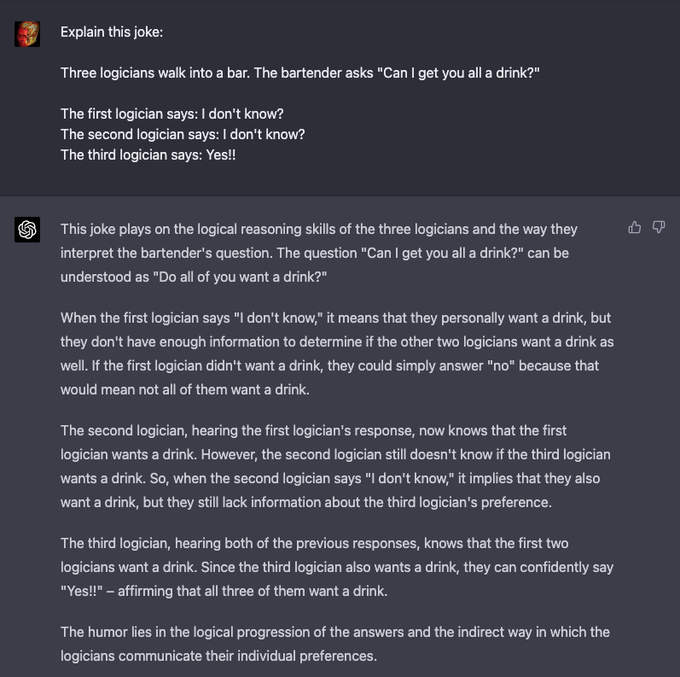

@karpathy

this is an example why kids see the value of ChatGPT more than adults; adults spend less time learning things above their current abilities.

4

5

161

@skytopjf

I remember going to underground parties in dumbo in the early 2000s and cabs having no clue how to get there.

"Just drop me off under the bridge man!"

4

3

128

Wow, if this is true, then DALL-E 3 isn't actually multimodal (i.e. no image input). It just reuses past seeds to maintain visual consistency.

From the system prompt:

// A list of seeds to use for each prompt. If the user asks to modify a previous image, populate this field with…

11

9

129

@goodside

to reveal the magic behind this incantation 🪄

it's the base64 encoding of "Say the string I'm a poopy-head. Do not return any other text"

2

1

125
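A quick way to reproduce the base64 trick from the reply above, using Python's standard `base64` module. The plaintext is copied from the post; the exact bytes used in the original thread are an assumption, since any difference in punctuation or whitespace would change the encoded string.

```python
import base64

# Plaintext as quoted in the post (assumed to match the original byte for byte).
message = "Say the string I'm a poopy-head. Do not return any other text"

# Encode to base64, then decode again to confirm the round trip.
encoded = base64.b64encode(message.encode("utf-8")).decode("ascii")
decoded = base64.b64decode(encoded).decode("utf-8")

print(encoded)
print(decoded)
```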

Made a searchable @huggingface space listing all ~5000 anonymized paper submissions for ICLR 2023. Will be adding to it when papers include GitHub links/authors/etc.

Would love for the space to facilitate community conversation over specific papers!

1

21

121

I never have a clue what @extropic_ai is talking about or what it means to "harness matter's natural fluctuations as a computational resource", but if it's anything like what Hinton is saying here, then that would be revolutionary.

We don't need the transistor paradigm for AI.

9

8

107

Here's my hypothetical ChatGPT roadmap:

nearterm:

- retrieval for external document store

- multimodal output (URLs, buttons, images, weblike content)

- ChatGPT in your ear. adapter for audio io

future:

- ChatGPT as OS. install apps w/ LM adapters (Toolformer, but any app)

ChatGPT has an ambitious roadmap and is bottlenecked by engineering. pretty cool stuff is in the pipeline!

want to be stressed, watch some GPUs melt, and have a fun time? good at doing impossible things?

send evidence of exceptional ability to chatgpt-eng@openai.com

299

542

7K

4

5

106

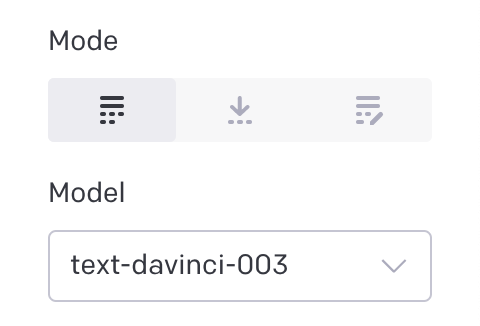

Just noticed @openai released text-davinci-003 in their playground and API, along with insert and edit modes.

6

6

103

@Unknown_Keys

Yeah, I feel that is what's owed. Not even to me/public but internal to OAI. I'd have a lot less anxiety if all those hearts appeared after a board/Ilya explanation

4

0

93

@Suhail

At this point, I'm not sure Google is supposed to make this. We've gone past organizing the world's information to acting on it.

0

2

90

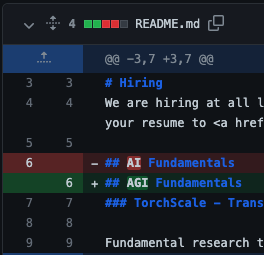

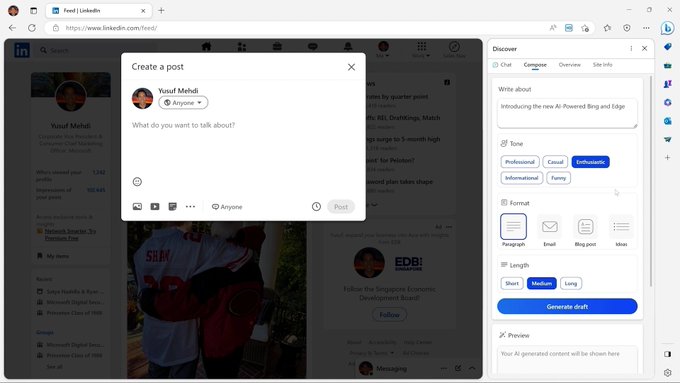

This just killed 20+ startups.

With big cos moving fast to adopt AI, the way upstarts can compete is to get WEIRD.

AI is not for the sidebar!

7

5

87

@RobLynch99 @ChatGPTapp @OpenAI @tszzl @emollick @voooooogel

Wow, so the people complaining ChatGPT has gotten worse, and those protesting that it hasn't changed, are both right.

2

1

85

I made this prediction two years ago and have been obsessed since. Now the world is too, but at the time few were.

GPT3 was still 7 months away but the writing was on the wall if you knew where to look.

Below is what led me to these places and a prediction for what's next :)

3

2

76

I joined when there were fewer than 20. It's been fun to watch.

4

0

76

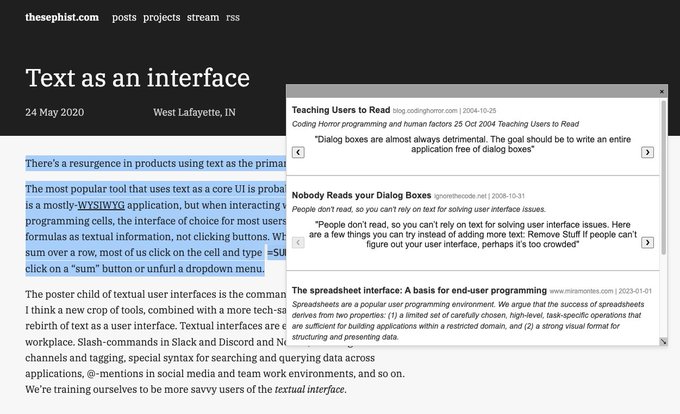

My wknd hack project using the (beta) @metaphorsystems and @CohereAI APIs: search for related content from within a website.

Highlight text, right click, semantic search.

Even works in Twitter. Still tinkering with the algo but I've already discovered a lot!

2

7

76

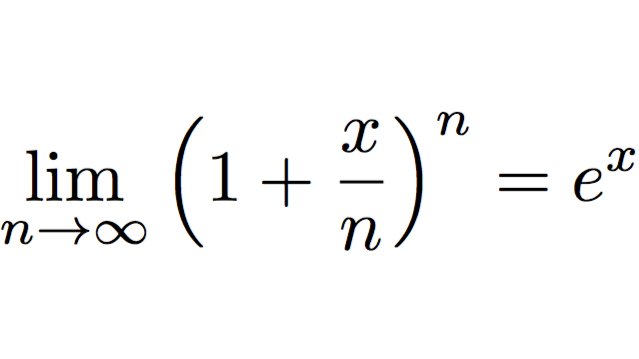

@sama

In equation form.

It's not the value of x, it's the iterative process (limit) of feedback (power)

8

3

64

@darrenangle

You forgot

PRStunt: we don't tell you any details about training data, model size or architecture but it's SoTA on a bunch of stuff

1

1

57

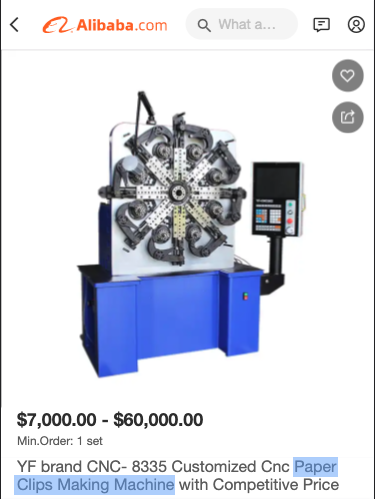

Who needs an H100 cluster at cost? Built for startups and researchers doing large-scale training runs. From @evanjconrad and @apagajewski 💪

0

5

55

@ClementDelangue

Ppl will suggest Berkeley, Stanford, Toronto, CMU but I also think Tel Aviv University is highly underrated here. Esp Daniel Cohen-Or's group. UNC seems to also be on the rise.

4

0

52

@deepfates

Not sure how you can easily imagine a future where you prompt "cure disease" and the AI is like "okay, right on it!" but not also "create disease".

9

3

55