Arram Sabeti

@arram

Followers: 10,740

Following: 835

Media: 447

Statuses: 7,280

Artist / Tinkerer / Investor

Housing scarcity is the greatest civilizational self-own. Economic illiteracy is the cause. LET THEM BUILD.

San Francisco

Joined May 2008

1/ Sorry New York Times, I decline to be interviewed.

The harassment of @bariweiss and Scott Alexander are only two of the more recent reasons I no longer trust your paper. Helping elect Donald Trump is another. I encourage others in tech to #BoycottTheNYT

65

561

3K

A general thesis of mine is that there are vast amounts of private data hidden in plain sight that will eventually be made public.

39

186

2K

@SenWarren

I dislike almost everything you tweet, but this is a total no-brainer and should have been made illegal decades ago. 👏

16

5

955

11/ In short, The New York Times and Trump harness the same forces. It's working: trust is down, but the stock is up. The paper of record is now an engine for turning out-group hatred into profit. We don’t have to help them.

#BoycottTheNYT

18

88

623

The most uncertain part has been when AGI would happen, but most timelines have accelerated. Geoffrey Hinton, one of the founders of ML, recently said he can’t rule out AGI in the next 5 years, and that AI wiping out humanity is not inconceivable.

1

58

507

I'm trying not to get hyperbolic but I feel like I've just been shown electricity for the first time. I'm not sure what we're going to do with it, but damn if it doesn't feel like it could change everything.

14

55

466

Having worked for Emmett I can say he’s one of the smartest, most level headed people I’ve ever met and an amazing engineer.

Sam Altman is out. Emmett Shear of Twitch becomes OpenAI interim CEO, by @amir. @theinformation

1

4

34

17

19

423

9/ Quoting @bariweiss's now famous resignation letter, these are lessons "about the importance of understanding other Americans, the necessity of resisting tribalism, and the centrality of the free exchange of ideas to a democratic society."

3

38

385

@CJHandmer

If the true costs of our housing policy were widely understood there would be rioting in the streets.

3

11

410

10/ There are outlets across the political spectrum that claim to be fair that we know aren't. The difference is that the NYT used to have a genuine claim to fairness and not everyone yet realizes that they no longer do.

@torkander

@paulg

Yes, I am holding NYT to a higher standard than Fox News, given its history and its reputation. But that increasingly seems like wishful thinking.

3

3

61

6

37

378

@DavidSacks

And people made fun of Gringotts bank in Harry Potter with their big piles of coins locked in vaults.

1

8

328

@rotmil_adam

738 researchers attending an academic conference who don’t explicitly work on safety is a small and biased group?

0

1

327

Slowly more and more credible people began to admit it was a problem. Eventually Elon read Superintelligence and wrote the tweet that launched AI risk into the mainstream:

1

16

325

@GraceSpelman

The word ‘fascinate’ is probably from Fascinus - an ancient Roman god often depicted as a penis with wings.

3

19

306

San Francisco could have been the most important city in the world.

Look at the Fortune 10. Tech is the most important industry and San Francisco was the center of it, but it has done everything in its power to destroy one of America’s most important strategic assets.

2

25

273

I'm told I should use #ghostnyt. Interesting mix of left and right people retweeting. If anyone wants to learn more, the report I link to is a great place to start:

3

28

233

Just discovered Bitcoin:

http://en.wikipedia.org/wiki/Bitcoin

It sounds like an idea that could change the world.

18

37

162

New post about how GPT-3 often knows more than it seems, and how it can be instructed to reject nonsense.

cc @lacker @gwern @nicklovescode

5

34

171

@LauraDeming

WW2 wage freezes. Maybe not too insignificant seeming then, but completely insignificant compared to the fact that it’s responsible for how fucked our entire medical system is 80 years later.

4

4

154

@twitbranwell

I’m not sure. I think part of it is how sci-fi it sounds. As for researchers I think another part is a reaction to feeling your field is being attacked

0

1

135

This would be a great time to read the Slate Star Codex classic ‘Against Tulip Subsidies’

9

9

128

My friends who leave San Francisco always come back. Time to make peace with it: there’s no escaping SF.

I’ve avoided getting involved in the knife-fight-in-clown-car that is SF politics for a long time, but there is no other option.

We have to fix this city.

10

6

126

@mnolangray

Do you have the link for the shade structure? Ironically actually something I’m looking for

3

0

119

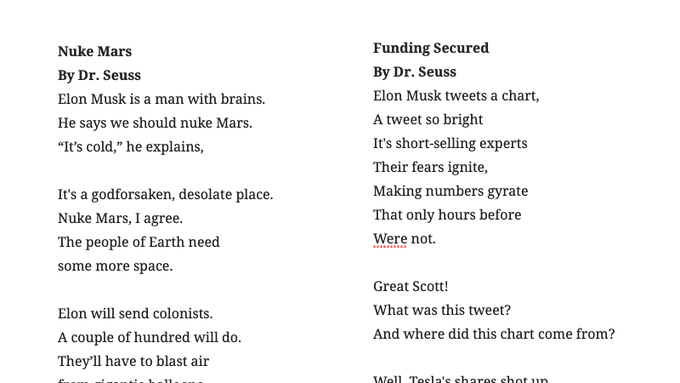

It took a few hours of cherry picking, but I was still amazed at how good GPT-3 is at comedy.

7

6

102

@wanyeburkett

I said exactly this to a friend. Calling it ‘trickle down housing’ is guilt by vibe association, not an actual argument. Pure tribalism.

1

3

101

@antoniogm

Dude, love your Twitter, but "AI is just computers doing some things faster" is the worst take I’ve seen from you.

3

0

94

My friend @joshalbrecht just announced his company raising $200M. I’ve long considered Josh the most talented person nobody knows about. He’s also the most reasonable, conscientious person I know. There’s a crazy story I tell to explain to people what kind of person Josh is... 🧵

5

8

97

@thelawofaverage

Yes, that's what happens when you artificially restrict supply from responding to demand. But by all means, let's blame people for living in homes.

3

0

97