Ani Kembhavi

@anikembhavi

Followers: 2,706

Following: 305

Media: 41

Statuses: 531

Senior Director @allen_ai + Affiliate Assoc Prof @UW

📷 : Visual Prog, Unified-IO, BiDAF

🤖 : ProcTHOR, Objaverse, SPOC

🌎 : SATLAS

All views my own.

Joined June 2019

Apply for summer'24 internships @ PRIOR (computer vision)

@allen_ai

Join us in building large scale general purpose models for:

📷Visual Recognition

📄Multimodal Data

🤖Embodied AI

🛰️Planet monitoring

Apply by: Dec 11, '23

12

61

318

Incredibly excited to announce that Ross Girshick (@inkynumbers) will be joining the PRIOR team @allen_ai!

Ross is one of the most influential and impactful researchers in AI. I'm so honored that he is joining us, and I'm really looking forward to working with him.

6

24

301

I'm thrilled that Ali Farhadi will be the next CEO of @allen_ai! This is such an exciting time to work in AI.

2

20

262

I'm so grateful to work with @tanmay2099, lucky to work at the wonderful, warm, caring and inspiring AI2 @allen_ai and honored to win the Best Paper award for CVPR this year!

18

19

252

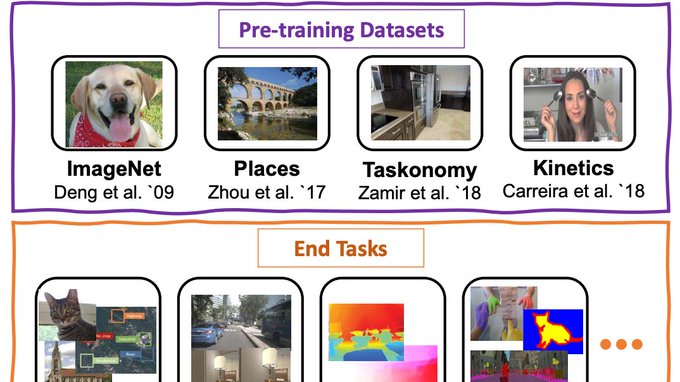

Presenting Unified-IO, the 1st model to jointly perform a large set of AI tasks in:

Classical CV (eg segment,depth,normals)

Image synthesis (eg image-gen,in-painting)

V&L (eg VQA,refexp)

NLP (eg QA,MNLI)

@jiasenlu ChrisC @rown @RoozbehMottaghi @allen_ai

4

38

159

Applications open for a 1-2 yr Computer Vision residency @allen_ai

-Partner with strong mentors

-Engage in impactful research

-Author papers at top venues

-Boost your grad school application

Importantly, enjoy a collaborative and supportive environment.

3

25

115

Join our team!

The PRIOR team @allen_ai is looking to hire Research Engineers to work on projects spanning: Vision+Lang, EmbodiedAI, AI4Good and more!

Would love to see applications from communities underrepresented in AI.

Apply:

5

28

99

Apply for summer'23 internships @ PRIOR (computer vision)

@allen_ai

Research areas: Embodied AI, Vision+Lang, AI4Good

Check out

ProcTHOR ()

Unified-IO ()

& more:

Apply by: Nov 14, '22

0

25

98

Applications now open for summer '22 internships with the PRIOR (computer vision) team @allen_ai

We are looking for interns to work in several areas including: Embodied AI, Vision-&-Language and AI for the Common Good.

Deadline: Nov 8, 2021

Apply here:

1

23

81

Computer vision internships @allen_ai: Deadline in 12 days.

Looking forward to reviewing all applications. Hope to see many from underrepresented communities.

@black_in_ai @wimlds @WiCVworkshop @QueerinAI @ai4allorg @_LXAI @AiDisability @WiMLworkshop @BlackWomenInAI @women_in_ai

2

16

69

Very happy that our work on ProcTHOR for large scale Embodied AI has won an outstanding paper award at #NeurIPS2022! @allen_ai

0

7

65

The PRIOR team @allen_ai is looking for interns for Summer 2020. We have lots of exciting projects in different research areas including embodied AI and vision & language. Apply here:

1

22

59

A big win for truly Open AI!

But this is just the beginning. Stay tuned for more LargeModel goodness in 2024 😀

0

5

58

GreenCV: Climate change is one of the biggest challenges of our times. As we pursue larger and more capable vision models, we must also seek to develop efficient ones. Towards this goal, I am planning to submit a PAMI-TC motion @ICCV_2021.

A first draft:

4

11

56

Excited to release Unified-IO 2 -- a multimodal model to not just parse but also produce images, text, audio and actions for robotics.

This was a very challenging and demanding multi year effort. One of the largest projects to come out of PRIOR @allen_ai. Very proud of the team!

0

3

51

We are looking to hire scientists and engineers and are pursuing many exciting directions! Please reach out to @ehsanik or DM/email me directly.

3

3

45

Join us for a live panel on Embodied AI

Mon Jun 15 9am PST

Featuring: @AlisonGopnik, Sonia Chernova, @_kainoa_, @judyfhoffman, @alexschwing

Moderators: @LucaWeihs, German Ros, @erikwijmans, @ChengshuEricLi

Submit questions:

Zoom:

2

14

37

Lots of exciting talks at The Embodied AI workshop @CVPR

Watch them for free at

Topics include:

Cognitive development in young kids,

Navigation and perception for Embodied AI,

Robotics,

Sim-2-Real transfer

Embodied AI for all.

#CVPR2020

#EmbodiedAI

1

19

37

Achievement unlocked 🏆

Paper tweet by @_akhaliq before the paper is live on arxiv!

0

4

36

Congratulations Yue!

Yue's work @allen_ai this past summer was creating Holodeck: Language Guided Generation of 3D Embodied AI Environments

1

2

36

4 weeks to go until the application deadline for summer 2021 internships with the PRIOR (computer vision) team at @allen_ai! Lots of exciting research and engineering areas.

Learn more and apply here:

Deadline to apply: Nov 20, 2020

3

10

35

Agreed. Some resumes sent to grad schools are truly outstanding. But for candidates that aren't able to have such impressive resumes: Don't Worry.

There are many ways to get research experience before applying to a PhD and stand out amongst the applicant pool.

0/n

1

0

33

We are looking for interns to work in several exciting areas including: Embodied AI, Language and Vision, AllenAct, Visual Knowledge Extraction, Representation Learning, Lifelong Learning, AI for the Common Good, Visual Recognition and more!

Applications are now open for summer 2021 internships with the PRIOR (computer vision) team at AI2! Join a creative team working at the forefront of #computervision research and tech for the common good.

Deadline to apply: Nov 20, 2020

Apply here:

2

10

37

0

5

30

Very excited to announce the BETA release of AllenAct!

More environments, tasks, algorithms, models and tutorials coming soon!

With AllenAct we hope to enable more reproducible and reusable research in Embodied AI, and lower the bar of entry for new researchers to this field.

AI2 is proud to announce our new #embodiedAI framework AllenAct! This library offers free, open, first-class support for a growing collection of embodied environments, tasks, and algorithms, plus reproductions of state-of-the-art #AI models.

Learn more:

2

25

72

0

5

29

Join us for a live panel on Embodied AI Simulation Environments

Sun Jun 14 5pm PST

Featuring: @jana_kosecka, @DhruvBatraDB, @RoozbehMottaghi, Karen Liu, Roberto Martin-Martin, German Ros

Moderated by: @ybisk

Submit questions:

Zoom:

1

8

27

@abhshkdz The bottom left of your picture, showing a bottle of hand sanitizer, will forever remind us that this launch happened during the COVID crisis. What a time indeed.

0

1

24

Visual Programming: a neuro-symbolic approach to solving complex and compositional visual tasks in computer vision.

Uses LLMs to generate programs that invoke CV models and OpenCV routines and produce interpretable rationales.

Work led by @tanmay2099 @allen_ai

Will multitask learning (MTL) be sufficient for creating general-purpose visual reasoning systems that generalize to 1000s of tasks?

We believe MTL might be necessary but not sufficient. These systems will ultimately need to code!

#CVPR2023

Blog:

🧵

5

19

91

1

5

25

Another possibility is to have reviewers rate each other -- using an N-point scale or by selecting amongst a few checkboxes. And this could be made mandatory.

As a reviewer one often has more insight into a paper to be able to judge another's review.

@ICCV_2021 @CVPRConf @CVPR

1

3

25

Happy birthday AI2-THOR. The past 5 years have been incredible but we are just getting started! Looking forward to exciting projects to improve the simulator, build capable embodied agents and get these policies working on real robots!

Today we celebrated five years of interactive vision research and #EmbodiedAI with #AI2THOR!

(The 🤖 guest of honor is wearing a party hat in the center.)

Congratulations to the PRIOR team on an amazing project, and here's to 5 more years!

Learn more:

1

6

67

0

1

23

Over the years, I've seen a lot of people (incl myself) complain about the ECCV template -- in person / online / via surveys.

Now that we are well past publishing hard copies of conference proceedings, is there something we can do to alter this template?

2

1

23

SATLAS, to be presented @ICCVConference, is a new platform by @allen_ai for geospatial data products generated by AI using satellite images.

Explore:

This video by Favyen Bastani demonstrates some of the features in SATLAS.

0

6

22

Very cool 2 day event @CVPR 2020 in Seattle.

The one-stop-shop for Embodied AI this summer!

Embodied AI workshop at #CVPR2020

- 2 day event (June 14, 15)

- 12 invited talks

- 3 robot nav challenges

- 1 sim only

- 1 sim2real (eval off-site pre-CVPR)

- 1 sim2real (eval on-site at CVPR)

- 33 organizers, 14 affiliations 😎

0

9

53

0

7

22

Very exciting for vision and language researchers to see that the awesome @ai2_allennlp now supports computer vision models!

0

1

22

@CVPR Given the rising cases and outbreaks at #CHI2022, are you considering having a masking mandate at the conference venue?

Packed and loud poster sessions with the audience and presenters shouting out Q&A seems like the recipe for an outbreak if many people are unmasked.

1

2

21

ProcTHOR allowed us to scale Sim houses from 10s to 100000s.

Holodeck now allows us to go beyond plain houses to rich and open domain worlds via natural language descriptions of the environments and occupants!

Terrific internship by @YueYangAI in the PRIOR team @allen_ai.

🛸 Announce Holodeck, a promptable system that can generate diverse, customized, and interactive 3D simulated environments ready for Embodied AI 🤖 applications.

Website:

Paper:

Code:

#GenerativeAI

[1/8]

8

102

405

1

2

20

A new benchmark for compositionality evaluation.

0

2

20

Objaverse -- A massive scale 3D object dataset from @allen_ai that can be used to:

✅Build 3D generative models

✅Scale up Embodied AI research

✅ Improve the robustness of 2D models

and more!

Richer annotations for Objaverse coming soon.

Stay tuned!

Introducing Objaverse, a massive open dataset of text-paired 3D objects!

Nearly 1 million annotated 3D objects to pave the way to build incredible large-scale 3D generative models: 🧵👇

🤗 Hugging Face:

📝ArXiv:

#CVPR2023

296

533

3K

1

2

20

Applications for summer internships with the computer vision team at AI2 due today.

0

4

18

Excited to present our work at #CVPR2023:

💻 Visual Programming

📱 Phone2Proc

🧊 Objaverse

🤖 Excalibur

Joint work with @tanmay2099 @ehsanik @mattdeitke @LucaWeihs Rose Hendrix, Ali Farhadi, Dustin Schwenk, Jordi Salvador, Oscar Michel, Eli VanderBilt, @lschmidt3

0

2

19

A new benchmark to study the fundamental question:

To what extent do AI systems comprehend the physical world?

Joint work between psychologists: Amanda Rose Yuile, Renée Baillargeon, Cynthia Fisher and @GaryMarcus & computer scientists: @LucaWeihs, @RoozbehMottaghi and myself

1

2

19

The stage for the panel discussion @CVPR looks awesome! The PCs have done such a great job this year. Each day seems better than the previous.

0

2

17

The Embodied AI workshop @CVPR is hosting 3 challenges this year:

1. Gibson Challenge by @StanfordSVL @GoogleResearch

2. Habitat Challenge by @facebookai

3. RoboTHOR Challenge by @allen_ai

Details and winner presentations at #CVPR2020

1

5

18

An interview with the terrific past and present pre-doctoral residents on the PRIOR team. I am incredibly proud of each and every one of them.

@Mitchnw @sarahmhpratt @jmin__cho @RamanujanVivek KlemenKotar

0

1

16

GRIT: A new large benchmark for vision!

Particularly excited about the parallel tracks:

Restricted - Levels the playing field for researchers with limited GPUs+Data

Unrestricted - Continues to encourage massive models

Joint work @allen_ai @IllinoisCS

0

5

17

Introducing VidSitu: A large scale dataset for structured representations in videos.

Take part in our #CVPR2021 challenge at -- a part of the 2021 ActivityNet challenges.

0

5

15

Grounded Situations by @sarahmhpratt @yatskar @LucaWeihs from @allen_ai. Check out the fun demo on the Computer Vision Explorer!

0

1

16

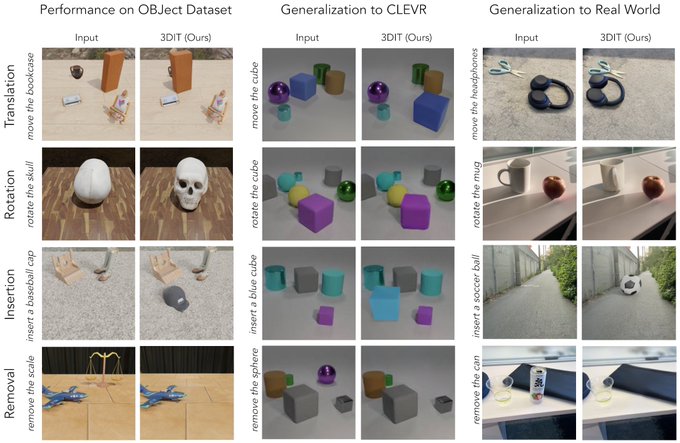

Our latest work @allen_ai on Object Centric Image Editing.

Diffusion models trained on diverse synthetic datasets perform well in the real world!

This work also introduces the OBJect dataset -- a diverse and rich counterpart to the popular CLEVR dataset.

0

3

16

Congratulations to this outstanding set of reviewers including @tanmay2099 and @LucaWeihs from the PRIOR team @allen_ai and @anand_bhattad who recently interned with us.

As an AC, good reviewers are such a joy to work with!

They are objective, detailed, responsive and timely.

0

3

16

I'm very happy to see that both motions have passed. Thank you everyone for your support!

#greencv

The results for the vote at the #ICCV2021 PAMI-TC meeting are in: both motions passed.

Results for Motion 1: Penalties for Dual Submissions

Yes: 274

No: 57

Results for Motion 2: Green Computer Vision

Yes: 217

No: 122

1

2

18

0

0

16

All work and no play makes Jack a dull boy. Turns out, it isn't great for embodied agents either!

Learning visual representations via gameplay - work with my colleagues in the PRIOR team @allen_ai to be presented at @iclr_conf

What can embodied AI agents learn from gameplay? Our #ICLR2021 paper () shows that by playing hide-and-seek, agents learn representations rivaling (self-)supervised approaches. We test our agents with a suite of tasks inspired by experiments for infants.

1

18

81

0

0

15

Check out our ProcTHOR paper with a VR demo at this morning's poster session. Poster 926 or just look for the white and blue balloons! @allen_ai

0

7

15

Our very first step towards using Computer Vision to aid conservation efforts.

Looking forward to working on many exciting projects in this direction.

#AIforGood

AI has a critical role to play in tackling the IUU fishing crisis. Meet the new computer vision model developed by AI2’s researchers, engineers and maritime experts part of our @SkylightMarine platform that is set to be a ‘game changer:’

0

4

10

0

2

15

New Grounded Situation Recognition demo available on the Computer Vision Explorer.

Also, we are happy to host your SOTA models as demos for 10 different computer vision tasks. Let us know if you would like us to host your latest and greatest models!

Have you checked out the AI2 Computer Vision Explorer lately? We've added new tasks like Grounded Situation Recognition, where a model classifies a situation and locates objects in that situation.

Try our examples or use your own image:

#computervision

0

8

14

0

6

15

The AC workshop @CVPR is one of the best workshops I have attended in the last few years. Lots of interesting discussion and debates, some technical, some organizational and some existential. Great job PCs!

#CVPR2023

@CVPR

AC Workshop in progress! Full day mix of talks, posters, townhalls and debates. Currently debate on foundation models and embodiment running..

4

7

56

0

0

15

This ICCV review cycle, I've seen disparaging comments by reviewers towards one of our submissions and also towards another that I am reviewing and discussing. The lack of accountability in the reviewing process continues to be frustrating.

#ICCV2021

2

1

13

You can't always trust experts. Use your own judgement!

Can an expert 🧑🏫be too smart to teach🧑🎓?

Our new work introduces ADVISOR, an RL methodology for adaptively ignoring expert supervision during training when beneficial.

📜🔗

Co-led with @LucaWeihs @alexschwing @anikembhavi @allen_ai @IllinoisCS

(1/3)

1

7

32

0

1

14

Vroom! Huge speedups to #AI2THOR in the latest release.

#EmbodiedAI

AI2-THOR is our open-source interactive environment for training #EmbodiedAI. We're pleased to announce the 2.7.0 release of #AI2THOR, which contains several performance enhancements that can provide dramatic reductions in training time.

Learn more:

0

7

24

0

1

14

Teaching multimodal transformer models to paint, while still retaining their abilities for QA and Captioning!

Work led by @jmin__cho in collaboration with @jiasenlu and @HannaHajishirzi.

"GPT-3 trained on an enormous amount of text data. What if the same methods were trained on both text and images?"

Learn about fascinating new work from our #computervision team in this piece by @_KarenHao via @techreview:

0

21

47

0

1

14

@rzhang88 @RoozbehMottaghi @RoozbehMottaghi only wrote the first 5 words. The rest were produced by GPT-3.

2

0

13

Our latest work on Multi Agent cooperation accepted to #ECCV2020

Led by @unnatjain2010 and @LucaWeihs

(1/3) How can multiple agents ensure that they're on the same page? In our #ECCV2020 paper we show how multiple agents can form expressive joint policies despite decentralization.

Paper/code:

@unnatjain2010 @anikembhavi @alexschwing @allen_ai @IllinoisCS

1

11

23

0

0

13

Visual Room Rearrangement: Our new work to be presented at #CVPR2021.

Take part in the accompanying ongoing challenge here:

I'm thrilled to announce that our paper on Visual Room Rearrangement has been accepted as an oral to #CVPR2021!

Paper:

Website & Code:

Video:

M. Deitke, @anikembhavi, @RoozbehMottaghi, @allen_ai, #AI2THOR

2

4

33

0

2

13

Quick reminder: Deadline for summer 2020 internships with the PRIOR (computer vision) team @allen_ai is tonight.

1

5

13

Contrasting Contrastive Methods!

A very interesting large scale study of several recent self-supervised learning methods by my colleagues Klemen, @gabriel_ilharco @lschmidt3 @ehsanik @RoozbehMottaghi

0

0

11

Just a few weeks after presenting Objaverse at #CVPR2023, we present Objaverse-XL, scaling up assets by an order of magnitude!

0

1

12

We're thrilled to announce this year's winners of the AI2 Outstanding Intern of the Year award! 🏆

Congratulations to our exceptional summer interns Sarah Wiegreffe @sarahwiegreffe, Sean MacAvaney @macavaney, and Unnat Jain @unnatjain2010!

1

11

65

0

0

12

@Suhail

Visual programming:

Won the Best Paper award at CVPR this year. The 10 minute talk at the conference is here:

And the paper can be found on the website.

0

1

11

Looking forward to lots of interesting talks, discussions and perspectives about Embodied AI.

This series is open to all. If unable to attend due to scheduling conflicts or time zone reasons, please watch out for the uploaded talks.

#ai4all

Very happy to announce the Embodied AI Lecture Series @ PRIOR

A live lecture and discussion series in Embodied AI with a focus on cross-disciplinary work.

Lectures held biweekly Fri 11am PST. Open to all!

#embodiedai

@allenai

Details:

1

22

75

0

0

11

Should be a very interesting workshop. Looking forward to it!

0

2

11

Glad you liked it! We are pursuing both approaches and it's been interesting to see the pros and cons and importantly the possibilities of both.

1

0

11

Wonderful post touching upon ICCV 31 years ago, the 5 stages of grief on seeing the AlexNet numbers, and what questions continue to interest and intrigue @Michael_J_Black

0

0

10

Great to hear from @drfeifei. Looking forward to reading the book!

so glad @drfeifei stopped for a chat at @allen_ai today on her book tour 🥰

Deeply inspiring personal stories and great insights on the future of AI & the role of nonprofits like AI2 and @StanfordHAI

(our CEO Ali Farhadi did a wonderful job hosting, too!)

0

3

40

0

1

10

I'll be presenting GPV-1, GPV-2 and Unified-IO in my talk titled:

Towards General Purpose Vision

at the ODrum workshop in about 30 minutes. Looking forward to some interesting discussions!

#CVPR22

We are only 4 days away from ODRUM: #CVPR2022 workshop on Open-Domain Retrieval under Multimodal Settings!

5 AWESOME INVITED TALKS !!

@danqi_chen @dlarlus @anikembhavi @xwang_lk @HaoTan5

Schedule and details on

See you @CVPR, 06/20 8AM-12PM Room 239!

1

8

19

0

0

10

We will be presenting our talk tomorrow afternoon in the oral session. See you all there! @CVPR

0

1

10

It's been great to collaborate with the Skylight team and build computer vision models to detect suspicious activity on our oceans. AI4Good!

Protecting marine life is key to protecting the planet. To this end, researchers from AI2's @SkylightMarine and PRIOR teams partnered together to enhance Skylight technology. Read the blog for more and visit the team's workshop today at #NeurIPS2023:

0

2

6

0

0

10

Fantastic accomplishment! Congratulations to @morastegari, Ali and the entire @xnor_ai team.

0

1

10

Check out CVPR-Buzz @ to find papers that are being discussed/shared on Twitter. Built by Matt Deitke in the PRIOR team @allen_ai

Instead of scrolling twitter up and down, searching for papers on @SemanticScholar or Google, or searching for most discussed papers, for this CVPR #CVPR2021, I'm trying CVPR Buzz by Matt Deitke.

And I LOVE IT!

2

6

26

0

1

10

I find demos to be incredibly useful for exploring a model's qualitative behavior as well as evaluating if a model may be helpful in downstream tasks. This page showcases some demos of popular models that we have used in the PRIOR team.

Announcing the AI2 Computer Vision Explorer!

This new tool from @allen_ai is a collection of demos of popular and state-of-the-art models for a variety of #computervision tasks - try, compare, and evaluate, using our images or your own!

#aidemos #ai2

0

47

135

1

1

9

Wonderful ongoing collaboration between researchers @allen_ai and @IllinoisCS

"A Cordial Sync: Going Beyond Marginal Policies For Multi-Agent Embodied Tasks" – @unnatjain2010, @LucaWeihs, @RAIVNLab, @anikembhavi

ECCV link:

Project:

Spotlight Session 13, Thursday 08:00(UTC+1)=02:00(CT)

Poster #4354, Thursday

0

2

9

0

1

9

@Thom_Wolf @ale_suglia @huggingface @mohitban47 @yoavartzi @jasonbaldridge @FelixHill84 @GuggerSylvain @jiasenlu @allen_ai

We are also hoping to implement models like LXMERT, VisualBERT, ViLBERT, etc. to make them easier for others to consume.

@mohitban47 @kaiwei_chang

1

0

9

Very interesting work to discover objects and estimate attributes in a self supervised manner.

In this recent paper in our Learning by Interaction series, we try to answer the following question: "Can we learn about objects and their properties just by self-supervised interactions?"

#NeurIPS2020

Paper:

Code:

2

12

48

0

0

9