Ben (e/sqlite)

@andersonbcdefg

Followers

3,591

Following

2,914

Media

405

Statuses

6,955

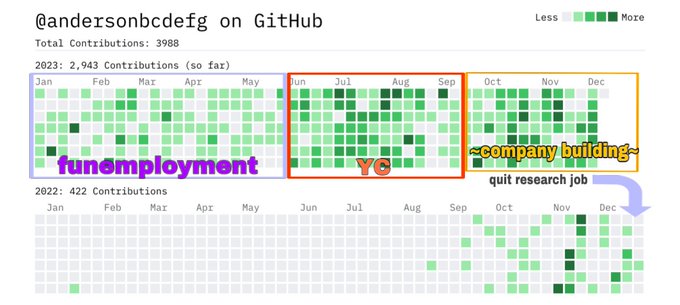

🤖 Computer scientist, next-word-prediction enjoyer 📊 Prev. research fellow @ Stanford RegLab 🛠️ bUiLdiNg sOmeThiNg nEw ( - YC S23) 🏳️🌈

San Francisco, CA

Joined August 2020



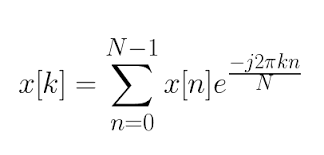

You’re powerless. You’re weak. You’re unemployed. You do not understand the Fast Fourier Transform. You have no assets. You have no legitimate network.

You will never be a scientist. You will never build predictive models. You will never discover fundamental truths. You can’t do

@Katerationopia

@schwatd2

@Lormif1

@KathrynTewson

@Eodyne1

@chadcmulligan

@riScorpian

@col_bosch

@JosephPoulin175

@NoLongerBennett

Cope, and seethe, I bet you dont even know how to use the fast Fourier transform.

You will never be a scientist, you will never build predictive models, you will never discover fundamental truths, nobody will ever intellectually respect you or what you say

119

80

566

27

23

633

maybe but it's a little sus that the message went from "text is the universal interface and will lead to super intelligence" to "oh wait haha not quite, but video will!" we'll see i guess but lowkey i think we are just throwing spaghetti against the scaling wall here

45

17

447

posts like this annoy me. sleep is really important, & lack of it heavily impairs cognitive function. it's fun to stay up working on something cool, but doing it regularly is nothing to aspire to. founders should make time for sleep, physical activity, & socialization 👍

People grind hard at Newton

Came in at 6am this morning & @jacksonhmg1 was finishing up an all-night tear

Tab is going to be an incredible product

28

5

302

21

20

417

@ryxcommar

the people quoted absolutely would keep every penny if it were them and we all know it. just pure sour grapes and want other people to be as miserable as they are

1

0

333

@twofifteenam

your first mistake was thinking that startup founders are not trying to attract twinks

6

4

285

@___frye

he saw all the gay coded disney villains and thought they were meant to be role models

1

0

261

@max_spero_

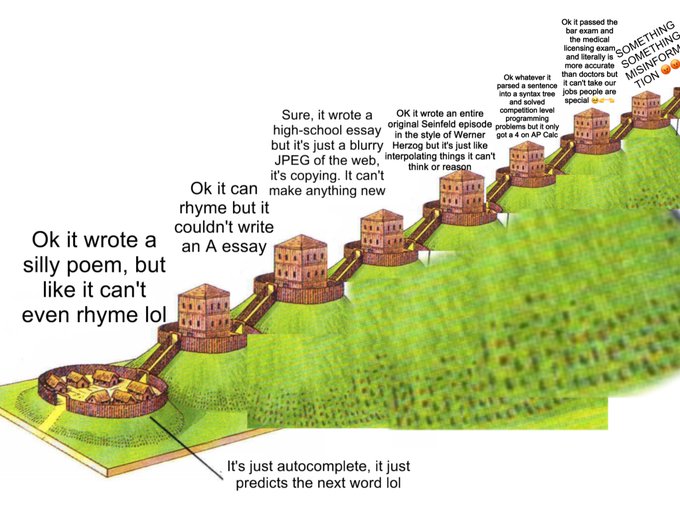

imo it's possible to pass green line by some combo of "more knowledge" and "speed". often a person we think of as smart either thinks fast (search) or knows a lot (memorization). NNs can do both better than people w the same data available. i do think it flatlines, but that might

9

1

219

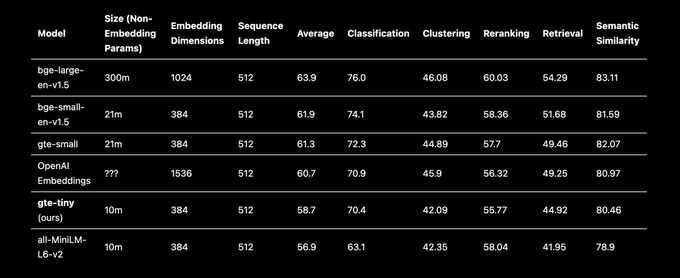

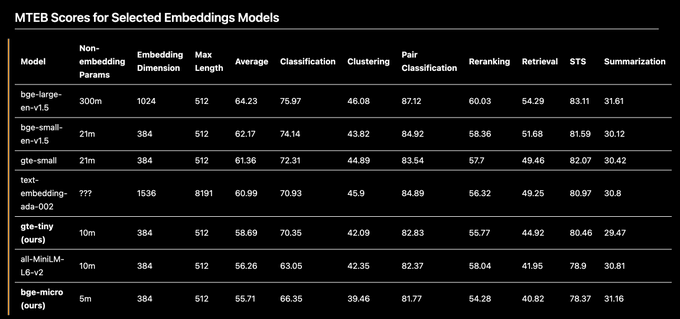

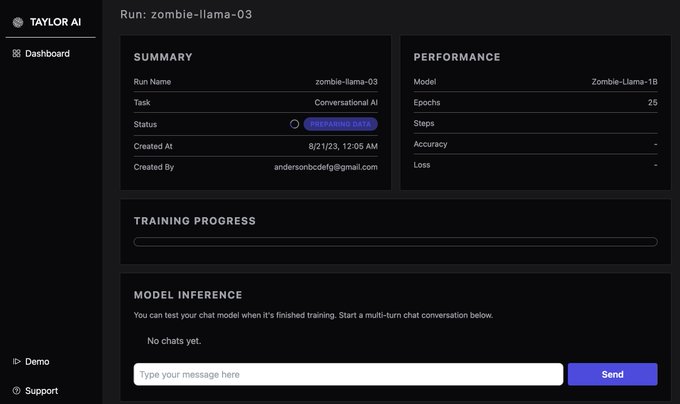

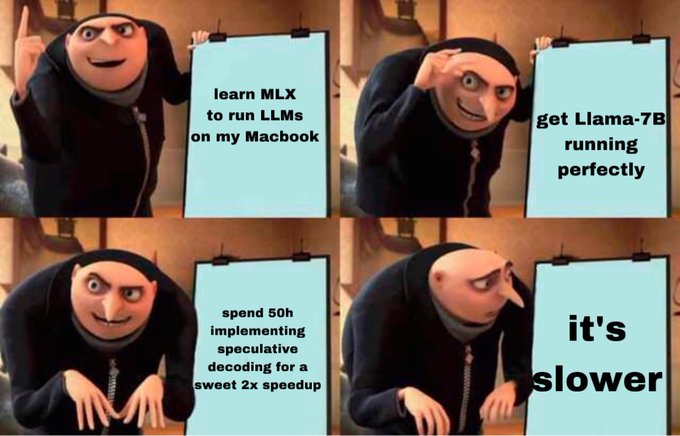

Early testing—can embed 2000 docs per minute on my 4y-old Intel Mac with ONNX. No GPU required! (New M2 CPU is about 2x faster than this). 2k docs / min ~= 1M tokens per minute, which is 3–5x more than OpenAI with typical rate limits. Local models stay winning?
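A quick back-of-envelope check of the numbers in this post. The inputs (2,000 docs/min, ~1M tokens/min, M2 "about 2x faster") come from the post itself. The average document length and the OpenAI rate limit implied by "3–5x" are only derived from those inputs, not looked up.

```python
# Back-of-envelope check of the throughput figures in the post.
# Inputs are from the post; everything else is derived arithmetic.

docs_per_minute = 2_000          # local ONNX run on a ~4-year-old Intel Mac (per the post)
tokens_per_minute = 1_000_000    # the post's "~= 1M tokens per minute"

# Implied average document length
tokens_per_doc = tokens_per_minute / docs_per_minute

# "3-5x more than OpenAI" implies an API limit somewhere in this range
implied_limit_low = tokens_per_minute / 5
implied_limit_high = tokens_per_minute / 3

# The post says an M2 CPU is roughly 2x faster
m2_docs_per_minute = 2 * docs_per_minute

print(f"~{tokens_per_doc:.0f} tokens per doc")
print(f"implied API limit: {implied_limit_low:,.0f}-{implied_limit_high:,.0f} tokens/min")
print(f"M2 estimate: ~{m2_docs_per_minute:,} docs/min")
```

So the comparison assumes documents of roughly 500 tokens each and an API cap of roughly 200k–333k tokens per minute.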

4

19

168

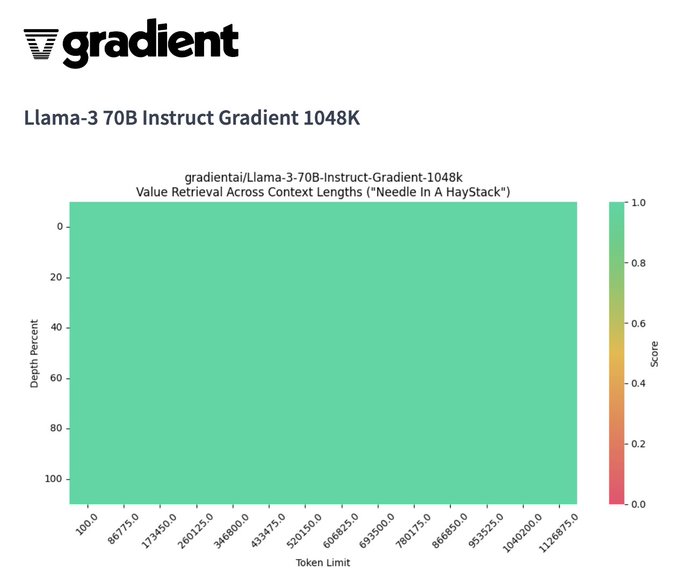

these models really need to be evaluated with something like RULER (), needle-in-a-haystack is not sufficient for me to believe that performance doesn't suffer across the long-context window

We’re going back 2 back! 🔥 Introducing the first 1M context window @AIatMeta Llama-3 70B to pair with our Llama-3 8B model that we launched last week on @huggingface. Our 1M context window 70B model landed a perfect score on NIAH and we’re excited about the results that
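The point of the comment is that one retrievable fact is an easy test. Harder variants hide several similar "needles" and ask for only one. Here is a rough sketch of such a multi-key test in the spirit of RULER. The helper names, filler sentence, and needle wording are hypothetical, and this is not RULER's actual code or prompt format.

```python
import random


def build_multikey_niah(num_filler: int, keys: list[str], target: str, seed: int = 0):
    """Hypothetical helper: hide one key-value 'needle' per key in filler text,
    then ask about only the target key (the other needles act as distractors)."""
    rng = random.Random(seed)
    filler = ["The grass is green. The sky is blue."] * num_filler
    answers = {k: str(rng.randint(100000, 999999)) for k in keys}
    # Insert each needle at a random position in the haystack
    for k, v in answers.items():
        pos = rng.randint(0, len(filler))
        filler.insert(pos, f"The special magic number for {k} is {v}.")
    context = " ".join(filler)
    question = f"What is the special magic number for {target}?"
    return context, question, answers[target]


def score(model_output: str, expected: str) -> bool:
    """Simple string-containment scoring (an assumption, not RULER's metric)."""
    return expected in model_output
```

A model that only locates "the number" would often return a distractor's value here. Sweeping `num_filler` shows how accuracy holds up as the context grows, which a single needle can hide.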

39

115

656

13

14

165

can we stop backfilling the decision to live in the city you want to live in with a business justification? it's okay to just live where you want, you don't have to add cope on top about how Tulsa is going to be the new AI capital, trust me bro

16

4

158

@abacaj

to be fair, "open source" does not have to be synonymous with "local models" or "consumer GPUs". open source software can be developed in the cloud, it's just that lots of OSS devs have a reflexive distaste for cloud computing

4

2

149

@KhanStopMe

@lilianweng

lots of parts of therapy are like, doing homework in a CBT workbook. there are iOS apps, chatbots, etc. for this purpose. i don't see why a language model can't be a helpful partner for self-reflection and talking through feelings.

19

2

140

@eigenrobot

> looking for accounts to follow

> ask girl if eigen is charming or hot

> she doesnt understand

> pull out illustrated diagram explaining what is charming and what is hot

> she laughs, "he's a good robot sir"

> follow him

> he's hot

2

3

141

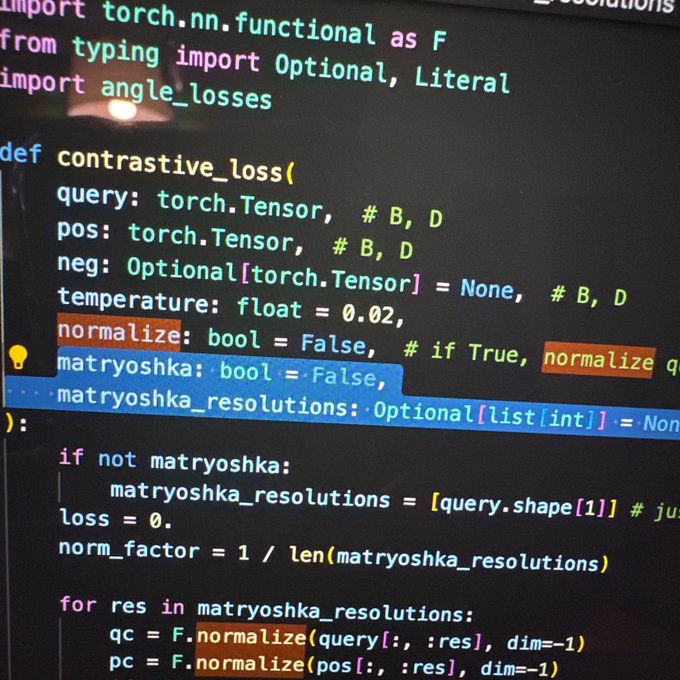

we are going to re invent BERT and i am so here for it

4

6

139

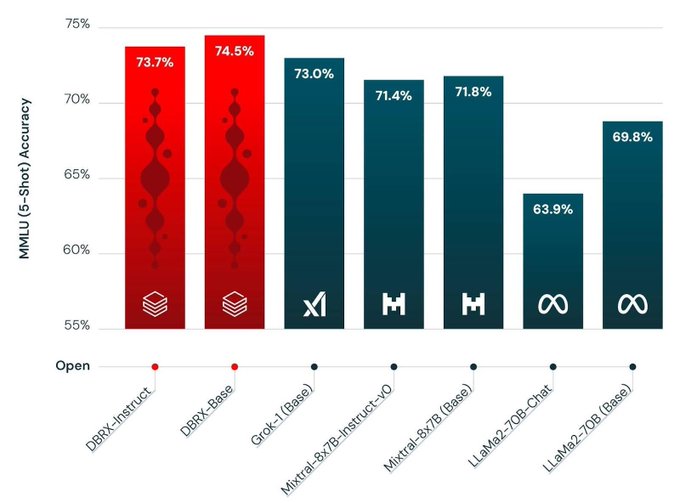

mosaic team is great

- smart, scientific, data-centric

- great at posting

- no weird agi millenarianism

very pumped for them & this release 🎉

6

10

135

it's also named after a dude called joseph pilates. it sounds like one of these stupid jokes but it's Actually Real

1

5

120

@somewheresy

spending the last 18 months laughing at the AI because it "can't do hands" and then getting mogged by it is peak tragic downfall

4

1

109

OpenAI is building Her but cohere is building Him (for enterprise)

9

6

113

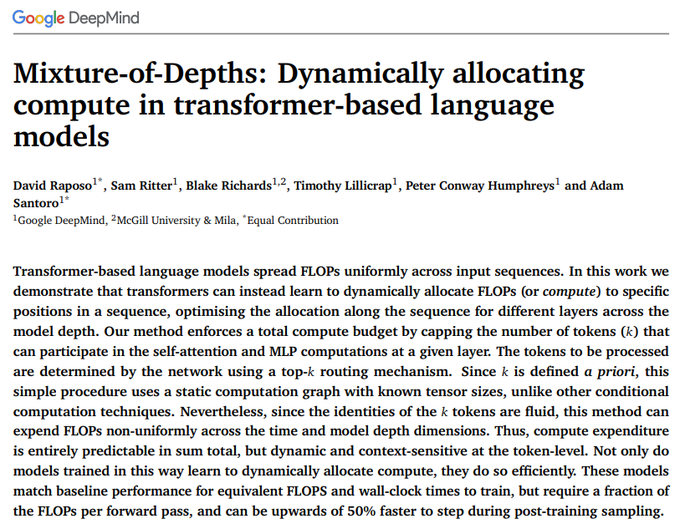

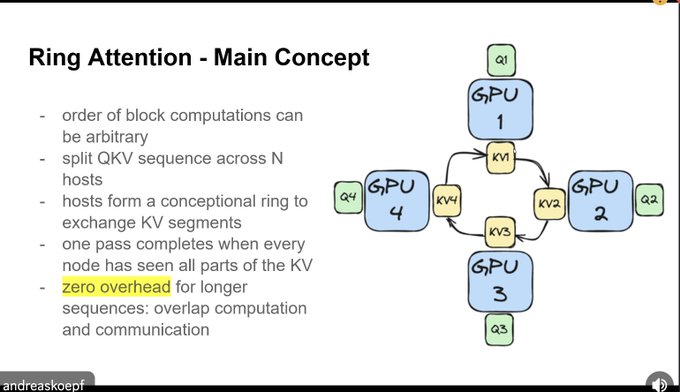

who would win: nerd snipe galaxy brain subquadratic architecture

OR

one brute force circley boi

5

7

107

ok let me be helpful and not just catty: a typical mixture-of-K-experts is in no way K models put together. it is a transformer where at each feed-forward layer, each token is routed through 1 of K FFNs. it is trained as 1 model.
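A toy numpy sketch of the point. One layer holds a router plus K expert FFNs, and each token goes through exactly one of them. All sizes are made up. Real models add things this sketch omits, such as load-balancing losses, and many route to the top 2 experts instead of 1.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_ff, num_experts, num_tokens = 8, 32, 4, 5

# A router and K expert FFNs: all are parameters of ONE layer in ONE model
W_router = rng.normal(size=(d_model, num_experts))
experts = [
    (rng.normal(size=(d_model, d_ff)), rng.normal(size=(d_ff, d_model)))
    for _ in range(num_experts)
]


def moe_ffn(x):
    """x: (num_tokens, d_model). Routes each token through exactly 1 of K FFNs."""
    logits = x @ W_router                                  # (tokens, K)
    probs = np.exp(logits - logits.max(-1, keepdims=True))
    probs /= probs.sum(-1, keepdims=True)                  # softmax over experts
    choice = probs.argmax(-1)                              # top-1 expert per token
    out = np.zeros_like(x)
    for t, e in enumerate(choice):
        W_in, W_out = experts[e]
        h = np.maximum(x[t] @ W_in, 0.0)                   # ReLU FFN of the chosen expert
        out[t] = probs[t, e] * (h @ W_out)                 # scale by the router's gate value
    return out, choice


x = rng.normal(size=(num_tokens, d_model))
y, choice = moe_ffn(x)
print(y.shape, choice)
```

The gate value multiplies the expert output, so gradients reach the router as well as the experts. The whole layer is trained end to end as part of one network.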

8

6

103

gary marcus vs. openai

@CFGeek

imo diminishing returns is sort of ill-defined. scale maximalists see a power-law relationship and think "ok cool let's put in 10x more compute". skeptics see a power law and think "looks like diminishing returns". neither of them is technically wrong?
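Both readings fall out of the same curve. The sketch below assumes a power law $L(C) = a\,C^{-b}$ with made-up constants `a` and `b`. Each 10x of compute multiplies loss by the same factor $10^{-b}$, which is a straight line on a log-log plot. The absolute drop per decade still shrinks every time.

```python
# Hypothetical power law L(C) = a * C**(-b); a and b are illustrative, not fitted values.
a, b = 10.0, 0.05


def loss(compute):
    return a * compute ** (-b)


for c in [1e20, 1e21, 1e22, 1e23]:
    print(f"{c:.0e}  loss={loss(c):.4f}")

# Scale-maximalist view: every 10x multiplies loss by the same constant factor
ratio = loss(1e21) / loss(1e20)

# Skeptic view: the absolute improvement per 10x gets smaller each decade
drops = [loss(10**k) - loss(10**(k + 1)) for k in range(20, 23)]
print(ratio, drops)
```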

1

0

39

0

2

107

@suchenzang

smh that's less data than like tinystories or proof pile. are they not aware we can download all of pubmed, all of arxiv, all of semantic scholar, not to mention MIT OCW? this is nothing

4

1

102

@nearcyan

i love that it still has the silly little alignment artifacts like numbered lists while also being fucking annoying

4

0

94

@jxmnop

security concerns aside, "unembedding" back to text would actually be incredibly useful for stuff like interpreting cluster centers in latent space! i'm definitely gonna look into integrating some of these models into an open-source project for exactly that
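For contrast, here is the usual stopgap without an embedding-inversion model. Run k-means, then label each cluster center with its nearest real document. This is a swapped-in proxy, not unembedding. The helper names are hypothetical, and an inversion model would instead generate text for the centroid vector itself.

```python
import numpy as np


def kmeans(X, k, iters=20, seed=0):
    """Plain k-means on float embeddings X of shape (n, d)."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)]  # fancy indexing copies
    for _ in range(iters):
        # Assign each point to its nearest center (squared Euclidean distance)
        dists = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
        labels = dists.argmin(1)
        # Recompute centers; keep the old center if a cluster is empty
        for j in range(k):
            if np.any(labels == j):
                centers[j] = X[labels == j].mean(0)
    return centers, labels


def nearest_doc_per_center(X, centers, docs):
    """Proxy 'label' for each center: the closest actual document."""
    dists = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(-1)  # (n, k)
    return [docs[i] for i in dists.argmin(0)]
```

The weakness is that a centroid may sit between documents and mean something none of them say. Decoding the centroid directly would be more useful there.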

7

2

97

wait this is actually so smart im pissed i didnt think of it. RLHF is fucking annoying because you need 3 models in memory: reference model, policy model, reward model. but all 3 are usually (or can be) finetuned from the same base model which means..... LoRA time! this rules
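A toy numpy sketch of the idea, under simplifying assumptions. One frozen base weight is stored once. The policy and reward "models" are that base plus their own LoRA adapter ($W + \tfrac{\alpha}{r} BA$). The reference model is the base with no adapter. A real reward model also needs a scalar head, which is omitted here. Names and sizes are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r = 16, 2  # hidden size and LoRA rank (illustrative)

W_base = rng.normal(size=(d, d))  # frozen, stored once and shared by all three roles


class LoRAAdapter:
    def __init__(self, d, r, rng, alpha=4.0):
        self.A = rng.normal(scale=0.01, size=(r, d))
        self.B = np.zeros((d, r))  # zero init: the adapter starts as a no-op
        self.scale = alpha / r

    def delta(self):
        return self.scale * (self.B @ self.A)  # low-rank update, shape (d, d)


adapters = {"policy": LoRAAdapter(d, r, rng), "reward": LoRAAdapter(d, r, rng)}


def forward(x, role=None):
    """role=None is the frozen reference model; otherwise apply that role's adapter."""
    W = W_base if role is None else W_base + adapters[role].delta()
    return x @ W.T


full_copies = 3 * W_base.size
lora_setup = W_base.size + sum(a.A.size + a.B.size for a in adapters.values())
print(full_copies, lora_setup)
```

At these toy sizes the saving is only 2x. With billions of base parameters and small ranks, the adapters are a tiny fraction of one model copy.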

2

5

86

@jxmnop

this is just like when gwern said you dont need to know the formula for integrals just randomly sample points and see how many are under the curve
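For reference, the joke describes hit-or-miss Monte Carlo integration. Throw random points into a box around the curve and count the fraction that lands underneath. The sketch assumes $0 \le f(x) \le y_{\max}$ on the interval, and the helper name is illustrative.

```python
import random


def hit_or_miss_integral(f, a, b, y_max, n=100_000, seed=0):
    """Estimate the integral of f over [a, b], assuming 0 <= f(x) <= y_max there."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n):
        x = rng.uniform(a, b)
        y = rng.uniform(0, y_max)
        if y < f(x):
            hits += 1
    # Fraction of the bounding box under the curve, times the box's area
    return (hits / n) * (b - a) * y_max


est = hit_or_miss_integral(lambda x: x * x, 0.0, 1.0, 1.0)
print(est)  # close to the exact value 1/3
```

The error shrinks like $1/\sqrt{n}$, so it works but converges slowly compared with knowing the antiderivative.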

1

1

88