Delip Rao e/σ

@deliprao

Followers: 46,493

Following: 4,915

Media: 4,629

Statuses: 50,736

Busy inventing the shipwreck. @Penn. Past: @johnshopkins, @UCSC, @Amazon, @Twitter || Art: #NLProc, Vision, Speech, #DeepLearning || Life: 道元, improv, running 🌈

NYC, 🇺🇸🇮🇳🇹🇼🏳️‍🌈

Joined October 2008

Don't wanna be here?

Send us removal request.

Explore trending content on Musk Viewer

オーロラ

• 411486 Tweets

Joost

• 306479 Tweets

CHAWARIN ON STAGE

• 225230 Tweets

FOURTH SINGING TIME

• 139169 Tweets

#ขวัญฤทัยตอนจบ

• 128335 Tweets

#不破湊3Dライブ

• 85092 Tweets

Fulham

• 71741 Tweets

自分これ

• 59703 Tweets

Man City

• 55635 Tweets

Gvardiol

• 42174 Tweets

Manchester City

• 36446 Tweets

最高のライブ

• 35754 Tweets

#العين_يوكوهاما

• 35470 Tweets

太陽フレアのせい

• 33419 Tweets

カクレンジャー

• 32690 Tweets

HeavenlyVoice WithMrC

• 31920 Tweets

#東京タワー

• 30280 Tweets

マリノス

• 27156 Tweets

Dremo

• 24672 Tweets

アルバムとライブ

• 20999 Tweets

#FULMCI

• 20446 Tweets

自分の魚

• 15953 Tweets

わたしの誕生魚

• 15074 Tweets

Sark

• 12973 Tweets

GET WELL SOON JISUNG

• 10136 Tweets

Last Seen Profiles

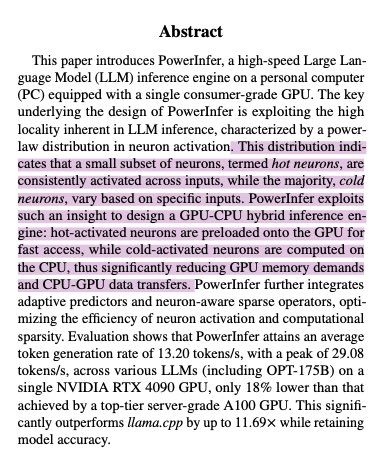

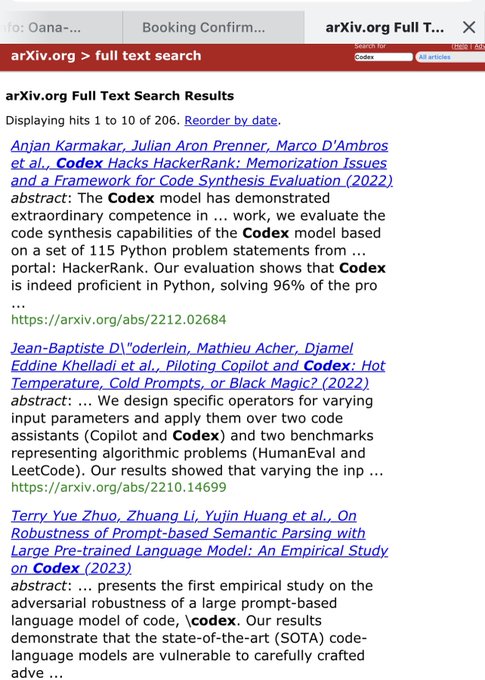

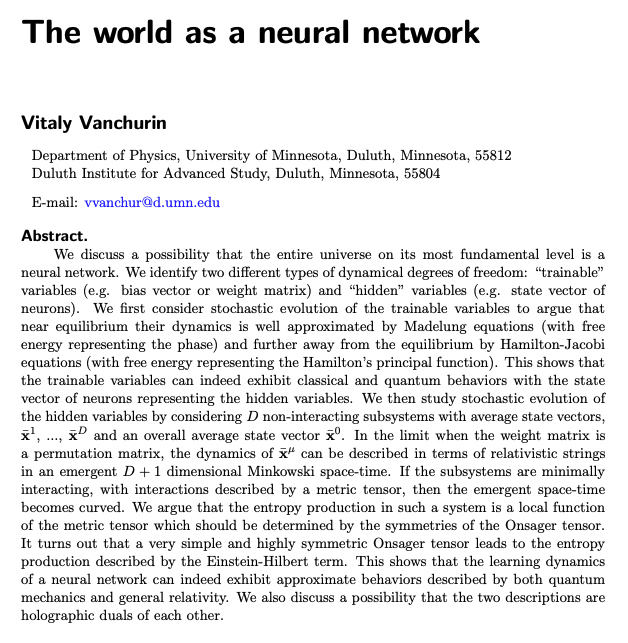

Crazy AF. A paper studies @_akhaliq and @arankomatsuzaki paper tweets and finds those papers get 2-3x higher citation counts than the control group.

They are now influencers 😄 Whether you like it or not, the TikTokification of academia is here!

65

284

2K

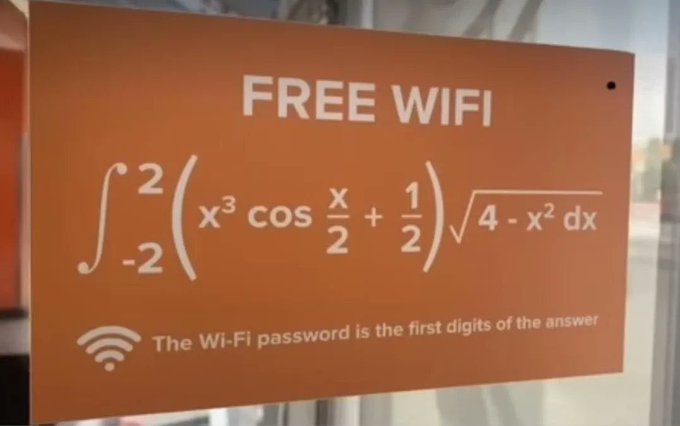

@LongFormMath

I think it implies you’ve cultivated a good level of safety with the students for them to be comfortable sharing this with you.

2

4

1K

imagine replying “ship something better” to the person who leads Pixel’s computational photography ("AI" if you prefer), which is raising the bar for what to expect from a camera.

@docmilanfar

Sam is crushing you guys at AI. Ship something better and then you can make fun of him.

59

130

5K

37

46

996

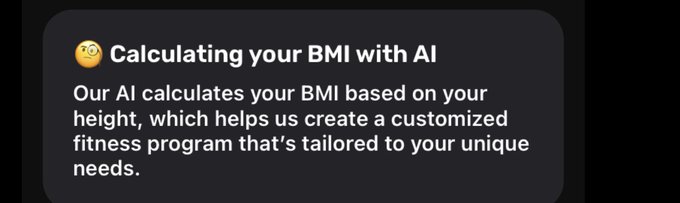

Startups are hiring influencers to peddle bullshit. This is a new kind of misinformation, used to push snake-oil AI products with no backing. When you dig in, you see the growth rate has been hovering around 8.5%, with leading and lagging indicators for March showing a 16% drop.

57

60

860

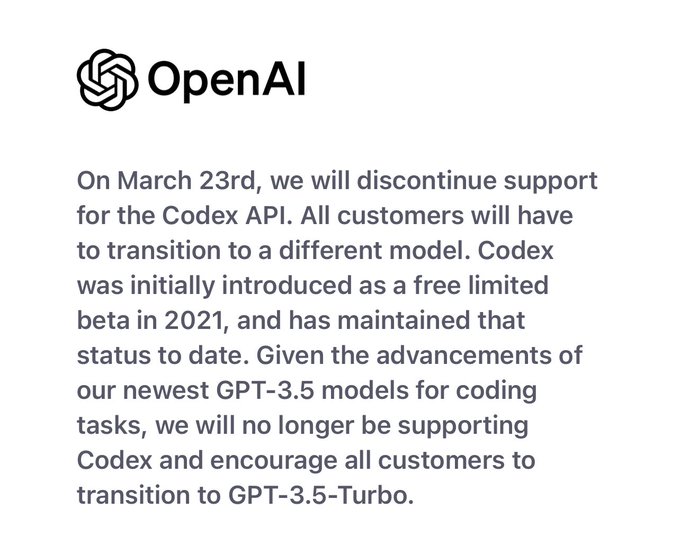

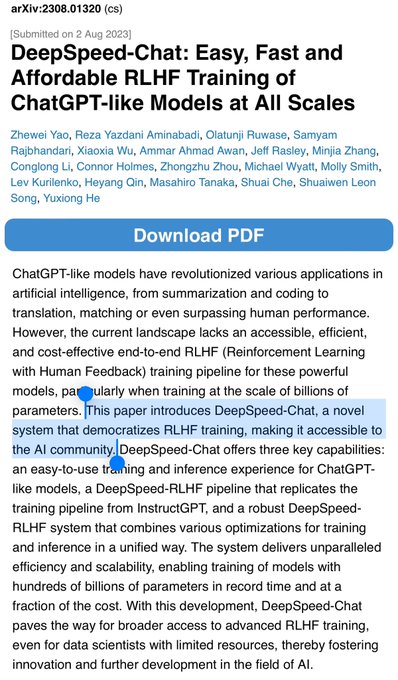

Reminder: this was (part of) the team that thought GPT-2 was too dangerous to release, and now they are making models stronger than GPT-4 available on AWS for anyone with an Amazon account to use.

This is why I have little trust in “AI safety” claims by Anthropic/OpenAI. It all…

Congrats to Dario and the @AnthropicAI team on their new Claude 3 family of models. Very impressive benchmarks, and excited to have all of them coming to Amazon Bedrock (w/ Sonnet avail today). Many AWS customers are already building with Anthropic’s foundation models, and…

20

77

697

27

82

827

If this were a science paper, you would treat a country that picks its science workforce at random as a “weak baseline”, and you would expect a leading nation like the US to actively experiment toward the state of the art, or at least beat the baseline.

Not providing a guaranteed path for…

34

123

686

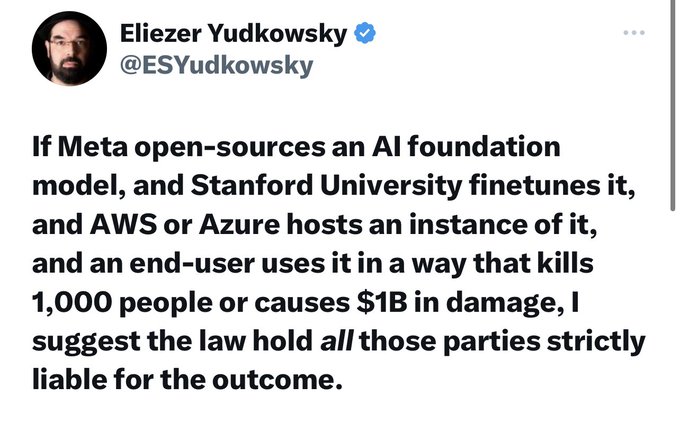

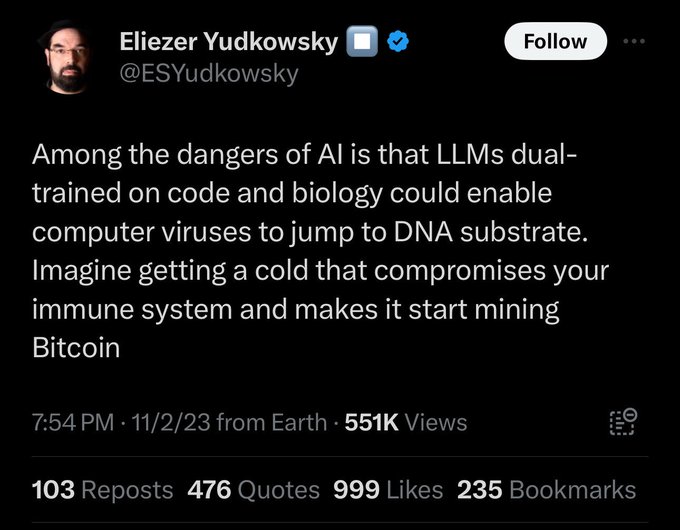

This is another one of those ill-thought-out, fear-mongering pieces of scientific disinformation about LLMs, and I will explain why in this long thread. 🧶

6

158

649

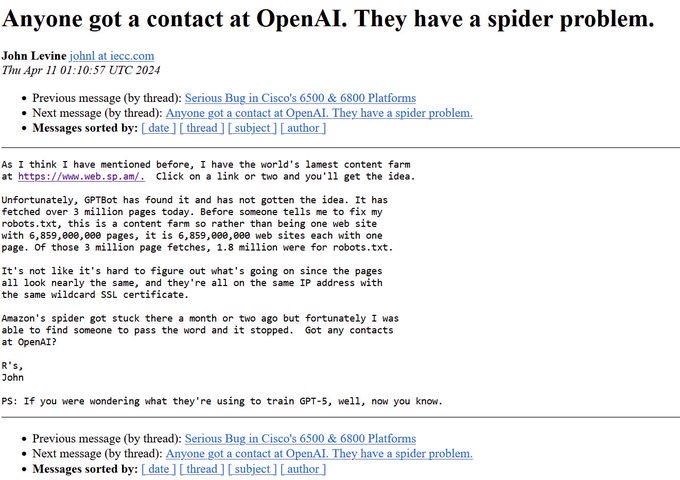

From @chris_j_paxton. Apparently OpenAI is hitting content farms hard.

This is why being open about what is going into your models is so important.

17

61

618

Where my Indian friends at!? I have missed all the sweet nepo deals! Also where do I go to collect my minority perks?? Feeling livid I missed out on whatever memos my Indian friends are sending themselves while I’ve been slugging it out for the past 15+ years and paying fat taxes

23

36

605

I’ve tested many codegen models, and GPT-4 was a clear winner until now. Congratulations to @MistralAI for bringing in mistral-medium as a strong competitor for code generation tasks.

4

20

585

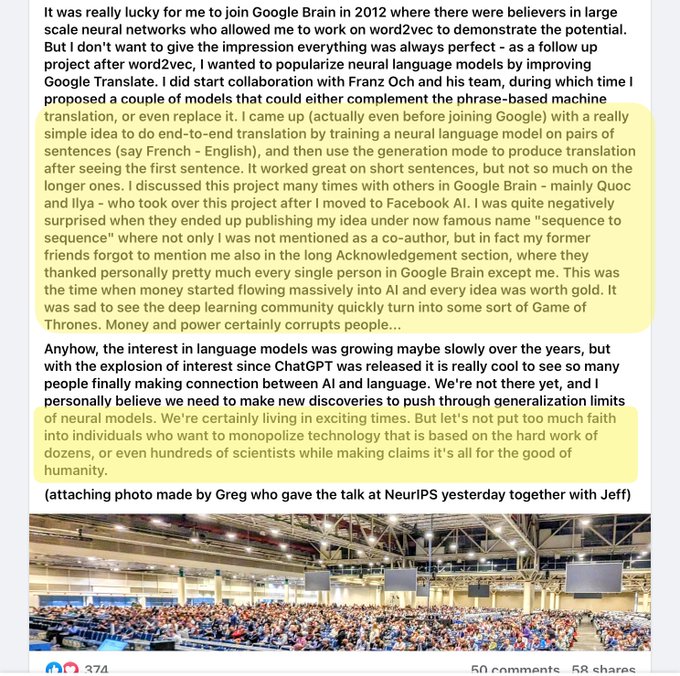

Closed-science companies like OpenAI and Anthropic parasitically extract value from open science and open source without crediting the people or organizations building them. Open science with citations would’ve addressed that, but alas, that’s too much to ask.

Pace of progress in AI is lightning!

@OpenAI released MRL-style text embeddings, mirroring our NeurIPS '22 paper (w/ awesome folks from UW and Harvard).

However, as an advocate of open science, I am a bit disappointed with the rebranding to "shortening embs" without a reference to MRL. 1/n

11

100

660

25

64

563
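The "shortening" in the quoted tweet means truncating an embedding vector. Below is a minimal sketch of that idea. It assumes an MRL-style (Matryoshka) model, where training packs the most useful information into the leading coordinates. The sketch keeps the first `dims` values and re-normalizes them to unit length, so cosine similarity still behaves. The function names are hypothetical, and this is not OpenAI's code or the MRL paper's code. The vector is a made-up toy example.

```python
import math
from typing import Sequence


def l2_normalize(vec: Sequence[float]) -> list[float]:
    """Scale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def shorten_embedding(vec: Sequence[float], dims: int) -> list[float]:
    """Hypothetical helper: keep the leading `dims` coordinates, then re-normalize.

    Assumes an MRL-style embedding, where the prefix is itself a usable embedding.
    """
    if not 0 < dims <= len(vec):
        raise ValueError("dims must be in the range (0, len(vec)]")
    return l2_normalize(list(vec[:dims]))


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Dot product; equals cosine similarity because inputs are unit-length."""
    return sum(x * y for x, y in zip(a, b))


# Toy 4-d "embedding" shortened to 2 dims: [3, 4] / 5 -> [0.6, 0.8]
short = shorten_embedding([3.0, 4.0, 0.5, 0.1], 2)
print(short, cosine(short, short))
```

Re-normalizing after truncation matters because the prefix of a unit vector is generally shorter than unit length. Truncating an embedding from a model that was not trained this way can discard far more information.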

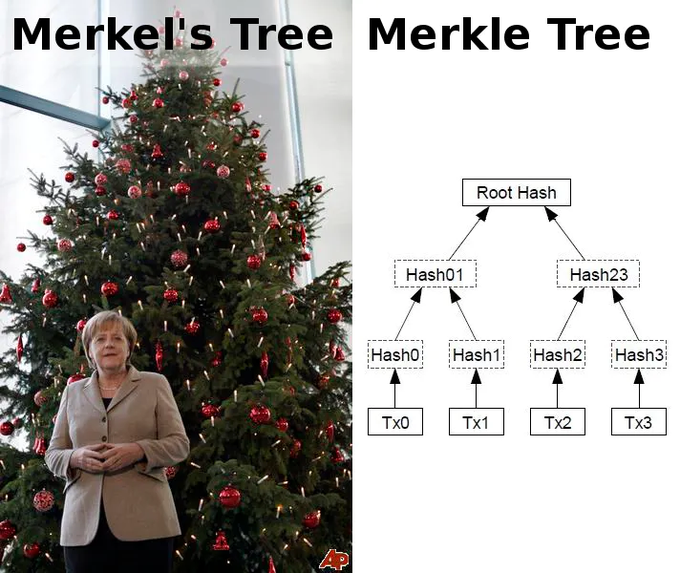

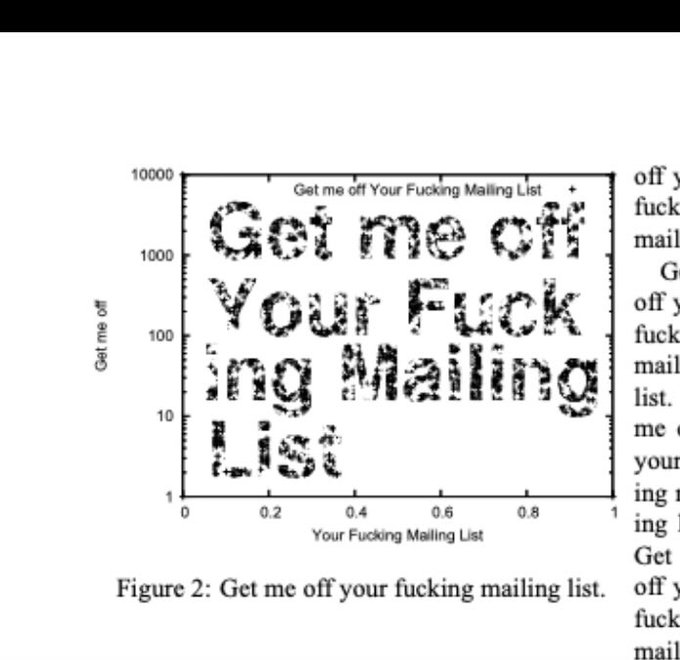

Finally, a tree all computer science folks can relate to.

Upside-down fig tree in Bacoli, Italy. "No one is quite sure how the tree ended up there or how it survived, but year after year it continues to grow downwards and bear figs."

#archaeohistories

319

6K

38K

4

78

558

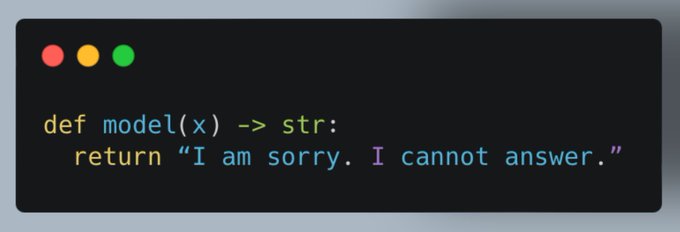

This is like insisting on baby-proofing every power tool.

If anything, this is an endorsement of how versatile and unlobotomized the Mistral model is as a *base model*.

If you are capable of running inference on an LLM, it is your responsibility to use it safely.

After spending just 20 minutes with the @MistralAI model, I am shocked by how unsafe it is. It is very rare these days to see a new model so readily reply to even the most malicious instructions. I am super excited about open-source LLMs, but this can't be it!

Examples below 🧵

217

107

768

22

42

542

Turns out you cannot replace decades of painstaking work optimizing around every kind of web content with an LLM and retrieval augmentation.

Who could’ve guessed. 🙃

30

31

524

Price to transcribe 26 minutes of audio:

Humans: $39 to $130 (*)

Rev AI: $6.50 (closed model)

Whisper on Mac: pennies

Open-source AI will upset so many companies' business models, but it will enable more business models and, most importantly, the individuals leaning on it to…

9

39

483
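Here is a back-of-the-envelope check on the transcription prices. Dividing each quoted total by the 26 minutes of audio gives a per-minute rate. Only the dollar figures come from the tweet. "Pennies" for Whisper is not a number, so it is left out. The names below are illustrative.

```python
# Totals quoted in the tweet for transcribing 26 minutes of audio (USD).
MINUTES = 26

quotes = {
    "Humans (low end)": 39.0,
    "Humans (high end)": 130.0,
    "Rev AI": 6.5,
}


def per_minute(total_usd: float, minutes: float = MINUTES) -> float:
    """Convert a total job price into a per-minute rate."""
    return total_usd / minutes


for name, total in quotes.items():
    print(f"{name}: ${per_minute(total):.2f}/min")
# Humans (low end): $1.50/min
# Humans (high end): $5.00/min
# Rev AI: $0.25/min
```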

I am absolutely in love with the mouse-over tooltips the @huggingface API documentation has. It clearly shows they understand their users' pain: ML APIs have too many arguments. If you are wondering why 🤗 is so popular, little things like these go a long way in reducing friction.

1

40

478

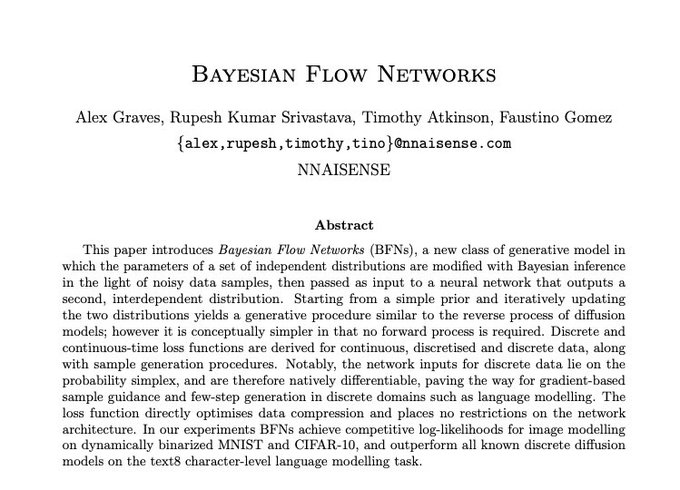

Anyone underestimating the power of autoregressive models has not fully figured out autoregressive models. Even people actively building autoregressive models have not fully figured them out either.

Hat-tip @natfriedman

16

37

474