Sanyam Bhutani

@bhutanisanyam1

Followers: 34,654

Following: 996

Media: 933

Statuses: 8,009

👨‍💻 Sr Data Scientist @h2oai | Previously: @weights_biases 🎙 Podcast Host @ctdsshow 👨‍🎓 International Fellow @fastdotai 🎲 Grandmaster @Kaggle

India

Joined October 2016

Pinned Tweet

This is the best week of my life 🙏

✅ Reached Kaggle Grandmaster tier

✅ My ML Hero & Guru:

@jeremyphoward

was kind enough to host me for an interview about my journey

I promise to continue creating ML content to the best of my ability & sincerely take up competitions next 🍵

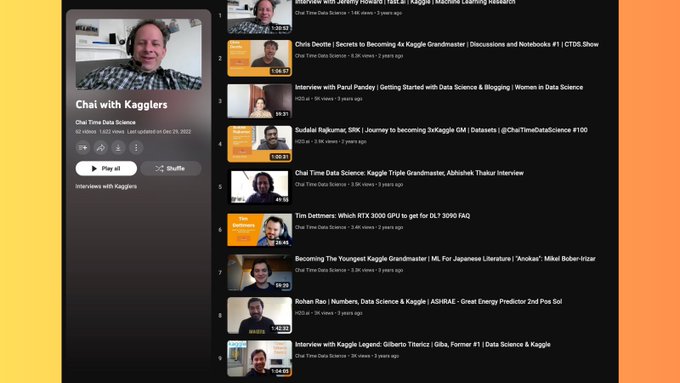

This week I'm filling in for regular "chai time data science" podcast host

@bhutanisanyam1

, with a very special interview with a recently-anointed Kaggle grandmaster...

10

40

267

19

10

268

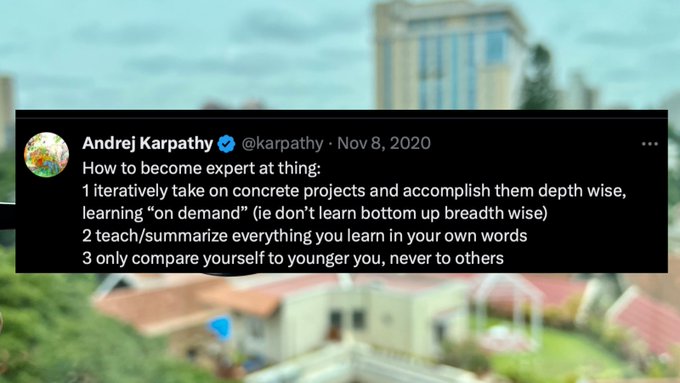

How to become an expert at anything 🙏

I rediscovered this gem by

@karpathy

in my bookmarks today

21

159

1K

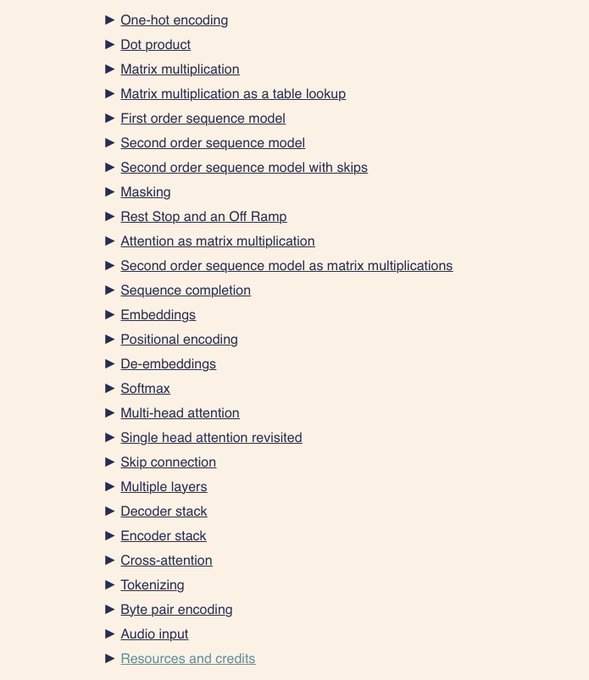

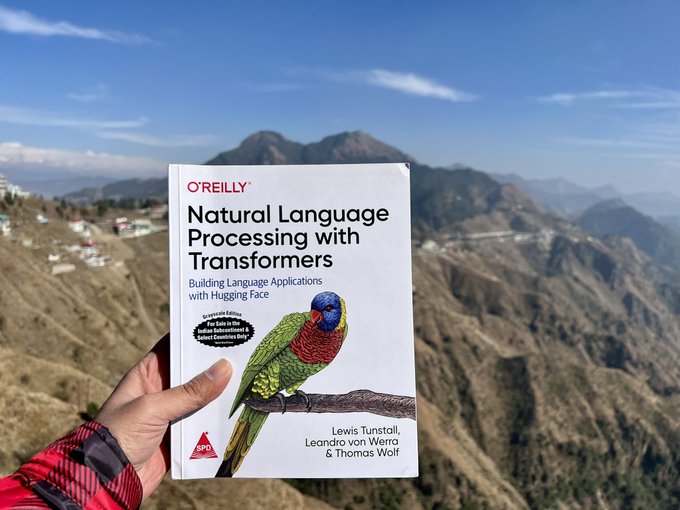

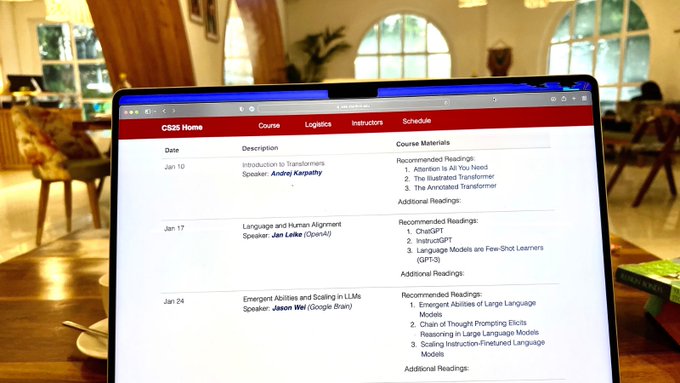

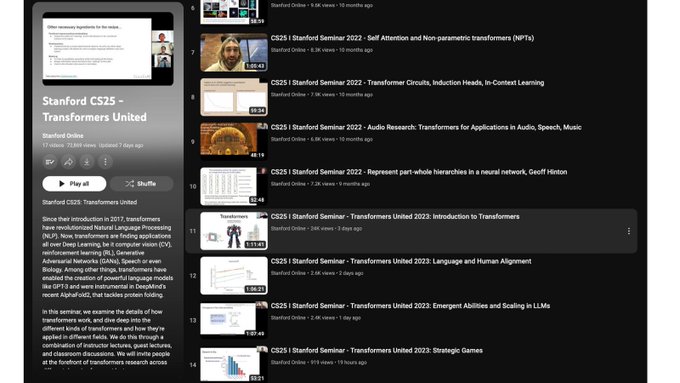

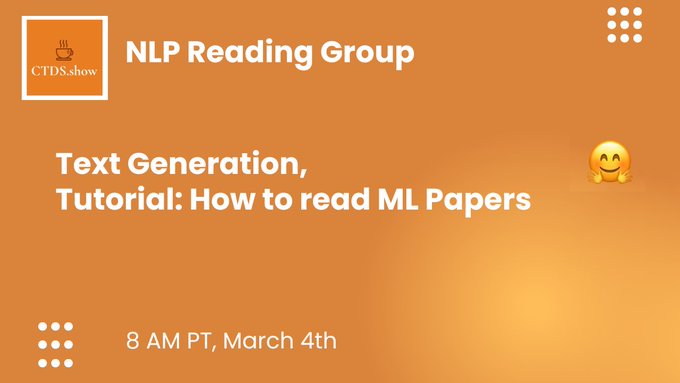

This is the best resource to get started in NLP in 2023 🙏

In 2 days, I will be kicking off a weekly study group to learn with everyone:

@_lewtun

will kindly join us for an opening AMA

18

120

967

The best summary of Transformers and their evolution 🙏

I found

@giffmana

’s slides from 2022 to be the best “pocket reference” on the topic.

Many posts have covered Transformers; however, this one also covers the state of the field before them and how they were adapted to different domains.

11

158

907

Best tutorial on setting up LLMs locally! 🙏

@Rob_Mulla

made an end-to-end video teaching how to install a Large Language Model, run it with a GUI, and connect it to your own data on your own machine.

All open source, running offline:

13

158

864

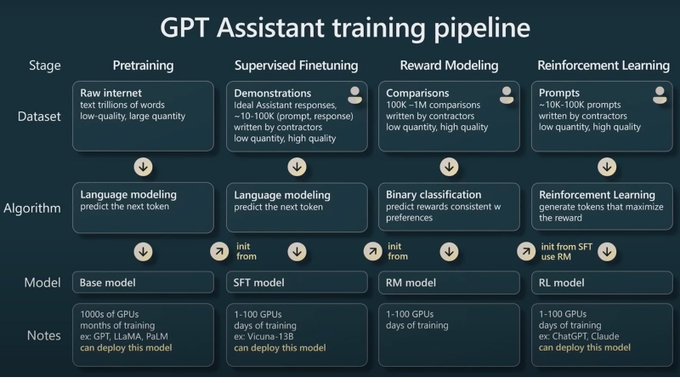

Watching “State of GPT” by

@karpathy

is the best 40 minutes you will spend this week 🙏

I actually found it really helpful for filling a lot of my knowledge gaps:

- Comparisons against human brain and LLM brain

- Why prompting works and why it is helpful to ask a model to “be

17

124

837

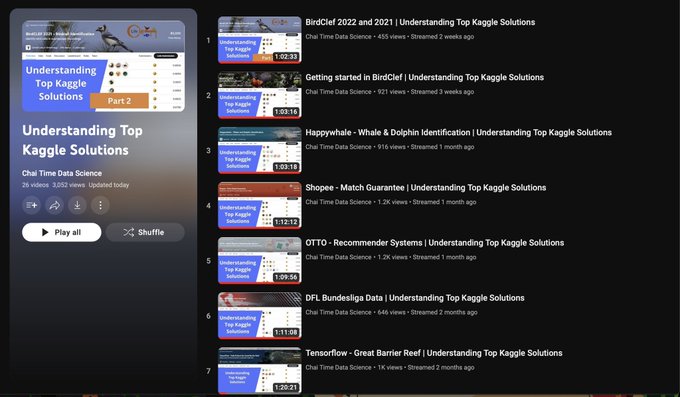

Officially wrapping up the

@kaggle

Top Solutions Series 🙏

I’ve hosted over 25 videos sharing and explaining the tricks and secrets of Kagglers across all domains of machine learning.

The series is quite complete & I’m graduating to more challenges:

13

174

822

The best tutorials on building LLM powered applications 📚

@GregKamradt

is an incredible teacher of

@LangChainAI

:

✅ Top down & applied series

✅ Amazing teaching style

✅ Very practical examples

20

158

797

MAJOR personal update:

I’ll be starting my work in a full time role

@h2oai

today as a Machine Learning Engineer and AI Content Creator!

I’m really excited to be a part of a team of many of my “ML Heroes” and THE best kagglers.

Recap on my ML journey:

73

60

749

Arxiv Chat: Chat w the latest papers 🙏

I made a really simple demo that makes it easy for me to understand the latest papers.

The whole app is <100 lines of code:

✅

@LangChainAI

for the main logic

✅

@h2oai

Wave for the UI

✅ ChatGPT for asking Qs

27

93

730

Run 13B model on an iPhone! 🤯

Just finished reading

@Tim_Dettmers

’ amazing work on SpQR.

SpQR unlocks 3.35 bit quantisation which lets us run 33B models on 3090s and 13B models on an iPhone.

Here are my notes from the paper:

- Quantisation is basically like compressing the

29

113

704

Insanely detailed notes on Training LLMs! 🙏

@StasBekman

has shared his field notes on training foundational models.

These are insanely detailed, cover a lot of gotchas and caveats.

A crispy read with well documented code, in depth discussions:

6

129

692

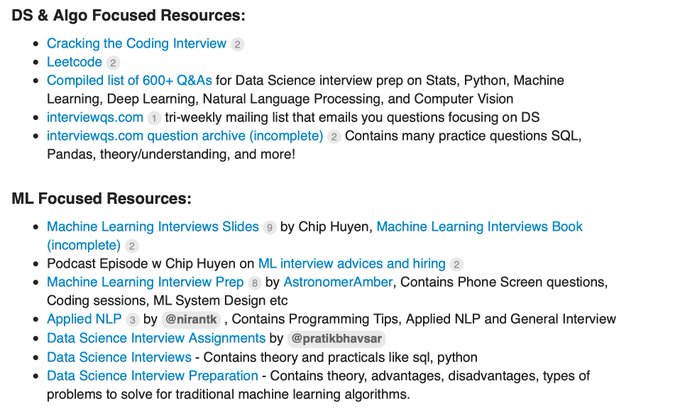

If you're looking for the best resources to prepare for ML interviews in 2020,

Here's a wiki from the

@fastdotai

forums:

Also, all contributions are welcomed!

6

155

681

"Start by learning the basics really well [..] Most advanced research projects require you to be excellent at the basics [..]

@AndrewYNg

always told me to work on thorough mastery of these basics"

Read the complete interview w

@goodfellow_ian

@hackernoon

:

4

185

662

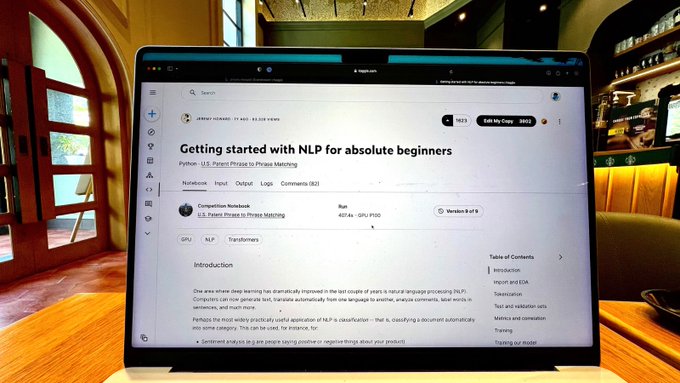

NLP for absolute beginners 🙏

@jeremyphoward

kindly shared the Stanford materials, which are an incredibly high-signal resource for NLP.

Here’s another tutorial teaching you the absolute NLP basics, up to how to make a submission on Kaggle, by Jeremy himself!

3

142

649

I'm tea-ry eyed. I can't believe this :')

I've reached the

@kaggle

Grandmaster tier today! Thank you so much everyone!🍵

+ My sincerest gratitude to

@jeremyphoward

for introducing me to Kaggle and to

@vopani

for pushing me to pursue it! 🙏

65

15

614

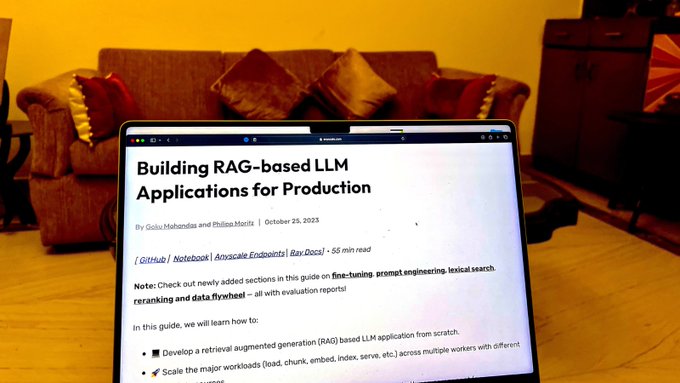

The definitive guide to RAG in production! 🙏

@GokuMohandas

walks us through implementing RAG from scratch, building a scalable app

It now has updated discussion on embedding fine-tuning, re-ranking and effectively routing requests

I think this is easily the most complete

12

92

575

This is just surreal!

I just won the

@hackernoon

Contributor of the year award for 2 categories! 🙏🍵

- Machine Learning

- Tutorial

44

31

548

I finally got my copy of Deep Learning w Python by

@fchollet

! 📚

I couldn't be more excited about this 🙏

I'll be starting a reading group on Jan 8, and Francois has kindly agreed to join for an AMA! 🍵

Please send your Qs about Keras/the book here!

Links TBD Soon!

23

29

533

The most detailed and practical write up on applying LLMs! 🙏

This reads like a survey paper but written for the industry and applications

@eugeneyan

is known as the best NLP writer for a reason. It’s the most comprehensive overview of patterns on building Large Language Models

7

97

510

Personal Update Thread on The

@GoogleAI

Residency:

Earlier this year I got a life-changing email. My Google AI Residency application had made it to the final interview rounds! This spring, Google flew me out to NYC where I gave my "On-site" interviews.

15

44

460

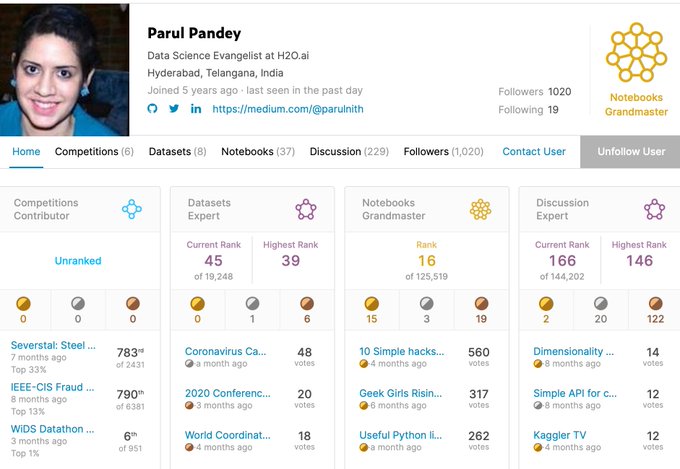

"Machine Learning doesn’t have to be a black box anymore. What use is a good model if we cannot explain the results to others. Interpretability is as important as creating a model."

A neat kernel on "Interpreting Machine Learning models" by

@pandeyparul

5

99

444

I'm super excited to share that I've joined

@weights_biases

! 🍵

I've been a fan of their community since the early days, I'm really looking forward to contributing to it further.

Please expect study groups, events, Kaggle deep dives, and much more! 🙏

56

20

444

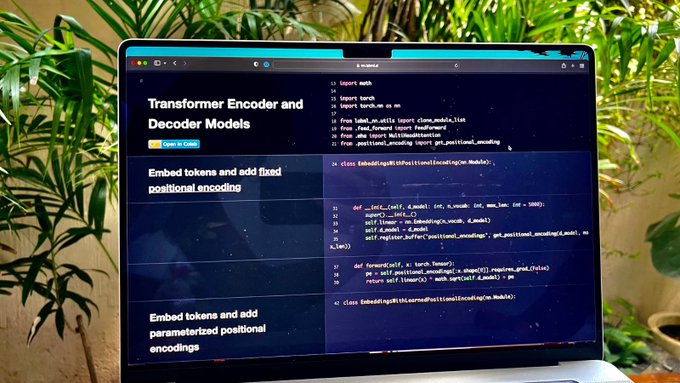

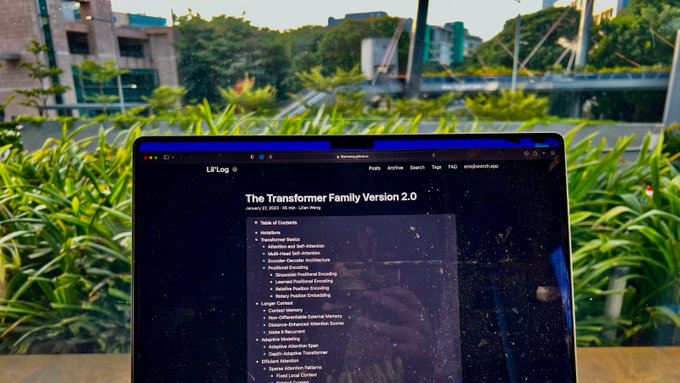

Incredible recap of key Transformer concepts! 🙏

What I really like about this write-up is that it covers 30 key papers and flows really well as a recap

@lilianweng

has written so many incredible posts, this one captures all key architectural concepts:

- Transformer basics:

6

74

433

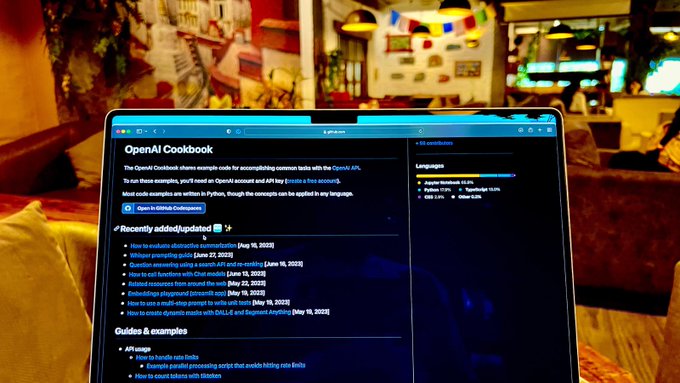

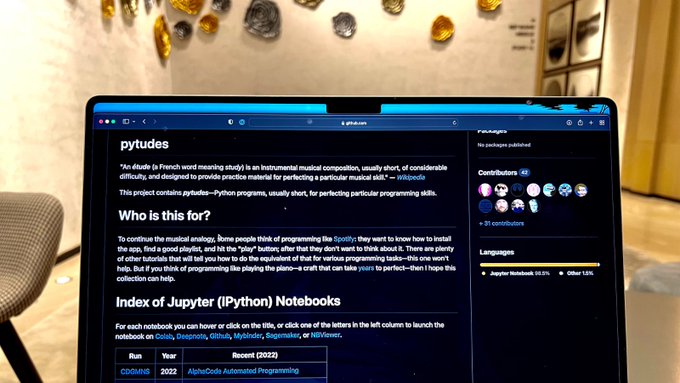

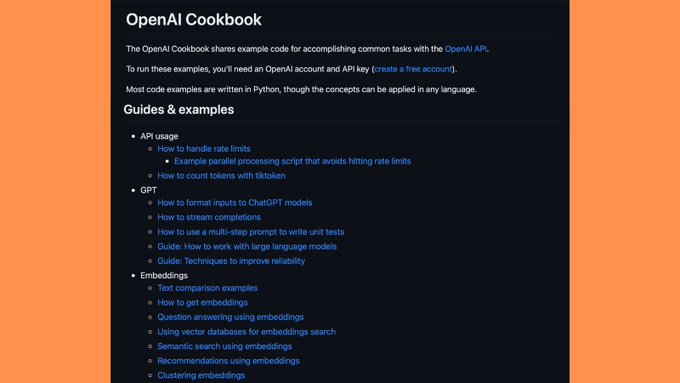

The most underrated LLM Cookbook! 🙏

@OpenAI

’s guide is an incredibly underrated resource

My favourite bit is the practical advice and guides sprinkled throughout the examples

It also has the highest quality code of many learning resources

The examples cover all important

4

80

425

Great tutorial on Deploying Deep Learning Models On Web And Mobile (Along with a working demo!) by

@reshamas

and Nidhin P.

They've used the library, but the tutorial can be used to create a web and mobile app using any framework

1

105

427

GitHub GPT: Understand any repository! 🚀

Here is a demo where I played with connecting GPT-4 to any repository.

The main logic is <20 lines of code:

✅

@LangChainAI

for the main logic

✅

@activeloopai

for storing embeddings

✅ Simple App that runs in the terminal

15

62

423

I’m in happy tears to be awarded the “Top GenAI Scientist” award by

@AnalyticsVidhya

🙏

I feel really honoured by the recognition. Will make this one count!

31

9

422

A perfect intro to open source LLMs! 🙏

The course by

@asangani7

is now my top recommendation for getting started with Large Language Models:

- Just enough theory for a whole picture

- Teaches prompting, special tokens and conversational agents

- Perfectly abstracts the

0

56

338

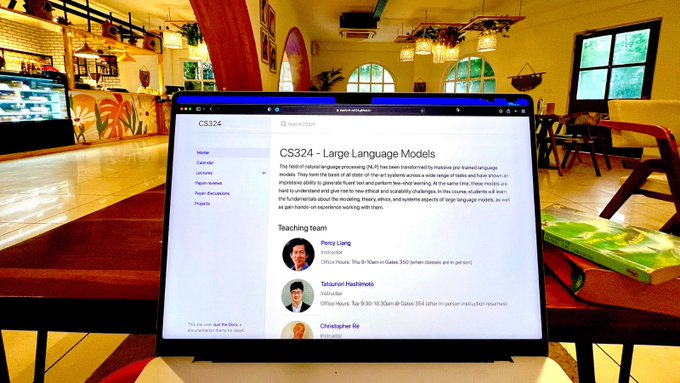

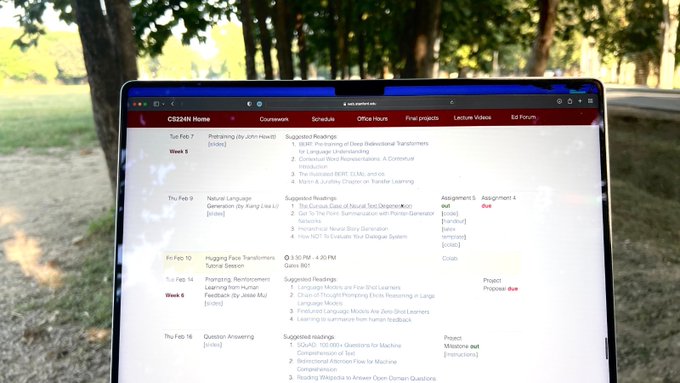

The best NLP lectures! 🙏

@chrmanning

’s latest CS224n lectures are finally live!

The 14 hours of new content covers Large Language Models, Interpretability, and some crispy framework tutorials:

4

76

393

My next goal:

I will spend at least 500 hours this year competing on

@kaggle

🍵

If I fail to do it, I will not drink chai for an entire year and will give away all my GPUs 🙏

43

12

381

The Interview with

@kaggle

Grandmaster and Senior CV Engineer

@LyftLevel5

: Vladimir Iglovikov

@viglovikov

just got published

@hackernoon

. The Grandmaster has really been kind enough to share *ALL* of his secrets, you can find all of them here:

8

87

377

Very practical course on applying LLMs! 🙏

@HamelHusain

had mentioned that langchain makes for a great cookbook of cutting edge ideas.

This course is a refreshingly applied one teaching how to use

@LangChainAI

to build different applications.

My favourite part is it’s

6

63

370

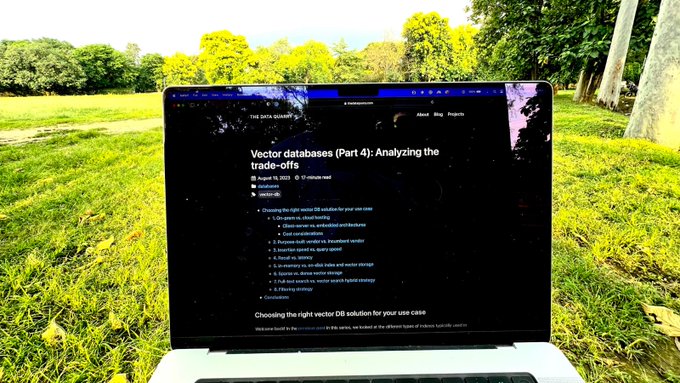

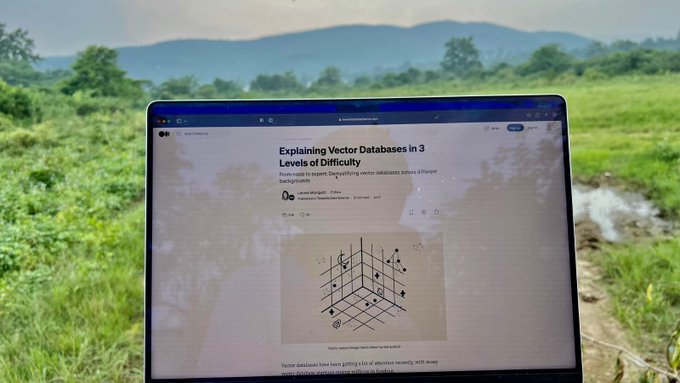

The most comprehensive series I’ve read on Vector databases! 💾

Most of us got exposed to vector dbs via Langchain or llamaindex documentation

However, there’s a lot of nuance and many options to select from when building Large Language Model apps

@tech_optimist

has written a 4

9

59

372

Terrific tutorial on fine-tuning LLMs on your own data 👨‍🔬

Tomaz Bratanic has shared a really crispy write-up on creating a Cypher-generating LLM:

✅ All Open Source tools

✅ Walkthrough of setup

✅ Detailed steps on how to solve this

@h2oai

LLMStudio

5

66

360

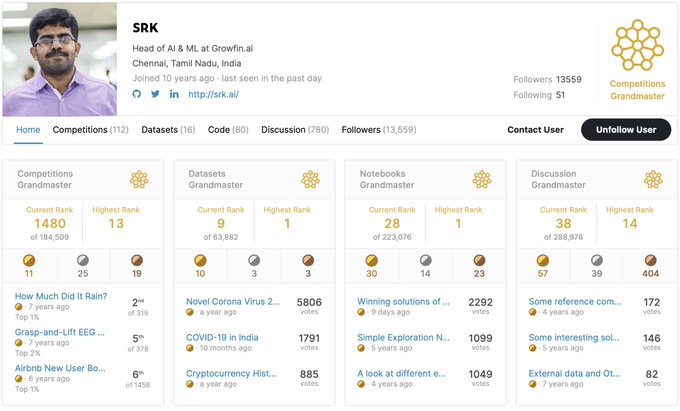

Today is a glorious day for

@kaggle

community! 🍵

Kaggle legend:

@sudalairajkumar

has conquered all categories and become the newest 4x Grandmaster! 🙏

11

19

357

“A cookbook of Self-Supervised Learning”

@ylecun

et al. 👩‍🍳👨‍🍳

SSL is the tasty sauce behind a lot of the success in Language models, Computer Vision and beyond.

It permits working with limited data by allowing you to include unlabelled data in your workflow. Hence becoming “the

5

64

341

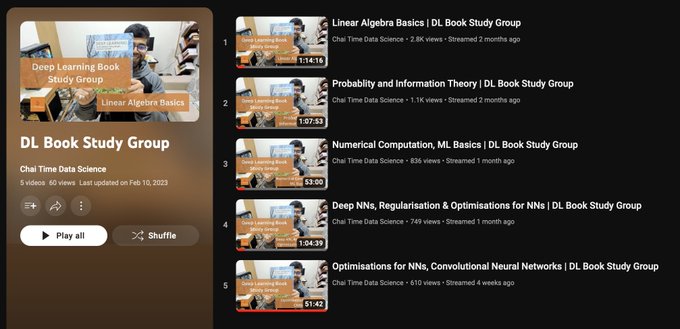

NLP with Transformers Study Group 🤗

Starting next week, I’m hosting a study group on the absolute gem book by

@huggingface

team 🙏

@_lewtun

has kindly agreed to join the kickoff session. I can’t think of a better way to learn NLP:

10

48

337

Weekly

@kaggle

Top Solutions Study group 🙏

Starting this Sunday, I will be going through top solutions of recently ended competitions that might be relevant to the ongoing ones:

4

58

330

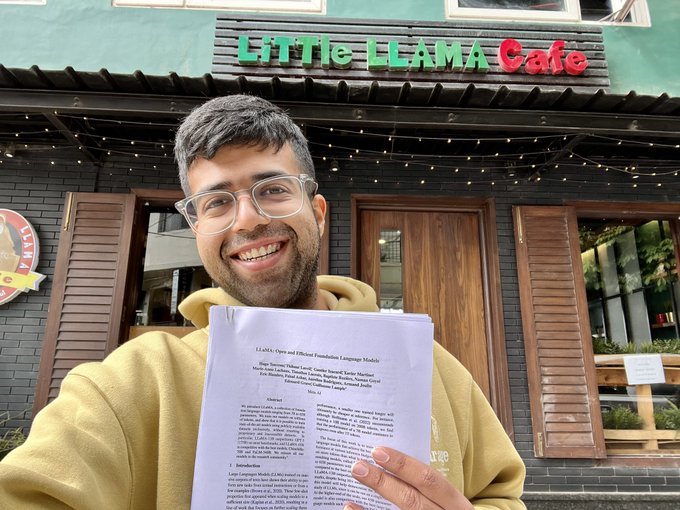

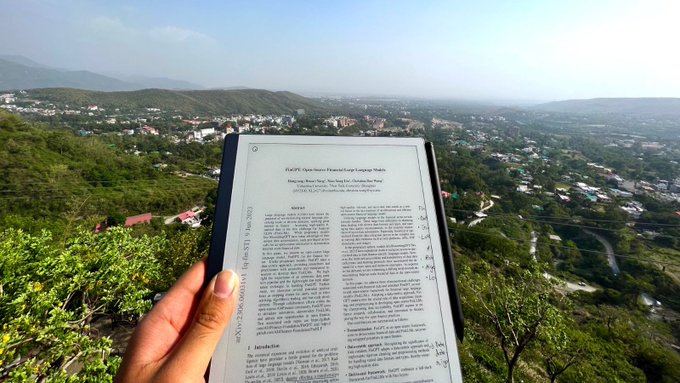

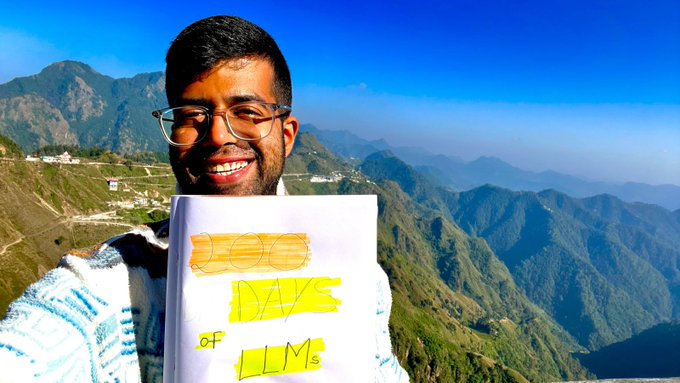

Am I doing

@karpathy

and chill right?

Cafe overlooking Himalayas, tasty breakfast and lecture rewatch 😋🙏

19

2

328

This is THE BEST CAREER ADVICE for Data Science that I’ve ever read:

IMO

@kaggle

forums often have write ups/advice of *much* higher quality than most of the blogposts out there

I’d highly recommend reading all of

@ryan_chesler

’s write ups on Kaggle.

0

70

326

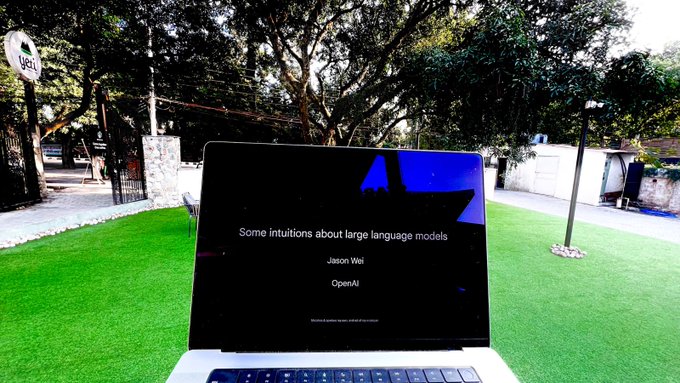

This really helped me understand why LLMs work! 🙏

- Why next word prediction is powerful

- Why prompting works

- What we know about emergence

Thanks

@_jasonwei

for the gem:

1

39

328

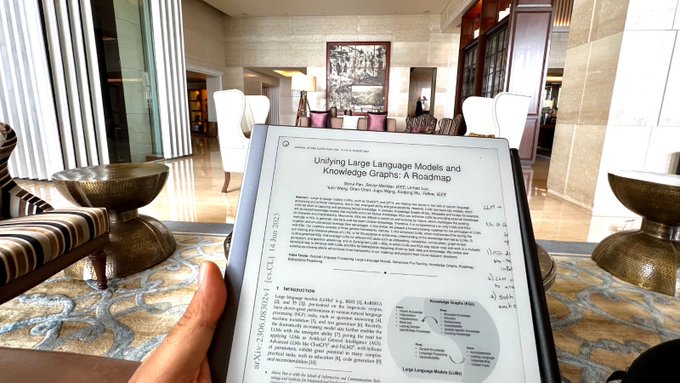

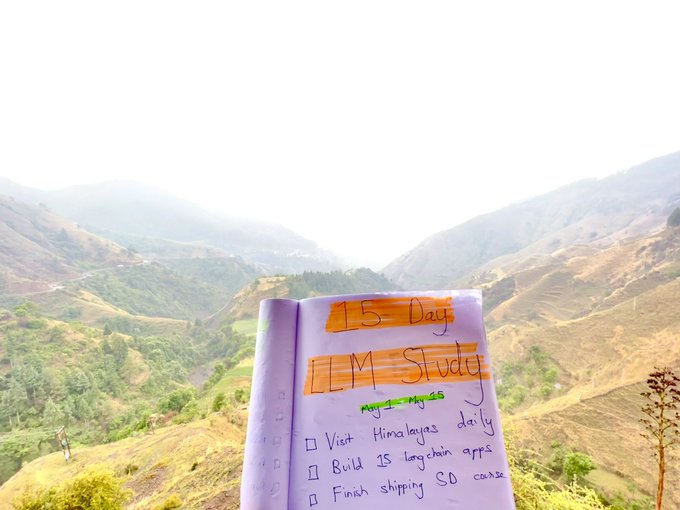

My 15 day LLM Study Vacation! 🚀

The plan for next 2 weeks:

✅ Hike/Visit a Himalayan mountain daily with a paper to read

✅ Build 15

@LangChainAI

apps

✅ Finish catching up on LLM research

22

12

314

An extremely crispy intro to Vector Databases! 🙏

Have you watched those WIRED videos explaining concepts at incremental levels of detail?

@helloiamleonie

has done the same for vector DBs

She teaches the topic using the Feynman technique, in 3 levels of detail

5

52

308

If you're looking for a code-first NLP course 👨‍💻

There is an NLP course by

@fastdotai

covering 🕵️‍♂️

- What is NLP

- Topic Modelling

- Sentiment Classification

- Regex

- LM

- RNNs

- Transformers

- Bias & Ethics

Blog:

YT Playlist:

5

64

302

I'm really excited to share the interview with my and

@fastdotai

family's greatest ML Hero:

@jeremyphoward

Not adding any Tweet introductions this time 🍵

Audio:

Show Notes:

Video:

11

60

307

Strong LLM blog recommendation! 🙏

For any practitioner interested in Large Language Models, this is the best blog

@eugeneyan

is magical at combining industrial patterns, experiments & research ideas very clearly

His weekend exp are my fav read:

3

40

304

This is my favourite type of tutorial! 🙏

Remember the awesome fastai tutorials that share only the necessary theory and quickly dive into applying it?

@Sentdex

teaches us QLoRA in exactly that manner, by applying it to give llama-2 more personality

6

42

301

2022 Goals:

1 Workout for 350 hours

2 Compete on

@kaggle

for 200 Hours

3 Write 3 Kaggle Kernels

4 Host 50 meetups & interviews

@weights_biases

5 Spend 500 hours reading

6 Write 4 High-Quality

@PyTorch

blogposts

7 Publish 1 Open Source Repo

11

15

295

Huge congratulations to

@pandeyparul

on becoming the 1st Woman

@kaggle

Kernels Grandmaster from India.

And to the best of my knowledge, 2nd one in the world.

Although, I really hope she won't stop sharing her amazing kernels with us🍵

4

22

288

Official guide on the GPT-4 API 👨‍🍳

@OpenAI

cookbook has a set of crispy examples teaching how to build on the API.

While it doesn’t have a “0 to 100” flow, it’s worth going through in an evening. The code is very readable.

3

48

286

The biggest LLM release!🚨

@AnthropicAI

have given us the “dream” Large Language model with Claude 2:

- Trained till early 2023

- Context length up to 200k tokens

- Ability to upload documents

- Better code abilities

- Free beta for public

Please see my demo below of comparing

5

48

285