Ippi

@Coolzippity

Followers

487

Following

54K

Media

805

Statuses

7K

Joined May 2014

Extremely excited to announce LigandForge 🧬⚡ Generate high-quality peptides at over 10,000x, and up to 1,000,000x, the speed of state-of-the-art methods like Bindcraft and Boltzgen. Predict binding affinity with 83% correlation to experimental binding data. 150 protein targets benchmarked.

54

165

1K

“OpenClaw exceeded what Linux did in 30 years”

"OpenClaw is the new computer." — Jensen Huang This is the early PC era all over again. A few power users see it. Everyone else hasn't even started. "It's the most popular open source project in the history of humanity, and it did so in just a few weeks. It exceeded

135

768

14K

New windirstat just dropped.

5 minutes ago, @karpathy just dropped karpathy/jobs! he scraped every job in the US economy (342 occupations from BLS), scored each one's AI exposure 0-10 using an LLM, and visualized it as a treemap. if your whole job happens on a screen you're cooked. average score across

0

0

0

Lord Of The Rings didn't CGI Isengard. They built it.

15

161

2K

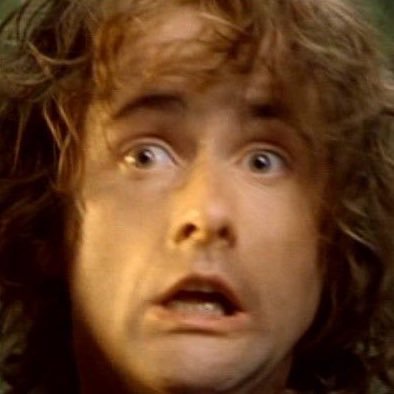

how it feels to press Ctrl + C three times to stop a terminal process that’s still running

57

783

11K

Posterity will regard today's medicine as the treatment of dynamic systems with static tools. https://t.co/dEV7GT1OHR

nature.com

Nature Communications - Real-time tracking of levodopa dynamics is key for precise Parkinson’s therapy. Here, the authors develop a continuous monitoring system with a nano MIP-functionalized...

0

0

0

It's time for the Yann resurgence

0

0

0

*fixes sleep schedule* *gets annoyed by how endless the days suddenly feel*

44

3K

87K

When she's begging you to launch the prototype but you want to rewrite the entire backend in C++ because you're afraid of losing 0.0001ms by keeping it in Python

35

159

4K

When you ask some high elves of rivendell if they want to join your quest to erebor and they hit you with that light of valinor stare

34

122

4K

Sam Altman right now:

BREAKING: ANTHROPIC REVENUE JUST DOUBLED
Anthropic revenue run rate $20 billion
>$9 billion at the end of 2025
>$14 billion a few weeks ago
>$19 billion now
MORE THAN DOUBLED in 2 months
>Valuation $380 billion
>#1 on the App Store
claude code cooked

28

166

4K

Z-image trains very easily and needs a relatively small amount of compute to do so considering its size. The most artistically compliant model i have touched to date. examples from a small test tune:

5

2

10

Marco Rubio finding out he has to run Anthropic now too.

315

2K

23K