Sophia Sanborn

@naturecomputes

Followers

4,399

Following

2,824

Media

23

Statuses

597

Theory, neural networks, neurotechnology @ | Organizer @neur_reps | Prev: @geometric_intel @berkeley_ai @redwood_neuro @intelai @harvard

San Francisco

Joined March 2018


Pinned Tweet

In this new paper, led by @giovannimarchet, we present a unique theoretical result that provides guarantees for the concrete representational structure expected to emerge in a learning system

Glad to announce our new preprint with C. Hillar, D. Kragic, and @naturecomputes!

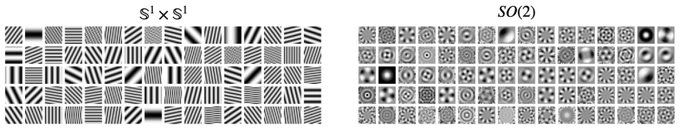

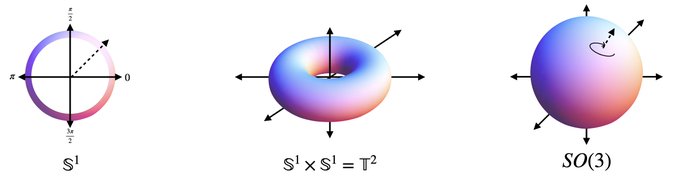

We show via group theory how Fourier features emerge in invariant neural networks -- a step towards a mathematical understanding of representational universality. 🧵1/n

11

124

600

4

57

340

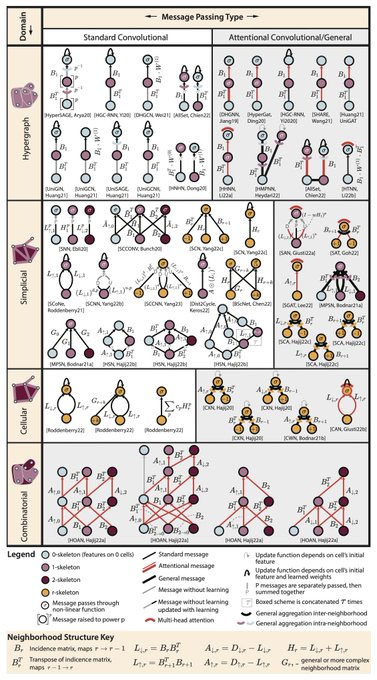

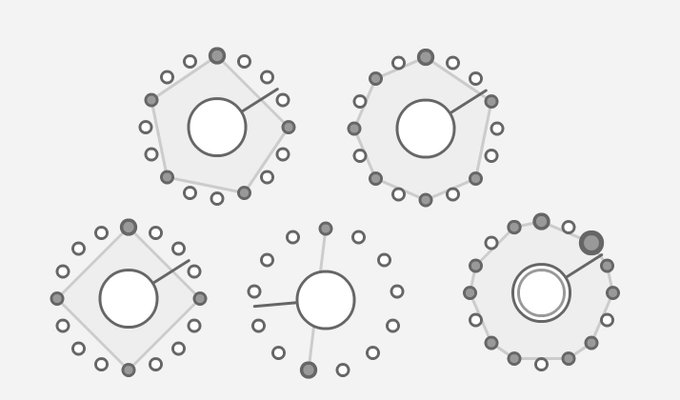

This figure summarizes the landscape of topological neural network architectures on hypergraphs, simplicial, cellular, & combinatorial complexes in a unified graphical notation. Check out our paper and full repository of TNN equations for more ✨

9

200

936

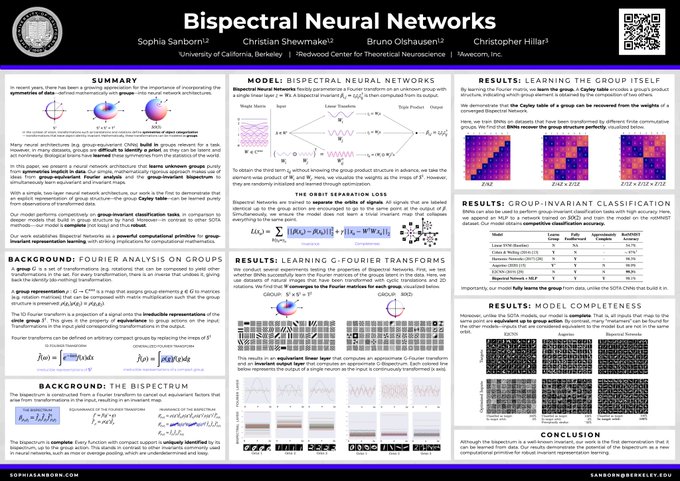

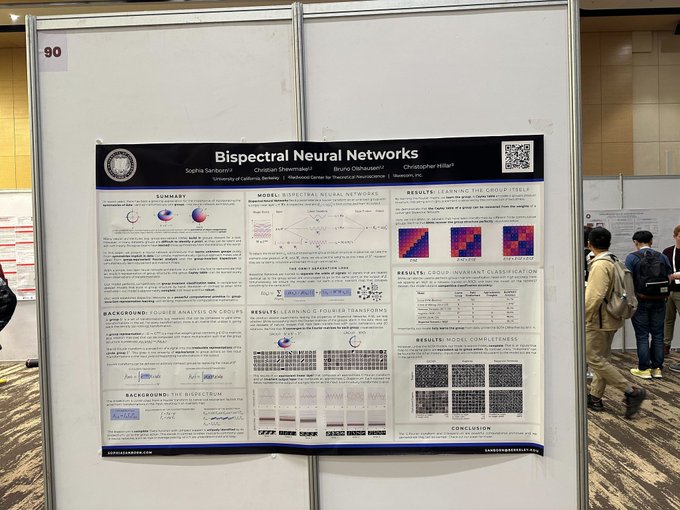

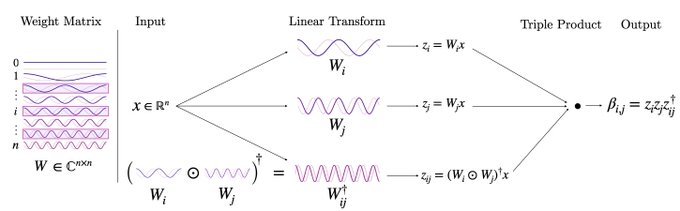

Bispectral Neural Networks go to #ICLR2023!

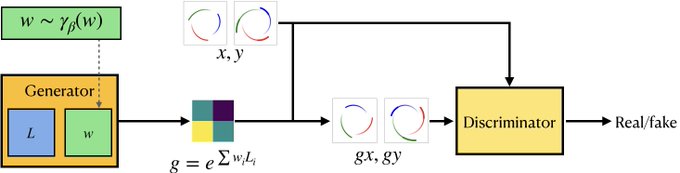

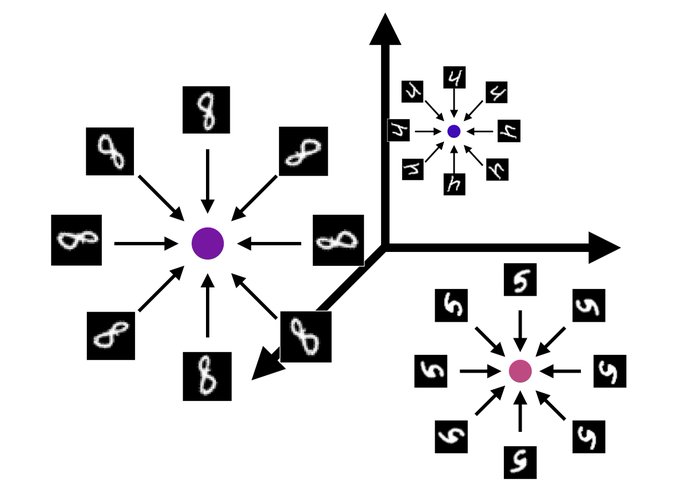

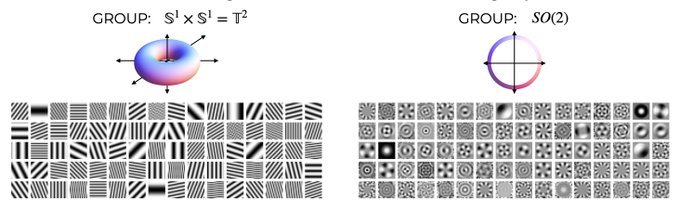

In this work, we present a new neural network architecture capable of learning unknown groups purely from the symmetries implicit in data, with @cashewmake2, Bruno Olshausen, and Christopher Hillar

1/17

10

105

493

🎉 If you're interested in working on understanding visual representations in deep networks through the lens of symmetry & geometry, the @geometric_intel lab is recruiting!

10

30

340

Our workshop on Symmetry and Geometry in Neural Representations has been accepted to @NeurIPSConf 2022!

We've put together a lineup of incredible speakers and panelists from 🧠 neuroscience, 🤖 geometric deep learning, and 🌐 geometric statistics.

4

43

308

If you find this interesting, check out our ICLR 2023 paper, in which we demonstrate that you can *learn* group-Fourier transforms by learning to be invariant to transformations in data:

3

42

223

Seeking: Creative and ambitious computational neuroscience / ML PhDs interested in building next-generation brain-computer interfaces. My team at is hiring research scientists. Reach out at sophias@science.xyz for more info.

4

55

214

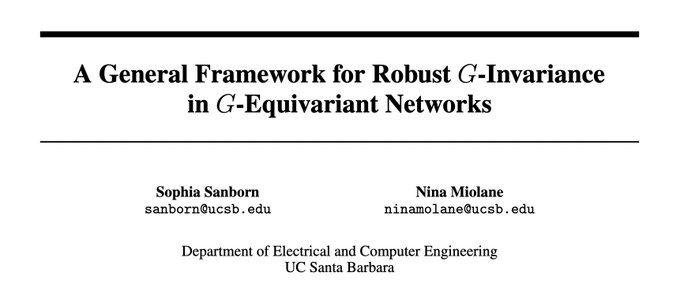

Looking forward to presenting our work at @NeurIPSConf in December!

Here we use the triple correlation on groups to define a group-invariant layer for group-equivariant networks that improves both accuracy and robustness. Stay tuned for the camera-ready release!

w/ @ninamiolane

0

21

135

On this episode of the @twimlai podcast, I talk about some of my favorite topics - universality, compression, group theory - and how mathematics reveals principles of neural representation that transcend substrate

Today we’re joined by Sophia Sanborn (@naturecomputes) from @UCSB to discuss the universality between neural representations and deep neural networks along with her #ICLR2023 paper on Bispectral Neural Networks.

🎧🎥 Check out the full episode at

0

8

42

4

22

132

The phenomenon of universality is a fascinating one:

Why do certain features consistently emerge across different neural networks (incl. the brain!) trained on different datasets?

In our forthcoming paper, we provide one answer, grounded in group representation theory

Glad to share that our paper titled 'Harmonics of Learning' got accepted at the Conference on Learning Theory (COLT 2024)!

This is joint work with C. Hillar, D. Kragic and S. Sanborn (@naturecomputes)

2

3

37

3

14

128

Thrilled to receive this honor. Thank you @pimsmath and @SimonsFdn!

Meet Sophia @naturecomputes from our lab🤩

Sophia studies fascinating geometric properties of neural representations @neur_reps @ucsbcs

She was just awarded the prestigious PIMS-Simons fellowship for her outstanding research in mathematical sciences!🏆

2

6

72

11

4

119

In a new paper led by @hopfbifurcator, we bring the power of Riemannian geometry to the problem of neural decoding 🌐🧠

To appear at the @CVPR @TAGinDS workshop: Quantifying Local Extrinsic Curvature in Neural Manifolds

With co-authors @manusmad, @kdaoduc, & @ninamiolane

2

25

102

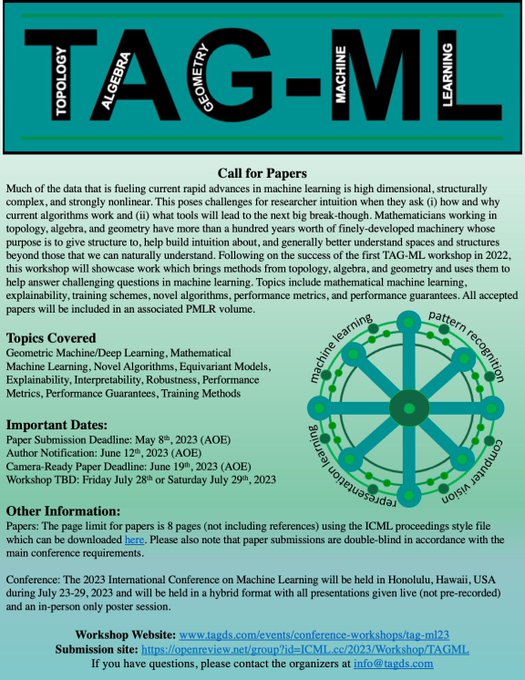

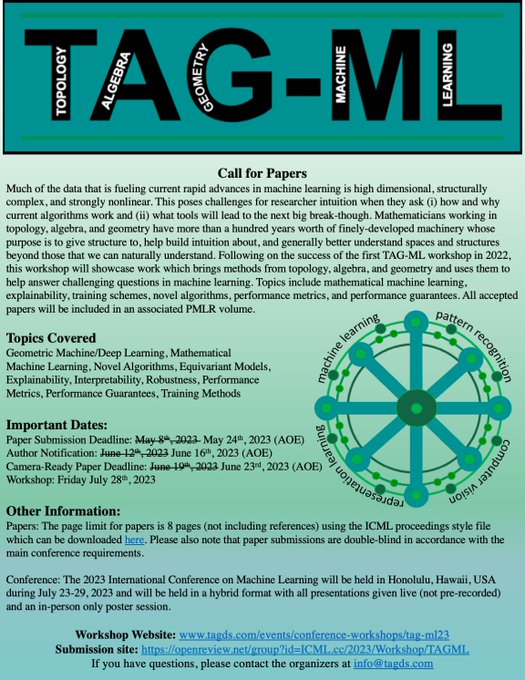

This year's @icmlconf will host the 2nd annual Topology, Algebra, and Geometry in ML Workshop 🌐

Stay tuned for our call for papers 📄

Can't wait to bring the TAG community to Hawaii 🌴

Co-organized w/ @emerson_tegan @HenryKvinge @Pseudomanifold @ninamiolane @tdoster @TAGinDS

0

8

83

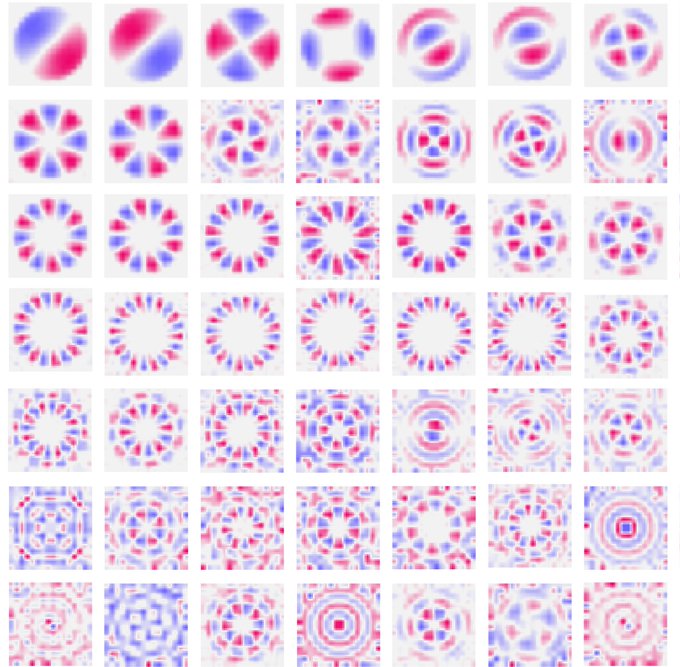

Our latest pre-print develops a method for automating interpretability research in vision models 🤖👁️

Our method is grounded in human perceptual judgments, but is fully scalable 👥

Preprint alert: Superposition in CNNs and Brains

Context: Recent work on LLMs showed how sparse coding can recover interpretable representations in transformers @AnthropicAI

TL;DR: Concurrently, we have been doing similar analyses in vision models and brains.

4

73

313

1

16

78

Curious about how Riemannian geometry and VAEs can be used to understand neural population codes? Check out our latest pre-print, led by Francisco Acosta (@hopfbifurcator), with myself, @kdaoduc, @manusmad, and @ninamiolane

Check out my first (!) first-author preprint, with @naturecomputes, @kdaoduc, @manusmad, and @ninamiolane:

We propose a method for calculating the curvature of neural manifolds, using deep generative models and Riemannian geometry.

1/7

6

30

154

1

7

75

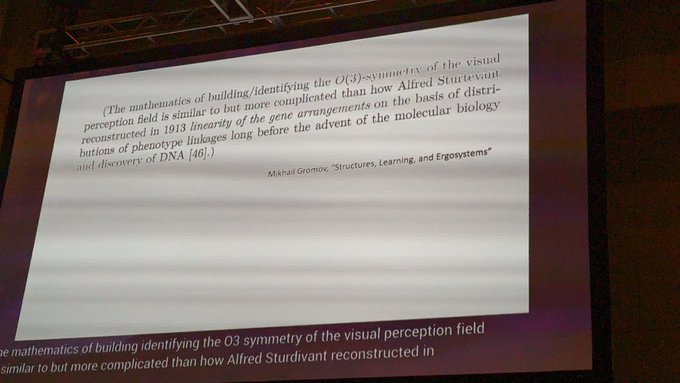

A very interesting and prescient quote from Mikhail Gromov in @doristsao's @neur_reps talk on constructing visual representations through local diffeomorphisms

2

11

68

Great opportunities in the @geometric_intel lab for researchers interested in the intersection of geometry, topology, deep learning, and neuroscience

0

7

66

Are there principles of representation learning that transcend model architecture and substrate?

Looking forward to discussing this and more in the new 🔵 @unireps @NeurIPSConf workshop 🔴

We're excited to announce the first edition of 🔵🔴 UniReps: the Workshop on Unifying Representations in Neural Models! 🧠

To be held at @NeurIPSConf 2023!

SUBMISSION DEADLINE: 4 October

Check out our Call for Papers, lineup of speakers and schedule at:

3

34

95

0

7

65

@CimesaLjubica I highly recommend Principles of Neural Design by Sterling & Laughlin: a beautiful book that reverse engineers the structure of neural systems from the physical / environmental constraints faced by organisms

1

8

64

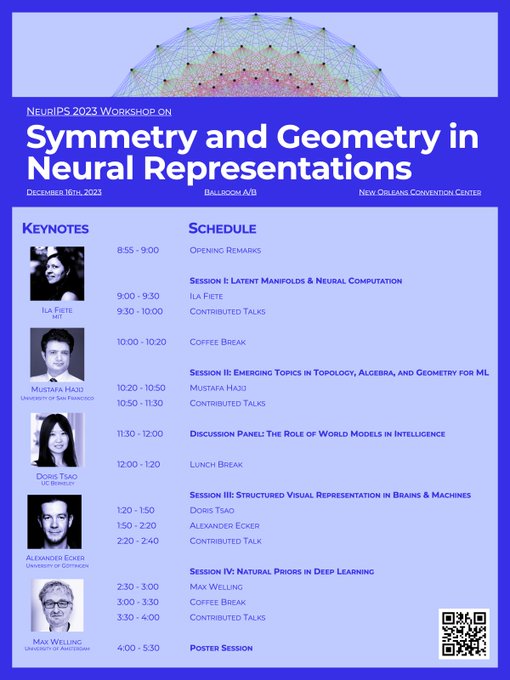

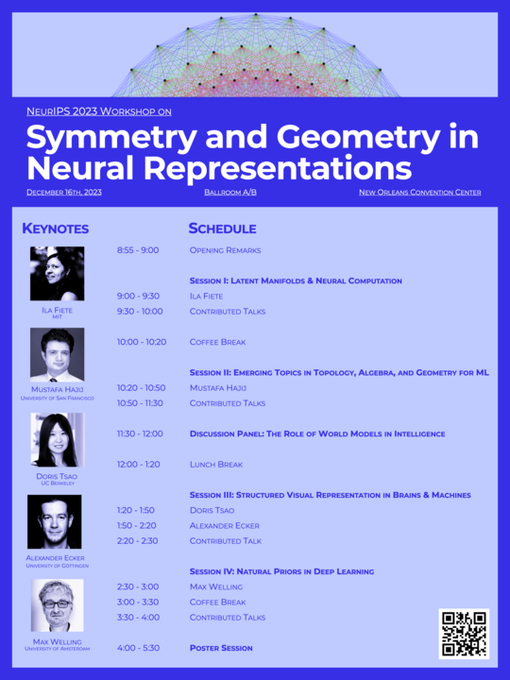

Looking forward to a day of dynamic presentations and conversations with the NeurReps community. Join us on December 16th in Ballroom A/B

NeurReps is this Saturday Dec 16 in Ballroom A/B at @NeurIPSConf!

Come out for an exciting program of talks, posters, and discussions at the intersection of deep learning, higher mathematics, and computational neuroscience

6

41

175

0

10

62

Interested in getting into topological deep learning? Check out the TDL Coding Challenge at #ICML2023 hosted by @TAGinDS

0

10

61

🧐 Getting into Topological Deep Learning?

👩💻 Try PyT, a new and comprehensive platform for deep learning on topological domains

🪢 Built on top of PyTorch, PyT libraries standardize diverse models and common operations into a single unifying framework

Start building your topological neural networks today with #PyT.

#TopoModelX #TopoNetX #TopoEmbedX

@ninamiolane @PaulRosenPHD @tolga_birdal @HajijMustafa @mathildepapillo @naturecomputes @ghadazamzmi

0

17

44

0

11

56

Check out the proceedings of the 2022 NeurIPS Workshop on Symmetry and Geometry in Neural Representations, now online with PMLR ✨ @NeurIPSConf @neur_reps

The proceedings of the 2022 NeurReps Workshop are now online!

PMLR Volume 197: NeurIPS Workshop on Symmetry and Geometry in Neural Representations features 21 fantastic papers from our contributing authors

View online here:

@NeurIPSConf

1

19

60

0

6

56

A fantastic resource for those interested in manifold learning for neural population codes 🧠

We're also happy to share our 'tutorial on generative models', which @marineschimel, @davindi09 and I generated for this workshop:

It consists of three notebooks giving an overview of some of the models discussed in the workshop!

1

7

59

0

4

52

At NeurIPS this week. Come find me at the following places:

- Thu Poster Session 5 10:45: #1016 on Invariance in G-CNNs
- Fri @unireps Workshop 9:00 - 9:30: Talk on Symmetry & Universality
- Sat @neur_reps Workshop 11:30 - 12:00: Moderating the panel / organizing all day ✨

1

6

50

Check out our work featured in @patrickmineault's excellent NeuroAI paper roundup: polysemanticity & mechanistic interpretability in deep vision models & the visual cortex, led by @klindt_david 👁️🧠

It's new post day 🎉 Could a neuroscientist understand an ANN? I cover recent work in mechanistic interpretability at @AnthropicAI led by @ch402 and at UCSB with @naturecomputes @ninamiolane @KlindtDavid

0

25

132

0

7

50

@IntuitMachine Nice paper from Rodola and colleagues! You might find our recent paper on symmetry and universality interesting:

2

5

50

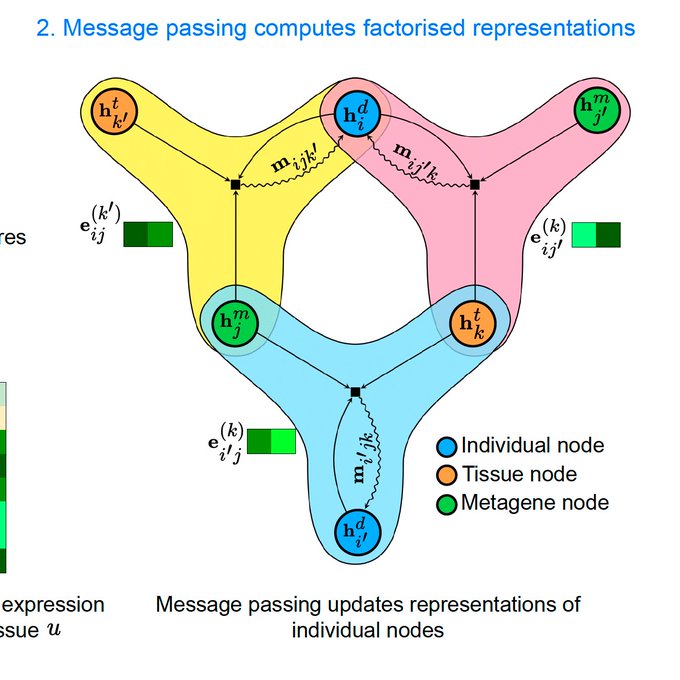

Beautiful real-world application of hypergraph neural networks!

1

4

48

⏰ Just two weeks to the deadline for the ICML Workshop on Topology, Algebra, and Geometry in ML!

🗓️ Deadline: May 8 AOE

🔗 Click here for submission instructions and more details

🧑🏫 We look forward to seeing your work!

0

8

49

I'm at @NeurIPSConf all week and looking to meet as many new people as possible

If you're interested in the intersection of geometry, deep learning, and neuroscience, let's chat

Shoot me a DM or come by our NeurReps Workshop on Saturday ✨ @neur_reps

0

4

48

(give rise to symmetries in learning systems - biological or artificial)

1

2

46

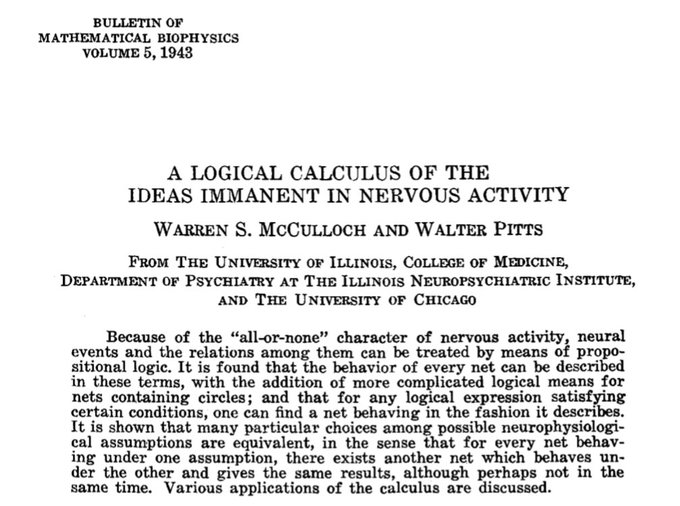

We think this provides theoretical backing for the emergence of Fourier features / irreps in the nice recent papers by @NeelNanda5, @bilalchughtai_, et al in the context of learning modular arithmetic / group composition:

2

2

43

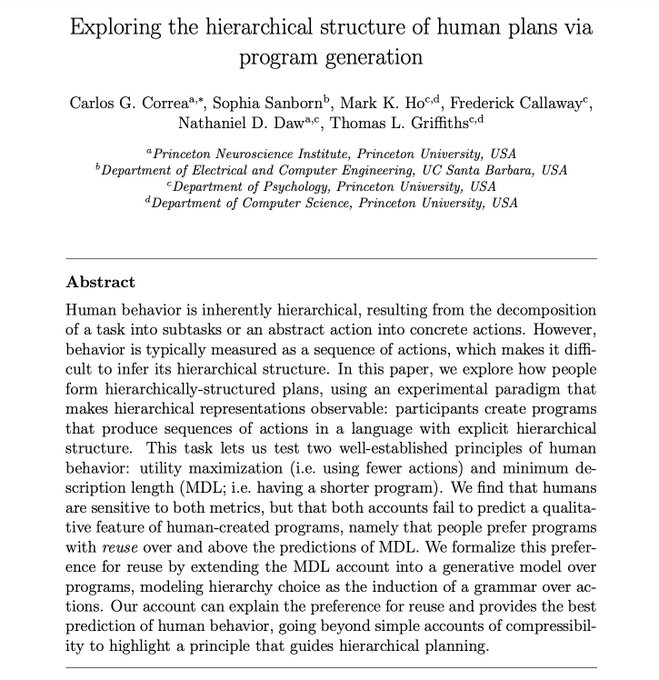

New preprint with @_cgcorrea and colleagues on planning as sampling from an "action grammar"

0

9

44

@patrickmineault For those interested in the intersection of neuroscience, ML, and geometry/topology, check out our repo awesome-neural-geometry 🧠🌐

0

4

39

We're hoping to bring the 2nd edition of the @neur_reps workshop back to NeurIPS this year. Let us know what you want to see at NeurReps 2023 ✨

We are beginning to look towards #NeurIPS2023 & we want to incorporate your ideas!

For the 2023 NeurReps Workshop:

🔣 What topics do you want to see?

👩🏫 Who do you want to hear from?

👥 Have ideas for interactive programming?

Leave comments & submit suggestions below 👇

1

7

37

0

13

41

The deadline for NeurReps has been extended to October 4th AOE 🌟

We have two tracks: Extended Abstracts (4 pg, non-archival) and Proceedings (9 pg, published in PMLR)

Last year saw a fantastic batch of submissions. We look forward to seeing this year's creative work! 🧠

0

8

35

✈️ Amsterdam bound. Thrilled to be participating in the Geometry and Shape Analysis for Neuroscience Minisymposium at #SIAMCSE23 this Monday. Please reach out if you are attending or in the area. Looking forward to great conversations

1

0

34

Christian Shewmake (@cashewmake2) leads our last panel discussion on geometric and topological principles for representations in the brain with Bruno Olshausen, @manusmad, @KrisTorpJensen, and @gkreiman

0

4

33

In the @neur_reps Slack community, we've been compiling our favorite resources on geometry for neuroscience and deep learning. Check it out on GitHub and contribute yours!

1

5

32

Our call for papers is out!

Seeking your latest work on GDL, geometric statistics, and geometric/topological methods for neuroscience.

Hope to see you in New Orleans 💫

0

8

32

@rballeba Stay tuned for a review paper surveying the landscape of topological deep learning architectures with my co-authors @ninamiolane @HajijMustafa and lead author @mathildepapillo! We'll be posting it online next month.

1

3

31

👩💻 Seeking reviewers with expertise in topology, algebra, and geometry for

#ICML2023

🌟 Sign up to help out below 👇

0

9

31

The TAG-ML proceedings are now online. Check out this collection of excellent papers at the intersection of topology, algebra, geometry, and machine learning! 🌐

The proceedings volume of the 2nd Annual TAG-ML workshop @icmlconf is available now at

Thank you to all of the contributing authors and editors - it takes a village!

@neur_reps @mathildepapillo @ninamiolane @Pseudomanifold

0

11

53

0

4

31

Symmetry has the potential to serve as an organizing principle for theories of neural coding, much like it did for 20th century physics. Thrilled to be a part of this exciting workshop organized by @simoneazeglio and @ari_dibe.

It's official!

@BernsteinNeuro accepted our workshop proposal (with @ari_dibe) 🧠

In "Symmetry, Invariance and Neural Representations" we will explore the intimate relationship between the physical world and neural representations.

Join our speakers in this experience!

1

10

52

1

3

30

Fantastic and fascinating new work from the @atolias lab. I've been wanting to see an experiment run like this since the original Circuits thread by @ch402 et al 👏

Does the concept of cortical columns extend to higher-level primate cortex?

Using #DeepLearning & physiology, we found that V4 neurons cluster in columns & form functional groups

Led by @KonstantinWille @kelli_restivo w/ @sinzlab @kfrankelab @alxecker 🧵

6

143

427

0

0

28

@patrickmineault If you're interested: we applied a similar approach to both deep image models and data from the visual cortex and found some interesting results, in line with the concept of "mixed selectivity" / "polysemanticity"

0

0

29

An exciting program on geometry and dynamics in the brain put together by @adelardalan, @tafazolisina, and @timbuschman. Looking forward to discussing these topics with everyone in Mont Tremblant 🍁

0

1

26

Join us on Slack to take part in a growing community at the intersection of applied mathematics, deep learning, and neuroscience 🌐🤖🧠

0

11

23

Another exciting paper on symmetry discovery. Great to see more attention on this problem!

How can #GenerativeAI help scientists discover symmetry from data? Check out our #ICML2023 paper on "Generative Adversarial Symmetry Discovery".

Paper:

Code:

(1/3)

3

42

195

0

1

24

@CimesaLjubica One thing I appreciate greatly is that they start from single-celled organisms that have no neurons at all. An individual cell exhibits an immense amount of intelligence & I think the field would do well to release our fixation on the brain as the locus of intelligent computation

0

2

18

Reviewers needed for the Topology Algebra and Geometry in Pattern Recognition Applications workshop @CVPR! Fill out the Google form below if you are able to help out 👇🙏

0

1

17

And be sure to check out our poster at #ICLR2023: Wednesday May 3rd 11:30AM to 1:30PM in MH 1-2-3-4 #90

I was not able to make it in person, but @Yubei_Chen has transported the poster across the globe 🗺️🙏

16/17

1

0

16

Thrilled to have contributed to this work! Loihi 2 allows engineers to program custom neuron models, facilitating the design of all sorts of exotic spiking neural networks.

“We are trying to establish a new flexible and versatile, general purpose intelligent computing chip,” says Mike Davies, @Intel’s #Neuromorphic Computing Director. Read about Intel’s neuromorphic research journey and the Loihi-2 chip in @ScienceMagazine.

0

15

54

1

2

16

⌛️ One week to the TAG-ML Deadline for #ICML2023

🍩 Get in your work on topology, algebra, and geometry in machine learning!

🎯 Deadline: May 8th AOE

👉

1

4

16

Can't think of anyone more deserving. Congrats to our lab leader! 🏆💥

Honored to be named Hellman Fellow!

Esp grateful to extraordinary lab members @mathildepapillo @AdeleMyersPhD @hopfbifurcator @BongjinKoo @naturecomputes @klindt_david @cashewmake2 who made it happen!

👉We create cutting-edge AI to reveal the geometries of intelligent life🌐🍩

9

7

116

1

0

15

Since I couldn't make it to the conference, please reach out if you're interested in chatting: sanborn@berkeley.edu 📨

17/17

1

1

14

Had fun making generative suminagashi last night with @eangolden and @FluintArt. Awesome project.

0

0

13

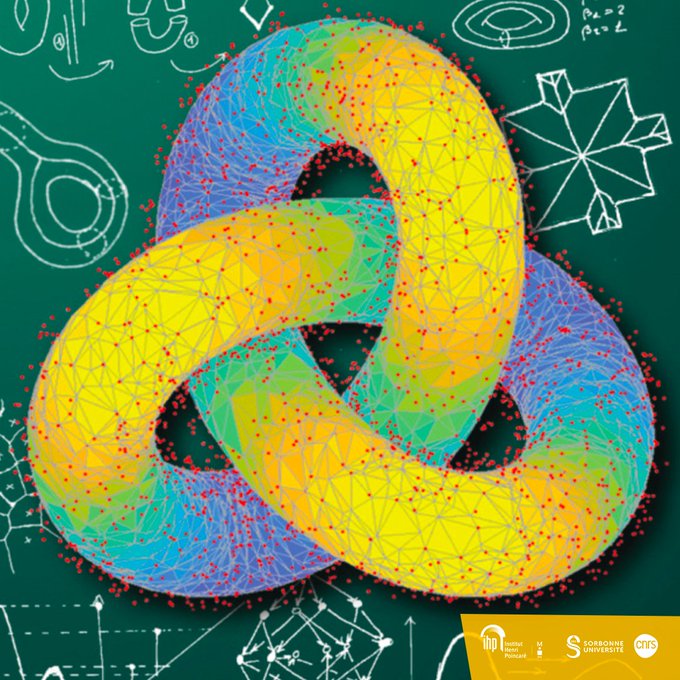

I'll be joining for the @geomstats hackathon. Hope to see some of you in Paris!

📢 We are pleased to announce the beginning of the thematic trimester "Geometry and Statistics in Data Sciences" (GESDA) to be held at #IHP (05/09 - 09/12/2022)

👉 Full programme on

#scientificprogramme #geometry #statistics #datascience #Maths #gesdaihp

0

19

37

1

1

14

Looking forward to speaking in the Mathematics of Neuroscience Symposium on the beautiful island of Crete this summer! 🌊☀️🇬🇷

The call for talk/poster submissions is now open, check it out below 👇

#ICNAAM2022

0

5

13