Louis Castricato

@lcastricato

Followers

3,538

Following

481

Media

422

Statuses

7,593

Math @uwaterloo, RLHF @BrownCSDept, Goosefluencer. x-RS @aieleuther, x-Head of LLMs @stabilityai, x-lead @CarperAI. co-founder @synth_labs. We're hiring.

Providence, RI

Joined September 2017


Pinned Tweet

Come build with us

An *exclusive* from me about a new startup, @synth_labs, which is working to help companies get their AI systems to do what they want (and avoid doing what they don't want). via @technology


Joining @huggingface this summer as a research intern to work on large-scale contrastive learning and retrieval, super excited 🎉


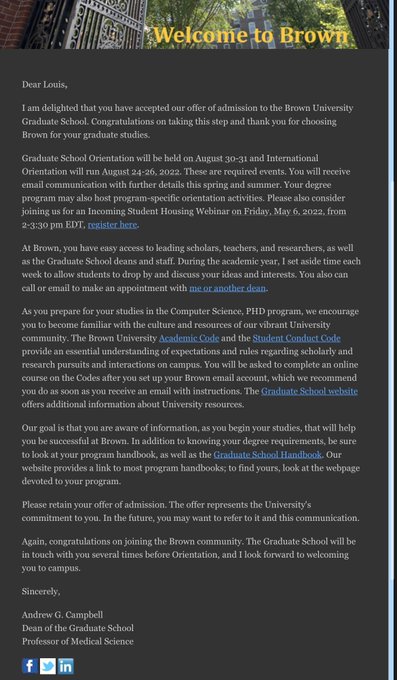

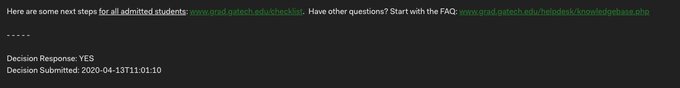

Waited a long time to tweet this 😅 but I'm happy to announce that I am a recipient of the @StabilityAI PhD fellowship that'll be funding my studies at @BrownCSDept until 2026.


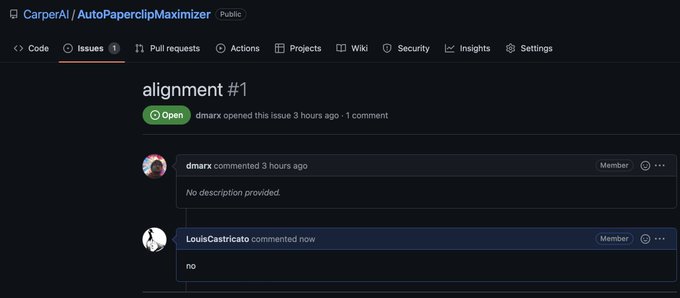

Excited to announce that I've founded a new company! It is dedicated to solving the AI alignment challenge: ensuring AIs are robustly aligned with human intentions & values.

Grateful to have @NathanThinks and @fdesouza as cofounders & Seed funding from @M12vc &


So uh... I'm joining @BrownCSDept in the fall as a PhD student to work on narrative theory and reader models 🎉


As of today I have left my role at Stability and Carper. I wish them the best of luck, and I am excited to join some of my closest friends at @AiEleuther alongside @BlancheMinerva :)


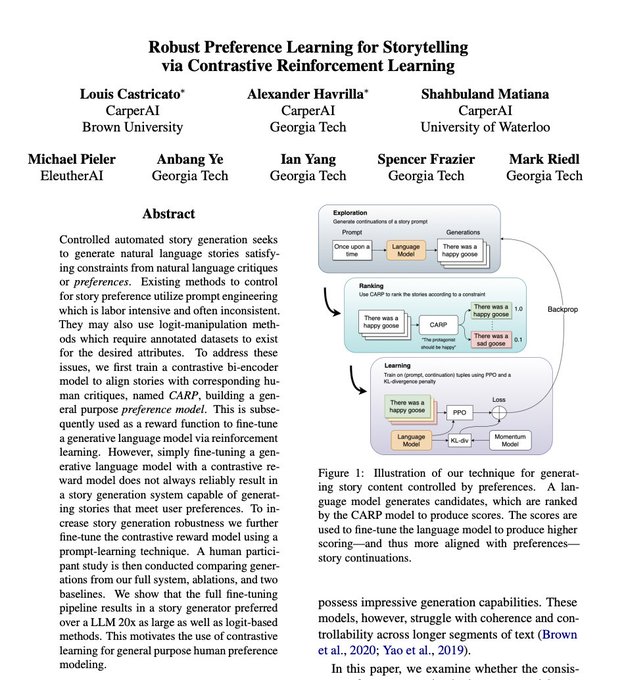

Recent advances with language models have been powered by Reinforcement Learning from Human Feedback (RLHF).

At Carper, we're developing production-ready open-source RLHF tools. Blog post by @natolambert, myself, @lvwerra and @Dahoas1


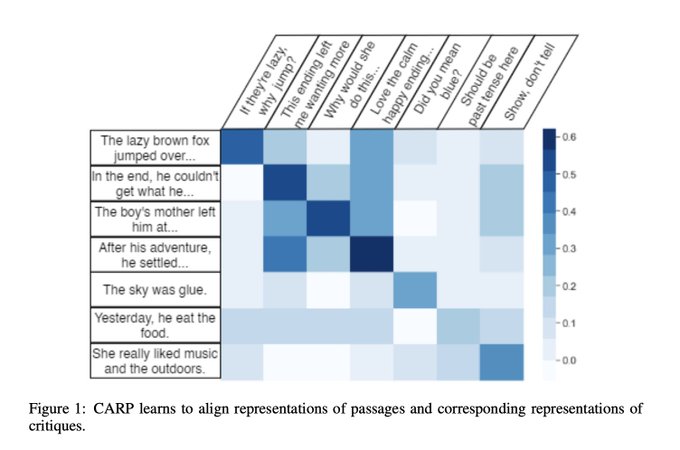

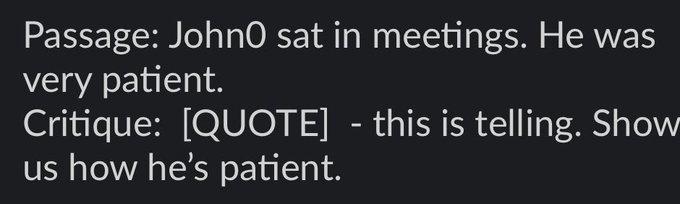

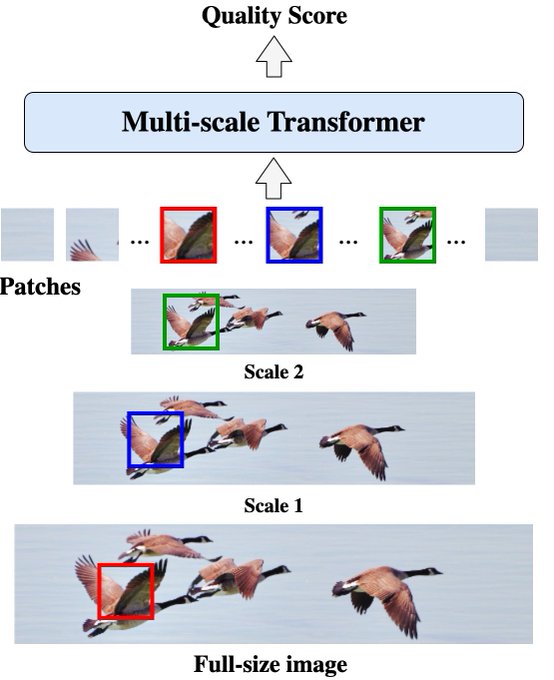

Thanks for the retweet! Are you interested in doing RLHF for story generation but using no human annotation data? Our research direction at Carper has got you covered! We explore the limitations of CLIP-like story critic models à la CARP and show various


@carperai just passed a major milestone a few days ago: it's now a whole year old. I thought we could take this time to recap what we've accomplished over the last year as well as discuss potential directions of the lab. A thread 🧵1/N


we're hiring for all roles.

Open science stuff we're working on:

1) RLAIF for pretraining (we're making open source datasets).

2) benchmarks benchmarks benchmarks.

3) collaborating with @AiEleuther on some awesome projects.

Work with us.


@geoffreyhinton

Yes, if it can’t explain how it works, then how do you know who is to be held liable if something goes wrong? How do you know if its reasoning was flawless but it just so happened to be unlucky circumstances?


Releasing the demo notebook!

You can try CARP-L here, just click "Run all"

You can edit the stories and the critiques. It'll perform prompt softening under the hood using Pegasus.


@mark_riedl

Holy shit, I almost died laughing. I'm waiting for someone to try to recruit a candidate they found via a ouija board for a company board


Shame on @WriteSonic for using Stable Diffusion on their website while (illegally) not mentioning the RAIL license or crediting @StabilityAI. I really hope you guys didn't try to pass that model off as your own to VCs ;)


@colinraffel

“Oh cool, some hard math! The work must be right then.” It’s so hard to get feedback on my mathematics if no one in my field fully understands what I’m actually doing! Everyone gets it at an intuitive level, sure, but I still don’t know if I’m using the right abstractions.


In an effort to mimic the success of @KordingLab's recent neuro event, I decided to host my own pure-math-in-deep-learning event. Here is the calendar event URL. Please RSVP if you intend to attend. The meeting is tomorrow night (Tuesday) at 10PM EST.


@arankomatsuzaki

Absolutely shameless plug:

I wrote about this exact relationship back in April. I think my theory took it in a different direction than theirs as I borrowed more deeply from the cognitive neuroscience side of things. I should contact the authors.


Trying to figure out interest: who would be interested in a @StabilityAI launch event in Boston on October 16th? A dinner party in Cambridge, hosted by yours truly.

Not interested: 96

Interested: 89

Tentative: 38


@ClementDelangue

There's an Eleuther meetup in Dolores Park at 3pm on Sunday if that counts 😆


@omarsar0

Incredibly misleading. We have no idea how ChatGPT was trained or whether it was based on a Chinchilla-like approach. Similarly, it's only 15x faster because inference fits on a single GPU, not because of anything the repository does. This is a thin DS wrapper around a naive PPO implementation.


@omarsar0

For a significantly faster RLHF implementation, check out trlX. It's usually 3x-4x faster than competing implementations, including this one.


Stella is completely right. I am at EMNLP right now and I've already heard two people quote @kchonyc as if his joke was fact.

@kchonyc

@GoogleDeepMind

This is misinformation. The model does not know this information and people will take this as evidence that the numbers are correct. Please delete it and refrain from encouraging people to ask models about themselves


Tiny RLHF ideas!

Now that we can write Tiny Papers @iclr_conf, what should we write about?

I'd like to invite all established researchers to contribute Tiny Ideas as inspirations, seeds for discussions & future collaborations!

#TinyIdeasForTinyPapers

I'll start. Note: bad ideas == good starts.


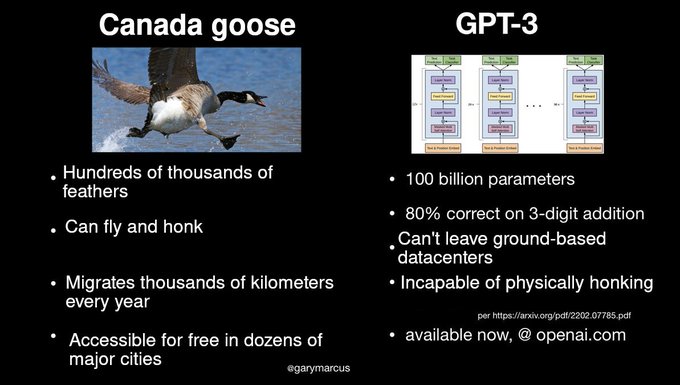

Thank you google, incredibly based and goosepilled.


@_joaogui1

@rodrigfnogueira

@carperai

Didn't wanna reply from the CarperAI account since this is my opinion and not my org's, but we have some preliminary results already confirming that RL makes a noticeable difference in terms of capabilities.

1/2


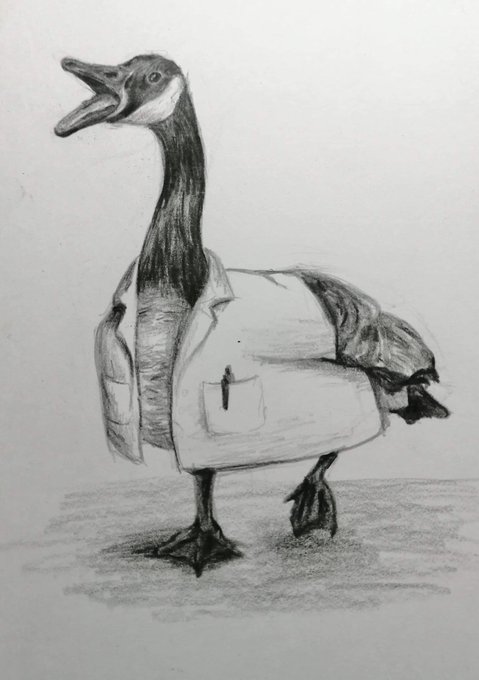

Hi 👋 if you are interested in:

🪿 Goose explainers, discussion, and resources

📃 Sharing the top goose related news

🔬 Learning more about geese

😂 Goose-related memes

Consider following me. ✔️

I have lots of great content planned for the new year! Stay tuned!🎉


@kaixhin

Basically failed Introduction to Neuroscience, then went on to win a best paper award in neuroscience a few months later


New CARP model just dropped!

Model:

Example script:

Half the number of parameters but matches CARP-L performance across the board. Also perhaps subjective but qualitatively the results it produces are much more satisfying ;)


@pixlpa

Fabula vs. syuzhet: the idea that a written story is different from the actual raw story (e.g., a story need not be written to express identical information)


@gabrielpeyre

@jwkritchie

Bill Cook did a whole course on integer programming and solving TSP using DNNs. I recommend you read it! It’s super cool. A lot of people are copying his work nowadays and not crediting him, which really sucks. He did combinatorial deep learning back in 2012 or so.


Come work on fun code projects with us at #EleutherAI! We need an AWS expert! Easy way to get onto a paper with the lovely @TaliaRinger, @moyix and @BlancheMinerva :)


“Towards a Model-theoretic View of Narratives”

@BlancheMinerva, @recardona, @DavidThue and myself describe a foundation of narratology in the vocabulary of model and information theory. 1/N


@arankomatsuzaki

1) algorithm distillation

2) evolution through chain of thought to build out extensive behavior cloning datasets

3) offline RL, which results in better reward/TFLOPS

4) synthetic preferences

5) various (many) ways to improve generation diversity and prevent mode collapse.


I should make a bot that screenshots anyone's #NFT and sells it for less than what they were selling it for (and donates all income)
