Miguel Angel Bautista

@itsbautistam

Followers: 2,541

Following: 192

Media: 42

Statuses: 902

I am a research scientist @ Apple ML Research, seeking a grand unification of generative modeling 🇪🇸🇺🇸

San Francisco, CA

Joined April 2014


Excited for this to be out! Introducing GAUDI: a generative model for 3D indoor scenes. We tackle the problem of learning a generative model of 3D scenes parametrized as radiance fields. This has been a great collaboration across multiple teams at @Apple.

21

114

438

Introducing Generative Scene Networks (GSN), a generative model for learning radiance fields for realistic scenes. With GSN we can sample scenes from the learned prior and move through them with a freely moving camera.

arXiv:

Scenes sampled from the prior:

5

46

230

I find it interesting that the ML community perceives that @Apple "does not publish" or that it "does not contribute frameworks". Anyway, I'm going to start actively sharing my colleagues' work to gently push back on that perception :)

11

10

202

We are looking for residents to join MLR at @Apple for 2023! We are especially interested in candidates with strong expertise (MSc/PhD) in the physical sciences (e.g., physics, climate, bio, chem) and exposure to computational models/ML.

3

40

133

Introducing Manifold Diffusion Fields (MDF), our new work on learning generative models over fields defined on curved geometries. This is joint work with our intern @Ahmed_AI035 (who hasn’t even started his PhD yet!) and @jsusskin at @Apple MLR 🧵

1

28

117

1/n Check out our @Apple research paper "On the generalization of learning-based 3D reconstruction" (or 3D43D)

2

22

110

Congrats to my lead authors at Apple on getting two great papers accepted to #ICLR2024:

- @Ahmed_AI035 with Manifold Diffusion Fields ()
- @xmzhao_ with Dynamic Novel View Synthesis ()

1

12

81

We will be presenting GAUDI at #NeurIPS2022! Excited to chat about this and more generative models for 3D in NOLA. I’ll share more about the code release and checkpoints for GAUDI after the ICLR deadline.


4

11

76

Thanks for sharing

@ak92501

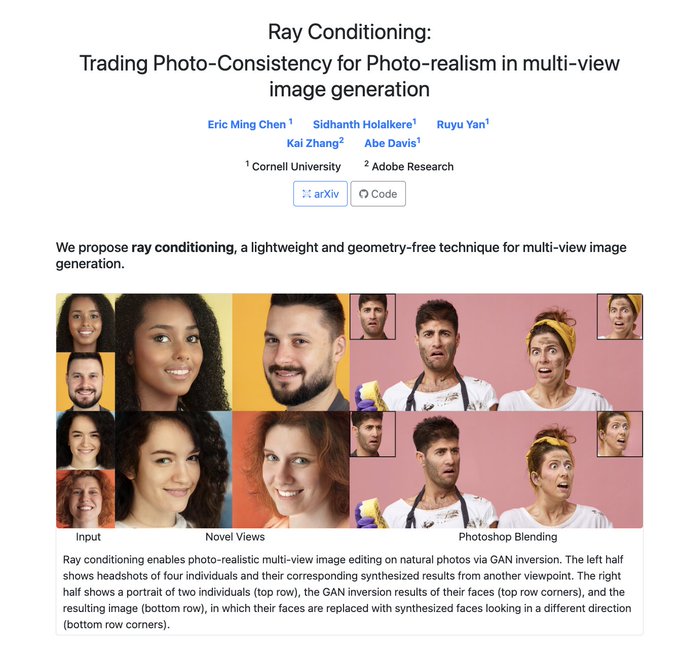

! I’ve always been interested in simple yet effective methods that scale. In this recent

@Apple

paper on learning 3D view synthesis we follow that philosophy.

0

12

75

Congratulations @Ahmed_AI035, well deserved! It was a pleasure to host you at @Apple MLR! What a way to start your PhD! 💪💪💪

1

6

70

Some intern positions are still open in the Machine Learning Research team at @Apple! If you are a PhD student interested in generative models, neural rendering and language-grounded 3D vision, please consider applying through the links or DM me.

4

15

65

Two papers by our ML research team at @Apple were accepted to @ICCV_2021! More details/code coming soon. Congratulations to all my amazing colleagues! @DevriesTerrance @nitishsr @jsusskin @uoguelph_mlrg @mikeroberts3000 @anuragranj @jramapuram

3

4

64

We wrote a blog post summarizing our paper on a generative model for scene-level radiance fields (GSN), to be presented at @ICCV_2021. If this post and this area of research interest you, check out FT/intern opportunities on our team.

0

15

64

Official code release for our Generative Scene Networks @ICCV_2021 paper! We provide code, training data and pre-trained models for you to try out. Check out the interactive exploration notebook; you can move through scenes sampled from the generator!

1

8

55

Here’s a way to speed up training even more. Optimize the parameters of the neural network jointly on a training set of multiple samples (e.g., multiple scenes for NeRF, multiple objects for SDFs). Let the hash tables be specific to each sample. Boom! Now you are amortizing!

1

6

53
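The post appears to refer to hash-table feature encodings of the kind used in Instant-NGP-style neural fields. Below is a minimal NumPy sketch of the parameter split it describes, under loudly stated assumptions: a 1D toy domain instead of 3D scenes, a single-resolution hash grid instead of a multi-resolution one, a linear layer standing in for the shared MLP, and plain gradient descent with hand-derived gradients. All names and sizes (`TABLE_SIZE`, `train`, etc.) are hypothetical. The point is only that `W` and `b` are shared across every sample, while each sample owns its own hash table.

```python
import numpy as np

# Hypothetical sizes chosen for illustration; none come from the original post.
TABLE_SIZE = 64      # entries in each per-sample hash table
FEAT_DIM = 4         # feature channels stored per entry
RESOLUTION = 32      # grid cells over the 1D domain [0, 1)
PRIME = 2654435761   # large odd multiplier used for spatial hashing


def hash_index(idx):
    """Map integer grid-vertex indices to hash-table slots."""
    return (idx * PRIME) % TABLE_SIZE


def encode(table, x):
    """Linearly interpolate the hashed features of the two grid vertices around each x."""
    pos = x * RESOLUTION
    i0 = np.floor(pos).astype(np.int64)
    w = (pos - i0)[:, None]                       # weight of the right-hand vertex
    h0, h1 = hash_index(i0), hash_index(i0 + 1)
    feats = (1.0 - w) * table[h0] + w * table[h1]
    return feats, h0, h1, w


def loss_and_grads(table, W, b, x, t):
    """MSE of the shared linear decoder on one sample, plus hand-derived gradients."""
    feats, h0, h1, w = encode(table, x)
    r = feats @ W + b - t                         # residuals, shape (N,)
    dy = 2.0 * r / len(x)
    dtable = np.zeros_like(table)
    dfeats = np.outer(dy, W)                      # gradient w.r.t. interpolated features
    np.add.at(dtable, h0, (1.0 - w) * dfeats)     # scatter back to the hashed slots
    np.add.at(dtable, h1, w * dfeats)
    return np.mean(r ** 2), dtable, feats.T @ dy, dy.sum()


def train(signals, x, steps=300, lr=0.5, seed=0):
    """Jointly fit ONE shared decoder (W, b) and ONE hash table per sample."""
    rng = np.random.default_rng(seed)
    tables = [rng.normal(0.0, 0.1, (TABLE_SIZE, FEAT_DIM)) for _ in signals]
    W = rng.normal(0.0, 0.1, FEAT_DIM)
    b = 0.0
    history = []
    for _ in range(steps):
        total, gW, gb = 0.0, np.zeros_like(W), 0.0
        for k, t in enumerate(signals):
            loss, dtable, dW, db = loss_and_grads(tables[k], W, b, x, t)
            tables[k] -= lr * dtable              # sample-specific parameters
            total, gW, gb = total + loss, gW + dW, gb + db
        W -= lr * gW / len(signals)               # shared parameters see every sample
        b -= lr * gb / len(signals)
        history.append(total / len(signals))
    return tables, W, b, history


# Usage: three toy 1D "scenes" that share one decoder.
x = np.linspace(0.0, 1.0, 200, endpoint=False)
signals = [np.sin(2 * np.pi * f * x) for f in (1, 2, 3)]
tables, W, b, history = train(signals, x)
print(f"mean MSE: {history[0]:.4f} -> {history[-1]:.4f}")
```

Because the decoder is fitted across all samples, its cost is amortized over the training set; the per-sample tables carry only what is specific to each scene or object.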

I’ll be at the @Apple booth at @NeurIPSConf today from 1-3pm. Come say hi and let’s chat about all things Apple MLR! #NeurIPS23

0

1

46

Attending #NeurIPS2022 after a few-year hiatus! I will be giving an @Apple expo talk about “Generative Understanding of 3D Scenes” on Mon at 3pm, and presenting the poster for GAUDI () on Thu at 9:30am. Will also be at the booth on Tue from 3-5pm. Come say hi!

1

7

45

Happy that this cool work led by @pengsheng_guo has been accepted at #wacv2022. Updated preprint with results on DTU and project page coming soon!

0

6

45

Happy to announce that our paper “On the generalization of learning-based 3D reconstruction” was accepted to @wacv2021!!! I would like to highlight that we got very insightful reviews and comments that will be included in the camera-ready version.


3

8

42

Using @code to ssh into compute, commit code and submit experiments from 38k feet has to be one of the greatest achievements of human history.

1

2

42

If you are attending @NeurIPSConf, check out all the papers we are presenting! Also swing by our booth if you want to hear more about internships and FTE opportunities! I will be there on Weds 11am-1pm to answer all your questions :)

0

6

42

Interested in generative models and neural fields/INRs? Come by our poster where we present “Diffusion Probabilistic Fields”, an approach for learning distributions over fields that can be trained in a single stage. Tuesday morning poster session! #ICLR2023

0

14

40

If you are at #ICML2023, please check out the following Apple papers. I won’t make it in person this year, but please reach out to any of my fantastic colleagues who are around!

0

11

39

Starting the long trip to Kigali! Excited for @iclr_conf and catching up with colleagues! If you want to chat about internship/FT opportunities at @Apple, let me know!

3

2

37

Planning to attend @CVPR? Check out the workshop sessions on Sunday; I will be talking about generative modeling for fields and manifolds at the @_LXAI workshop!

2

9

37

More cool stuff on 3D scene generation! I’ve been waiting for someone to look into 3D consistency for inpainting-style objectives. IMO, having the text prompt be spatially distributed is the next layer of complexity.

0

2

31

Excited to be part of the mentoring panel for @_LXAI at @ICCV_2021. Come by and chat with me to learn about the ML/CV opportunities at @Apple.

0

5

30

I finally have some time to engage in the discussion of our conformer generation paper sparked by @tkipf's tweets. There are 3 things I'd like to clarify:

1) Symmetries are really important for any learning algorithm! Without structure, learning gets harder!

3

2

27

Interesting times ahead: as bigger 3D datasets become available, I predict the community will shift to “3D gen models from the ground up” as opposed to “distilling 2D models into 3D”.

1

2

29

Generative models for 3D are 🔥. This Friday at @ml_collective I will be talking about GAUDI, our approach to learning generative models of unconstrained 3D scenes. Check if you are interested in attending. Really looking forward to a great discussion!


0

3

29

This is the best explanation of the Laplace operator for geometry processing that I have seen so far. @JustinMSolomon

0

4

27

Generative Scene Networks was accepted at @ICCV_2021! Congratulations @DevriesTerrance and collaborators @nitishsr @jsusskin @uoguelph_mlrg! Excited for what’s coming next :)

2

4

28

NeRF papers have become quite frequent nowadays (and I predict they will become even more so). Out of all the papers that have come out recently, to me this is the one that points in the most interesting direction so far.

1

0

27

Making progress in breaking stereotypes around Apple and ML research ☺️ Really looking forward to people trying MLX!

0

4

26

More work from MLR at Apple! Check out this fantastic paper by @AggieInCA and team. How can we effectively evaluate SSL models without requiring labels? :)

0

0

25

What would happen if we pretended all samples were neural fields in diffusion generative models? I’ll be talking about work that we have been doing in this direction at #Apple MLR on Sunday at 1:30pm at the @_LXAI workshop! #CVPR2023 #neuralfields

0

8

24

Congratulations @Ahmed_AI035, this is so exciting! It was a pleasure to host you for your first internship at @Apple even before you started your PhD!!! Can’t wait to see all the cool stuff you will do!

0

2

23

The great @YuyangW95 and I will be presenting this tomorrow at @genbio_workshop in the morning poster session! Come learn why a non-SE(3)-equivariant model gets state-of-the-art performance in conformer generation!

0

4

20

Happy to get an outstanding reviewer award from @ICCV_2021! I will be donating my free registration to a researcher from an under-represented group who wishes to attend. Will share details soon.

2

0

20

MDF is an oral at the Diffusion Models workshop tomorrow at @NeurIPSConf! Catch @Ahmed_AI035’s talk (via Zoom because, well… visas), and @YuyangW95 and I will be around in the poster session! Come say hi and let’s chat about practical diffusion models on manifolds!


0

5

19

Excited to catch up with folks at @CVPR and chat about generative models for functions (including our recent MDF work)!

1

1

18

Cool stuff! Although I wonder how the training speed/accuracy would compare if you replaced the 27 SH parameters per voxel vertex with a single linear layer of the same dimension. IMO that’s the right baseline to compare with. Are SH params easier to learn?

0

2

19
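For context on the "27 SH parameters": real spherical harmonics up to degree 2 give 9 basis functions per colour channel, so 9 × 3 = 27 coefficients per voxel vertex. A minimal sketch of how such coefficients turn into a view-dependent colour, assuming a Plenoxels-style setup (the post does not name the paper), the sign convention common in NeRF-style code, and no output activation. Function names are hypothetical.

```python
import numpy as np

# Real SH normalization constants for degrees 0-2 (sign convention as in
# common NeRF/Plenoxels-style code; other libraries may flip some signs).
C0 = 0.28209479177387814
C1 = 0.4886025119029199
C2 = (1.0925484305920792, -1.0925484305920792, 0.31539156525252005,
      -1.0925484305920792, 0.5462742152960396)


def sh_basis_deg2(d):
    """Evaluate the 9 real SH basis functions (degree <= 2) at direction d."""
    x, y, z = d / np.linalg.norm(d)
    return np.array([
        C0,
        -C1 * y, C1 * z, -C1 * x,
        C2[0] * x * y,
        C2[1] * y * z,
        C2[2] * (2.0 * z * z - x * x - y * y),
        C2[3] * x * z,
        C2[4] * (x * x - y * y),
    ])


def view_dependent_rgb(coeffs, d):
    """Map 27 per-vertex parameters (3 colour channels x 9 SH coefficients) to RGB."""
    coeffs = np.asarray(coeffs, dtype=float).reshape(3, 9)
    return coeffs @ sh_basis_deg2(np.asarray(d, dtype=float))


print(view_dependent_rgb(np.ones(27), [0.0, 0.0, 1.0]))
```

Note that the colour is already a linear function of the 27 coefficients, with weights fixed by the viewing direction; the question in the post is whether a learned linear map of the same size would train faster or more accurately than this fixed basis.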

I will be visiting @CMU_Robotics next week to talk about generative models of fields in the VASC seminar. Excited to chat with the awesome faculty and students! If you are around and want to chat, please ping me :) Thanks for the invite, @FerranDeLaTorre!

2

4

18

Diffusion models are notoriously slow at inference. Check out this amazing piece of work by great colleagues at @Apple looking at the problem of distilling diffusion models for single-step sampling. Congrats @D_Berthelot_ML and team!

0

2

18

Very exciting times ahead for the interplay of powerful generative models and 3D data! Shoutout to everyone involved in this effort: @pengsheng_guo @samiraabnar @Waltertalbott @toshev @ZhuoyuanChen @laurent_dinh @zhaisf @hanlingoh @jsusskin.

1

0

18

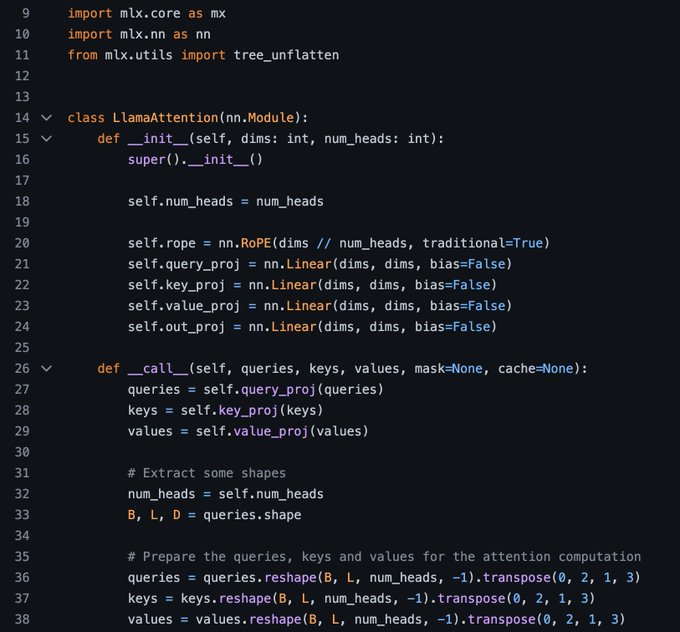

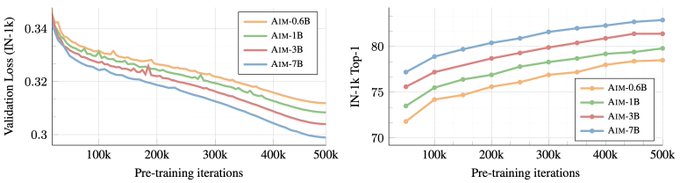

Data scale + transformers + autoregressive objective is the gift that keeps on giving! Now also in vision :) What incredible work led by @alaaelnouby and team from Apple MLR. Check out the repo with checkpoints and bindings to MLX/JAX!

0

1

17

Publication link also available at:

Project page with some additional visualizations now online at (source code will be available in the next few weeks)

0

5

16

I’ve had several situations where a paper wasn’t ready to submit by the conference deadline. This usually causes added stress (especially for PhD students/interns); being able to submit when *work is ready* is going to be great for the community.

Today, @RaiaHadsell, @kchonyc and I are happy to announce the creation of a new journal: Transactions on Machine Learning Research (TMLR)

Learn more in our post:

54

691

3K

0

0

17

Check out our new work on learning generative models of functions on graphs for molecular conformer generation, led by the amazing @YuyangW95! A few things that I found really exciting about this work:

1

3

16

Always fun to go back to the origins! More so if it’s to talk about generative models! Thanks for hosting me, @SergioEscalera_!

0

1

14

Check out this new work led by @bogdan_mazoure and @waltertalbott using diffusion models to capture the distribution of the value function! IMO this is a very interesting way to think about how we can leverage large probabilistic models in RL settings.

Latest preprint from @Apple MLR - we use conditional diffusion models + Perceiver I/O to learn the policy's state visitation and the value function on hard offline robotic tasks. Work with @waltertalbott, @itsbautistam, Devon, Alex and @jsusskin.

0

15

103

0

1

13

Want to take generative modeling of the 3D world to the next level? Check out and come help us get there.

0

1

14

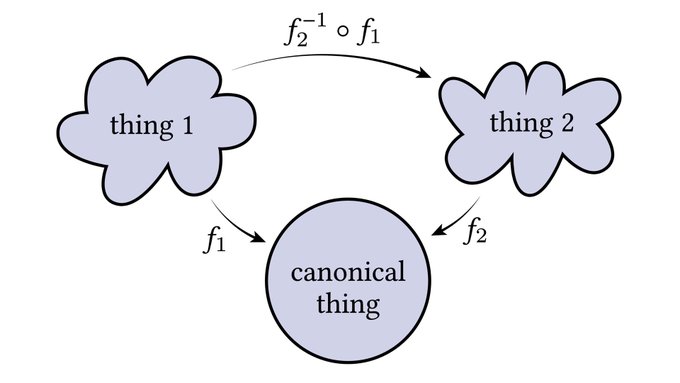

The fundamental problem of unsupervised correspondence learning is often formulated using this framework. Things become trickier when f^{-1} is not defined and needs to be approximated; a couple of cool papers deal with this:

1

1

13

We will be presenting GSN at #ICCV2021, session 11A (today at 3pm PDT). Join @DevriesTerrance and me if you want to chat about generative models for 3D scenes and what the future holds for these types of models. #apple #generativemodels #computervision

0

3

13

How about an open source framework for ML development on Apple silicon? :)

0

1

12

Catch me and @YuyangW95 at @NeurIPSConf next week! Happy to chat about anything from generative models and geometric deep learning to applications in scientific domains, vision and 3D. Also happy to chat about internship and FTE opportunities! Come join Apple MLR!

I’ll be attending #NeurIPS2023 next week. Please feel free to reach out if you want to chat about generative models, AI4Science, geometric deep learning, and more!

2

0

18

0

1

12

Excited to participate in the @_LXAI mentorship hour at #NeurIPS2021 alongside incredible mentors. Join and say hi!

Let's all welcome the Mentor Representatives from the @_LXAI Sponsors for the workshop this year co-locating with #NeurIPS2021!! Today at 4:30 PM PST during the mentoring hour! Join here 👉 #LXAI #MentoringHour #NeurIPS2021

0

2

8

0

3

12

The DFN-2B dataset used to train AIM is also available on HF (by the great @Vaishaal)

0

2

11

Text-to-3D from amazing colleagues at @Apple!! Congratulations @pengsheng_guo 🔥🔥🔥

0

1

10

Check out the fantastic video explanation by @artsiom_s of a couple of papers on self-supervised learning that we worked on together a few years ago, before CPC was cool. Those were good times, @artsiom_s!

My new video on self-supervised representation learning (also easy to understand for beginners). I explain CliqueCNN, which builds compact cliques for classification as a pretext task, and I discuss other self-supervised learning approaches. @itsbautistam

3

19

95

0

3

10

I love seeing more papers on scene generative models. I believe that to make substantial progress in RL we need very powerful world models. We are just seeing the beginning of what these models are capable of!

Pathdreamer: A World Model for Indoor Navigation, @kohjingyu et al. ()

A neural network hallucinating indoor scenes from a single given observation in a previously unseen building.

Possibilities are endless:

5

72

347

0

0

10

Check out this opening if you are interested in foundation models and embodied AI! @alexttoshev’s team is doing cool stuff!

0

1

10

@karpathy How about "These violent delights have violent ends - Geoff Hinton". I burst into laughter :D

0

1

9

Nice! I feel fast geodesic distance extraction could be useful as GT for learning-based approaches to correspondence modeling.

Point cloud code releases in #geometrycentral C++, with Python bindings on pip!

Fast computation of geodesic distance, nearest-geodesic-neighbor interpolation, parallel transport, and the logarithmic map.

(C++)

(Python)

(1/4)

8

118

530

0

2

9

On Gemini day you can also learn how to scale your EMA! From great colleagues at Apple MLR

Excited to be at NeurIPS in New Orleans next week and hope to see many of you there! On Wednesday, my co-authors (@jramapuram, @PierreAblin, Tatiana Likhomanenko, Xavier Suau, Russ Webb) and I will present our 🥳spotlight-awarded🎉 work “How to Scale Your EMA”.

1

14

49

0

0

8

Thanks for having me, @ml_collective and @savvyRL! I hope folks enjoyed it as much as I did. All questions and comments were really insightful!

2

1

9

Sharing this because I believe this work deserves more attention! I really enjoyed the clean and elegant formulation of the problem from the lens of interpolating densities.

Our paper on a general framework for efficiently building continuous normalizing flows between any distributions has been accepted at @ICLR 2023! Here are some flow-flowers of interest: @DaniloJRezende @KyleCranmer @FrankNoeBerlin @ylecun @wgrathwohl

Paper:

5

39

281

1

0

9