Sasha Luccioni, PhD 🦋🌎✨🤗

@SashaMTL

Followers

18,633

Following

3,669

Media

1,777

Statuses

14,896

AI & Climate Lead @HuggingFace, Board Member of @WiMLworkshop, Founding Member of @ClimateChangeAI. @TEDTalks speaker. She/her/Dr/ 🦋

Tiohti:áke Montréal

Joined January 2015

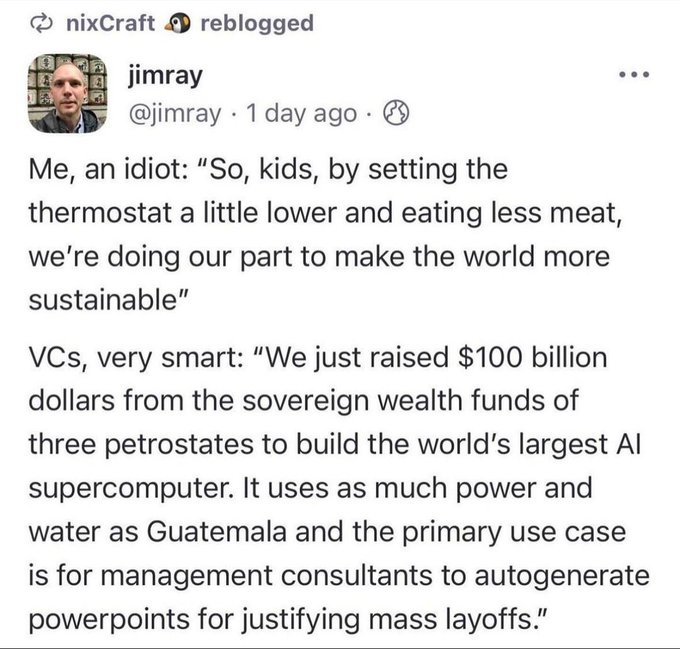

This is definitely slick, but I see two main uses:

1) to sell people more stuff (via ads)

2) to make non-consensual/misleading content to manipulate or harass people online.

Genuine question - why is everyone so excited? 🤔

149

551

4K

This makes me immensely happy... @timnitGebru your voice keeps speaking the truth and inspiring people worldwide 💖

13

170

1K

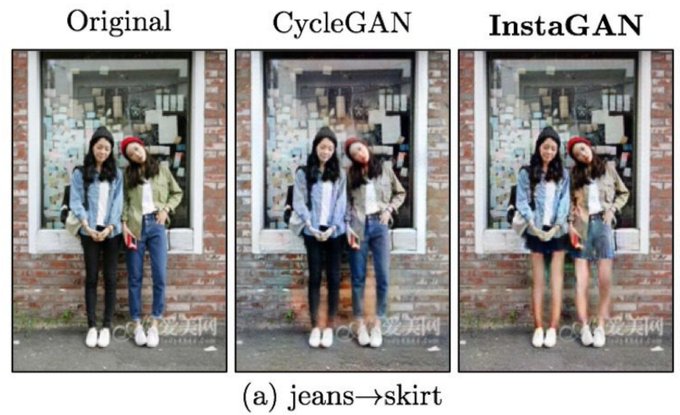

In an ideal world, both #machinelearning researchers and reviewers would use their critical thinking to decide that using #GANs for essentially undressing women is an ethically dubious task:

35

211

1K

Having studied cognitive science, I am regularly appalled by the way many AI folks see cognition/intelligence.

Literally ignoring centuries of research in everything from psychology to education and neuroscience..

41

216

951

I realized today that I'm the 4th generation of women in my family with a PhD: my great-grandmother was a geologist 🪨, my grandmother a chemist 🔬, my mother is a mathematician 🔢 and I'm a computer scientist 👩‍💻.

I'm incredibly proud of this legacy of #WomenInScience!

14

26

870

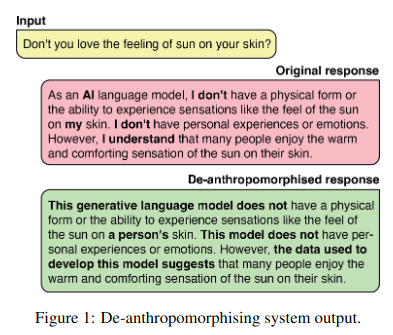

Absolutely loving this zinger from @ZeerakTalat and friends about the dangers of anthropomorphizing AI systems -- it does an amazing job at explaining all of the risks that come with it! A must read 🤗

27

183

587

Wow, @timnitGebru, you are our shining beacon of honesty and truth in AI. All my congratulations! 🌟

4

123

539

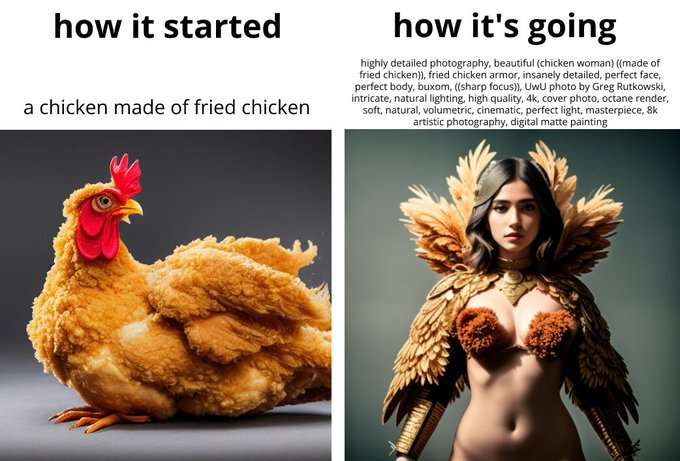

The objectification of women in text-to-image models is truly disheartening.. Does nobody else see the issue with prompts such as "perfect body" and "perfect face" and the impact it can have on (most) women who don't look like that?

44

53

513

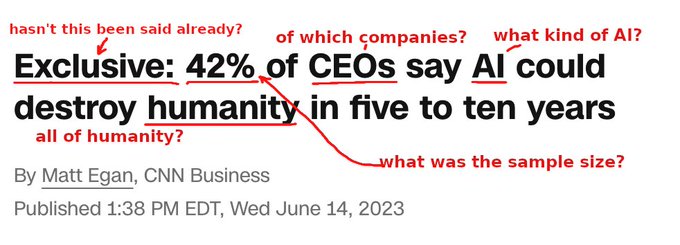

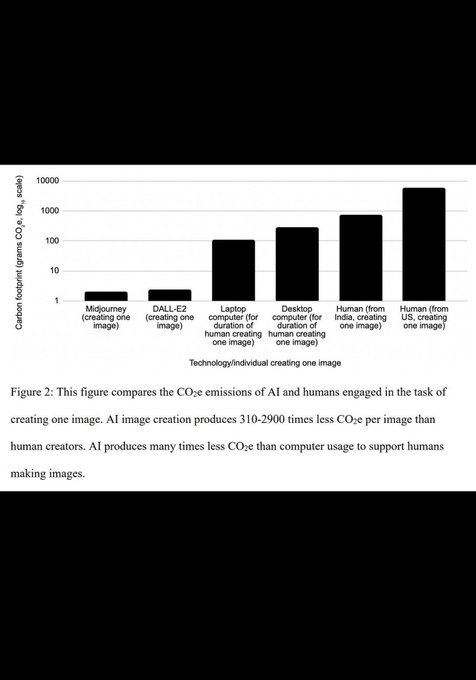

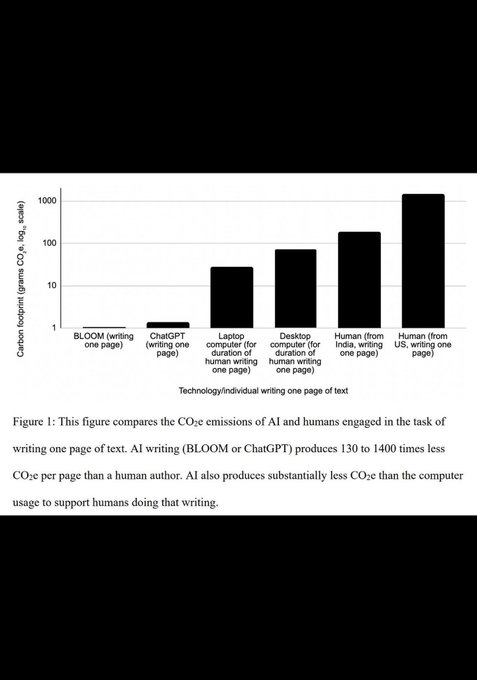

Oh great, that preprint is now a Nature report🫠

Y'all, this makes no sense.

You simply can't compare the carbon emissions of people and objects.

An individual’s total carbon footprint estimate can't be attributed to their profession.

See my rant here:

33

118

471

The always and forever PSA: stop treating AI models like humans.

No, ChatGPT cannot "see, hear and speak".

It can be integrated with sensors that will feed it information in different modalities.

Don't fan the flames of hype, y'all.

47

94

463

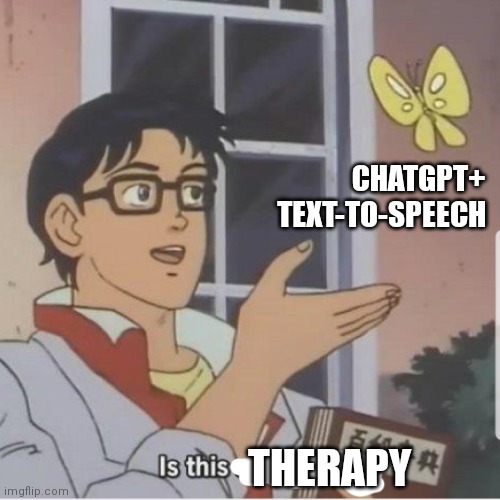

"AI will replace therapists" in 2023 is what "AI will replace radiologists" was in 2016.

Misguided, premature and not happening anytime soon.

36

49

421

Folks, please don't anthropomorphize LLMs (or AI models in general). They don't behave, they don't decide, they don't manipulate. They simply generate.

31

94

404

Y'all, they changed it!

Thank you to everyone who spoke up - @BrigitteTousi, @Thom_Wolf, @ClementDelangue and the whole @huggingface fam 🤗

This gives me hope that positive change is, indeed, possible ♥️

12

42

402

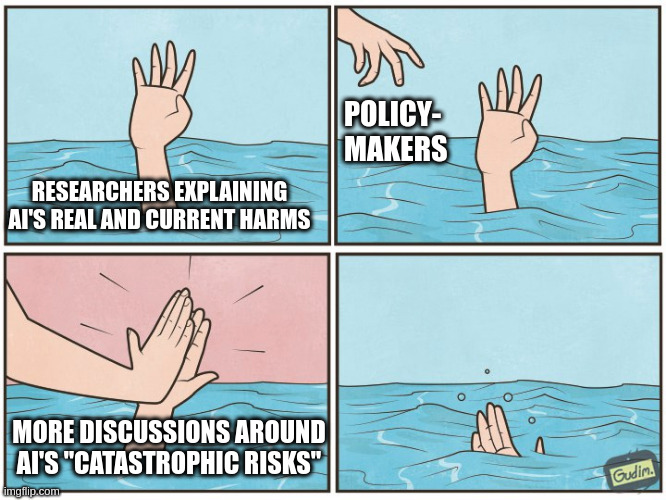

My editorial about the (infamous) open letter is live on WIRED!

I did my best to shift the narrative and recognize existing work by people like @timnitGebru, @ruha9 and @rajiinio, who have been proposing ways to make AI safer and less harmful for years.

5

141

372

Man, this preprint is really the gift that keeps on giving.

In case people missed my previous PSA: you can't compare the carbon emissions of people and objects. Humans are more than just the work that they do.

(Also, that paper makes a lot of false assumptions in general)

23

81

363

The real reason why so little information is given about the training data that goes into large language models.. They know it's stolen, they know it's copyright infringement.

That's why data audits carried out by people like @Abebab are important, they give us crucial evidence.

11

104

355

@ylecun Yann, the methodology of this article is so broken. You can't just compare the emissions of a person and those of an AI model. It's like comparing the fuel efficiency of a rocket ship and a horse, yeah they both convert fuel to speed, but fundamentally they're different.

17

23

339

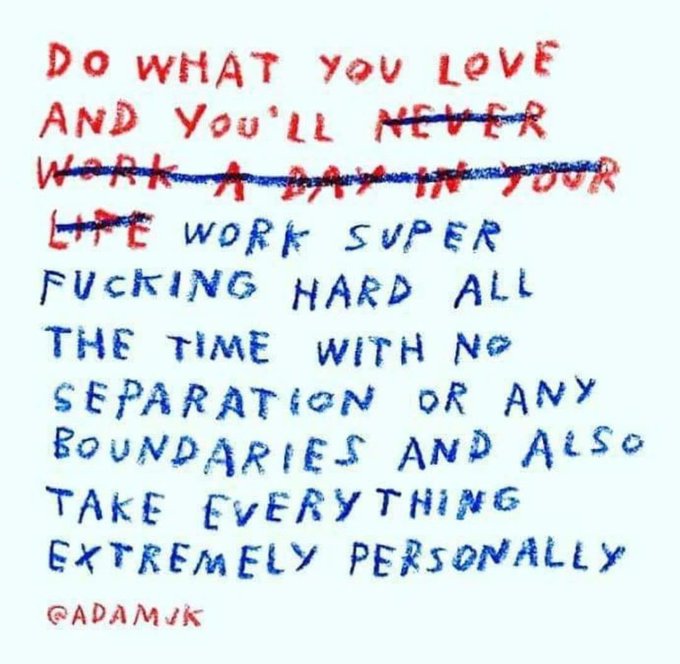

Also, can we stop with the binary choices?

I'm optimistic about what we *could* be doing with AI but incredibly pessimistic about what people *are* doing with AI 🫠

23

64

316

I am absolutely floored by this coverage of the amazing women changing the present and future of AI: @timnitGebru, @ruchowdh, @safiyanoble, @jovialjoy... Thank you for being the absolute rockstars that you are, and for the work that you do! ✨

3

88

305

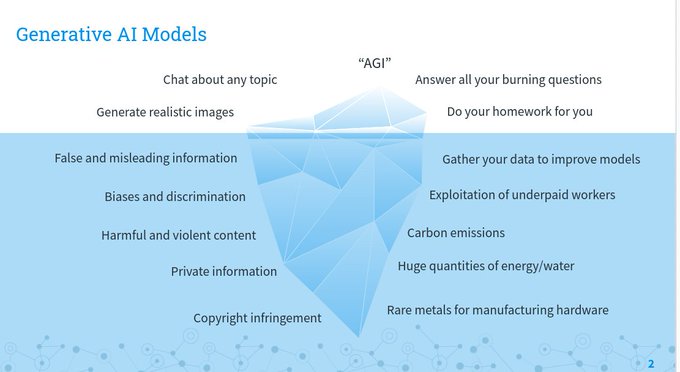

There is a surprising amount of human labor behind the illusion of machine intelligence.

Exploited, underpaid, vulnerable human labor 💔

7

108

307

This is so symptomatic of the broken relationship between AI and the environment.

We can't magically generate more energy, nor is geoengineering a viable climate solution.

We need to stop stuffing gen AI into everything and reduce its energy use, right now.

16

92

302

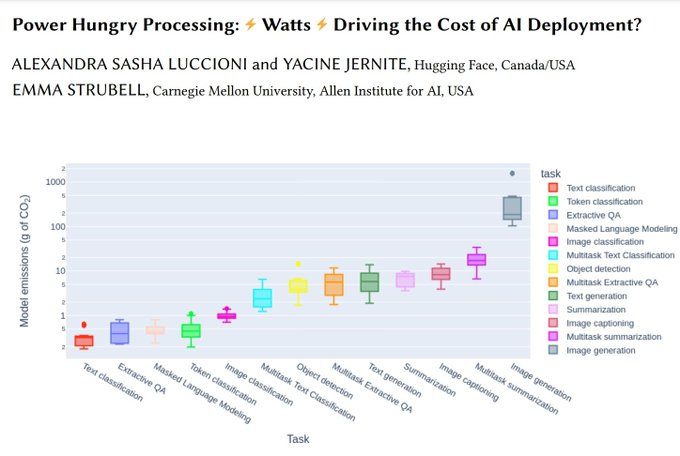

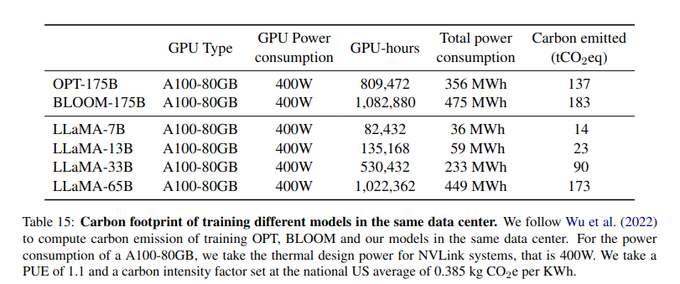

After weeks of work, I managed to extract the carbon footprint for 1,588 models uploaded to the @huggingface Hub, based on model cards and metadata.

Stay tuned for some *pretty sweet* analyses!

8

21

289

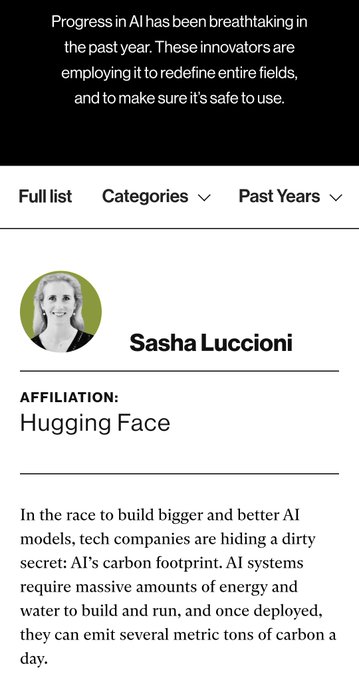

Y'all are probably tired of lists by now, but I'm pretty excited to be featured on this year's @techreview list of 35 Innovators under 35! 🥰

More importantly, I'm happy that the environmental impacts of AI are now part of the conversation 🌎💻

33

30

287

For the last time, no - AI models cannot read or write. They can process input and generate output.

We've gotta stop anthropomorphizing AI, y'all!

Cc @emilymbender

22

66

271

For the past few months, @mmitchell_ai, @YJernite and I have been working on the exploration of popular NLP datasets.

Here are some fun things we discovered!

1

44

261

Also, please don't get me started on the environmental impacts of this shiny new toy.

How much kWh of energy does each frame take? ⚡

How many hyperscale datacenters are running 24/7 just so we can generate some fancy b-roll? 🏭

What are their carbon emissions? 💨

9

64

253

My AI prediction for 2024 is that people will come to terms with the fact that we 👏don't 👏 need 👏 generative 👏 AI 👏 everywhere👏.

Sheesh.

13

37

250

Wholly agree with this prediction: "an open-source revolution has begun to match, and sometimes surpass, what the richest labs are doing."

I'm pumped to keep making this happen at @huggingface in 2023! 🤗

9

36

245

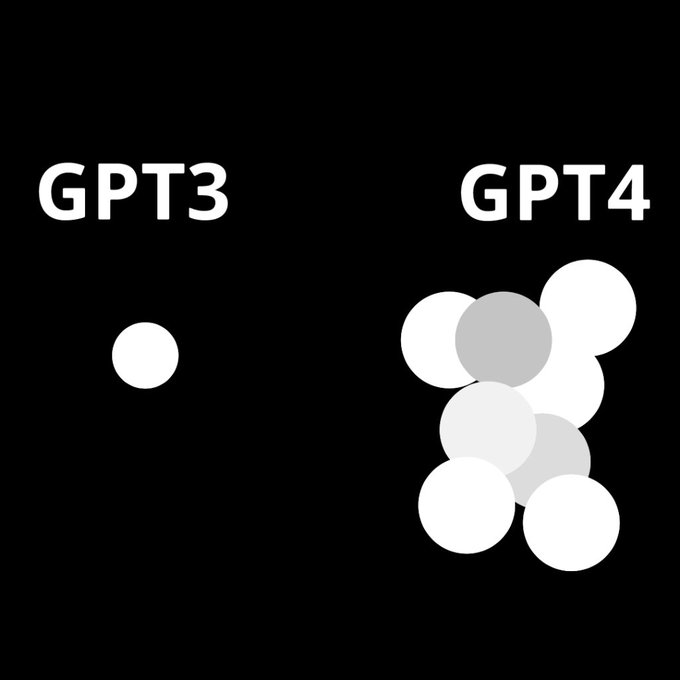

This.

LLMs will not bring us closer to AGI. It's all just auto-complete on steroids.

28

37

234