Robert Dadashi

@robdadashi

Followers: 1,793

Following: 428

Media: 8

Statuses: 170

Reinforcement learning research @GoogleDeepMind, Gemma post-training lead

Paris, France

Joined September 2014


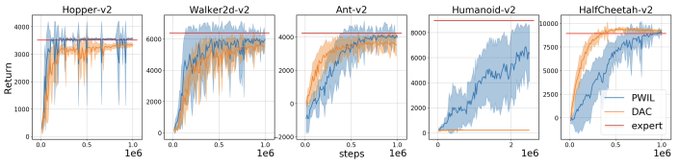

We have just released the code for our latest paper on Imitation Learning: PWIL. It’s simple and concise, yet performs strongly on MuJoCo environments. The code builds on @deepmind’s Acme (which is great!)

2

13

71

During my @GoogleAI residency I have been fortunate to work with my research mentor @marcgbellemare and other great collaborators on projects that I am weirdly excited about. This led to 2 papers accepted at ICML: (1/2)

2

4

67

I am so proud to see Gemma released today! I have had a fantastic time working on post-training and RLHF with an amazing team. Cannot wait to see what the community builds with these models!

3

9

57

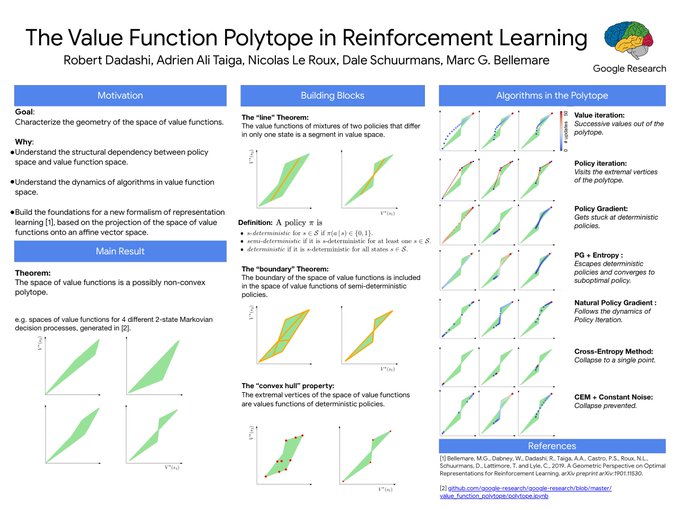

I will talk about the *mysterious* polytopes in reinforcement learning at #ICML2019, Tuesday June 11th at 5:15pm in room 104 and at 6:30 at poster 119.

1

6

50

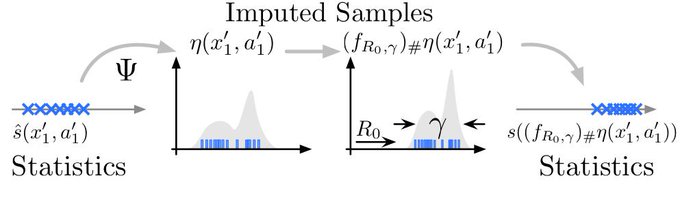

Proud to be part of this project tackling how we should think of statistics propagation & derive new algorithms in the context of distributional reinforcement learning

Mark Rowland's distributional RL paper on samples and statistics (& potential mismatch) is out -- big step towards understanding the method w/ @wwdabney @RobertDadashi S. Kumar R. Munos

0

23

80

0

3

34

This was done with my great collaborators: @piergsessa, @suryabhupa, @leonardhussenot, @johanferret, @olivierbachem and of course the Gemma team.

11/11

2

1

35

Gemma 1.1 is making a big jump on the Arena leaderboard!

1

4

30

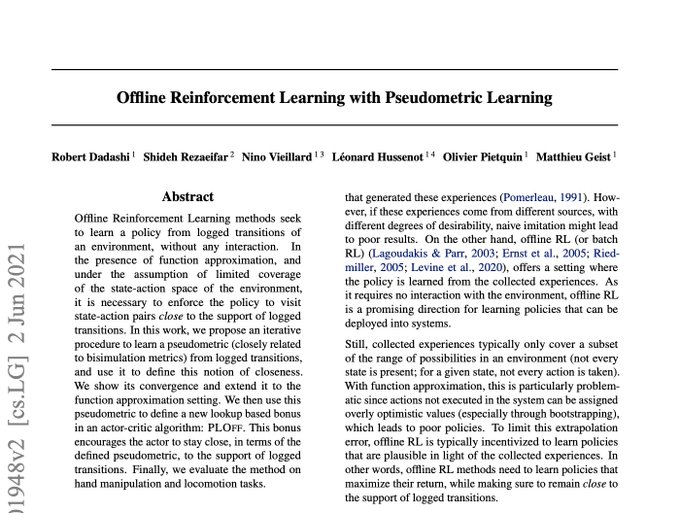

New paper to appear at #ICML2021: Offline Reinforcement Learning with Pseudometric Learning (PLOff)

PLOff first learns a metric in the spirit of bisimulations using offline transitions, and uses it to derive a bonus preventing OOD action extrapolation

1/n

1

7

18

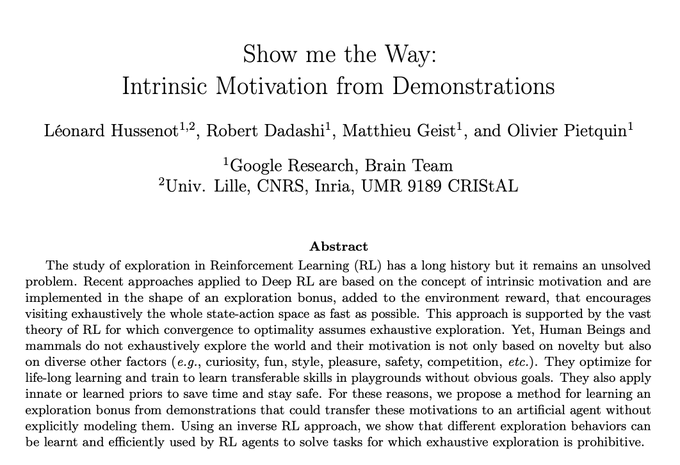

Exhaustive exploration makes sense when we have no prior about an environment (which most novelty-based exploration bonuses assume). In this work, we use demonstrations to derive an exploration bonus, and show that we can extract the priors of the demonstrator.

1

3

18

It has been an absolute blast to lead this effort with my wonderful contributors: @suryabhupa, @piergsessa, @leonardhussenot, @bshahr, @sabelaraga, @OlivierBachem, @johanferret, @alexandrerame, @mw_hoffman, @abefriesen, @charlinelelan, Sertan, Piotr, Nikola, Danila,

10/n

1

2

18

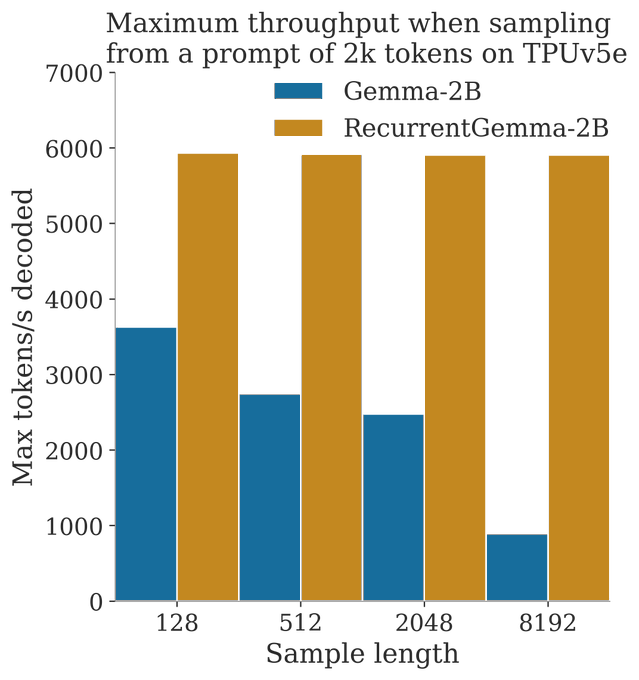

It was great to collaborate with the RecurrentGemma team on post-training (with again, a colossal effort from @piergsessa)! I am so excited to see the applications that RecurrentGemma opens :)

0

1

13

I recommend reading this, thanks @natolambert! Here are a few additional thoughts:

1

1

12

Although I received a "Visual Intimidation Award" for most equations (thanks @MILAMontreal), there will be no equation in my talk.

2

0

7

Congrats @aalitaiga!

Congrats to my PhD student @aalitaiga for winning Best Paper Award at the Exploration in RL Workshop at ICML19, "Benchmarking Bonus-Based Exploration Methods on the Arcade Learning Environment"! Talk today, 11:30, Hall A. #ICML2019 #ERL19 @AaronCourville @cholodovskis @LiamFedus

4

15

124

0

0

6

We recover near-optimal expert behaviour on all tasks considered. Joint work with my great collaborators: @leonardhussenot, Matthieu Geist and Olivier Pietquin! 6/

0

0

5

@EugeneVinitsky In some way you can think of a reward model learned from preferences as a discriminator (and so RLHF really means IL). The SFT phase is what a lot of IL methods do in practice: start from the BC policy

1

2

4

Analytics have changed the game in basketball or baseball. Very excited to see the impact they will have on football.

.@Polytechnique & @PSG_English launch the “Sports Analytics Challenge”: an exclusive project that invites candidates worldwide to take part in the #datascience & sports performance #challengexpsg ⚽️. Consult the challenge website for more information:

0

4

9

0

0

3

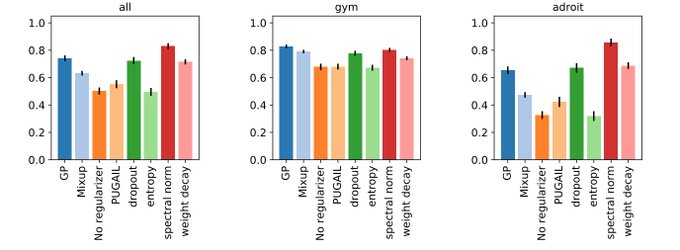

Check out our new paper: an extensive study of the experimental and design choices of GAIL-like methods!

0

0

3

@RorySmith "There is no plan that contains Messi." Wrong, there is a one-man plan named @nglkante.

0

0

3

This is joint work with my great collaborators @shidilrzf, @leonardhussenot, Nino Vieillard, Olivier Pietquin and Matthieu Geist.

n/n

0

0

2

@jishanshaikh41 I learned from David Silver's online lectures from his RL course at UCL. You can also check out the RL course from @mlittmancs and @isbellHFh on Udacity.

0

0

2