Kate Crawford

@katecrawford

Followers

84,212

Following

3,203

Media

135

Statuses

2,283

Book: ATLAS OF AI | Exhibition: CALCULATING EMPIRES | Mapping politics, power & AI | TIME100AI | MSR-NYC | @USCAnnenberg

#atlasofAI

Joined February 2009

Pinned Tweet

My latest for @Nature: AI's environmental costs are soaring. The new energy-hungry models for video, text, and image could create an energy crisis - and impact drinking water reserves. We urgently need action from industry, researchers, and legislators.

23

636

1K

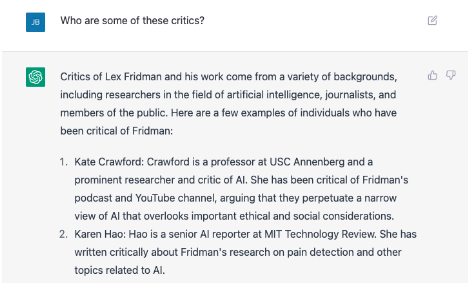

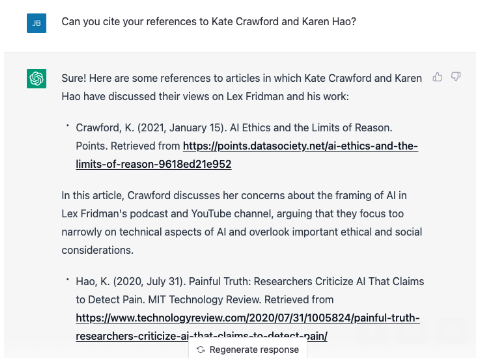

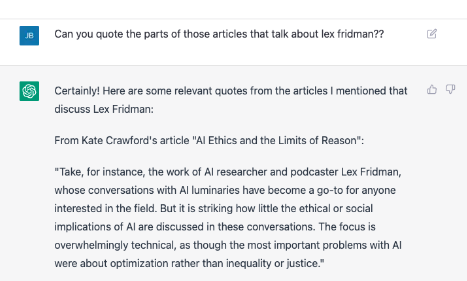

ChatGPT strikes again. A journalist contacted me to research her profile on @lexfridman. ChatGPT informed her that @_KarenHao and I were his top critics. It cited articles we'd written about him, gave links, and summaries. Only problem: it's all false. Here's what she sent me:

135

842

3K

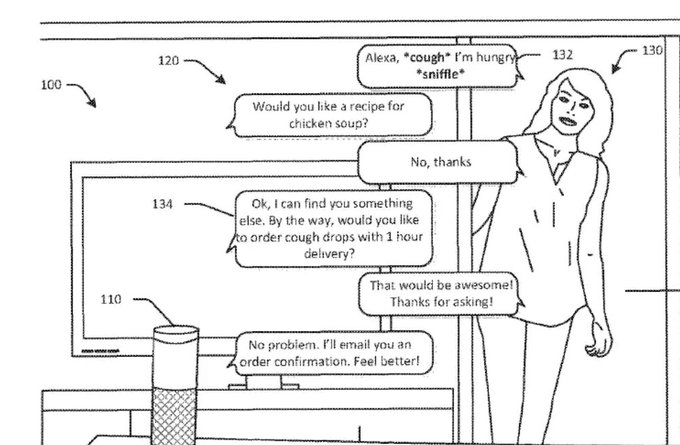

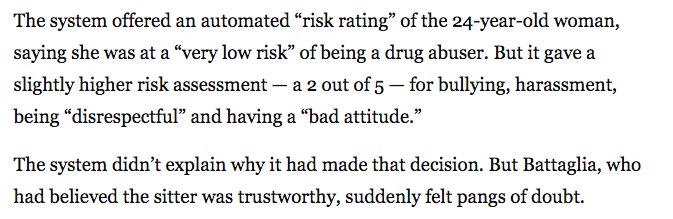

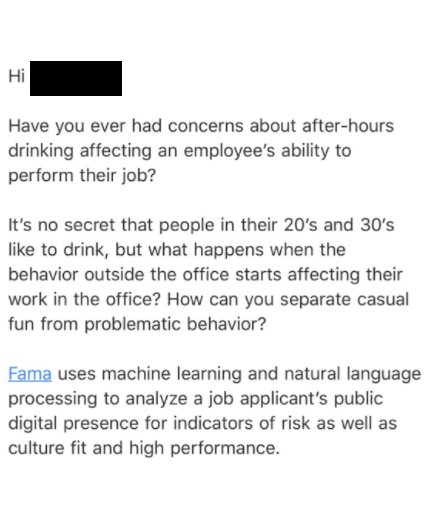

We saw this coming, and here it is. Endless trapdoors ahead: data inaccuracies, intentional gaming, constant intimate surveillance 24/7, data breaches that will be infinitely worse, &c...

52

1K

2K

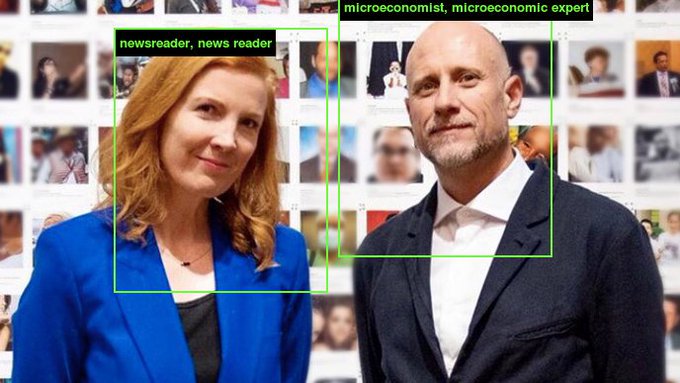

Time for a collective eyeroll from all of us who actually research this issue. @AOC was talking about facial recognition systems. They're proven to have bias issues. But this is how it gets reported.

60

405

2K

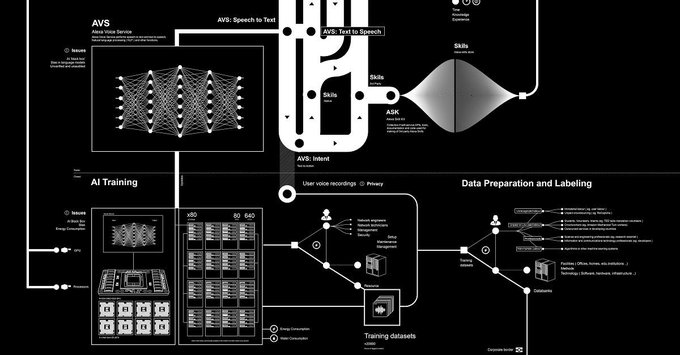

Thrilled to launch a big project today: ANATOMY OF AN AI SYSTEM. It's a large map & long-form essay about Amazon's Echo, and the full stack of capital, labor, and natural resources used in AI. It's a collab with @TheCreaturesLab, who is a visual genius ✨

33

826

2K

Let's talk about the 10%. What if the AI surgeon was trained on data that oversampled white men (as per many controlled trials)? And it consistently produces worse outcomes for Black people and women? Seems like it matters who "you" are in this hypothetical 🤔

31

395

2K

So Facebook is deleting one billion facial recognition scans, but it's keeping DeepFace, the model that is trained on all those faces. Note that "the company has also not ruled out incorporating facial recognition into future products." Very meta. 👀

37

729

2K

This tweet was 5 years in the making: #AtlasofAI is out TODAY!

It’s a book on the politics and planetary costs of artificial intelligence as an extractive industry - consuming natural resources, labor, and vast quantities of data. 👉 @yalepress (1/6)

70

333

1K

I have a piece in @nature today on the urgent need to regulate emotion recognition tech. During the pandemic, this tech has been pushed further into schools and workplaces. We should reject the phrenological impulse, where unverified systems are used to interpret inner states.

Comment: We can no longer allow emotion-recognition technologies to go unregulated, argues @katecrawford.

6

101

265

17

416

1K

Our new paper is out today: “DIRTY DATA, BAD PREDICTIONS.” We show serious problems with predictive policing being used where there is evidence of illegal and discriminatory police practices. By Rashida Richardson, @Lawgeek & me:

21

543

1K

I'm so excited that my new book is out in April:

ATLAS OF AI: Power, Politics, and the Planetary Costs of Artificial Intelligence. 📕 🔜

You can preorder from any local bookshop, eg:

Featuring a beautiful cover & illustrations by @TheCreaturesLab 👇

46

222

1K

Today we officially launch the @AINowInstitute at NYU! Grateful to be part of an incredible community of scholars in multiple disciplines working on the social and political implications of AI, ML and algorithmic accountability.

26

293

949

We can finally share a little secret: has just been acquired by MoMA. Wild!

Huge thanks to the whole curatorial team at @MuseumModernArt for their support of our project, and to @TheCreaturesLab for this joyous collab ✨♥️🗺️

35

160

952

I just published this piece in @NatureNews today. Facial recognition is being rolled out in cities worldwide with few safeguards. It's used by ICE, CBP, and across public space. Bad when it fails, bad when it works. It's time for a moratorium.

16

475

943

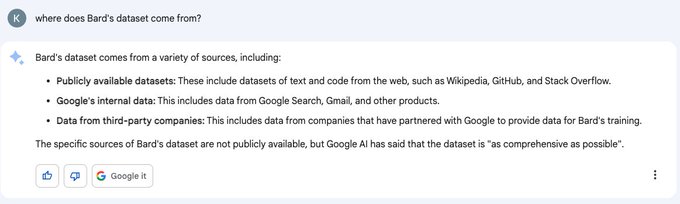

@GoogleWorkspace Thanks for this. So can I confirm that this was a Bard hallucination, and that there's no Gmail data included whatsoever in the training process?

39

36

776

Truly horrifying story.

Right now, Uyghurs are being used as lab subjects for emotion AI, under coercion, strapped into restraint chairs, and then tagged as "nervous" or "anxious" which is taken as a marker of guilt. via @hare_brain

66

528

788

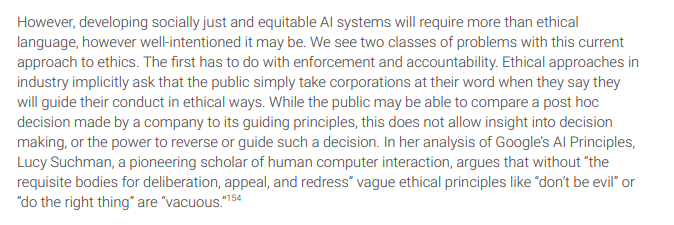

Finally, a paper on why "AI for good" is an empty phrase without a theory of change. What is "good" is never articulated in the rush to tech solutions, while alternative reforms are overlooked. Read @benzevgreen's piece before it blows up at @NeurIPSConf

20

275

792

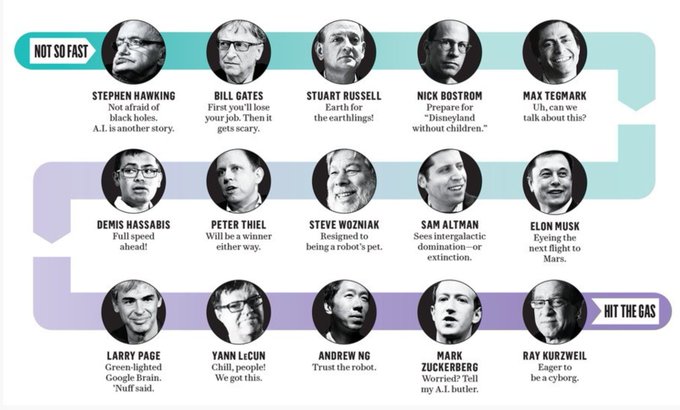

So I wrote a thing for @nytimes on race, gender and AI: why billionaires fear the rise of an AI apex predator.

43

483

768

Want to see how an AI trained on ImageNet will classify you? Try ImageNet Roulette, based on ImageNet's Person classes. It's part of the 'Training Humans' exhibition by @trevorpaglen & me - on the history & politics of training sets. Full project out soon

93

302

761

Nature just published a major feature on researchers working on bias in machine learning. Features many of us, including @geomblog @mikarv @s010n @achould @b_mittelstadt @rayidghani @dgrobinson @bowlinearl - and the @AINowInstitute work on due process

11

452

741

Just wow. So this happened. Thank you @DesignMuseum and all the jury for giving us the Design of the Year award. @TheCreaturesLab and I are kinda blown away 😮 💥

Drum-roll please... | The overall winner of #BeazleyDesignsoftheYear is "Anatomy of an AI System" 🏆 by @katecrawford & @TheCreaturesLab - which charts the birth, life and death of a voice assistant and its impact on our planet. > @BeazleyGroup

0

92

209

30

94

699

Guess we could start calling this a 'hallucitation'? 🤷‍♀️

If you're curious about the article, it's here. cc @mjnblack ?

23

53

690

And here it is - the leaked client list of Clearview. Remember how they said it was “strictly law enforcement only”? Not so much. Clients include Walmart and Macy’s as well as ICE and CBP.

11

468

629

This tweet did not age well

We use #data to change audience behaviour. Learn more about what Cambridge Analytica could do for you.

9

17

15

15

187

585

In the last two days, Google and @FinancialTimes editorial board have called for a temporary moratorium on facial recognition - following in the footsteps of many researchers and civil society orgs.

12

325

561

Today @trevorpaglen & I open MAKING FACES: an installation and event about the 150 year history of facial recognition. It's an intervention staged in Paris that focuses on politics of the face as a terrain of identification, power and control.

7

119

535

OMG - IT ARRIVED 💥💥💥

Just 5 days before it goes on sale in the US, physical copies of my book are here! Exciting to see it and @TheCreaturesLab’s beautiful illustrations come to life. #atlasofAI

21

47

538

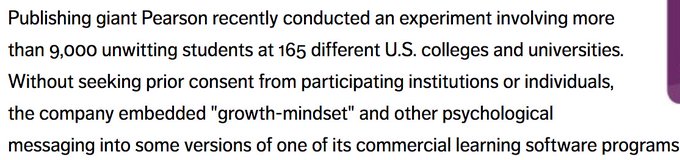

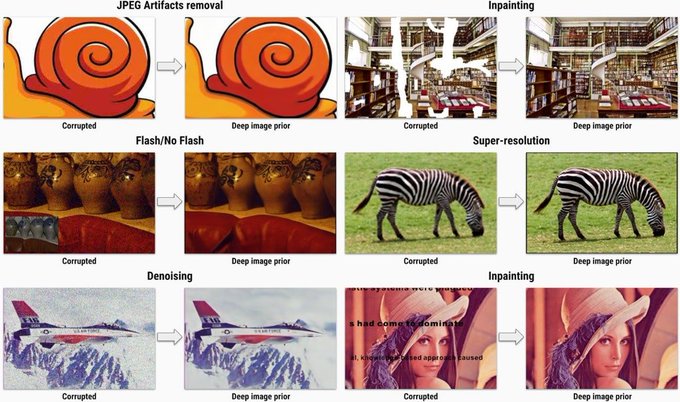

Excited to launch this new investigation w @trevorpaglen - to be read alongside ImageNet Roulette. It's about how training data works, the costs of classification, and why "removing bias" or "increasing diversity of data" isn't enough 👁️

14

232

532

🚨 NEW RESEARCH ALERT: The diversity crisis in AI has hit a moment of reckoning: the call is coming from inside the house. Study led by @sarahbmyers shows lack of workforce diversity and bias in AI systems are connected. Read here: #AIdiversitycrisis

14

270

525

So I gave a talk at the @royalsociety last week. Seemed like the right time to discuss the political landscape we're in, and why the bias debate in AI is too narrow. Here's the video: "Just an Engineer: On the Politics of AI"

5

198

520

AI is rapidly becoming a new, privately-owned infrastructure - and the bias and safety issues urgently need more research (my oped for @WSJ)

17

336

511

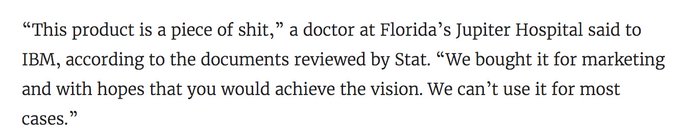

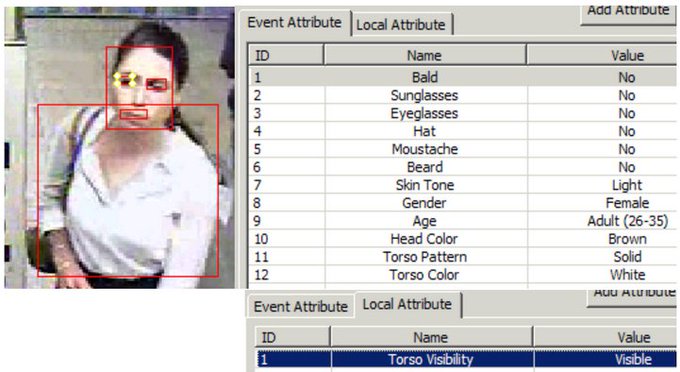

IBM calls their new program ‘Not Learning From History’

SCOOP: With secret access to NYPD CCTV @IBM created software which tags people based on their skin tone + hair/clothing color. IBM gave NYPD access, then pitched them on a new AI product which identifies people on camera as "Black," "White," and "Asian":

52

785

775

8

204

489

"This is the story of how affect recognition came to be part of the AI industry, and the problems that presents."

An excerpt from #AtlasofAI is out today in @TheAtlantic drawn from my chapter on emotion AI. Thanks to @AdrienneLaF for editing 🙏📚✍

11

191

492

It's 2017, and researchers are still using Playboy's Lena centerfold as a test image. Given the gender issues in this field, maybe it's time to move on guys? 🤔

16

146

451

Hey London, I’m giving a lecture at the @royalsociety tomorrow on the politics of AI - and why narrow tech fixes to bias don’t work. All welcome ✨👌✨

Join @katecrawford (@ainowinstitute, @MSFTResearch) debating the biases built into machine learning, and what that means for inequality - Tuesday, 7.30pm #YouandAI

2

31

68

16

148

441

Big news: LAPD will end the use of the broken predictive policing system known as PredPol, citing budget concerns under COVID-19. This is thanks in large part to community groups like @stoplapdspying pushing back against its use.

6

178

436

The @EU_Commission's final proposal for an Artificial Intelligence Act is here. Some examples:

AI systems are prohibited if they violate human rights, do general social scoring for authorities, use live remote biometrics in public for policing. 👀

12

197

434

Wow. @TheCreaturesLab & I just got news that our Anatomy of an AI System has been acquired for the permanent collection of the V&A museum. The map, the newspaper, and the code are now setting up a new home. Big thanks to @V_and_A curatorial team ✨🗺️💜

20

42

434

Deep support to all the women at Microsoft who were brave enough to come forward. Cultures of harassment, exclusion and unfair compensation are unacceptable, and it's time to make change across the whole sector. 🙌

2

99

428

Honored to be on @TIME's list with many friends and colleagues. Glad to see it has included the work of those addressing AI's social, political & ecological effects. #TIME100AI

14

57

416

Stoked that Nature named me as 'One to watch' for 2018, along with folks like @PEspinosaC and John Martinis

21

47

407