Suresh Venkatasubramanian (mostly in the sky now)

@geomblog

Followers

12K

Following

18K

Media

328

Statuses

27K

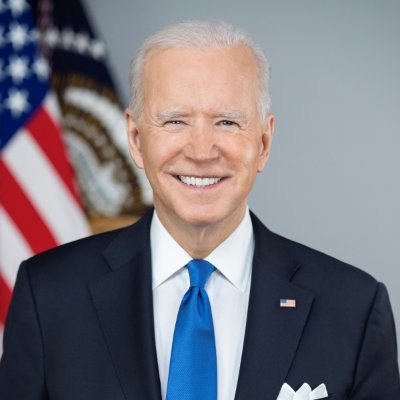

AI Bill of Rights coauthor. Prof @BrownUniversity. Former tech advisor to President Biden @WHOSTP. He/him/his. Tweets my own.

Somewhere between MT and ET

Joined March 2007

I'm going to frame this and put it on my office wall.

Artificial Intelligence has enormous potential to tackle some of our toughest challenges. But we must address its risks. That's why last year, we proposed an AI Bill of Rights to ensure that important protections for the American people are built into AI systems from the start.

6

4

102

🚨🚨 ATTENTION @CAGovernor 🚨🚨 @CaliforniaLabor sponsored #SB7 is sitting on your desk, awaiting your signature. This bill enacts common sense AI safeguards by making sure that human workers cannot be disciplined or fired by an algorithm. (1/6)

1

6

11

@BrownCSDept faculty members Ellie Pavlick and Suresh Venkatasubramanian (@geomblog) have just received a $20M @NSF grant to found ARIA, a national institute to develop intuitive, trustworthy AI assistants. Learn more at Brown CS News: https://t.co/fGqXCiyKrt

1

5

49

At the 2025 ACM FAccT Conference this June, CNTR Director Suresh Venkatasubramanian @geomblog gave one of the keynote talks, addressing what meaningful governance looks like in a world remade by generative AI and the shape of what comes next. https://t.co/NW02NVRu2G

0

2

8

NEWS: The Senate has removed AI moratorium from the reconciliation bill in a whopping 99-1 vote. Deals a massive blow to the effort to make this happen in the GOP package

16

71

359

NEWS: After pulling out of a deal on AI regulation with CRUZ, @MarshaBlackburn filed an amendment to strip the entire provision w/ @SenatorCollins, @SenMarkey, @SenatorCantwell, per a source familiar

2

19

54

Congrats to CNTR PhD student Rui-Jie Yew whose paper with @geomblog and Jeff Huang, "Copyrighting Generative AI Co-Creations," recently won a Best Paper Award at the 2025 ACM Designing Interactive Systems (DIS) Conference! https://t.co/dkLusBmZ5F

0

2

13

The House’s 10-year ban on state AI regulation would wreak havoc on our country. The language is so broad that it could upend everything from state privacy law to contract law. It could even break the internet. I will do everything in my power to stop it.

techpolicy.press

To understand how the so-called “AI moratorium” will destroy state laws and the internet, consider the literal meaning of its text, writes David Brody.

5

10

23

Second-year @BrownCSDept and CNTR PhD student Rui-Jie Yew was recently recognized as runner-up for Best Student Paper at the Artificial Intelligence, Ethics, and Society Conference @AIESConf at the end of October with collaborators @Lcyqn and @geomblog. https://t.co/HHs1sKZ2pl

0

3

10

I'm going to still monitor this site just to make sure I'm porting all my followers over. But I don't expect to be engaging any more, and will try to shift the conversation over to bsky. So long, and thanks for all the fish/birds :) 8/x

1

1

1

But this network is being manipulated and used to broadcast content at the whim of its owner, who has also taken a firm position on one side of the political divide. I'm not sure I want to support that megaphone, with all the threats to press freedom that are likely looming. 7/x

1

1

0

Is this a bifurcation of the X/twitterverse into red and blue networks? I don't think so. After all there's already truth-social if you want a steady diet of Trump. Would I have jumped if the election outcomes were different? Well it depends on where my network was going. 6/x

1

1

1

I'm a little nervous about abandoning a network that I had built up over many years. But I also can't be trapped by that network into tolerating what is a steadily declining experience over here. 5/x

1

1

2

I'm not expecting the other site to fully replace that environment. Perhaps no individual site can any more. And maybe that's ok. But that's where I expect more interesting discussions to start up again, in a way that isn't really happening any more here. 4/x

1

1

1

But twitter was always a place for me to engage with my professional peers, at least for most of its early existence. I still cannot believe how quickly that crumbled into dust once he took over. It was profoundly depressing. 3/x

1

1

1

When the melon husk first took over twitter, I experimented with both elephant and sky for a bit, or at least planted my ID flag there. But I was still getting a good deal more value here than elsewhere, especially when it came to having a voice in public forums. 2/x

1

1

0

A critical fraction of my professional network is moving over to the other site. Feels like a good time to make the move myself. Same handle: you know the drill. I'll probably lurk here still but am unlikely to post much. What follows are my thoughts on the move. 1/x

1

1

16

Man plays piano music for rescued elephants to help reduce their anxiety

244

1K

9K

Interesting trend that might be new? Universities asking for a personal statement in addition to the research statement for Ph.D. applicants. I wonder if this is a post-SFFA move to do an end-run around the proscription on direct AA moves.

0

0

2

If you haven't already, please join me over at bsky. @melaniemitchell.bsky.social

5

2

74