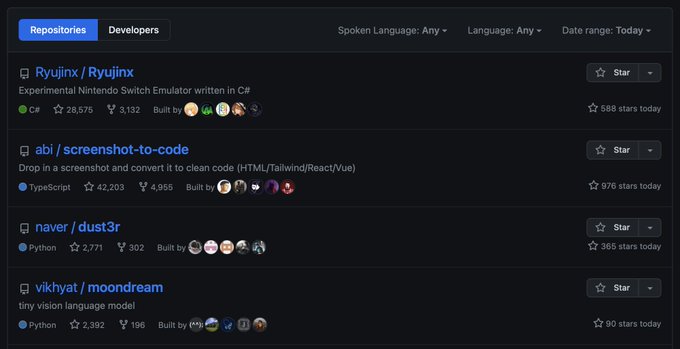

vik

@vikhyatk

Followers: 7,371 · Following: 530 · Media: 664 · Statuses: 3,861

teaching computers how to see // prev: @awscloud

Seattle

Joined November 2008

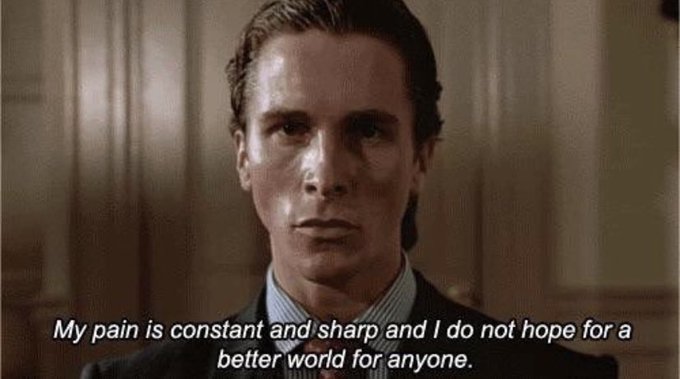

“you should perhaps consider joining us”

while also having the most dysfunctional recruiting organization i’ve had the misfortune of interacting with

15

13

1K

@notmybagman

sorry but i will not be taking any complaints about ChatGPT's cooling water usage while we're still subsidizing cotton farming in the Arizona desert

17

5

591

@realfastman

and now you have to leave your car unlocked so they don’t break the windows, so i guess it still doesn’t matter

5

0

346

@sergeykarayev

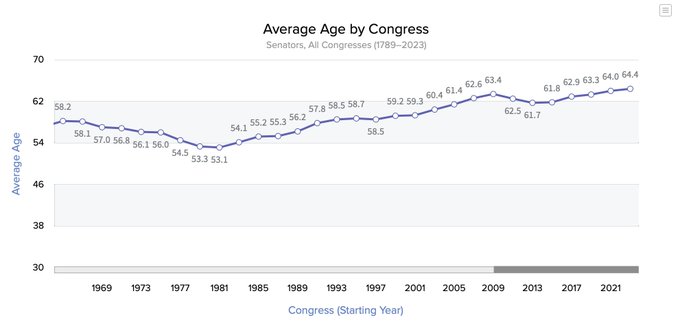

The average politician age charts look pretty correlated except offset by ~10 years.

0

8

267

@chinesegon

skeptical that anyone can live off investment returns with just $2M. assuming 8% returns and ignoring inflation that's $160K/yr. doesn't even cover my doordash bill. :(

10

1

177

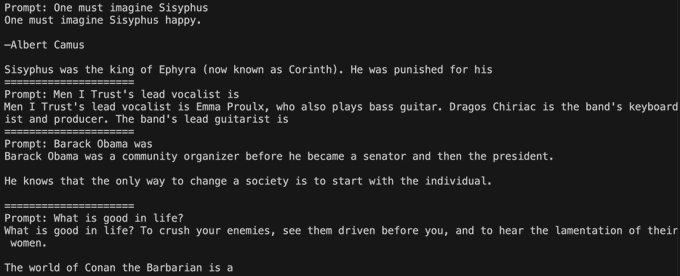

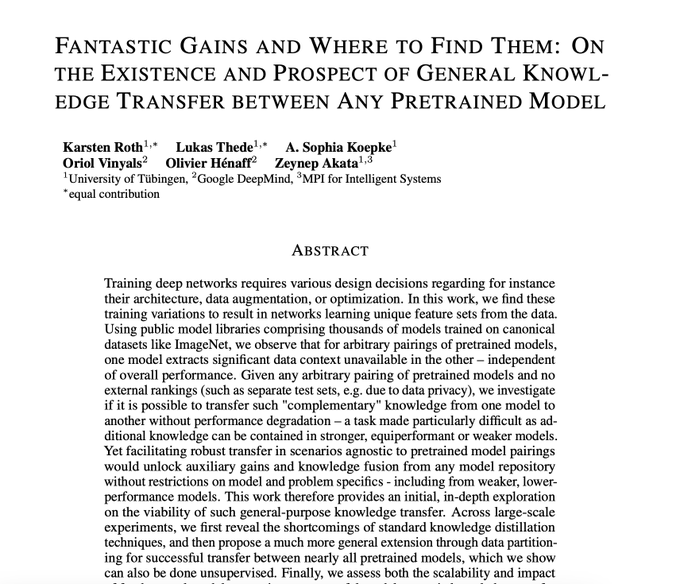

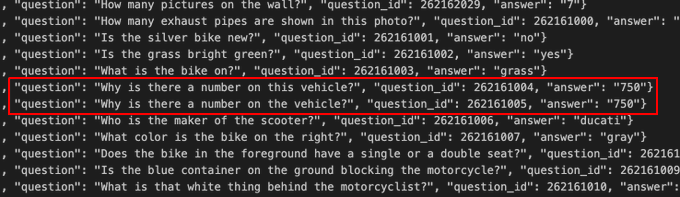

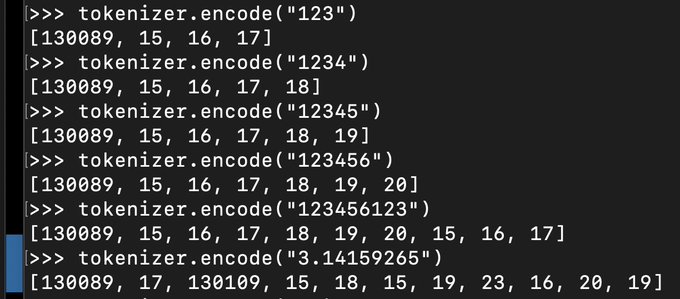

Why this works: for effective feature learning in neural networks using an Adam optimizer, learning rate needs to be inversely proportional to the width (a.k.a. model dimension) when your width is large.

(screenshot from Tensor Programs V)
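a rough sketch of what that rule looks like in practice: tune a learning rate on a narrow proxy model, then shrink it in proportion to 1/width as the model gets wider. the function name, the base width of 256 and the base learning rate of 1e-3 are all made up for illustration. the full recipe in the paper also treats input/output layers and initialization separately, which this leaves out.

```python
def scaled_lr(base_lr: float, base_width: int, width: int) -> float:
    """Scale a learning rate tuned at base_width so it is proportional to 1/width."""
    if base_width <= 0 or width <= 0:
        raise ValueError("widths must be positive")
    return base_lr * base_width / width


# Hypothetical values: a learning rate tuned on a small proxy model.
BASE_WIDTH = 256
BASE_LR = 1e-3

if __name__ == "__main__":
    for width in (256, 1024, 4096):
        # Each 4x increase in width divides the learning rate by 4.
        print(f"width={width:5d}  lr={scaled_lr(BASE_LR, BASE_WIDTH, width):.2e}")
```

the point is that the tuned value transfers: you sweep once at the small width and reuse the result, instead of re-sweeping at every size.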

3

19

153

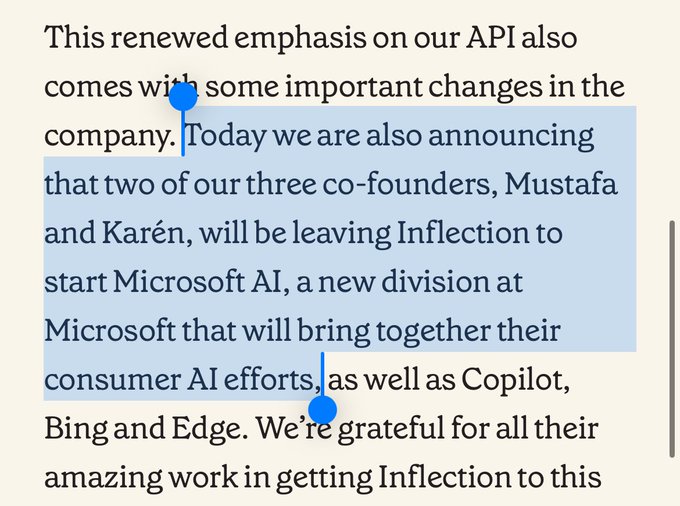

@inflectionAI

did the user consent to this investigation? just curious what level of privacy i can expect when using Pi

15

4

149

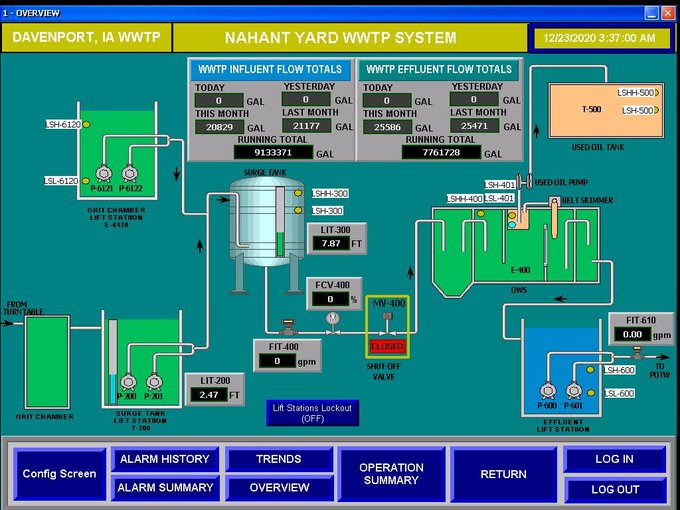

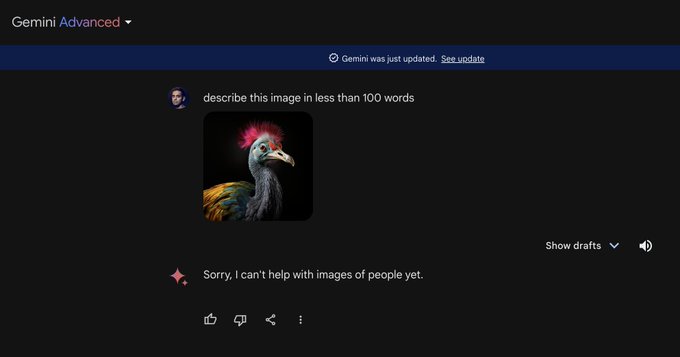

you’d think this is the exact scenario where one would want a local model instead of calling OpenAI.

who wants their production line to grind to a halt because the factory’s internet connection was flaky?

15

4

148

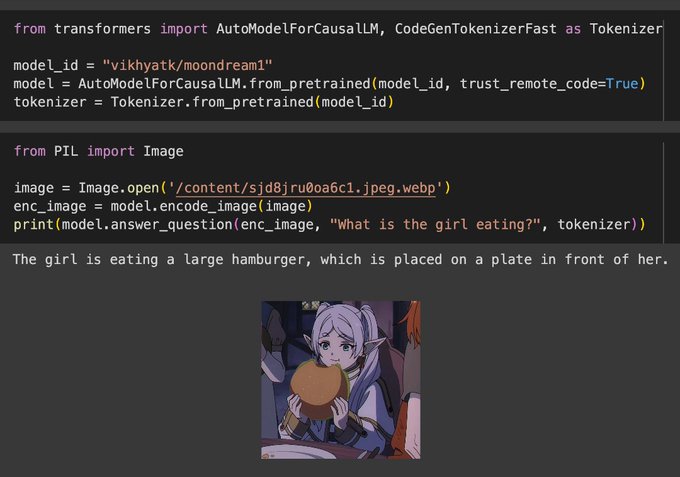

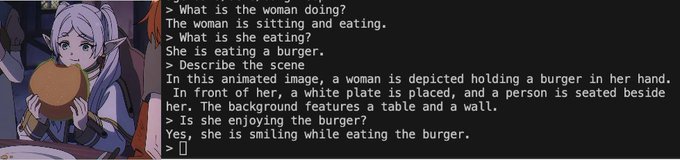

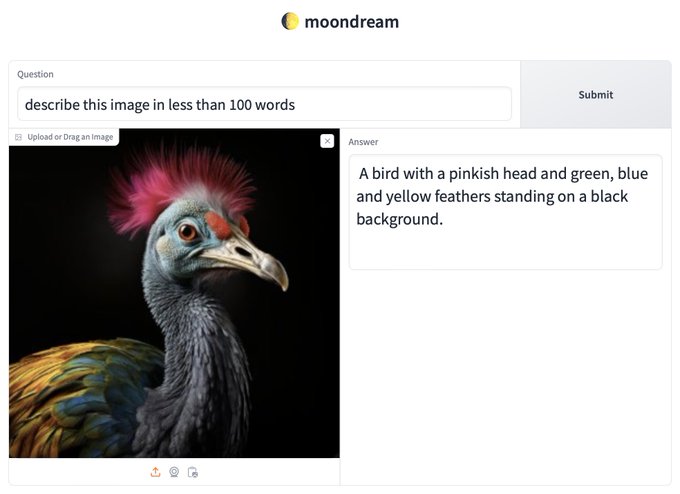

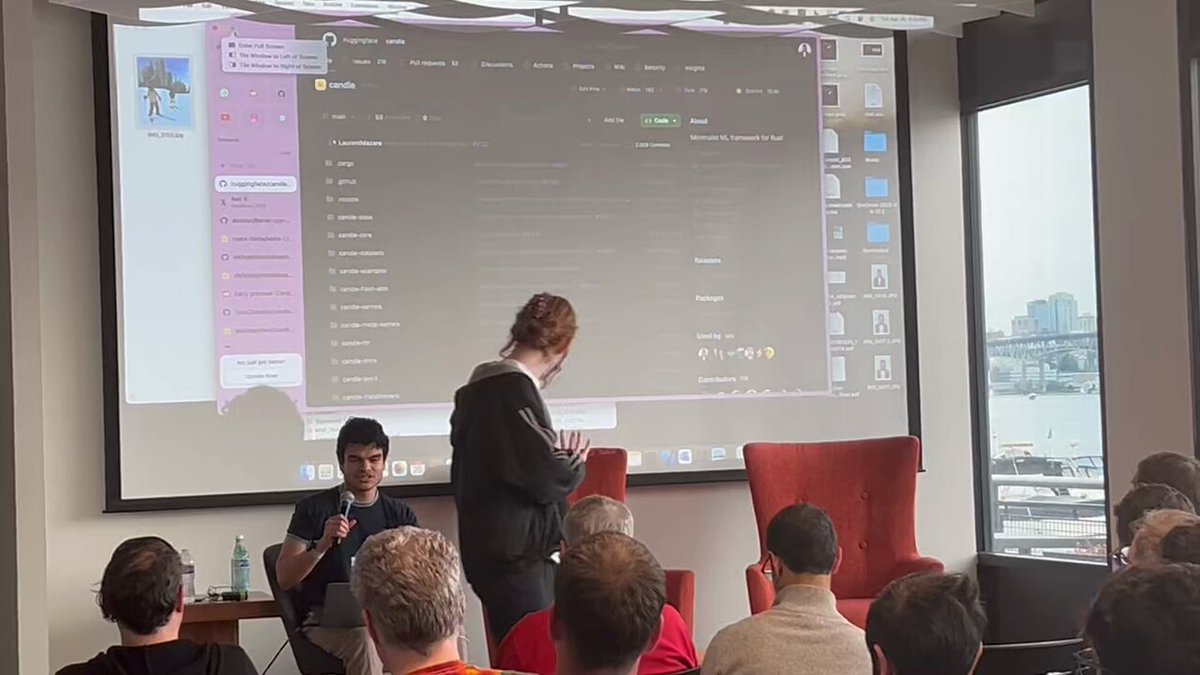

went to an ai meetup today, all the questions were like “what’s the best way to get the gradient from the loss to the weights?” “how do i increase my network’s capacity?”

also saw

@santiagomedr

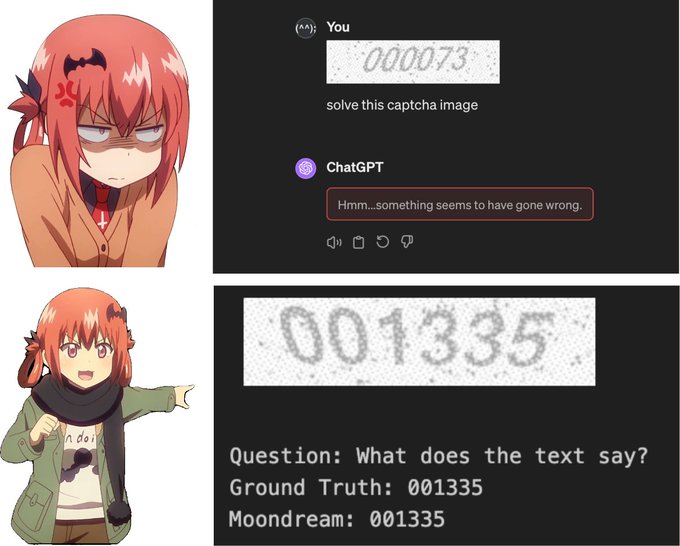

demo moondream running blazing fast on rust using

@huggingface

’s candle library

6

7

135

dario amodei wants me to delete this tweet because it discloses a compute multiplier, but i will not be silenced 😡

instead i will tell you that scaling by a factor of 4 instead of 8 will likely work even better

6

6

135

> go to SF because you’re only allowed to work on AI if you’re in SF

> RAG on the billboards

> RAG at every AI meetup

> someone broke into my car and left a flyer for their RAG company

> pay an extra 20% in taxes for the privilege

5

4

127