Chris Van Pelt (CVP)

@vanpelt

Followers: 1,638
Following: 280
Media: 126
Statuses: 865

FigureEight and Weights & Biases co-founder. Reared in #Iowa, big fan of creating things.

Mission, San Francisco

Joined April 2007


I wrote about all the horrible tech choices I've made as CTO of @CrowdFlower! http://t.co/0tVYMtgowC

2

21

24

OpenUI is #2 on Product Hunt and has gotten 5k stars on GitHub! We’ve clearly not reached peak GenAI hype yet 🤪.

1

6

25

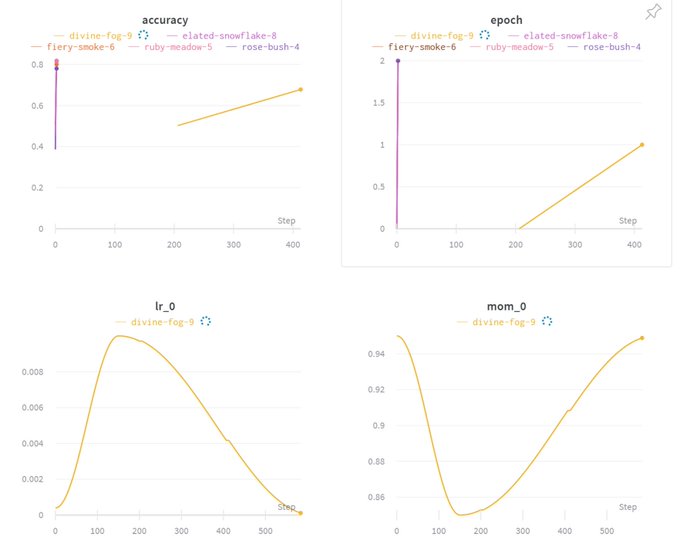

Really excited to be integrated with Hugging Face. If you're doing NLP and haven't used 🤗 you're missing out, it's such a powerful library.

You can now visualize Transformers training performance with a seamless @weights_biases integration. Compare hyperparameters, output metrics, and system stats like GPU utilization across your models!

Step-by-step guide:

Colab:

8

178

648

0

4

20

@MatthewBerman Appreciate it! Feels really good to have so many people acknowledge my little side project 🥰. I’m working on some fine-tuned models and new features, stay tuned 🥳

1

0

20

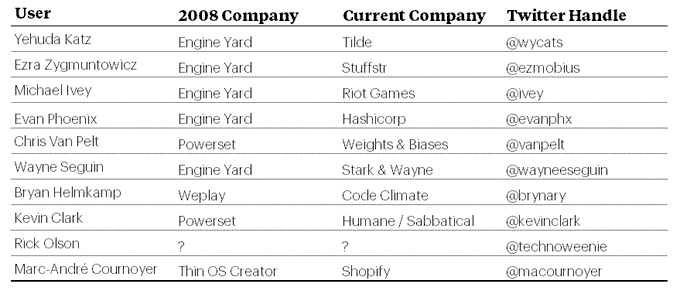

I'm an early adopter and still hacking! It helps when your roommate was @mojombo

(1/4) The earliest adopters of Github are still doing amazing things!

1) @wycats
2) @ezmobius
3) @ivey
4) @evanphx
5) @vanpelt - founder of @weights_biases
6) @wayneeseguin
7) @brynary - founder of @codeclimate
8) @kevinclark
9) @technoweenie
10) @macournoyer

2

7

29

1

0

9

Still remember hacking from coffee shops with @l2k ... ), feeling so fortunate to be a part of @CrowdFlower!

3

5

8

Trained a neural network on @vanpelt: My dream is → of what you can get for a low fee. #huggingtweets

0

1

7

I'm excited to be working more closely with @danielgross and @natfriedman making the world's best developer tools for ML 🥳

I have been an investor and user of W&B since iter1, and am thrilled to be leading a $50m round alongside @natfriedman:

15

26

277

2

0

6

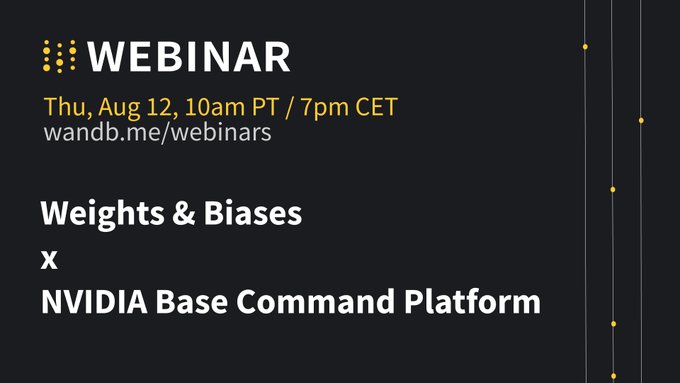

Really excited to be announcing our collaboration with @NVIDIAAI!

World-class ML requires managing both infrastructure & the models themselves.

Today we’re excited to announce our integration with @NVIDIAAI’s Base Command Platform, a hosted AI dev hub that gives enterprises instant access to state-of-the-art infra!

2

18

77

0

0

7

I'm super excited for fastai v2. So cool to see @jeremyphoward and contributors taking the time to do it right.

Want to automatically track *all* hyperparams of your model while it's training, without writing *any* configuration code?

Thanks to @GuggerSylvain, you can in fastai v2, with the new @weights_biases callback :)

13

132

529

0

0

6

@natfriedman @l2k So cool to see GitHub sharing such an exciting dataset with the NLP community. Can’t wait to see what we build!

0

0

6

I'm excited we launched ML benchmarks to foster collaboration and progress!

0

2

6

Can’t wait to demo W&B monitoring some impressive Nvidia hardware live!

0

0

5

After 8 years in San Francisco, riding Caltrain for the first time like a pro!

http://t.co/KyecBITaKu

1

0

4

@togethercompute my little llama3 8b fine-tuning job has been pending for 18 hours. Is there a status page or a way to see queue depth? The suspense is killing me.

1

0

3

So cool to see so many developers & use cases for machine learning in such a cool space @IBMWatson developer conference.

0

0

3

For the record, http://vis.stanford.edu/protovis is the greatest JS graphing / visualization library ever.

0

1

3

@djdannywhite killing it in a packed house for his last San Francisco based #INDIESLASH http://t.co/RweMt4YZ

0

0

2

For anyone interested in DSLR filmmaking, this is so cool: http://www.okii.net/ProductDetails.asp?ProductCode=FF-001, and it's open source!

0

0

2

@moonpolysoft maybe some day @boundary will have elephantine data scaling issues... I suggest you start using Mongo & Node just in case.

0

1

2

@stormtroper1721 @l2k @weights_biases Hey @stormtroper1721, I wrote most of that code and can help get to the bottom of this. I'll try to make a simple script to reproduce. I opened an issue here: I'll post my findings and ping you if I don't see you on GH.

1

0

2

@KirinDave and I are wearing our new Gunnars today. It's a whole new world. http://t.co/Fx4bwmwi

2

1

2

Great guest post on Forbes by @l2k: My Board Fired Me -- Here's What I Learned http://t.co/8Q6Hjx7LSv

0

0

2

Just got my first #sprig meal delivered, horrified to find a tasty orange truffle eyeball in my curried carrots. http://t.co/NqszRoK6rs

0

0

2

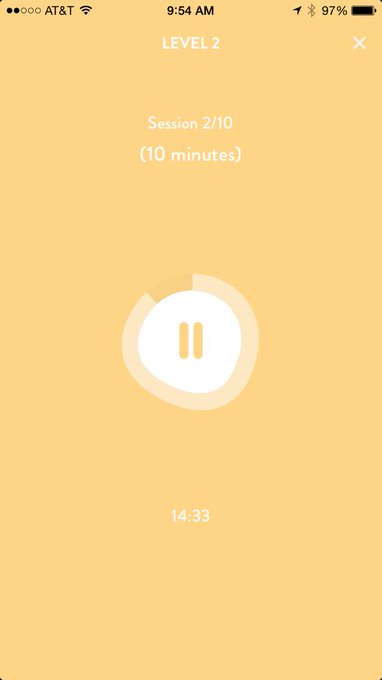

Hey @Get_Headspace, are you aware of a bug where you specify 10-minute sessions but get 15? http://t.co/6aQZ4VFref

2

0

2

@stormtroper1721 @l2k @weights_biases I just tried to reproduce and I have a very simple working script in the GitHub issue: . Can you try to make a simple script that shows the behavior you're seeing and add it to the thread?

0

0

1

@resistredaction The utilization logic uses the psutil Python library, specifically the cpu_percent function. TL;DR: if your current process uses multiple threads, it can be greater than 100%:

1

0

1
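A reading above 100% follows from how per-process CPU utilization is commonly defined: CPU time consumed across all threads, divided by wall-clock time elapsed. Below is a minimal sketch of that arithmetic under that assumed definition. The function name `cpu_percent_from_deltas` is hypothetical, and this is not psutil's actual implementation.

```python
def cpu_percent_from_deltas(cpu_seconds: float, wall_seconds: float) -> float:
    """Return CPU utilization as a percentage of a single core.

    cpu_seconds: CPU time (user + system) used by the process over an
        interval, summed across all of its threads.
    wall_seconds: wall-clock length of that same interval.
    """
    if wall_seconds <= 0:
        raise ValueError("wall_seconds must be positive")
    return 100.0 * cpu_seconds / wall_seconds


# One thread busy for a full 1-second interval: one core fully used.
single = cpu_percent_from_deltas(1.0, 1.0)

# Four threads, each busy on its own core for the same 1-second interval:
# 4 CPU-seconds were consumed in 1 wall-clock second.
multi = cpu_percent_from_deltas(4.0, 1.0)

print(single, multi)
```

psutil's documentation describes the same behaviour for `Process.cpu_percent()`; dividing the result by `psutil.cpu_count()` gives a figure normalized to the whole machine instead of a single core.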

@weights_biases @karibriski @nvidia Really looking forward to Kari's talk. I may just be making a guest appearance in it 🥳

0

0

1

Gorgeous views on our way to Machu Picchu today. We see the ruins tomorrow. @ Ciudad de Urubamba

http://t.co/AMMyKGfx

0

1

1

Descending through the clouds. Just landed in rainy Austin. @ Austin Bergstrom International Airport…

http://t.co/hF4iBDyFi7

0

0

1

So excited to see Joseph Childress play to a packed house! @ Great American Music Hall

http://t.co/6yrWkSmIbu

0

2

1

@jjghsjfhehxjs It’s possible. Today it renders raw HTML into an iframe, and you can later convert that to React. To render React directly in the browser, we would need a bundling step. I’ve thought about it, and Vite can even run in the browser so it’s possible, just more complicated.

0

0

1

Plotted last summer's honeymoon using data stored on my iPhone http://yfrog.com/h3vx7jp with http://petewarden.github.com/iPhoneTracker

0

0

1

Also, thank you instagram for making this silly European urinal look more awesome

http://t.co/z7Phj52e

0

1

0

@resistredaction We don't make any network connections in your training code; they happen in a separate process. Calls to wandb.log write synchronously to disk by default. You can make those writes asynchronous by adding `sync=False` to your wandb.log calls.

1

0

1
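The difference between synchronous and asynchronous disk writes can be sketched with the standard library alone. This illustrates the general pattern only and is not how wandb implements logging; the `AsyncLineWriter` class and the `metrics.jsonl` file name are hypothetical.

```python
import json
import os
import queue
import tempfile
import threading


class AsyncLineWriter:
    """Hypothetical helper: append JSON lines to a file from a background thread."""

    def __init__(self, path: str) -> None:
        self._queue: queue.Queue = queue.Queue()
        self._file = open(path, "a", encoding="utf-8")
        self._thread = threading.Thread(target=self._drain, daemon=True)
        self._thread.start()

    def log(self, record: dict) -> None:
        # Returns immediately; the disk write happens on the worker thread.
        self._queue.put(record)

    def _drain(self) -> None:
        while True:
            record = self._queue.get()
            if record is None:  # sentinel: stop the worker
                break
            self._file.write(json.dumps(record) + "\n")

    def close(self) -> None:
        # Wait for everything queued so far to be written, then release the file.
        self._queue.put(None)
        self._thread.join()
        self._file.close()


path = os.path.join(tempfile.mkdtemp(), "metrics.jsonl")
writer = AsyncLineWriter(path)
for step in range(3):
    writer.log({"step": step, "loss": 1.0 / (step + 1)})
writer.close()

with open(path, encoding="utf-8") as f:
    lines = [json.loads(line) for line in f]
```

The caller of `log` never blocks on disk I/O, at the cost that records still in the queue are lost if the process dies before `close` runs. That trade-off is the usual reason synchronous writes are a safer default.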

@brendan642 it's no color post, but Eminem and Detroit are always worth a blog post in my mind ;)

0

0

1

OH: "There's nothing greater than waiting to see someone live for 3 years then seeing it". #firstcityfestival2014

0

0

1