Lepton AI

@LeptonAI

Followers: 3,654 · Following: 34 · Media: 9 · Statuses: 56

We are building an efficient AI application platform.

Cupertino, CA

Joined April 2023


We are super excited to partner with @SambaNovaAI to serve the state-of-the-art Samba-CoE-v0.3 model. In the LLM land, innovation happens through a joint effort on the hardware side and the software side. 🧵

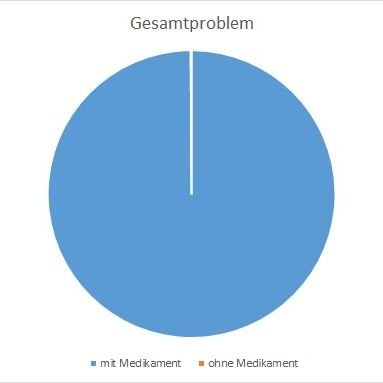

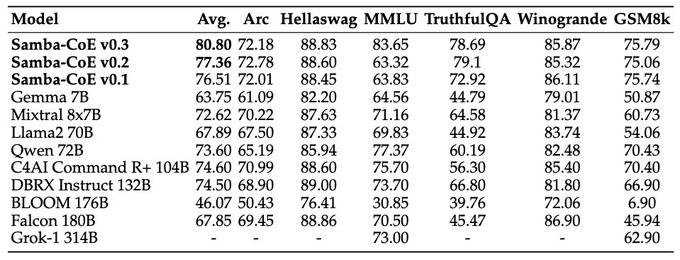

Introducing Samba-CoE v0.3, our latest Composition of Experts (CoE) model that surpasses DBRX by @DbrxMosaicAI and Grok-1 314B by @xAIGrokInu on the OpenLLM Leaderboard @huggingface! 🏆 Samba-CoE-v0.3 is now available on @LeptonAI @jiayq, try now: .

#AI


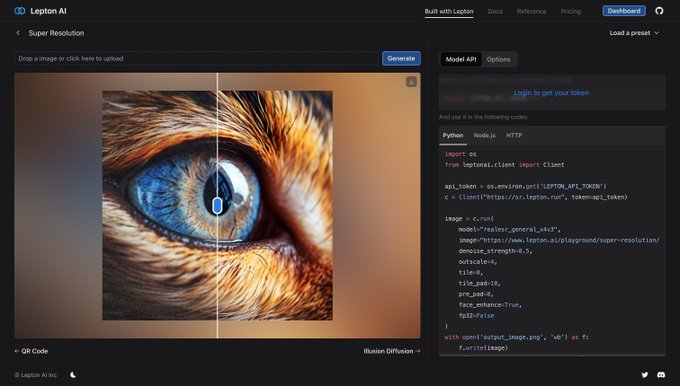

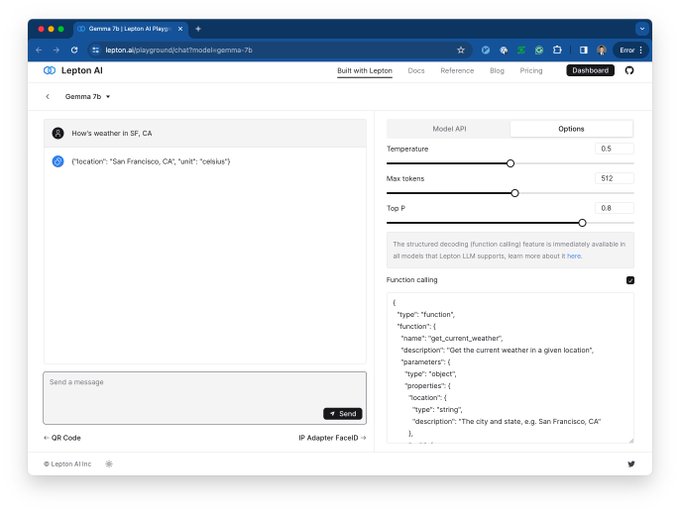

🚀 Excited to introduce the future of machine learning on Lepton AI! We've teamed up with Google's latest marvel, Gemma, to bring you an API that's as powerful as it is user-friendly. 🌟 Start exploring with Gemma today at .

#MachineLearning #AI #Gemma


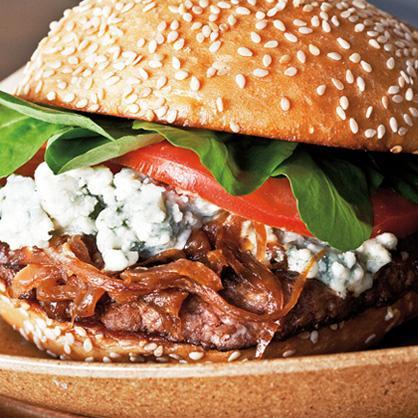

🚀 Introducing Illusion Diffusion model APIs by Lepton AI

Transform your wildest creative ideas into mesmerizing visuals! 🎨✨ Dive into a world where imagination meets reality.

#IllusionDiffusion #UnleashCreativity


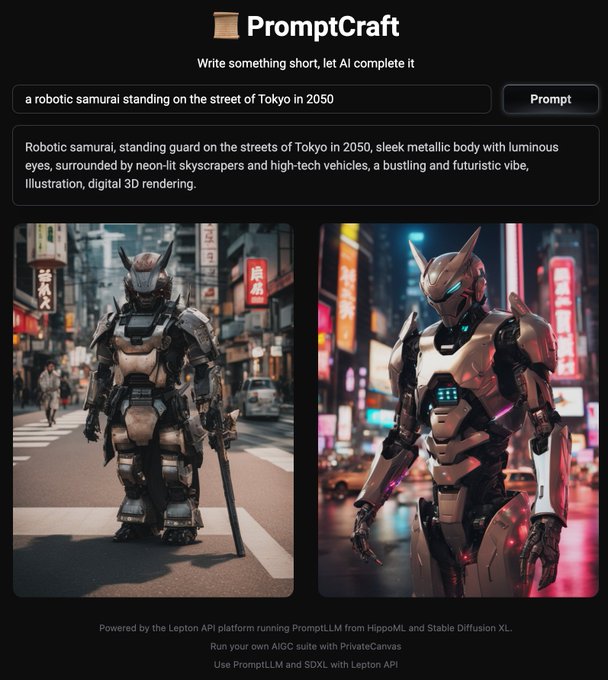

🚀 Introducing "Prompt Craft", made with PromptLLM from @hippoml_com! Perfect simple prompts with ease and unleash creativity and efficiency like never before. 🌟💡🤖

Can you tell which one is made by AI? 😝😝😝

#LLM #Promptshare #promptllm


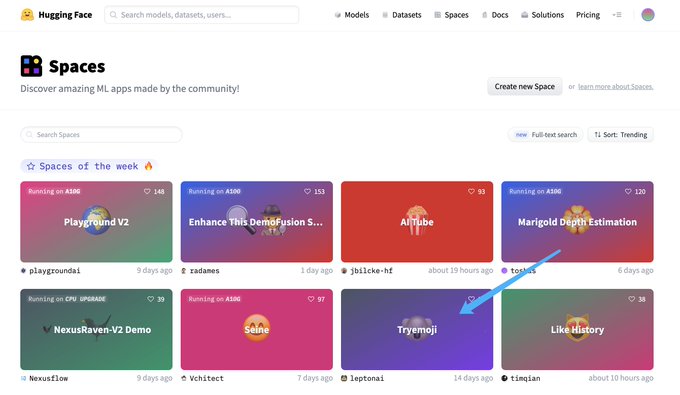

Guess who got a spot on Hugging Face Space of the Week? It's us! Check out the emoji re-imagined with LCM 🚀🚀🚀

Bet you've never seen an emoji like this 😆

#huggingface #lcm #SDXLturbo #sdxl #imageGen


🚀 Lepton AI's model API now supports #NodeJS calls!

🖥️ Build smarter and faster with the power of AI in your favorite runtime.

Dive in now!

#LeptonAIUpdate


@SambaNovaAI SambaNova's hardware architecture makes it possible to serve models built with composition of experts, a much more interesting modeling approach on top of existing awesome architectures that delivers state-of-the-art results on benchmarks like Alpaca. Check it out at


Looking forward to meeting all of you! 🥳

Most recent (as of Sep 12) speaker line-up of the largest GenAI event in Silicon Valley on Sep 23: @rmisra11 from @Microsoft, Dr Jason Wei () from @OpenAI, @DrJimFan from @nvidia, @hwchase17 from @LangChainAI, Arvind Jain from @glean, Jason Lopatecki…


@myshell_ai Glad to see what we are shipping out together! 😃 Make AI playable, reachable, and usable!


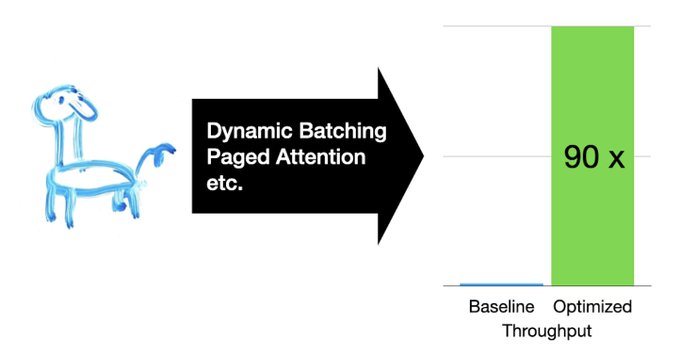

Stay tuned for updates on Medusa in production! 👾👾👾 We'll share more details soon. And no BS 😝😝, it's live at (1/x)


Chilling at @Ray_Summit_Live? Let's talk about Pythonic AI for building applications! See y'all tmrw ~ 🥳

Thanks to @robertnishihara and @anyscalecompute for inviting us 🤠


🚀 Check out the blog post by @YuzeMa5 for the story and tips on fine-tuning open-source models with @LeptonAI and @LangChainAI. Let us know what you think!


@Ray_Summit_Live @robertnishihara @anyscalecompute Just a heads-up: @jiayq will be giving the talk and showing a LIVE DEMO! 😝😝😝


@brittwalker_

@swyx

@SmolModels

@lqiao

@jiayq

@YuzeMa5

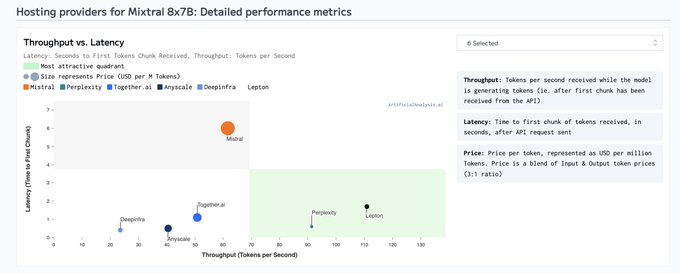

Based on what we are getting from

@ArtificialAnlys

, seems like we are doing pretty good 🥳


@YuzeMa5 Speaking of the demo mentioned in the talk, the demo is available at and the tutorial is at


@a13935257451 @jiayq We do have APIs that you can call with your workspace token (default access is rate limited, but you can let us know at info@lepton.ai for unlimited access).
