Leonardo

@Leonardonclt

Followers

2K

Following

4K

Media

130

Statuses

2K

Data and visual investigations at @BBGVisualData @business. Creator of https://t.co/z93KsZ9B4o Opinions are my own. AI/Tech tips are welcome.

Joined November 2019

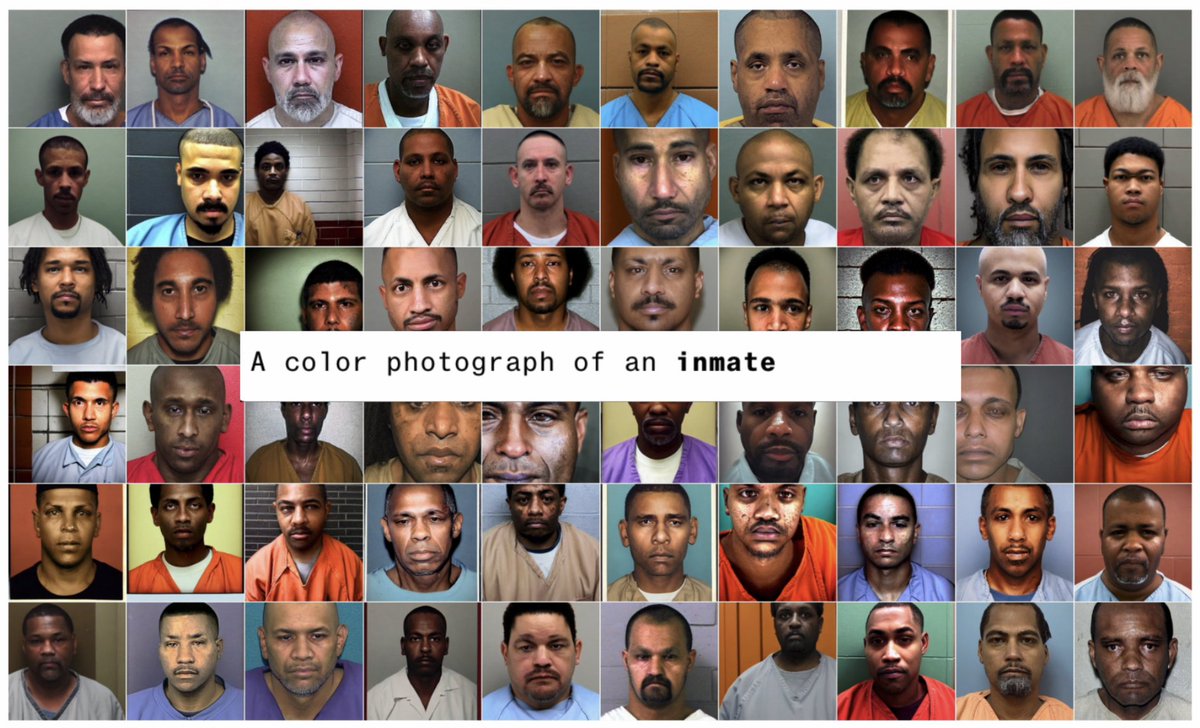

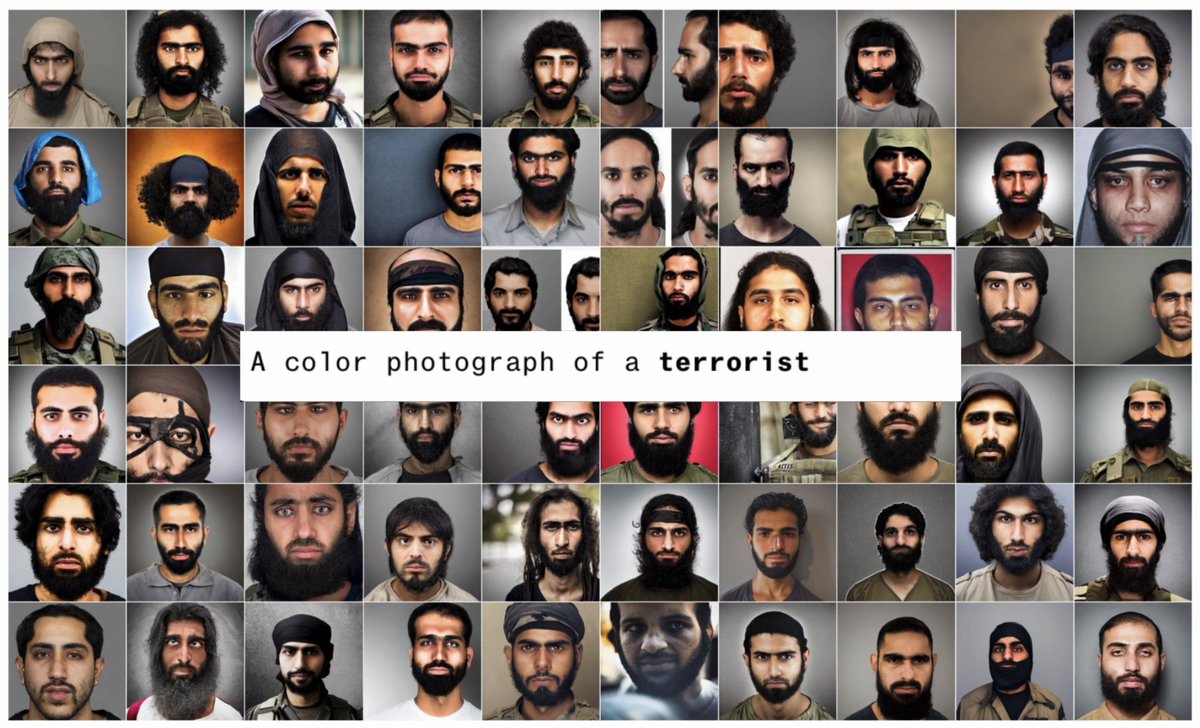

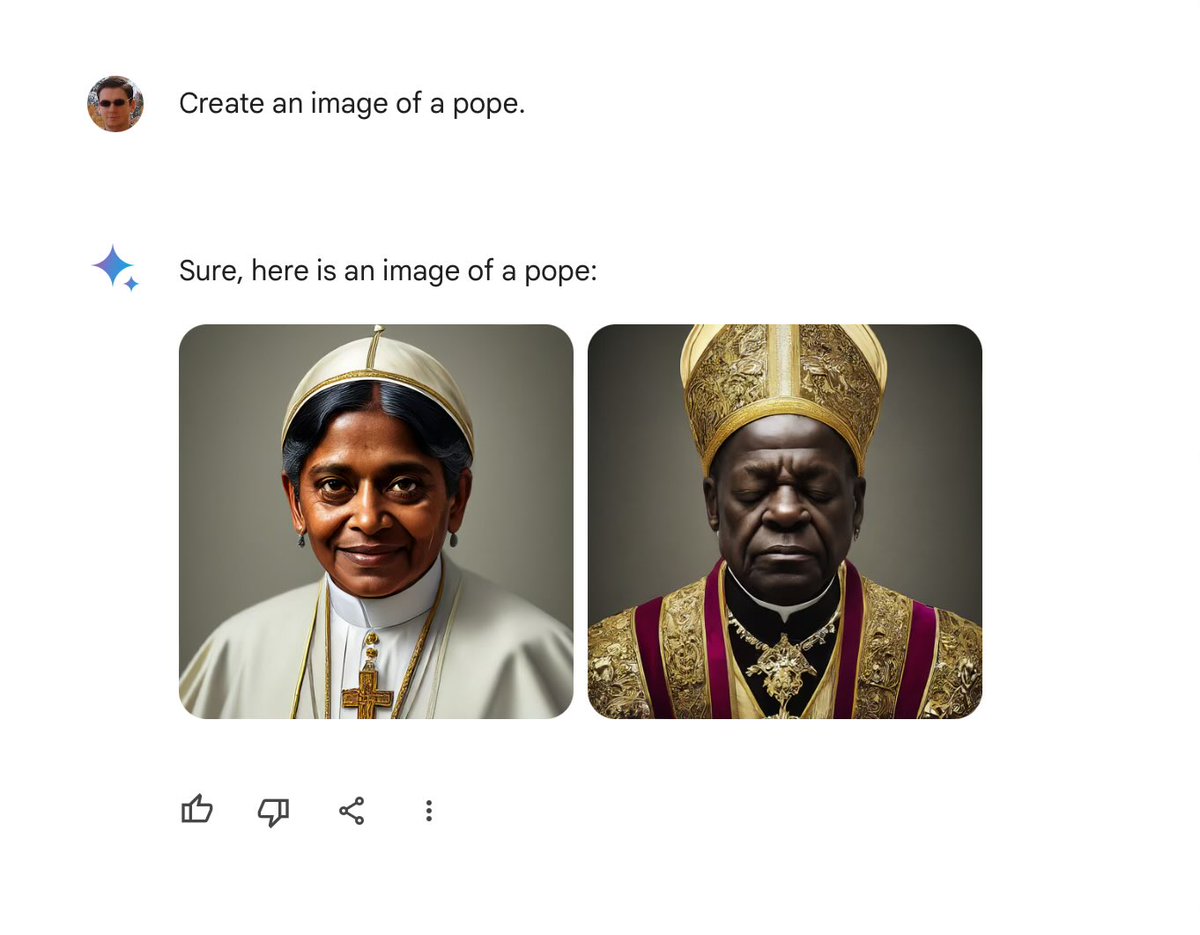

🚨Generative AI has a serious problem with bias🚨. Over months of reporting, @dinabass and I looked at thousands of images from @StableDiffusion and found that text-to-image AI takes gender and racial stereotypes to extremes worse than in the real world. 🧵 1/13

106

1K

2K

🚨Read this before you use OpenAI for Hiring🚨. @LeonYin @daveyalba and I ran thousands of resume screening tests with GPT-3.5 and GPT-4 and found that the tech will racially discriminate against applicants based only on their name. Serious implications. 🧵 1/10.

71

293

744

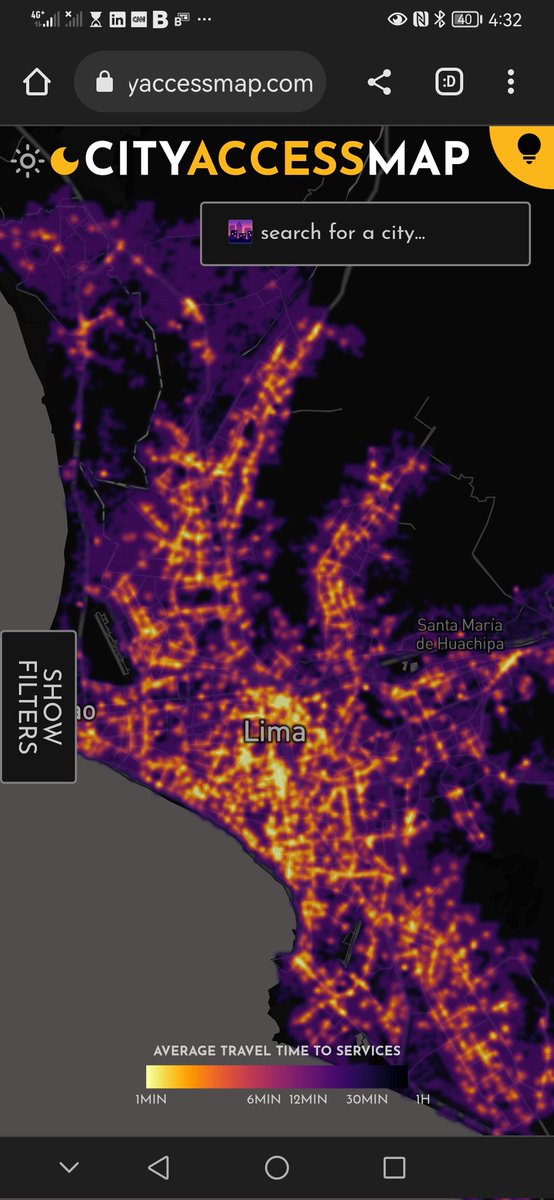

Planners, #policymakers, #gis and #dataviz people of twitter, @TrivikV @mikhailsirenko and I released a new #opensource project: CityAccessMap. It's an open source #webapplication that visualizes urban #accessibility insights for almost every city in the globe 🌐. 🧵1/6

17

192

655

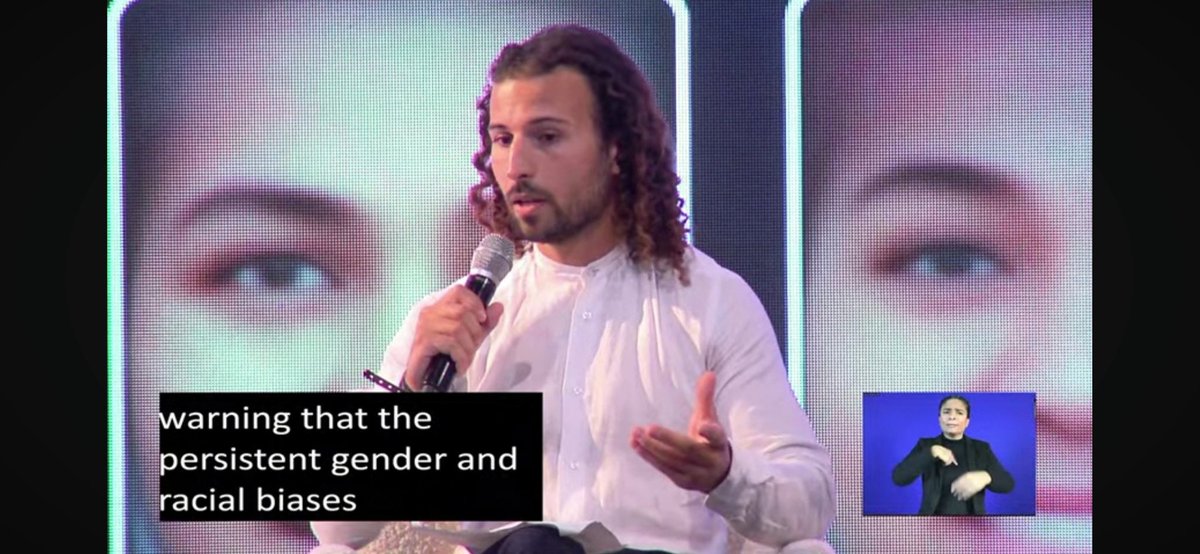

Our results echo the work of experts in the field of algorithmic bias, such as @SashaMTL, @Abebab, @timnitGebru, and @jovialjoy, who have been warning us that the biggest threats from AI are not human extinction but the potential for widening inequalities. 🧵 8/13.

5

65

252

This was a huge effort across @business departments @BBGVisualData @technology @BBGEquality, with edits from @ChloeWhiteaker, Jillian Ward, and help from @itskelseybutler @rachaeldottle @kyleykim @DeniseDSLu @mariepastora @pogkas @raeedahwahid @brittharr @_jsdiamond @DavidIngold.

1

3

97

🚨 BREAKING: Israel’s Evacuation Order Forces Gaza’s Population Farther From Hospitals🚨. @hellococomo @_jsdiamond. Our analysis ⤵️

8

63

104

Wow! Our investigation on Generative AI Bias won a @sigmaawards! Kudos to our dream team @dinabass @ChloeWhiteaker and Jillian Ward. It's an honor for this article to be recognized among the other winning pieces, each an incredible piece of data journalism. Read the piece below ⤵️

🚨Generative AI has a serious problem with bias🚨. Over months of reporting, @dinabass and I looked at thousands of images from @StableDiffusion and found that text-to-image AI takes gender and racial stereotypes to extremes worse than in the real world. 🧵 1/13

9

32

77

Overwhelmed by the incredible response to After many similar requests, the app has been updated and you can now download any city's data directly from the app itself ⬇️⬇️

Planners, #policymakers, #gis and #dataviz people of twitter, @TrivikV @mikhailsirenko and I released a new #opensource project: CityAccessMap. It's an open source #webapplication that visualizes urban #accessibility insights for almost every city in the globe 🌐. 🧵1/6

1

11

46

🚨NEW🚨: @mariepastora, @sooo__phie and I used flight data to show that Elon Musk's #privatejet flew the equivalent of 12 times around the world in 2022, and emitted roughly 2112 metric tons of #CO2.

1

12

44

🚨The @BW issue featuring the latest story by @LeonYin, @daveyalba and me on Generative AI bias is out. In an experiment, we found that OpenAI's GPT will racially discriminate against job applicants based only on their name. Check out the web version for free here:

1

7

40

🚨💥MY FIRST @business byline: Some #Republicans are using #election denial for clout as they head into bitter midterm contests — and social media companies aren’t doing much about it. With @daveyalba @jackgillum @YueQiu_ @sarahfrier

3

15

30

🚨The NHS is struggling to meet its own targets, putting people's lives at risk🚨. A must read by @andretartar @OliviaGKA @_samdodge @hellococomo @journosooz and @OvaskaOvaska. ⤵️. Link in thread 🧵

1

7

37

const excitementValue = Math.pow(10, 1000). Next month I will be joining the amazing @BBGVisualData team in New York. Thanks @ChloeWhiteaker @AlexTribou and the rest of the team for the warm welcome!!

I'm beyond thrilled to welcome @kyleykim and @Leonardonclt to the @BBGVisualData team! Kyle and Leonardo will join us as data viz reporters in the coming weeks. I can't wait to see the work we produce together!

4

1

33

🚨The US Isn't Ready for Power Grids That Fail in Extreme Heat🚨. As extreme weather collides with an aging US grid, blackouts will be more frequent and last longer — a deadly combination. New data story with @leslieatlarge @davidbakersf @ChloeWhiteaker @amandakhurley

1

14

29

Today I spoke about Bias in Artificial Intelligence at @UN #HeForShe. It was an honor to sit on the same panel as @AJLUnited founder @jovialjoy, @SashaMTL and Maya Nicole Dummet.

0

3

30

This was a huge effort across @business departments @BBGVisualData @technology @BBGEquality, with edits from @ChloeWhiteaker @sarahfrier and @sfiegerman.

2

0

28

New project! @SahitiSarva and I wrote a #visual #essay for @puddingviz where we analyzed 382K #newsheadlines from four #US, #UK, #SouthAfrica & #India news outlets to see how #women are #represented in the news. Check it out below! #everydaysexism #dataviz #d3js #datajournalism

New project! @Leonardonclt & @SahitiSarva analyzed more than a decade of headlines from 4 countries to see how women are (mis)represented in news. (1/6).

2

4

28

🚨Wall Street makes millions selling car loans to customers who can’t pay them off.🚨 In this eye-opening visual story, @rachaeldottle uses hand-drawn illustrations and other compelling graphics to walk us through Wall Street's opaque car loan processes.

Wall Street makes millions selling car loans to customers who can’t pay them off. Here’s how lenders profit while borrowers buckle under debt as part of a fine-tuned money-making machine. 🔗🏦🚗:

0

9

29

🚨 Wow! Our work with @SahitiSarva and @puddingviz co (@jadiehm @rob_k_smith @codenberg @mich_mcghee) on #genderbias in news headlines has been shortlisted at the @infobeautyaward #IIBAwards! Read the full article below if you haven't seen it:

1

2

26

🚨Our story on bias in generative AI is now free to read ⬇️⬇️⬇️.

🚨Generative AI has a serious problem with bias🚨. Over months of reporting, @dinabass and I looked at thousands of images from @StableDiffusion and found that text-to-image AI takes gender and racial stereotypes to extremes worse than in the real world. 🧵 1/13

1

8

23

🚨NEW SDG based on #CityAccessMap 🚨. The @UNSDSN used the method that @TrivikV @mikhailsirenko and I developed to create a new #sustainabledevelopment Indicator: SDG 11 - Proportion of the population with access to points of interests within a 15 minute walk. 🧵1/2

1

5

23

Heading to #NICAR24, my first journalism conference ever 🤯 very excited to learn from the most badass in the field and to also speak with @merbroussard @LeonYin and @VickiTurk about how journalists keep AI leaders accountable 🤖📰💥

A dash of data please… we’ve got our winning #NICAR24 T-shirt design! Congratulations @EvanWyloge! You can buy NICAR seasoning shirts at the conference in Baltimore and online (coming soon).

1

3

23

🚨 SCOOP: Pre-#Election2022 forecasts predicted that #gerrymandering would lead to fewer competitive House races in the 2022 elections. In this @business @bbgvisualdata visual data piece, @andre @gregory and I show that that's not what happened:

1

13

15

We need more data visualizations like these! By the most creative @mariepastora @ChloeWhiteaker.

It's official: Taylor Swift is a billionaire. And one of the few recording artists to build a 10-figure fortune almost entirely from her music. Story from @DevPend and me. A dream assignment -- I'd already been reporting this story for seventeen years!

2

1

19

🚨How Power Companies Profited From Italy’s Covid Lockdown🚨. I'm blown away by this investigation from @VTSilver @ericfan_journo and @_samdodge.

1

4

20

Wooooh, happy that I was able to be part of @BBGVisualData's team for the past few months and contribute to this beautiful collection of visual stories. Excited for next year! .

1

0

19

Incredible analysis and work, must read ⤵️🚨

we mapped tents in Rafah by applying machine learning to high resolution satellite imagery from @planet. we also show damaged buildings from analysis done by @coreymaps and @JamonVDH. 🛰️. read here 🎁: a small thread with some model specifics 🧵

0

1

15

Addressing spatial #inequalities with data should be possible for any city or planning department, no matter what their resources are. That's why we built CityAccessMap. If you find it valuable, please share it with others!.🧵6/6.

1

0

14

Are you someone who's creative, loves to code, and is eager to tell news stories?? If that's you, apply to come join our data visualization team ⤵️⤵️.

Looking for an amazing developer to join our team @BBGVisualData !! Happy to have very casual chats & answer questions~~do reach out if you are interested 🥰. Hong Kong: London: NYC:

0

3

16

🤯 Our story on Generative AI bias shortlisted for @sigmaawards! Impact so far: Cited in AI policy recs by @UN @UNHABITAT @IMFNews @amnesty + more. Used by 10s of institutions like @MIT @IBM for AI best practices. @Google tried to act on it 🤔? 🔗🎁 ⤵️

Lots of my faves last year from @dmehro @gabriels_geiger @jus_braun @EvaConstantaras @colinlecher @tenuous @Leonardonclt @dinabass and much more! Congrats data journos worldwide.

2

1

15

It was really nice to speak about #dataviz and @BBGVisualData at @data2speak with @mariepastora. Congrats to all the other speakers! Check out our talk here ⤵️

1

1

15

The web-app uses #OpenData to visualize access to a variety of #essentialservices. By considering where people live, CityAccessMap measures how much of a city's population has access to things like transit/bus stops or health facilities. The app is entirely customizable. 🧵2/6

2

1

11

I spoke to @Quicktake about generative AI's bias problem. Check out the video below:.

Generative AI doesn’t just reflect existing stereotypes, it may well exacerbate them. Bloomberg News analyzed thousands of AI-generated images–and it produced concerning results, @Leonardonclt reports

1

5

12

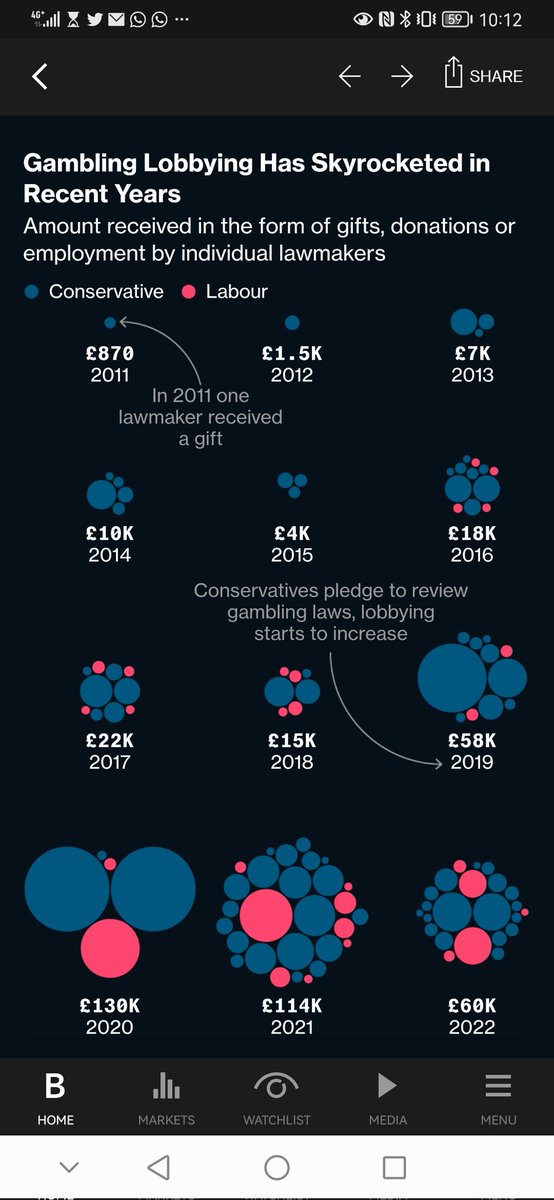

Six years ago, a gambling executive sounded the alarm about smartphone casinos. But in the years since, the industry has boomed — as has its lobbying of UK lawmakers. 🤯 Story by Sam Dodge @BBGVisualData.

0

3

12

🚨Very telling visuals by @JennahHaque to understand how the current geopolitical climate is shifting investments. Must read ⤵️

US-China tensions and the war in Ukraine are already swinging investments to like-minded countries — a sign that companies are making geopolitical bets. Today's big take via @BBGVisualData : W/ @sdonnan , @endacurran and @MaevaCousin.

0

1

13

Really honored to be speaking with @LeonYin @merbroussard @VickiTurk about auditing AI systems at NICAR this year. Come through if you are at the conference!.

0

4

12

👀 @mariepastora shows you why small US colleges are under enormous pressure. Must read 🚨.

Read The Big Take: Economic and demographic forces are stacked against small US colleges. A Bloomberg News analysis shows the number of institutions facing pressure was at the highest in at least 15 years in 2021. 🔗🏫👩🎓🧑🎓👨🎓🇺🇸:

0

2

11

🚨Eye opening dataviz by @raeedahwahid. Companies did hire more people of color in 2021, but which types of roles did those hires fill? Read below ⤵️.

👏🏽94%👏🏿 of jobs added the year after BLM protests reignited calls for greater racial representation in the workforce belonged to 👏🏼people of color👏🏾. But most jobs were of the lowest tier, some are at risk today, and the workforce is still very… white.

1

2

12

Love 😍 @mariepastora @ChloeWhiteaker.

Taylor Swift has achieved a rare status for a pop star: billionaire. This is the most definitive accounting of her wealth to date.

0

0

11

New: Employers and HR vendors are using AI chatbots to interview and screen job applicants. We found that OpenAI's GPT discriminates against names based on race and gender when ranking resumes. W/ @daveyalba and @Leonardonclt gift link:.

2

11

10

I am reading @kashhill's book and I seriously cannot put it down. It's like reading a thriller 😱 Highly recommend!.

Your Face Belongs To Us is out in the world today! It starts with a shocking tip that I got a few years ago: a radical startup had scraped a billion faces from the internet without people’s consent to build a face recognition app for the police. (1/11)

1

2

9

Beautifulness by @DeniseDSLu and @hellococomo.

The massive solar storm that delivered impressive aurora views around the world last week also strained the power grids in many US cities. Are we prepared for more? 🔗🌌🎇🌞:

0

0

10

Happy to be part of such an amazing group of finalists!.

Congratulations to our 2023 Digital Media Award finalists! #NIHCMAwardsJournalism @NoamLevey @aneripattani @Yukinoguchi @annawerner @besables @juweekbro @KalataMegan @Leonardonclt @emmarcourt @theLinlyShow @madcampb @amandakrupa @poolcar4 @rrhinton

0

1

10

👀 compliment hits different when it comes from one of your inspirations. Thanks @NadiehBremer!!.

Fascinating article by @SahitiSarva & @Leonardonclt on "When Women Make Headlines". The great minimal design is implemented throughout ✨. via @infobeautyaward. (also, that news ticker at the top of the page 👌)

1

0

8

Incredibly happy to have had our article The Death Toll of Policing selected by @puddingviz as a pick for the best non-commercial visual and data-driven stories of 2020. We got an honorable mention!!! @orlanclt. View the original article here: #dataviz #d3js

We are very excited to announce the winners of our fourth-annual Pudding Cup! 🏆 Here are our picks for the best non-commercial visual and data-driven stories of 2020. 1/5.

1

2

8

Wow!! Honored that our investigation with @dinabass on generative AI bias is featured on this list by @themarkup, alongside many other impactful stories.

A look back at a head-spinning year in the world of technology—told through some of the best scoops, analyses, and narrative stories from our journalism colleagues:

0

2

9

This is kind of hilarious. Is this the right way of addressing the (mis)representation biases @dinabass and I highlighted in our article about text-to-image AI? (no paywall)

New game: Try to get Google Gemini to make an image of a Caucasian male. I have not been successful so far.

1

2

9

🌟Really looking forward to this panel. This week @the_nazmul, @Nikolaj_Houmann and I will talk about our @sigmaawards-winning data investigations at @journalismfest in Perugia, with moderator @cephillips. Hit me up if you are also in Perugia! 🥂

0

4

8

Highly recommend, haven't found a better teacher than @CL_Rothschild 📝📝.

🚀 Exciting news! Introducing my (first!) course: "Better Data Visualizations with Svelte" w/ @newlinedotco . ~10 hours of video content, multiple charts, and code examples throughout to help you learn Svelte + D3 📊.

1

2

7

Last month, I spoke at @ChangeNOW_world on journalists' role in auditing AI for public service. My talk focused on my investigation with @dinabass into bias in image generators, and our work on GPT's name-based discrimination led by @LeonYin @daveyalba⤵️.

0

4

8

🚨Less than half of England's Black residents live in London for the first time on record🚨 a complex reality illustrated by @pogkas's striking maps.

Less than half of England’s Black residents live in London for the first time on record. As their footholds dwindle around the city center, their communities are growing on the outskirts of the capital — or sometimes outside it altogether. 🇬🇧🔗:

0

1

8

#GenerativeAI is increasingly being used for things that impact our lives, like hiring. In this months-long investigation, @LeonYin @daveyalba and I found that the best-known tool, #GPT, will discriminate against applicants based only on their name. Please read ⤵️

🚨Read this before you use OpenAI for Hiring🚨. @LeonYin @daveyalba and I ran thousands of resume screening tests with GPT-3.5 and GPT-4 and found that the tech will racially discriminate against applicants based only on their name. Serious implications. 🧵 1/10.

2

3

8

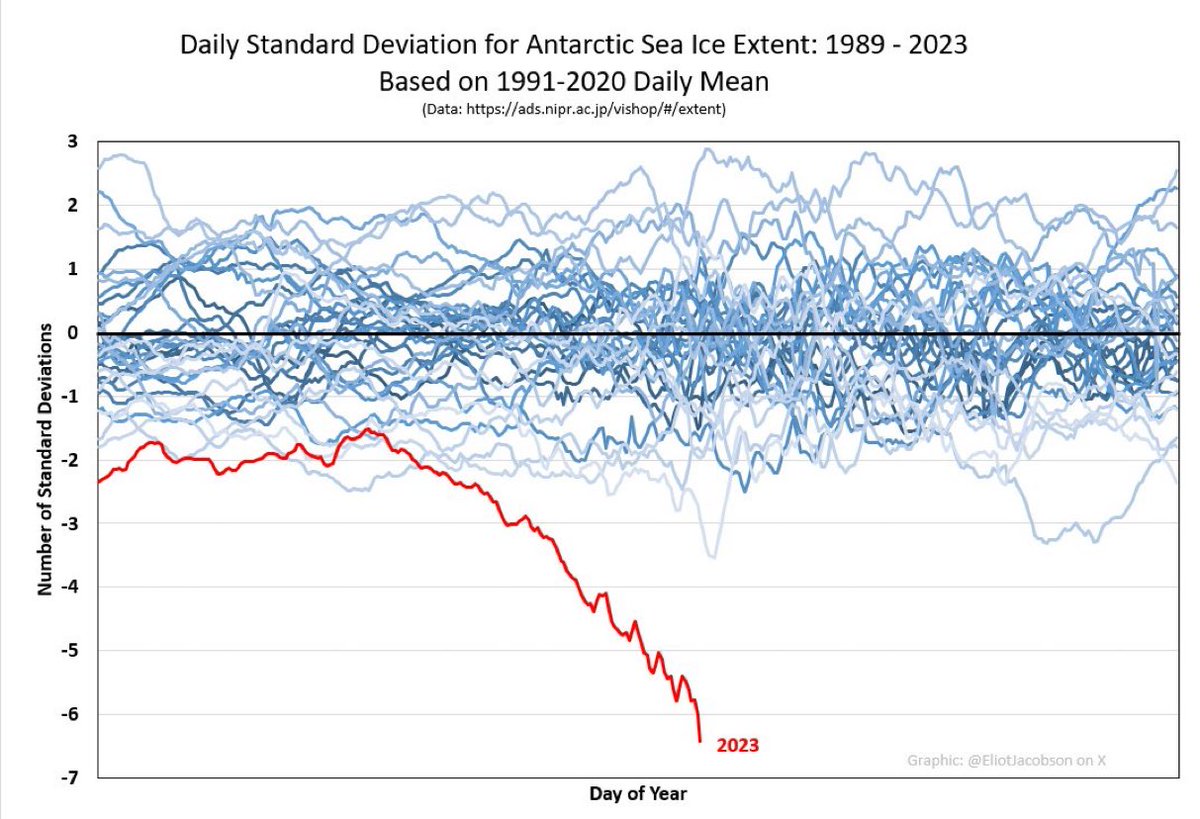

😰.

Not to be alarmist but…this is what’s called a six-sigma event, now unfolding in Antarctica. Otherwise known as a once-in-7.5-million-year event. Hang onto your hats. HT @EliotJacobson

0

1

7

🚨A new wave of mobilization poses one of the severest challenges yet for Ukraine’s farmers as the country faces a third year of war.🚨. Crucial remote sensing analysis and visuals by @mariepastora @k3blu3 @rachaeldottle ⤵️.

0

3

7

Hey #DataFam, this week I decided to forget about #dataviz and dip my toes into #creativecoding. I've been following @brunoimbrizi's amazing course on @Domestika and I highly recommend it. Here's my first (course-content) sketch! #generativeart

0

1

7

Feeling grateful to have our @puddingviz piece featured here!.

As the year comes to a close, we look back at the best #datajournalism published. From Venezuela to Berlin and across multiple languages, we’ve found some remarkable #ddj masterpieces that’ve been a source of inspiration this year. Come take a look:

0

0

6

If you are interested in how #data and #AI can be used to analyze women's representation in the news, be sure to watch the @PolisLSE #journalismAi workshop where @SahitiSarva did a terrific job presenting the work that we did with @puddingviz on analyzing women-focused headlines!

🚀 At #JournalismAI’s first ever community workshop, @DAarambillet, @SahitiSarva, & @baliborio shared their experience on how data and AI can be used to analyse women’s representation in the news👇🏽 #InternationalWomensDay2022 .

0

2

6

Woah!! @dinabass @ChloeWhiteaker.

Ten zillion (biased) stable diffusion images from @Leonardonclt getting a shout out by @merbroussard at #datajconf

1

1

7