Seongyun Lee

@sylee_ai

Followers

319

Following

2K

Media

20

Statuses

483

Research intern @LG_AI_Research | Ph.D. Student @kaist_ai | Researching on Alignment, Safety, Trustworthy AI and multimodality #NLProc

Joined August 2023

🎉 Excited to share that our paper "How Does Vision-Language Adaptation Impact the Safety of Vision Language Models?" has been accepted to #ICLR2025! 🖼 Vision-Language Adaptation empowers LLMs to process visual information—but how does it impact their safety? 🛡 And what about

1

16

61

Today we’re releasing research with @apolloaievals. In controlled tests, we found behaviors consistent with scheming in frontier models—and tested a way to reduce it. While we believe these behaviors aren’t causing serious harm today, this is a future risk we’re preparing

openai.com

Together with Apollo Research, we developed evaluations for hidden misalignment (“scheming”) and found behaviors consistent with scheming in controlled tests across frontier models. We share examples...

231

359

3K

We’re excited to introduce AB-MCTS! Our new inference-time scaling algorithm enables collective intelligence for AI by allowing multiple frontier models (like Gemini 2.5 Pro, o4-mini, DeepSeek-R1-0528) to cooperate. Blog: https://t.co/XikFY55AHb Paper: https://t.co/pnwLBL7Nbk

27

228

1K

🚨 Want models to better utilize and ground on the provided knowledge? We introduce Context-INformed Grounding Supervision (CINGS)! Training LLM with CINGS significantly boosts grounding abilities in both text and vision-language models compared to standard instruction tuning.

2

46

124

🚨 New Paper co-led with @bkjeon1211 🚨 Q. Can we adapt Language Models, trained to predict next token, to reason in sentence-level? I think LMs operating in higher-level abstraction would be a promising path towards advancing its reasoning, and I am excited to share our

4

43

168

[1/6] Ever wondered why Direct Preference Optimization is so effective for aligning LLMs? 🤔 Our new paper dives deep into the theory behind DPO's success, through the lens of information gain. Paper: "Differential Information: An Information-Theoretic Perspective on Preference

5

22

67

[CL] The CoT Encyclopedia: Analyzing, Predicting, and Controlling how a Reasoning Model will Think S Lee, S Kim, M Seo, Y Jo... [KAIST AI & CMU] (2025) https://t.co/T3i1qcKKT1

0

3

7

Does anyone want to dig deeper into the robustness of Multimodal LLMs (MLLMs) beyond empirical observations Happy to serve this exactly through our new #ICML2025 paper "Understanding Multimodal LLMs Under Distribution Shifts: An Information-Theoretic Approach"!

1

30

114

🚨 New preprint! Can small language models (sLMs) solve complex problems like LLMs? We show how to go beyond cloning reasoning—to distill tool-using agent behavior into sLMs as tiny as 0.5B. Meet Agent Distillation: 📄 https://t.co/whBmSl96Em Here's the details 🧵👇:

huggingface.co

2

27

130

The CoT Encyclopedia How to predict and steer the reasoning strategies of LLMs that use chain-of-thought (CoT)? More below:

7

66

311

🚨This week's top AI/ML research papers: - AlphaEvolve - Qwen3 Technical Report - Insights into DeepSeek-V3 - Seed1.5-VL Technical Report - BLIP3-o - Parallel Scaling Law for LMs - HealthBench - Learning Dynamics in Continual Pre-Training for LLMs - Learning to Think - Beyond

5

170

1K

Glad to share that our AgoraBench paper has been accepted at @aclmeeting 2025 (main)! Special thanks to our coauthors @scott_sjy @xiangyue96 @vijaytarian @sylee_ai @yizhongwyz @kgashteo Carolin @wellecks @gneubig! A belief I hold more firmly now than when I started this project

#NLProc Just because GPT-4o is 17 times more expensive than GPT-4o-mini, does that mean it generates synthetic data 17 times better? Introducing the AgoraBench, a benchmark for evaluating data generation capabilities of LMs.

1

11

65

🏆Glad to share that our BiGGen Bench paper has received the best paper award at @naaclmeeting! https://t.co/avEvuskDPt 📅 Ballroom A, Session I: Thursday May 1st, 16:00-17:30 (MDT) 📅 Session M (Plenary Session): Friday May 2nd, 15:30-16:30 (MDT) 📅 Virtual Conference: Tuesday

🤔How can we systematically assess an LM's proficiency in a specific capability without using summary measures like helpfulness or simple proxy tasks like multiple-choice QA? Introducing the ✨BiGGen Bench, a benchmark that directly evaluates nine core capabilities of LMs.

10

23

132

Presenting ✨Knowledg Entropy✨ at #ICLR2025 today in Oral 5C(Garnet 216-218) at 10:30AM and in Poster 6(#251) from 3:00PM We investigated how changes in a model's tendency to integrate its parametric knowledge during pretraining affect knowledge acquisition and forgetting

🎉 Excited to share that Knowledge Entropy has been accepted to #ICLR2025 as an oral presentation! Check out if you are interested in why LLMs lose their ability to acquire new knowledge during pretraining. See you in Singapore!

1

9

46

So excited to drop PaperCoder, a multi-agent LLM system that turns ML papers into full codebases. It looks like this:📄 (papers) → 🧠 (planning) → 🛠️ (full repos), all powered by 🤖. Big thanks to @_akhaliq for the shoutout! Paper:

arxiv.org

Despite the rapid growth of machine learning research, corresponding code implementations are often unavailable, making it slow and labor-intensive for researchers to reproduce results and build...

2

18

68

Paper2Code Automating Code Generation from Scientific Papers in Machine Learning

13

232

1K

#NLProc New paper on "evaluation-time scaling", a new dimension to leverage test-time compute! We replicate the test-time scaling behaviors observed in generators (e.g., o1, r1, s1) with evaluators by enforcing to generate additional reasoning tokens. https://t.co/Qaxhdap52S

3

38

173

Paper: https://t.co/0k7n189iiZ A special thanks to my wonderful co-authors for their contributions to this work! @GeewookKim @jiyeonkimd @hyunji_amy_lee @hoyeon_chang @suehpark @seo_minjoon

arxiv.org

Vision-Language adaptation (VL adaptation) transforms Large Language Models (LLMs) into Large Vision-Language Models (LVLMs) for multimodal tasks, but this process often compromises the inherent...

0

0

7

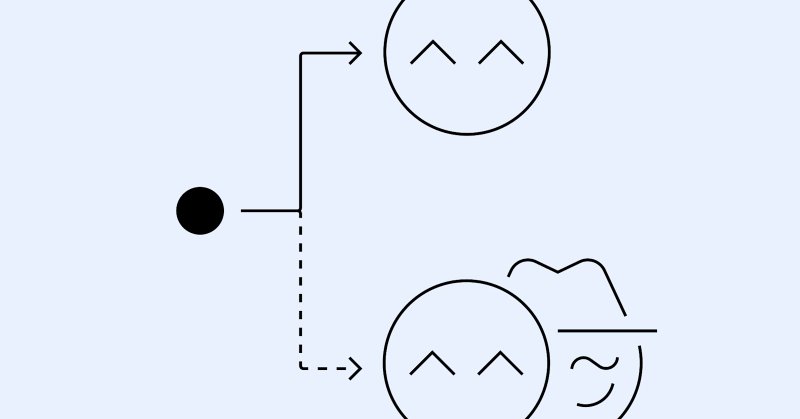

Our key contributions are as follows: 1. We perform a series of experiments to identify that safety degradation during VL adaptation stems from the adaptation process itself, not just the quality of training data. 2. We assess existing safety tuning methods (safety SFT and

1

0

3

Method: Model weight merging for safe and helpful LVLM We develop model weight merging as an effective and cost-efficient approach to mitigate safety degradation while maintaining the helpfulness of LVLMs. Model weight merging is particularly beneficial when the objectives of

1

0

3