Sasha Doubov

@sashadoubov

Followers

478

Following

1,328

Media

19

Statuses

138

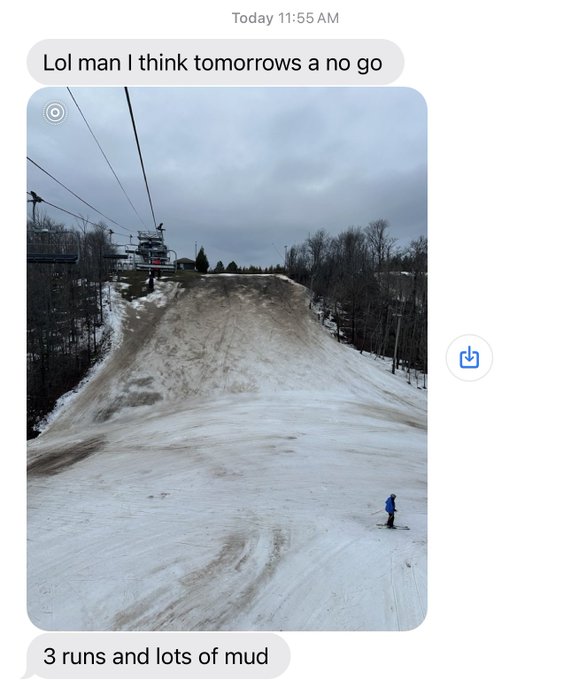

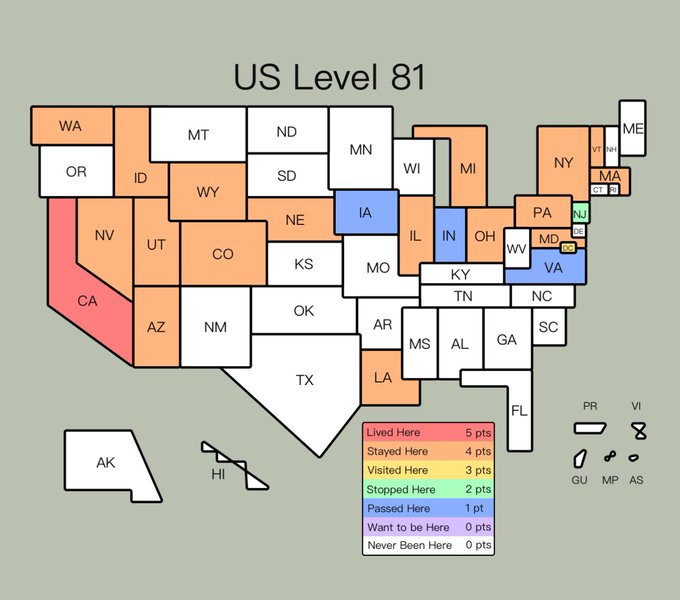

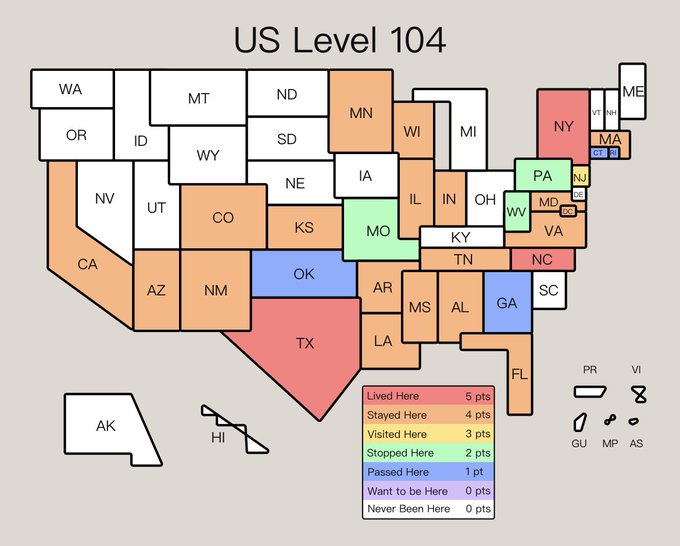

research scientist @mosaicml | ML research + skiing | studied @uoft & @uwaterloo

toronto/sf

Joined February 2020

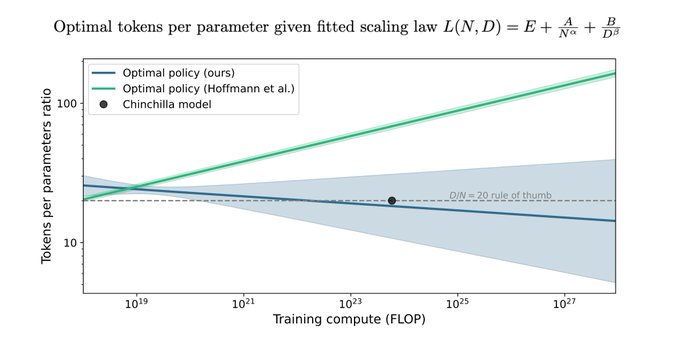

we are in the csi miami "enhance" era of reproducibility research

2

8

88

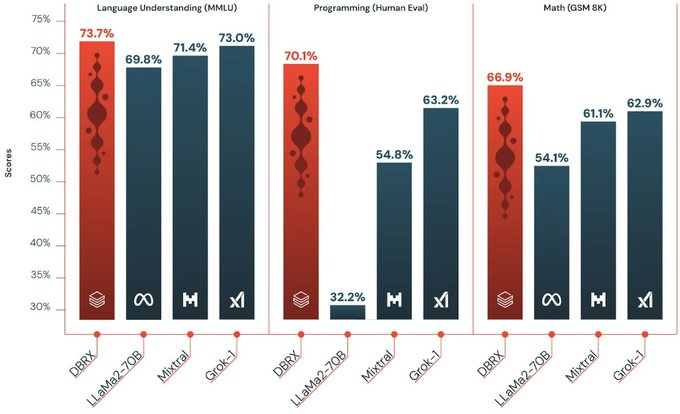

it’s been such a blast getting to work on this model! so excited that the world gets to check it out now 👀

2

5

66

not squashing commits on a PR really helped further my cause… ty @Tgale96 for letting us stand on the shoulder of giants

0

0

10

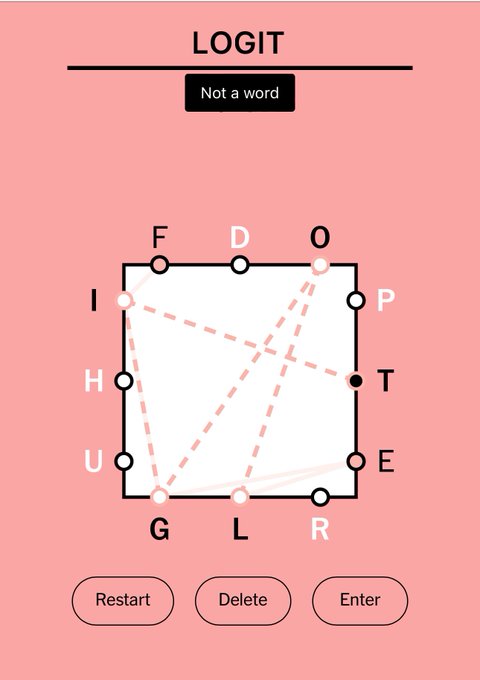

claude 3 confirmed shape rotator

0

0

9

.@veritasium's new video may or may not make you halt what you're doing, but it is certain to move you.

Beautiful take on Godel's incompleteness theorems and the halting problem.

0

0

3

@rajko_rad

palisades in Tahoe! specifically the alpine side, you get an amazing view of the lake

0

0

2

@untitled01ipynb

The main use-case I found was downloading the data from this chart as a csv and then plotting elsewhere…

0

0

2

@ninklefitz

@jefrankle

I have been smuggling ketchup chips and sortilège to encourage this program

0

0

1

@aidangomezzz

I used to go to Williams as a student, but that was more bc of convenience/location rather than it being the best 🙃 probably some nicer places uptown tho

1

0

1