Arda Senocak

@ardasnck

Followers: 220

Following: 6K

Media: 30

Statuses: 233

Assistant Professor, UNIST https://t.co/zewMlmFRZ0

Daejeon, Republic of Korea

Joined July 2010

Happy to be listed as an Outstanding Reviewer again #ICCV2025 🌟

There’s no conference without the efforts of our reviewers. Special shoutout to our #ICCV2025 outstanding reviewers 🫡 https://t.co/WYAcXLRXla

[2] How Far Can We Go With Synthetic Data for Audio-Visual Sound Source Localization?, Arda Senocak*, Sooyoung Park*, Tae-Hyun Oh, Joon Son Chung

[1] Seeing Through Touch: Tactile-Driven Visual Localization of Material Regions, Seongyu Kim, Seungwoo Lee, Hyeonggon Ryu, Joon Son Chung, Arda Senocak

Thanks for sharing our work @_akhaliq 🤩 Code is coming very soon 🐍🎙️

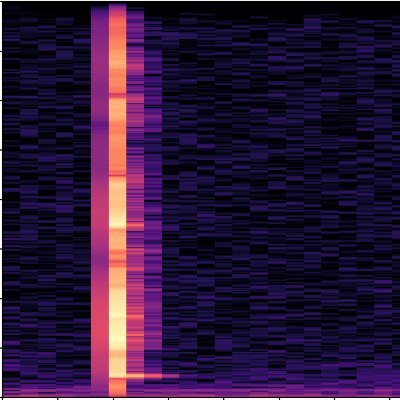

Audio Mamba: Bidirectional State Space Model for Audio Representation Learning

Transformers have rapidly become the preferred choice for audio classification, surpassing methods based on CNNs. However, Audio Spectrogram Transformers (ASTs) exhibit quadratic scaling

"Audio Mamba: Bidirectional State Space Model for Audio Representation Learning," Mehmet Hamza Erol, Arda Senocak, Jiu Feng, Joon Son Chung

PS: 👏 A big shoutout to @guy_yariv for his great work AudioToken (Interspeech 2023).

Finally, we pair a single image with different object sounds, highlighting our method's interactive sound localization power 🎶

We qualitatively compared our method to a text-conditioned open-world segmentation model🧐 Results suggest that sound sources aren't always well localized with text info🤔 But our audio-visual correspondence-based model excels in pinpointing sounding objects! 🎯

Our method shines in extensive experiments, surpassing state-of-the-art approaches by a wide margin! 🚀 🏆 Check out these qualitative results too! Our model produces precise, compact localization maps for sounding objects 🎯

Our method:
1. Converts audio to CLIP-compatible tokens for audio-driven embeddings.
2. Creates audio-grounded masks for the audio embeddings.
3. Extracts image features from highlighted regions, aligning them with audio embeddings using audio-visual correspondence.
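
To make these three steps a bit more concrete, here is a minimal pure-Python sketch. It is not the paper's implementation. It assumes the audio has already been mapped to an embedding in the same space as the image patch features (the CLIP-compatible token step is not reproduced here), and the names `audio_grounded_mask`, `masked_pool`, `correspondence_score`, as well as the `temperature` value, are hypothetical and chosen only for illustration.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def audio_grounded_mask(patch_feats, audio_emb, temperature=0.1):
    """Soft mask over image patches: sigmoid of the scaled patch-audio similarity.

    The sigmoid and the temperature are illustrative assumptions.
    """
    sims = [cosine(p, audio_emb) for p in patch_feats]
    return [1.0 / (1.0 + math.exp(-s / temperature)) for s in sims]


def masked_pool(patch_feats, mask):
    """Mask-weighted average of patch features (the 'highlighted region' feature)."""
    total = sum(mask)
    dim = len(patch_feats[0])
    return [
        sum(m * p[d] for m, p in zip(mask, patch_feats)) / total
        for d in range(dim)
    ]


def correspondence_score(patch_feats, audio_emb):
    """Similarity between the audio embedding and the masked image feature."""
    mask = audio_grounded_mask(patch_feats, audio_emb)
    pooled = masked_pool(patch_feats, mask)
    return cosine(pooled, audio_emb), mask
```

In a training setup along these lines, matching image-audio pairs would be pushed toward a higher `correspondence_score` than mismatched pairs, and the mask itself would serve as the localization map.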

Can a Foundation Model Help Alignment? 🤔 We aimed to bring CLIP's robust multi-modal alignment to audio-visual correspondence 🌟 without any explicit text input, just pure audio-visual correspondence!

Introducing our new #WACV2024 paper!🎉 📝 : https://t.co/JbFyRbRidN 🤗@huggingface Demo: https://t.co/HFPxkCNKK6 Wed. 5th (Today) 8:00PM-10:00PM "Can CLIP Help Sound Source Localization?"

There's one more goodie in the paper. By synthetically pairing a single image with various sounds from objects present in a scene, we showcase our method's strength in interactive sound localization. We observe a clear edge over competing methods!

Cross-modal semantic alignment is important in understanding semantically mismatched audio-visual events, e.g., silent objects and offscreen sounds. Our method performs better than the competing methods in false positive detection, as this task also requires cross-modal interaction.

We put all existing sound localization methods to the test in the cross-modal retrieval task. Thanks to our robust cross-modal alignment, we outshine other state-of-the-art methods 🌟 High sound localization performance doesn't always translate to superior cross-modal retrieval!
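
For readers unfamiliar with cross-modal retrieval, a rough sketch of how such a test can be scored is below. The thread does not say which metric was used; recall@k is a common choice and is an assumption here, as are the function name `recall_at_k` and the convention that audio query `i` matches image `i`.

```python
def recall_at_k(similarity, k):
    """Fraction of audio queries whose matching image is ranked in the top k.

    similarity[i][j] is the score between audio query i and image j.
    Assumption for illustration: the ground-truth match of query i is image i.
    """
    hits = 0
    for i, row in enumerate(similarity):
        # Rank image indices from most to least similar to this audio query.
        ranked = sorted(range(len(row)), key=lambda j: row[j], reverse=True)
        if i in ranked[:k]:
            hits += 1
    return hits / len(similarity)
```

A model can produce sharp localization maps and still rank the wrong image first for a given sound, which is why a retrieval score like this is a separate check on alignment.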

The current evaluation settings do not capture the true sound source localization ability. We propose two auxiliary evaluation tasks stemming from the cross-modal alignment task: 1️⃣ Interactive sound localization 2️⃣ Cross-modal retrieval
