Wei Lin @ CVPR 2025

@WeiLinCV

Followers

126

Following

175

Media

13

Statuses

68

Research associate @ ELLIS Unit, LIT AI Lab, Institute for Machine Learning, JKU Linz. Collab with MIT-IBM Watson AI Lab. PhD@TU Graz

Graz, Austria

Joined January 2022

🚨 New @ICCVConference 2025 paper! Can GPT-4o actually localize an object from just a few examples? Turns out not really. In our @ICCVConference paper, we propose a simple fix: teach it from video tracking data. Results? Better few-shot localization, stronger context grounding.

IPLOC accepted to ICCV25 ☺️ Thanks to all the people that were part of it 🩷. The idea for this paper came by a lake during a visit to Graz for a talk. It has traveled with me through too many countries and too many wars, and it’s now a complete piece of work.

0

0

4

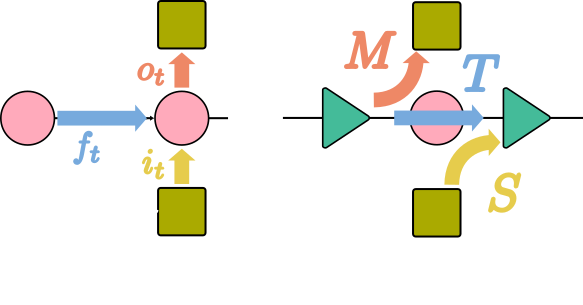

Check out our new work pLSTM, which brings the power of linear RNNs to arbitrary DAGs and multi-dimensional data, enabling parallel computation and long-range modeling. It outperforms Transformers on extrapolation tasks and handles images, graphs, and grids with remarkable efficiency.

Ever wondered how linear RNNs like #mLSTM (#xLSTM) or #Mamba can be extended to multiple dimensions? Check out "pLSTM: parallelizable Linear Source Transition Mark networks". #pLSTM works on sequences, images, (directed acyclic) graphs. Paper link:

0

0

4

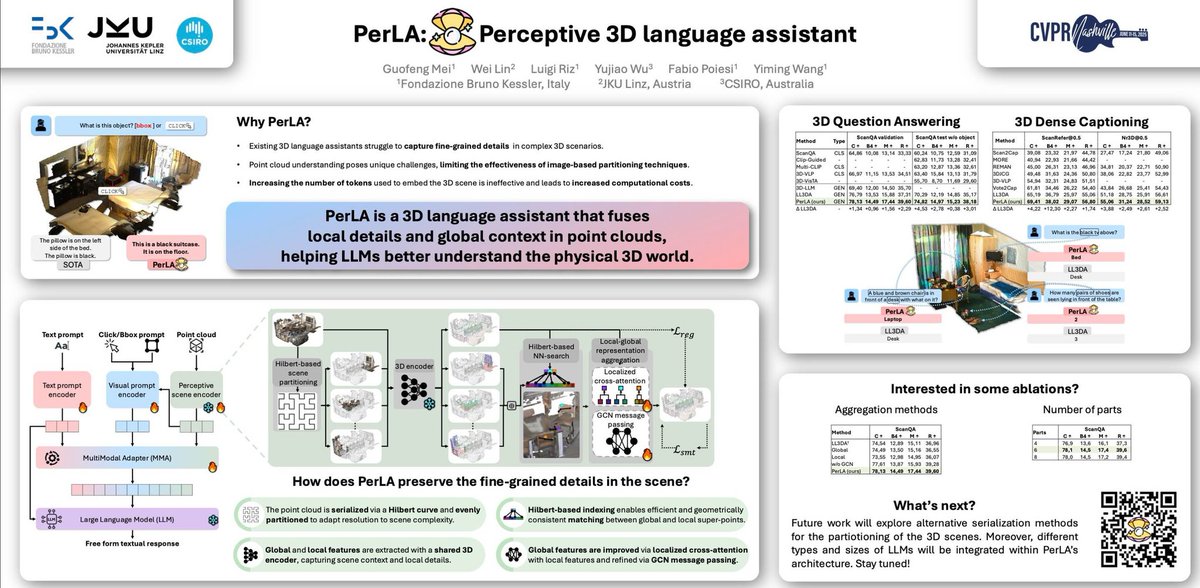

Check out our poster and talk with Guofeng at #355 in ExHall D. PerLA is our new 3D language assistant that helps LLMs better understand the physical world! PerLA fuses local details and global context from point clouds using cross-attention + GNNs, and achieves SOTA on 3D bench

1

2

6

RT @roeiherzig: 🚨 Our panel kicks off at 11:30 AM in Room 207 A–D (Level 2)! Don't miss an amazing discussion with: Ludwig Schmidt, Andre…

0

3

0

Our MMFM Panel Discussion "What is Next in Multimodal Foundation Models?" will happen at 11:30am in room 207 A-D. Moderator: Roei Herzig (UC Berkeley). Panelists: Ludwig Schmidt, Andrew Owens, Arsha Nagrani, Ani Kembhavi. @MMFMWorkshop @CVPR

0

1

2

Our LiveXiv is "live" at ICLR2025!! Come and check it out at Poster session #356 in Hall 3!! @NimrodShabtay @iclr_conf

0

0

2

Our work LiveXiv will be presented at ICLR 2025 TODAY, April 25th, from 10:00 to 12:30 (Poster #356)!! 🚀🚀 LiveXiv is a challenging, maintainable, and contamination-free scientific multi-modal live dataset, designed to set a new benchmark for Large Multimodal Models (LMMs).

LiveXiv will be "live" at #ICLR2025: Friday, April 25th, 10:00-12:30, Poster #356. @RGiryes @felipemaiapolo @LChoshen @WeiLinCV @jmie_mirza @leokarlin @ArbelleAssaf @SivanDoveh

0

0

5

Excited to share our work PerLA, accepted at CVPR 2025! 🎉 PerLA enhances 3D scene understanding by integrating fine details with global context, improving accuracy in 3D QA and dense captioning while reducing hallucinations. Check out the details: 🔗Project

🎉 PerLA has been accepted at CVPR 2025! 🎉 🔹 What? A Large Language Model for 3D scene understanding, combining global context with object details for accurate, context-aware responses. 📄 More: #CVPR2025 #AI #3DUnderstanding #3DLanguageAssistant

0

0

5

🚀 We are excited to announce the 3rd Workshop on "What is Next in Multimodal Foundation Models?" at CVPR 2025 in Nashville! 🎉 📅 Paper Submission Deadlines: 🔹 Archival: Mar 14, 2025 🔹 Non-archival: Mar 21, 2025. Join us as we explore the future of multimodal foundation models 🤖.

🚀 Call for Papers – 3rd Workshop on Multi-Modal Foundation Models (MMFM) @CVPR! 🚀 🔍 Topics: Multi-modal learning, vision-language, audio-visual, and more! 📅 Deadline: March 14, 2025. 📝 Submit your paper: 🌐 More details:

0

1

7