Sahil Verma

@Sahil1V

Followers

586

Following

6K

Media

17

Statuses

583

PhD student @uwcse. Robustness and Interpretability. Currently at @MSFTResearch. Former intern at @amazon, @itsArthurAI. Undergrad @IITKanpur

Seattle, WA

Joined September 2013

Glad to share that our paper was accepted the main EMNLP 2025 Conference!.

🚨 New Paper! 🚨.Guard models slow, language-specific, and modality-limited?. Meet OmniGuard that detects harmful prompts across multiple languages & modalities all using one approach with SOTA performance in all 3 modalities!! while being 120X faster 🚀.

4

7

69

RT @soumyesinghal: Llama Nemotron model just got Super-Charged ⚡️We released Llama-Nemotron-Super-v1.5 today! The best open model that can….

huggingface.co

0

7

0

RT @_shruti_joshi_: I will be at the Actionable Interpretability Workshop (@ActInterp, #ICML) presenting *SSAEs* in the East Ballroom A fro….

0

6

0

RT @fengyao1909: 😵💫 Struggling with 𝐟𝐢𝐧𝐞-𝐭𝐮𝐧𝐢𝐧𝐠 𝐌𝐨𝐄?. Meet 𝐃𝐞𝐧𝐬𝐞𝐌𝐢𝐱𝐞𝐫 — an MoE post-training method that offers more 𝐩𝐫𝐞𝐜𝐢𝐬𝐞 𝐫𝐨𝐮𝐭𝐞𝐫 𝐠𝐫𝐚𝐝𝐢𝐞….

0

60

0

RT @avibose22: 🚨 Code is live! Check out LoRe – a modular, lightweight codebase for personalized reward modeling from user preferences. 📦 F….

github.com

Code to reproduce results of our experiments using LoRe - facebookresearch/LoRe

0

6

0

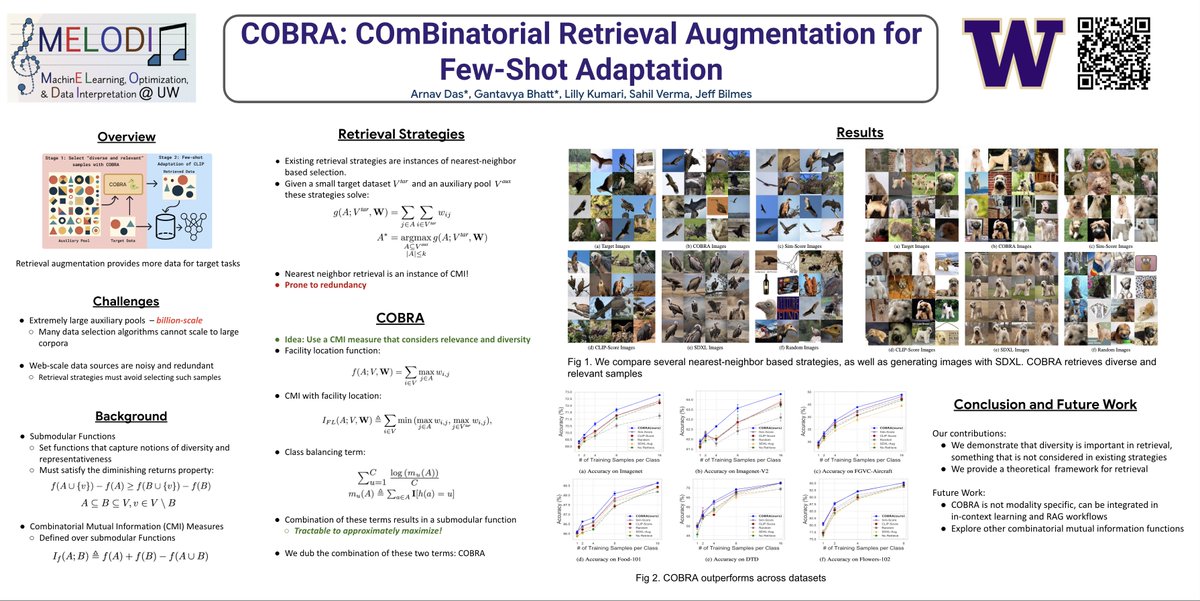

Using retrieval? --> check out this work by my awesome collaborator on how to increase diversity when retrieving!.

1/8 🚀 How can retrieval augmentation be made both relevant and non-redundant for few-shot adaptation? I'm excited to introduce COBRA. Catch our poster at #CVPR25 (ExHall D, Poster #450) on Sat 14 Jun, 5–7 p.m. CDT:

0

2

7

RT @fengyao1909: 🔥 "Vibe coding" is everywhere—but is it really care-free?. We introduce 𝐑𝐞𝐚𝐋, an RL framework that trains LLMs with automa….

0

41

0

Joint work with awesome collaborators:.@keeghin, @jbilmes, @_siskac, @LukeZettlemoyer,.@hila_gonen, and @csinva. Also huge thanks to @arnaved, @butcher_jasper, @BhattGantavya, @zvez11, @faeze_brh, @MakeshNarsimhan, @soumyesinghal, @_shruti_joshi_ for helpful discussions.

0

0

7

RT @jinaycodes: Introducing soarXiv ✈️, the most beautiful way to explore human knowledge. Take any paper's URL and replace arxiv with soar….

0

1K

0

RT @soumyesinghal: ⚡⚡ Llama-Nemotron-Ultra-253B just dropped: our most advanced open reasoning model.🧵👇

0

13

0

RT @soumyesinghal: ⚡️ Llama-Nemotron-Ultra is fully open — weights and post-training data. Achieves 76.0% on GPQA via FP8 RL training with….

0

4

0

RT @soumyesinghal: 🚀 Meet Llama-Nemotron-Super-49B, our team’s new reasoning model released at #GTC25! Proud to have contributed 🧠. Optimiz….

huggingface.co

0

4

0

RT @snehaark: Come for the ridiculous 30 column spreadsheet created at @sweetgreen, stay for a critical discussion of how you *actually* sc….

0

3

0

RT @_shruti_joshi_: 1\ Hi, can I get an unsupervised sparse autoencoder for steering, please? I only have unlabeled data varying across mul….

0

10

0