Yi-Chern

@yichern_tan

Followers

218

Following

220

Media

2

Statuses

23

lead, post-training @cohere. previously @Waymo @Facebook @Yale. 🇸🇬

San Francisco, CA

Joined September 2022

RT @cohere: We’re excited to announce $500M in new funding to accelerate our global expansion and build the next generation of enterprise A…

0

49

0

check out how we use system prompt learning to reverse engineer human preferences!

Thrilled to share our new preprint on Reinforcement Learning for Reverse Engineering (RLRE) 🚀. We demonstrate that human preferences can be reverse engineered effectively by pipelining LLMs to optimise upstream preambles via reinforcement learning 🧵⬇️

0

1

17

1313 in 13th position after launching on 13th mar.

🚀 Big news @cohere's latest Command A now climbs to #13 on Arena! Another organization joining the top-15 club - congrats to the Cohere team!
Highlights:
- open-weight model (111B)
- 256K context window
- $2.5/$10 input/output MTok
More analysis👇

1

6

49

gpt-4o perf on enterprise and stem tasks, >deepseek-v3 on many languages including chinese human eval, >gpt-4o on enterprise rag human eval. 2 gpus 256k context length, 156 tops at 1k context, 73 tops at 100k context. this is your workhorse.

Today @cohere is very excited to introduce Command A, our new model succeeding Command R+. Command A is an open-weights 111B parameter model with a 256k context window focused on delivering great performance across agentic, multilingual, and coding usecases. 🧵

1

10

54

RT @cohere: We’re excited to release Command R7B Arabic – a compact open-weights AI model optimized to deliver state-of-the-art Arabic lang…

0

53

0

Let's rethink how LLMs learn from mistakes 📚 Look out for this new work led by @LisaAlazraki while @cohere:

arxiv.org

Showing incorrect answers to Large Language Models (LLMs) is a popular strategy to improve their performance in reasoning-intensive tasks. It is widely assumed that, in order to be helpful, the...

Do LLMs need rationales for learning from mistakes? 🤔 When LLMs learn from previous incorrect answers, they typically observe corrective rationales explaining each mistake. In our new preprint, we find these rationales do not help, in fact they hurt performance! 🧵

1

3

10

Very happy to share Command R7B, our smallest and final model in the R series. It's an all-rounder and great for agentic workflows in a small package. I'm at #NeurIPS2024, happy to chat post-training generally capable agents, evals, synthetic data, and what we do at cohere!

Introducing Command R7B: the smallest, fastest, and final model in our R series of enterprise-focused LLMs! It delivers a powerful combination of state-of-the-art performance in its class and efficiency to lower the cost of building AI applications.

1

12

65

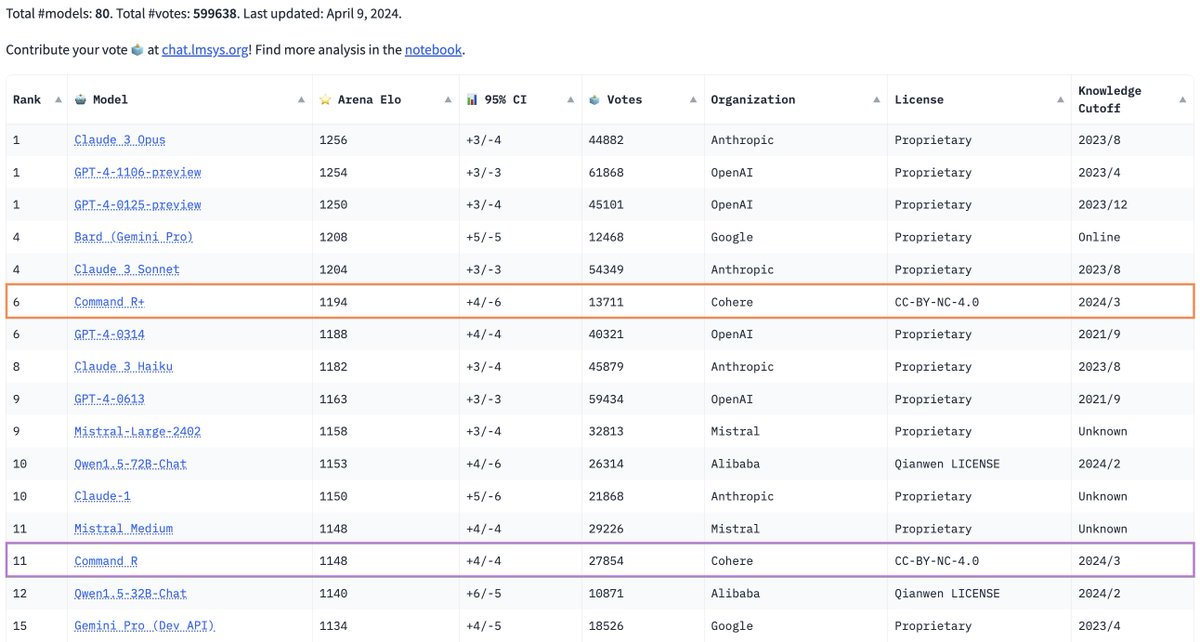

🏟Command R+ is 6th in the arena leaderboard, as the first open-weights model to surpass earlier versions of GPT-4. 🤔No RAG in the arena yet! Download at or try via @cohere's API with the @CohereForAI Research Grant Program

1

6

45

➕more weights here, released on 🤗. For the fullest Command R+ experience with RAG, grounded generations, and citations, go to

⌘R+. Welcoming Command R+, our latest model focused on scalability, RAG, and Tool Use. Like last time, we're releasing the weights for research use, we hope they're useful to everyone!

0

3

29

We’re not done yet,➕more soon.

[Arena Update] @cohere's Command R is now top-10 in Arena leaderboard🔥. It's now one of the best open models reaching the level of top proprietary models. We find the model great at handling longer context, which we plan to separate as a new category in Arena very soon.

1

1

26

RT @aidangomez: ⌘-R. Introducing Command-R, a model focused on scalability, RAG, and Tool Use. We've also released the weights for research…

cohere.com

Command R is a scalable generative model targeting RAG and Tool Use to enable production-scale AI for enterprise.

0

182

0

Excited to share what we've been working on - create a chatbot on top of LLMs with any persona you want using conversant!

(1/2) Large language models' (LLM) generative capabilities can be used to create immersive dialogue agents (i.e., chatbots) for a wide variety of applications. However, you'll also need to manage chat logs, write LLM prompts, and keep track of facts. ↓

0

2

29