USC RESL

@uscresl

Followers: 328

Following: 23

Media: 36

Statuses: 93

Robotic Embedded Systems Laboratory @USC

Los Angeles, CA

Joined June 2009

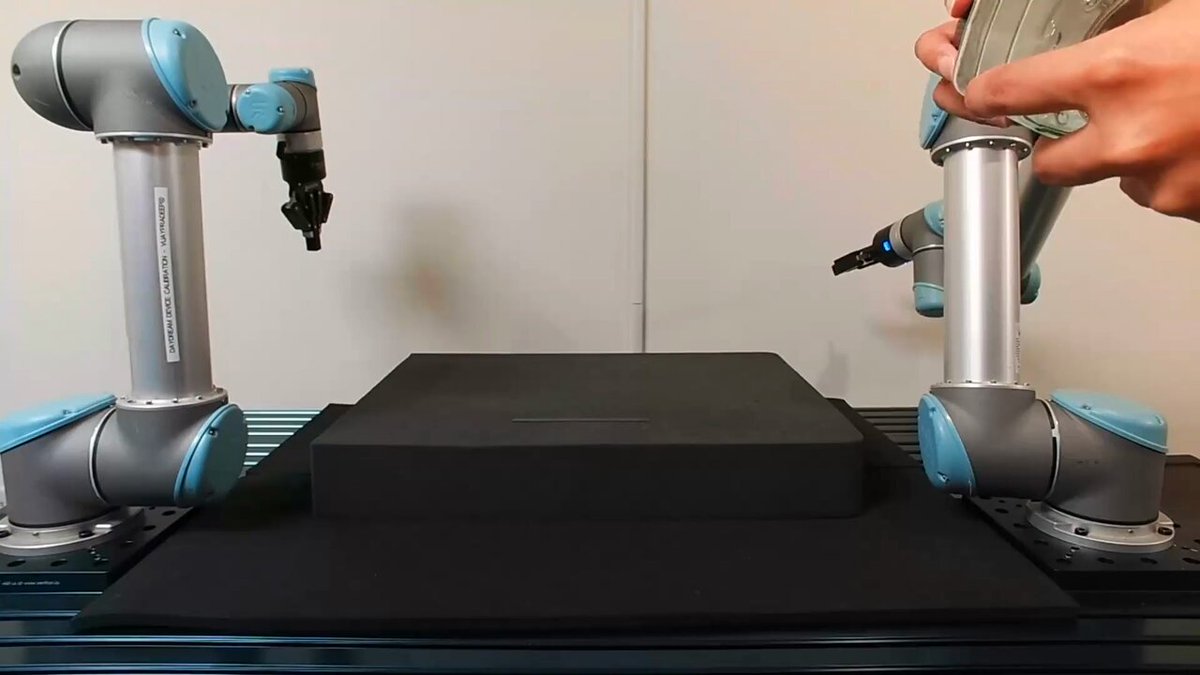

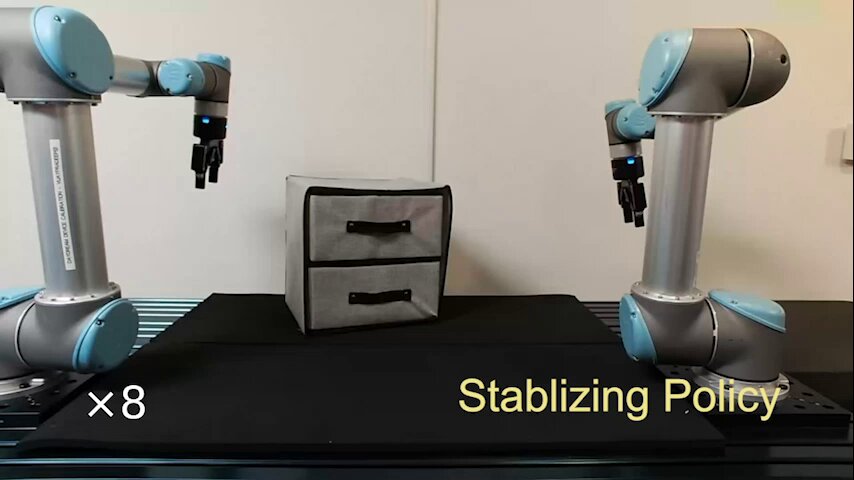

Blog post: Project website: Paper: Code: Authors: @arthur801031, @SichengHe12345, @daniel_t_seita, @gauravsukhatme. End thread🧵.

github.com

VoxAct-B: Voxel-Based Acting and Stabilizing Policy for Bimanual Manipulation (CoRL 2024) - VoxAct-B/voxactb

Collision Avoidance and Navigation for a Quadrotor Swarm Using End-to-end Deep Reinforcement Learning. Site: arXiv: A thread🧵, 5/5.

Conditionally Combining Robot Skills using Large Language Models. Code: arXiv: A thread🧵, 4/5.

github.com

krzentner/language-world

RESL will be presenting 4 papers at #ICRA2024! Congratulations to all the authors! Presenters: @hegde_shashank, @Zhehui_Huang, @JeremySMorgan3, @krzentner, @gauravsukhatme! @ieee_ras_icra @CSatUSC @USCViterbi @USCMingHsiehEE. A thread🧵, 1/5

Check out our poster at CoRL, presented by @arthur801031 and @GautamSalhotra on Tue Nov 7! Website, code, videos: PDF: @gauravsukhatme @CSatUSC. End thread🧵, 9/9.
