Steve Rathje

@steverathje2

Followers: 5K

Following: 21K

Media: 170

Statuses: 7K

Incoming Asst. Prof. of HCI @CarnegieMellon studying psychology of technology. @NSF postdoc @nyuniversity, PhD @Gates_Cambridge, BA @Stanford, @ForbesUnder30

New York, USA

Joined November 2013

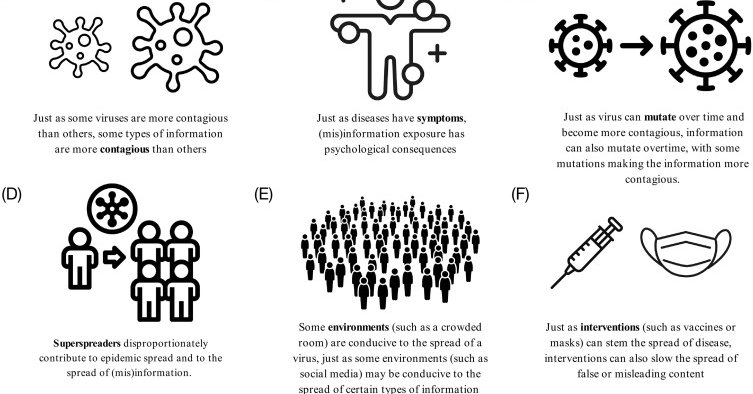

🚨New paper in @TrendsCognSci 🚨 Why do some ideas spread widely, while others fail to catch on? @Jayvanbavel and I review the “psychology of virality,” or the psychological and structural factors that shape information spread online and offline. Thread 🧵(1/n)

2

57

180

RT @ItaiYanai: Storytellers have a fitness advantage. This study on the Agta – a Filipino hunter-gatherer population – found that skilled s…

0

40

0

In other words, psychological and structural factors interacted to shape information spread, as @jayvanbavel and I discussed in our recent paper:

cell.com

Why do some ideas spread widely, while others fail to catch on? Here, we review the psychology of information spread, or the psychology of ‘virality’. Similar types of information tend to spread in...

0

0

2

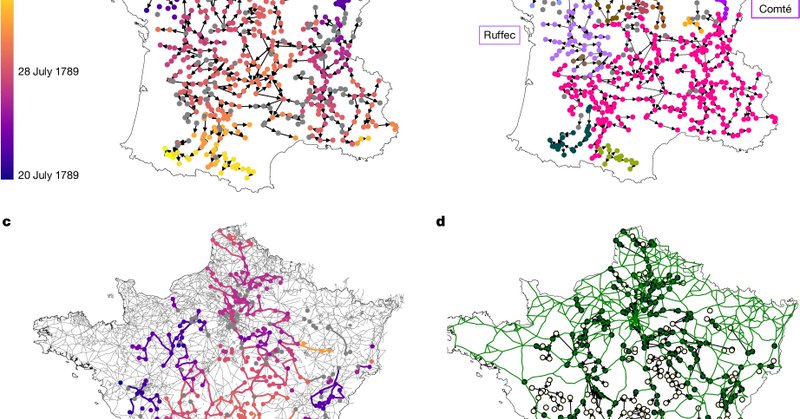

Negativity spread like a virus long before social media. This new paper in @Nature used epidemiological models to explain the viral spread of rumors during France’s Great Fear of 1789.

nature.com

Nature - Epidemiological methods are used to show that the Great Fear of 1789, a series of peasant insurrections in rural revolutionary France, was driven by deliberate political action rather than...

1

6

35

@TheMaliaMarks @YaraKyrychenko This fits our recent discussion on the "structural factors" shaping information spread:

0

0

1

While out-group animosity often goes viral on social media, this new paper by @TheMaliaMarks, @YaraKyrychenko, Johan Gärdebo & Jon Roozenbeek finds that in-group solidarity drives engagement after political crises. Interesting illustration of how…

1

7

28

RT @econ_b: Is AI already impacting the job market? A new paper from me, @erikbryn, and @RuyuChen at @DigEconLab digs into data from ADP.…

0

453

0

RT @danicajdillion: 1 in 5 dog owners would let you die to save a puppy. 🐶 The majority of pet owners see their dog as a soulmate. Many do…

0

5

0

Had an amazing time on the @progress2analog podcast with @caitlinbegg! We talked about:

- The psychology of virality
- My "feud" with Facebook
- Debates about the impact of social media
- AI sycophancy

And much more! Check out the full conversation here:

0

0

8

RT @SPSPnews: 🤔Why do some ideas spread widely, while others fail to catch on? This #MemberMonday, we're learning about the psychology of v…

0

4

0

RT @garrytan: If you *only* used SAT to admit to elite colleges, share of admits from top 1% income falls 15.8% → 9.9% and representation f…

0

2K

0

RT @TomerUllman: "The Illusion Illusion": vision language models recognize images of illusions, but they also say non-illusions are illu…

0

27

0

RT @emollick: AI in HR: in an experiment with 70,000 applicants in the Philippines, an LLM voice recruiter beat humans in hiring customer s…

0

125

0

RT @MishaTeplitskiy: You: should I cite my paper? Maybe it's not related enough? Ok, screw it, I'll self-cite but only once. Other scienti…

0

103

0

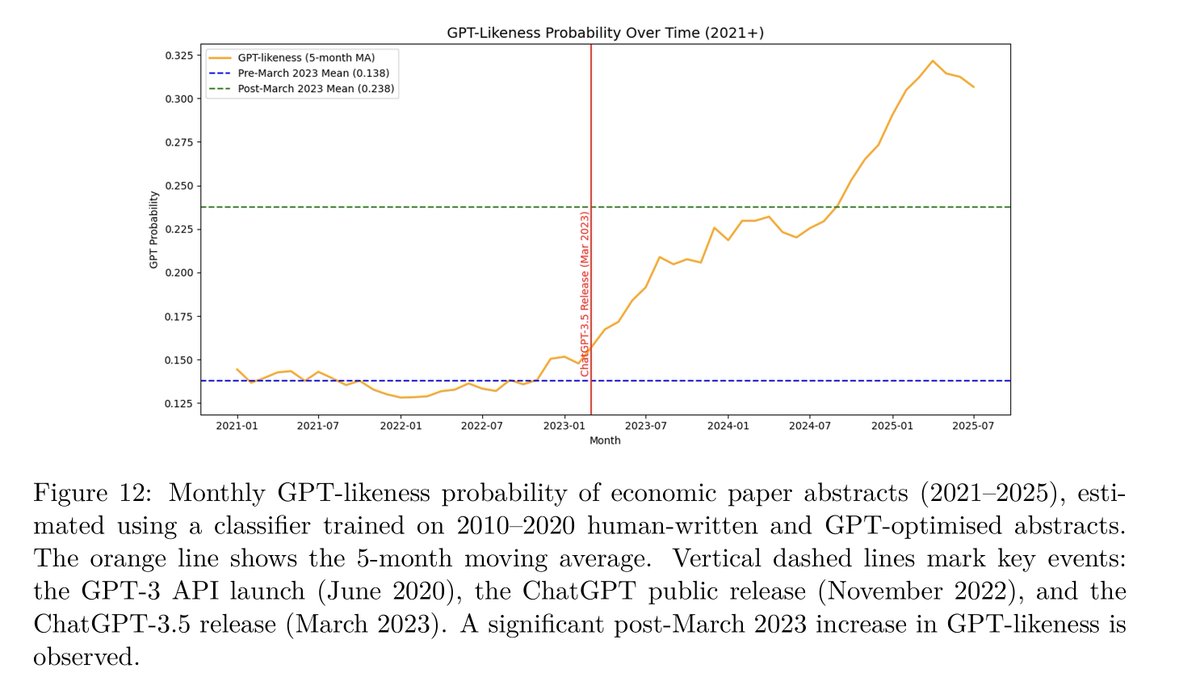

RT @JohnHolbein1: lol you can see very clearly that Economists are using ChatGPT to help write their abstracts.

0

47

0

RT @JohnHolbein1: What happened when Australia gave families with newborns $2,400 no questions asked? Infant health improved considerably.…

0

29

0

RT @BrendanNyhan: "In meetings with senior executives last year, Zuckerberg scolded generative AI product managers for moving too cautiousl…

reuters.com

Impaired by a stroke, a man fell for a Meta chatbot originally created with Kendall Jenner. His death spotlights Meta’s AI rules, which let bots tell falsehoods.

0

25

0