Sean J. Taylor

@seanjtaylor

Followers: 46,192

Following: 4,240

Media: 971

Statuses: 18,982

Building @MotifAnalytics. Formerly @Lyft and @Facebook. Keywords: Experiments, Causal Inference, Statistics, Machine Learning, Economics.

Oakland, CA

Joined February 2009

Pinned Tweet

I was stoked to get some data from Bluesky and start to play around with it in @MotifAnalytics. Check it out and see how much you can do (from scratch!) in ten minutes, when you have the right abstractions for working with sequences.

8

8

73

Another week, another re-read of @karpathy's timeless recipe for training neural networks, perhaps the article that's saved me the most time in aggregate.

9

165

1K

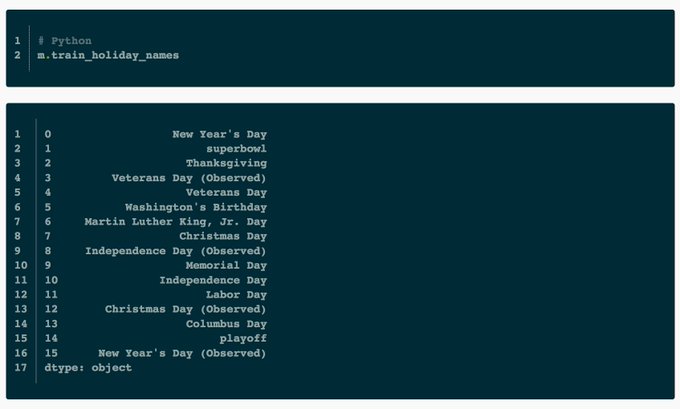

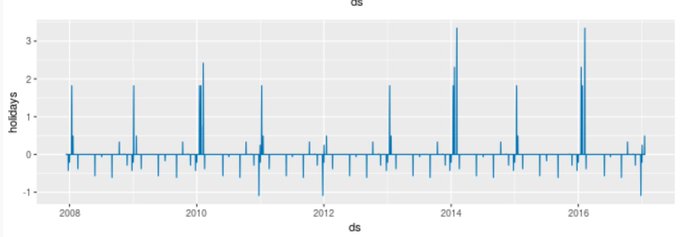

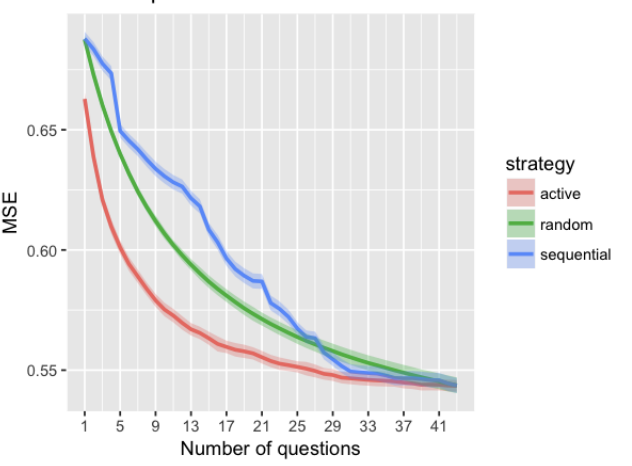

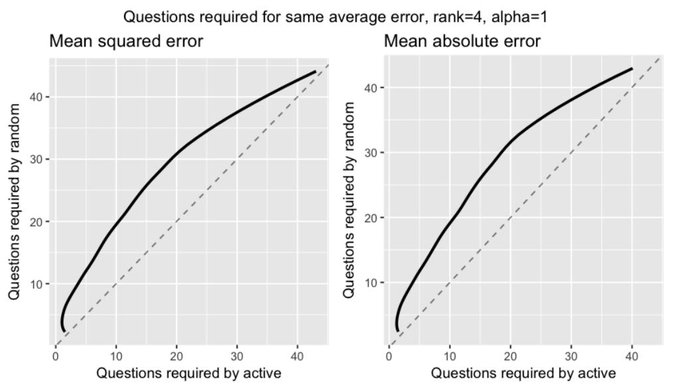

📈Long thread on how Prophet works📈

- Instead of sharing slides I'm transcribing my talk to tweets with lots of GIFs :)

- Thanks to @_bletham_ for all his help.

24

335

1K

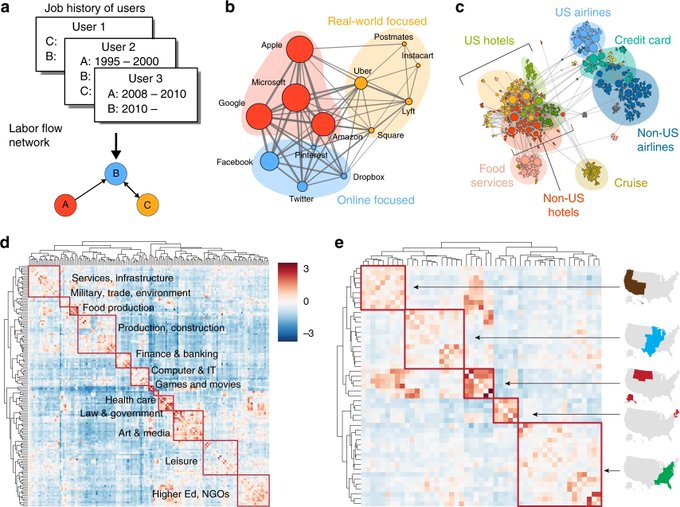

This new paper clustering the global labor network is really rad. It's cool how much structure pops out when you apply the right model. Great work @vagabondjack @yy et al.!

10

197

698

Professional news! I’ve joined @MotifAnalytics as co-founder and chief scientist. Building a company and a product is a new challenge for me. Just a few weeks in I can tell it’s going to be a ton of fun.

Thanks to @mikpanko and Theron Ji for letting me join their awesome team!

49

16

543

One time, I had to estimate a confidence interval for a sample estimate and there was no convenient formula for analytic standard errors.

#IBootstrapped

5

32

545
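For readers unfamiliar with the bootstrap mentioned in the tweet above, here is a minimal sketch of a percentile bootstrap confidence interval, using only the Python standard library. The function name `bootstrap_ci`, the sample values, the choice of the median as the statistic, and the defaults (2,000 resamples, 95% interval, fixed seed) are all illustrative assumptions, not anything from the original tweet.

```python
import random
import statistics


def bootstrap_ci(data, stat=statistics.median, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for an arbitrary statistic.

    Hypothetical helper for illustration; names and defaults are assumptions.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(data)
    # Recompute the statistic on resamples drawn with replacement from the data.
    estimates = sorted(stat(rng.choices(data, k=n)) for _ in range(n_resamples))
    # Use the empirical alpha/2 and 1 - alpha/2 quantiles as the interval ends.
    lo_idx = max(0, int((alpha / 2) * n_resamples))
    hi_idx = min(n_resamples - 1, int((1 - alpha / 2) * n_resamples) - 1)
    return estimates[lo_idx], estimates[hi_idx]


# Made-up sample; the median has no simple closed-form standard error here.
sample = [2.1, 3.4, 1.9, 5.6, 4.2, 3.3, 2.8, 7.1, 3.9, 4.4]
low, high = bootstrap_ci(sample)
print(f"95% bootstrap CI for the median: ({low}, {high})")
```

The percentile method is the simplest variant; for small or skewed samples, other variants (such as bias-corrected intervals) may behave better.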

Would be useful in a world where writing code were the main hard part of software engineering.

19

57

495

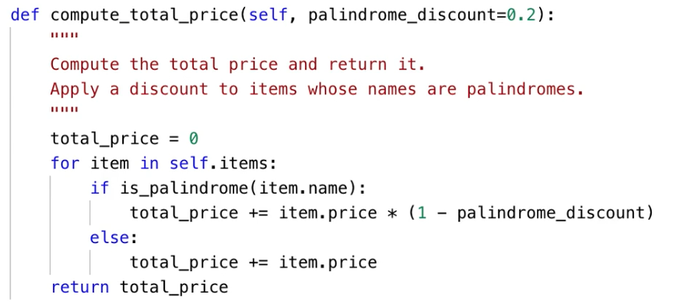

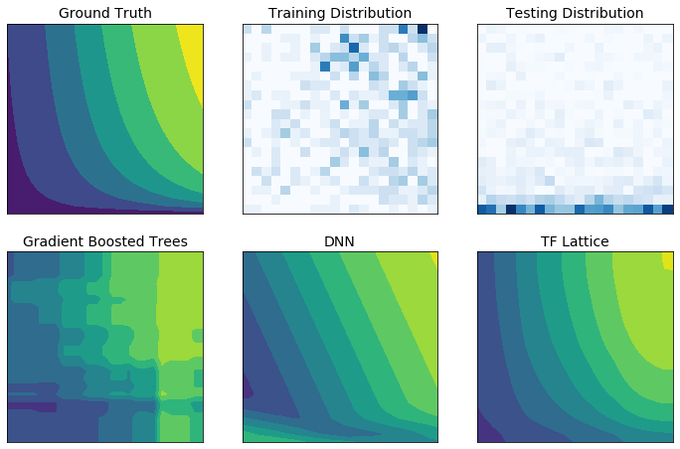

My team has used this idea (in PyTorch🔥) to ensure that models are consistent with various economic and business assumptions (e.g. diminishing marginal returns). It can improve quality and training requirements by not forcing your model to learn easy-to-articulate structure.

6

91

494

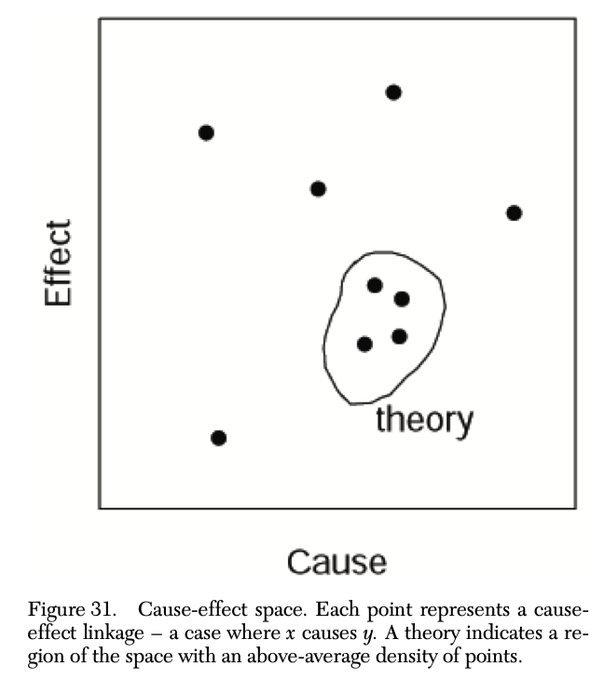

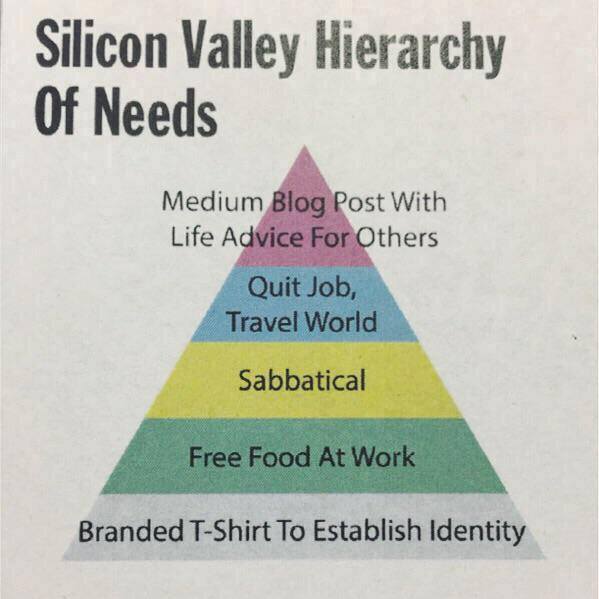

Here's a nice paper about why this idea is extremely appealing and completely wrong:

12

64

453

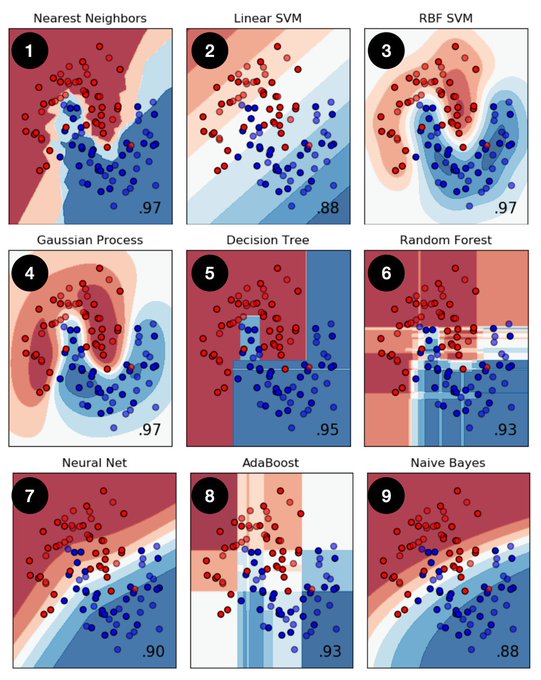

Folks have asked me for references on the ML perspective on causal inference. Here are two good ones:

1. @matheusfacure's book "Causal Inference for the Brave and the True" (Part II)

2. @CasualBrady's lecture notes (Chapter 7)

4

88

438

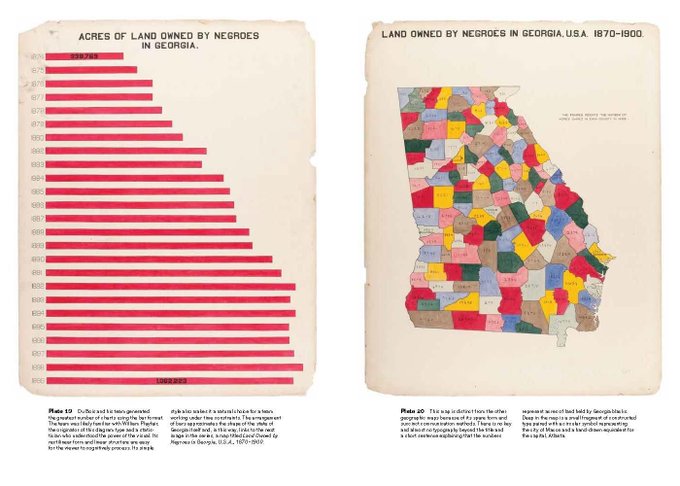

For a sufficiently small/biased sample, the most important factors are things experts already know and the next set of factors you learn are probably noise and unlikely to generalize. In other news, I look forward to the release of 19 new Marvel movies this summer.

16

44

437

Incredibly useful reference on gradient descent optimization algorithms:

I've been reading up on these algorithms for a project and the literature is SO dense and math-y. So thankful to @seb_ruder for synthesizing it clearly and providing pseudocode.

3

112

434