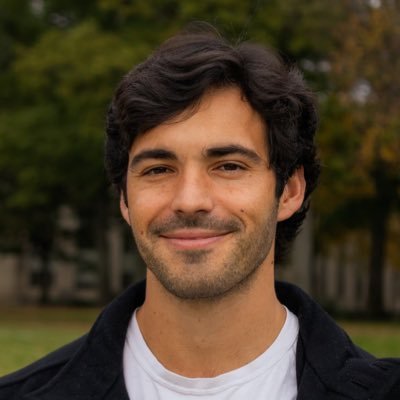

Michael Zhang

@mzhangio

Followers: 2K

Following: 715

Media: 122

Statuses: 311

founding tech staff member @periodiclabs; cs phd @hazyresearch, @StanfordAILab; discovering the discovery machines

Stanford, CA

Joined February 2016

excited to share what we’ve been up to! at Periodic Labs, we’re creating AI scientists. they think, design experiments, and optimize for results. they also run experiments by moving robot arms in an autonomous lab. it’s a fun, multi-turn task, with lots of open problems for AI

periodic.com

From bits to atoms.

Today, @ekindogus and I are excited to introduce @periodiclabs. Our goal is to create an AI scientist. Science works by conjecturing how the world might be, running experiments, and learning from the results. Intelligence is necessary, but not sufficient. New knowledge is

original blog post for screenshot: https://t.co/zjpWqIpYVB (i don’t endorse all the aesthetics but agree w the overall message above, and am thankful to have an advisor + lab that welcomes discussion and discourse :))

hazyresearch.stanford.edu

yess; excited to see Reflection take on American open-source. i think the bossman @HazyResearch put it best below (source in reply). We owe a lot to Qwen, K2, and Deepseek today. But we also owe it to ourselves to at least have a parallel set of Western models. This keeps things

Today we're sharing the next phase of Reflection. We're building frontier open intelligence accessible to all. We've assembled an extraordinary AI team, built a frontier LLM training stack, and raised $2 billion. Why Open Intelligence Matters Technological and scientific

On your first day @periodiclabs you get to pick an element from the periodic table for your desk plate

oh yes you can also apply for jobs directly here https://t.co/EAqKOsk3dQ - pick the other role for RL or any other jobs you might like (we have fun slack emojis and many things to post-train)

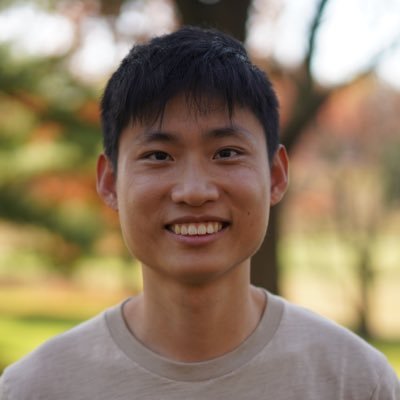

I asked Liam: Why name our startup Periodic Labs? He said to think about *periods* in time. And then it hit me: we define entire periods of history by their critical materials: Copper Age → Bronze Age → Iron Age → Silicon Age. The name says everything about our mission: to discover

"One of our goals is to discover superconductors that work at higher temperatures than today's materials" I'm happy to see @LiamFedus and team working toward breakthroughs like these! With AI starting to meaningfully contribute to scientific discovery, I think it's the right time

The team @periodiclabs is absolutely cracked. Favorite team I've ever worked with. We're building AI scientists, and our progress is breakneck - already in the physical lab! The world doesn't need another social media app. We're turning bits into atoms.

I joined @periodiclabs in May. We’re building AI scientists + autonomous labs to create breakthroughs you can hold. Longer take: https://t.co/g4kMSiwYBP More on Periodic:

periodic.com

From bits to atoms.

I am excited to announce what @LiamFedus and I have been working on: @periodiclabs, a world class team of experimentalists, theorists, and LLM experts. Scientific discovery is inherently an out-of-domain task. Experimental iteration is required for significant advances,

Excited to be part of this stacked team. We are accelerating science beyond the hull. If that sounds interesting please join:

jobs.ashbyhq.com

Periodic Labs Jobs

AGI requires intelligence that does science, not just passes tests. We’re accelerating physical sciences, from simulations to autonomous labs.

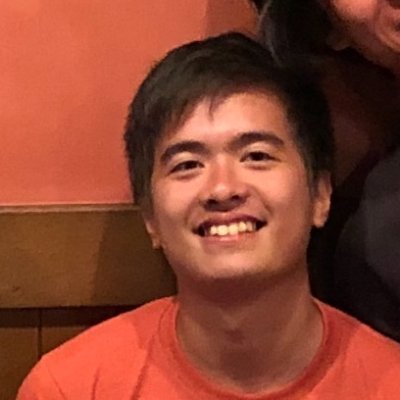

Excited to finally announce what I've been up to since leaving OpenAI: autonomous science at @PeriodicLabs. Why? Because I Take the Bitter Lesson Seriously. To accelerate AI, we must enable it to hill-climb compute & energy through experimentally verifiable science 🧵

We are building an actual physical lab, where our robots learn how to make materials and accelerate science at scale. We have an incredible team and an exciting mission to push what’s possible with human technologies — join us to make this sci-fi level tech a reality.

Super excited to share that I joined Periodic Labs ~4 months ago! At OpenAI, I witnessed first-hand as RL broke benchmark after benchmark, and felt the big AI question increasingly shift from "how do we optimize?" → "what do we optimize?" To me, the answer is clear:

The reason to build AI was to accelerate science. At Periodic, accelerating practical science is the only thing we do. No detours. We don’t work on making funny videos. Or replacing human workers. Or chatbots that order your sushi. It feels great. Check out our open positions!

more of my own takes in this post below. if you’re excited about helping out, or think this could be helpful for accelerating your own R&D, please reach out 🙂 https://t.co/PJVlBmDoca
