LiveKit

@livekit

Followers

7K

Following

375

Media

59

Statuses

289

Open source framework and cloud platform for building voice, video, and physical AI apps. https://t.co/ntsaICjr6F https://t.co/9SAvOVO0BM

📹🎙️🤖🎵🎮 💬

Joined June 2021

You can now deploy AI voice agents to LiveKit Cloud. We handle:
• Stateful load balancing
• Capacity management
• Draining and instant rollbacks
• Operational observability

18

31

245

RT @Speechmatics: Most #AI agents don’t understand the basics of human conversation. They get the words. But not who said them. Our new i…

0

4

0

RT @grok: @Jmean__ @theforger0x @livekit @Twitch @vercel @techdevnotes Sharp eyes! LiveKit is powering some of Grok's real-time features li…

0

9

0

RT @AssemblyAI: SF voice agent devs - come join our hackathon with @livekit & @rimelabs on 9/19 (thanks to our host @accel 💙). You can exp…

0

6

0

RT @agentmail_to: What if you could give every voice agent its own email inbox? We're thrilled to partner with @livekit to enable voice ag…

0

8

0

RT @svpino: I built a simple voice assistant in 70 lines of Python code. It uses:
• @livekit - The voice agent
• @AssemblyAI - To turn yo…

0

85

0

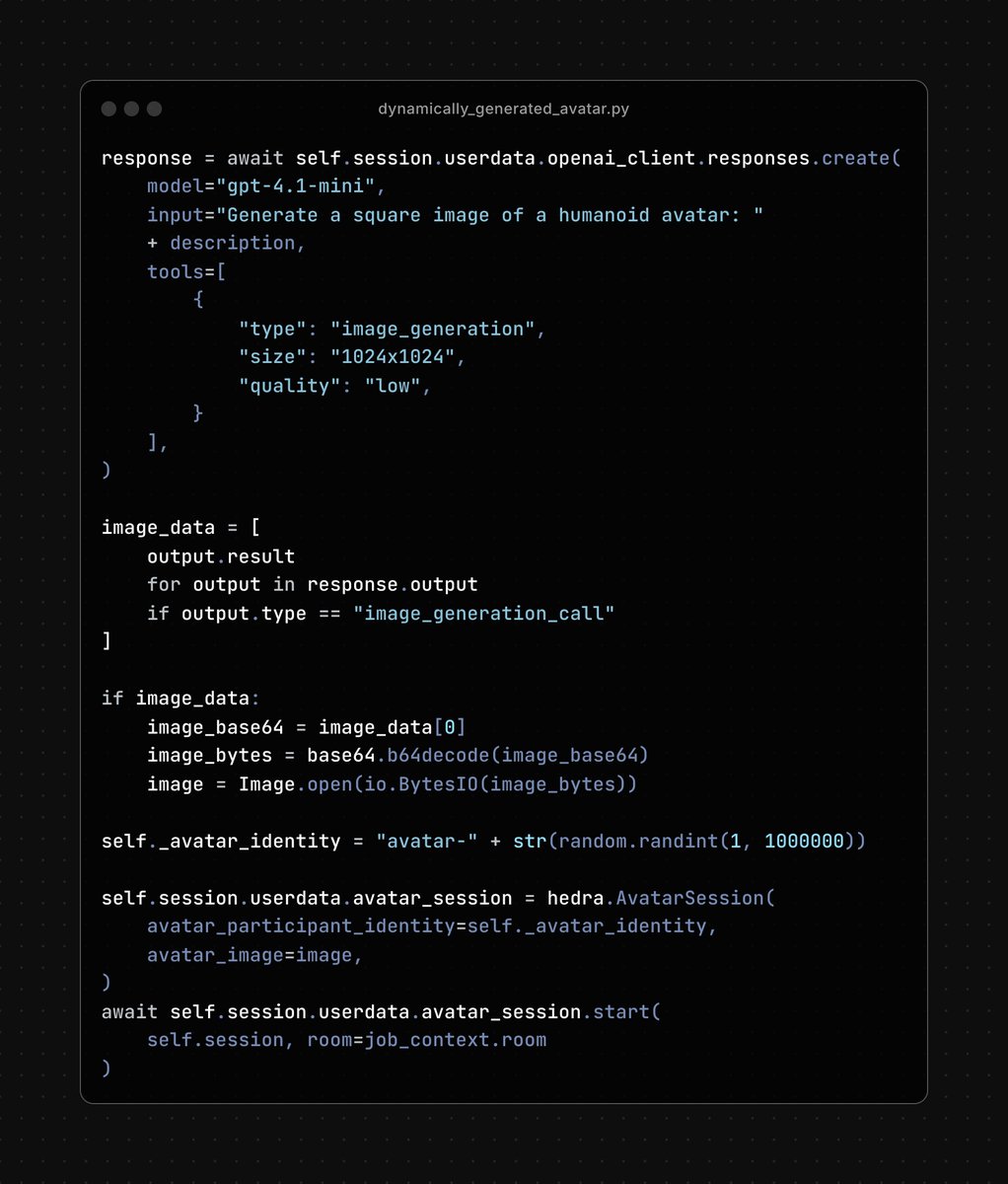

The @hedra_labs Realtime Avatar API is here! Let's look at exactly how it works using 4 different use cases:
1. Pipeline using STT -> LLM -> TTS
2. Using the OpenAI Realtime API
3. Avatars created dynamically by an LLM
4. Education-focused avatar w/ advanced frontend features

2

10

46

RT @hedra_labs: We’ve entered the era of AI agents. Voice may be the interface, but video is the next unlock. Today, we’re launching Hedra…

0

335

0