Liam

@liamhparker

Followers

129

Following

41

Media

10

Statuses

42

PhD student in theoretical physics @UCBerkeley supported by NSF GRFP and Researcher @PolymathicAI. Previously @Princeton.

New York, NY

Joined July 2020

RT @MilesCranmer: 🧵 Could this be the ImageNet moment for scientific AI? Today with @PolymathicAI and others we're releasing two massive…

0

87

0

RT @cosmo_shirley: Our internship program at Polymathic is open for opportunities from now through fall 2025! I believe our program provi…

0

45

0

RT @cosmo_shirley: You heard all about AI accelerating simulations (maybe from me?), but do you know. How can AI tell you what is in the…

0

52

0

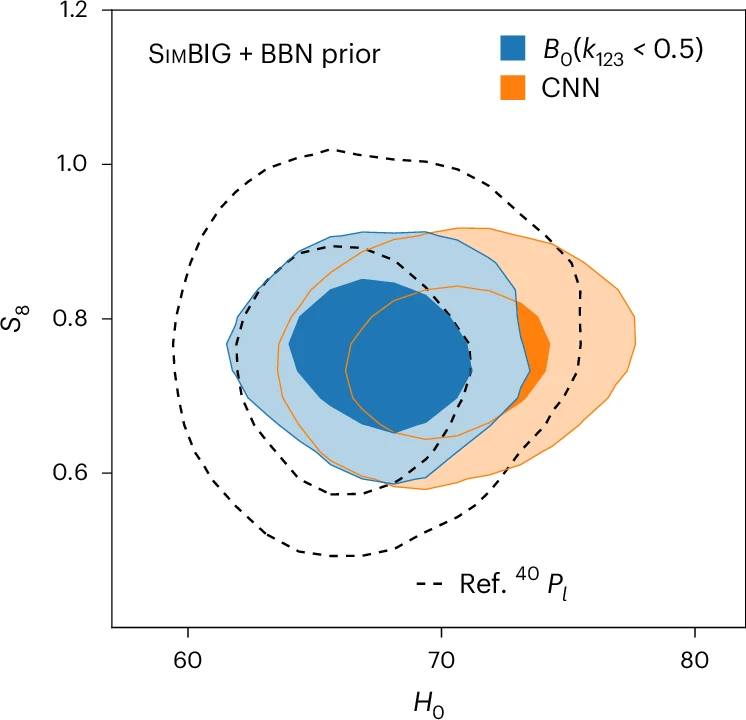

RT @ChanghoonHahn: Excited to announce that our latest #SimBIG collaboration research has just been published in @NatureAstronomy 🔭✨! #Astr…

0

7

0

Check out our recent work on simulation-based inference in galaxy clustering!

By extracting non-Gaussian cosmological information on galaxy clustering at non-linear scales, a framework for cosmic inference (SimBIG) provides precise constraints for testing cosmological models. @ChanghoonHahn @cosmo_shirley @DavidSpergel et al.:

0

0

10

RT @SiavashGolkar: SOTA models often use bidirectional transformers for non-NLP tasks but did you know causal transformers can outperform t…

0

11

0

RT @MilesCranmer: Some exciting @PolymathicAI news. We're expanding!! New Research Software Engineer positions opening in Cambridge UK,…

0

29

0

RT @oharub: 🎉 Excited to introduce Gibbs Diffusion (GDiff), a new Bayesian blind denoising method with applications in image denoising and…

0

29

0

RT @YulingYao: We have been using neural posterior/simulation-based inference (SBI) for scientific computing. There was one hole: you run 10…

0

7

0

Blog: Paper: Training Code & Model Weights:

github.com

Multimodal contrastive pretraining for astronomical data - PolymathicAI/AstroCLIP

0

0

3

9/ This is joint work with my teammates @PolymathicAI, especially with Francois Lanusse, @SiavashGolkar, @LeopoldoSarra, @MilesCranmer, @cosmo_shirley, and many others!

1

0

4

8/ The road for these advancements was paved by a lot of exciting work done in SSL for galaxies, especially by @GeorgeStein, @peter_melchior, and @mike_walmsley_.

1

0

4