Explore tweets tagged as #Test_Time_Compute

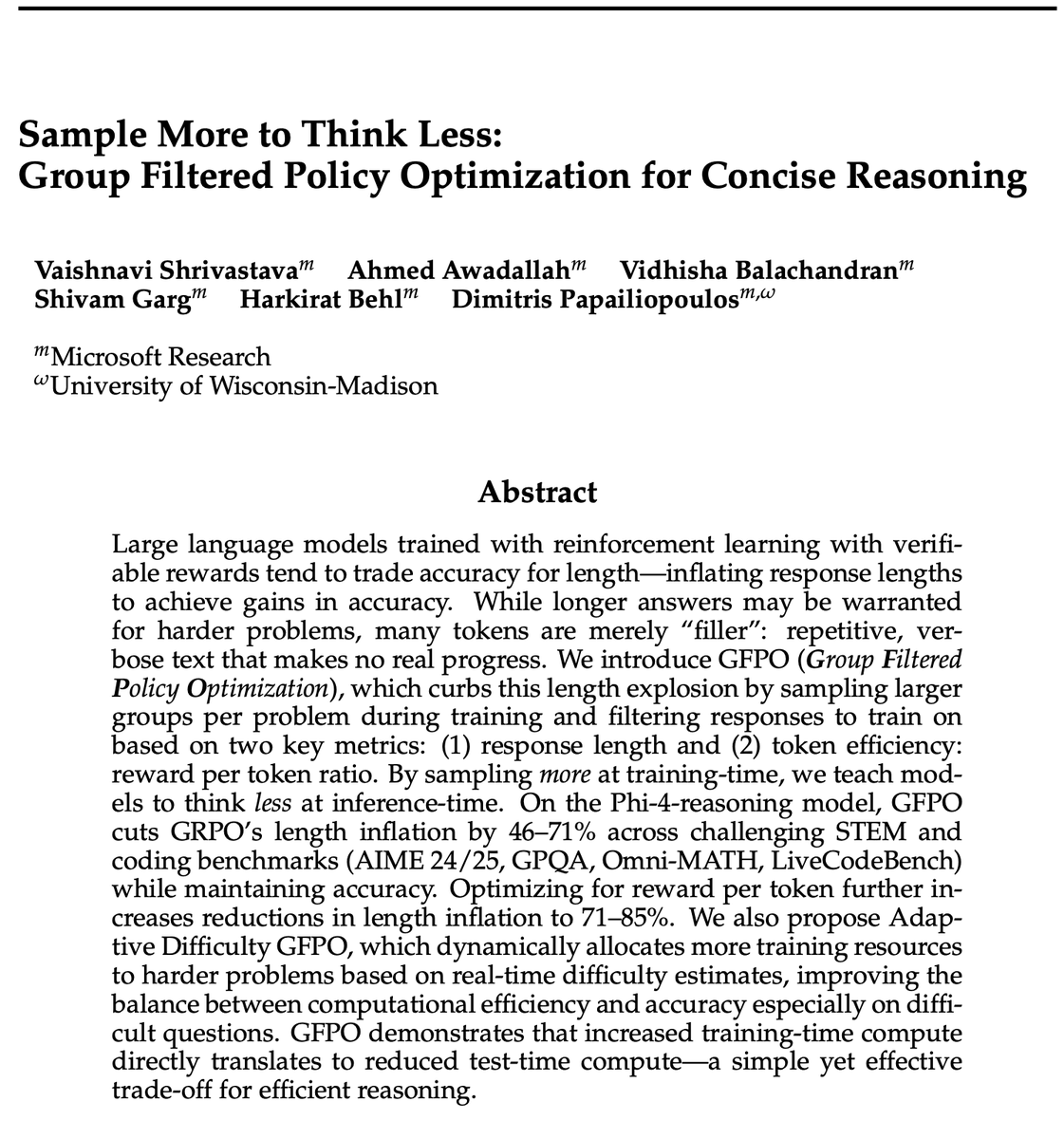

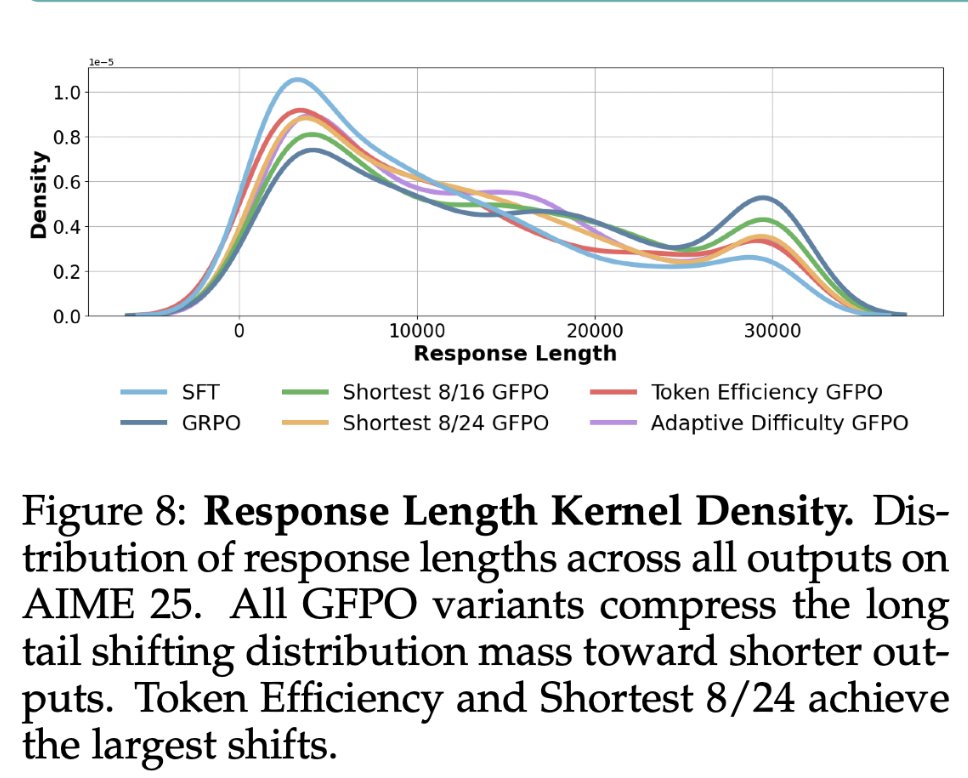

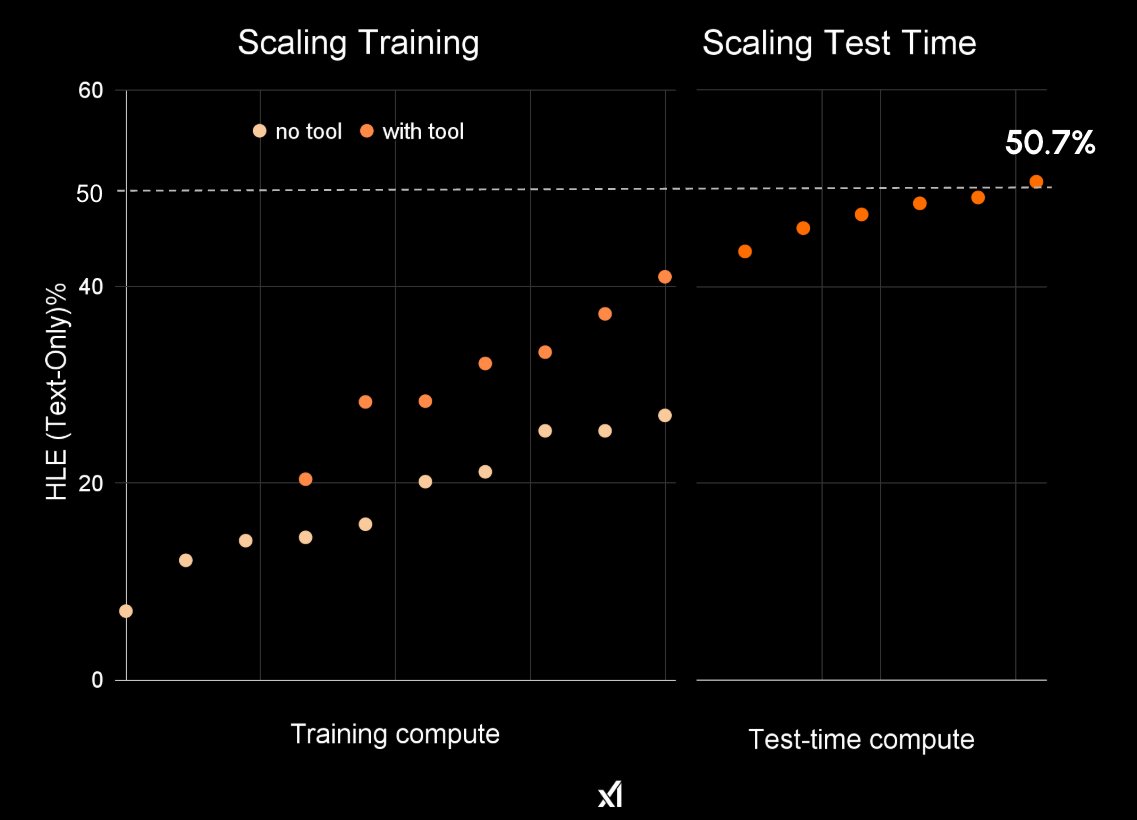

Thinking Less at test-time requires Sampling More at training-time! GFPO, a new, cool, and simple Policy Opt algorithm, is coming to your RL Gym tonight, led by @VaishShrivas and our MSR group. Group Filtered PO (GFPO) trades off training-time with test-time compute, in order


Our work on test-time scaling for robotics has been accepted to @corl_conf! We show that scaling test-time compute via a generate-and-verify paradigm offers a practical and effective path toward building general-purpose robotics foundation models.

✨ Test-Time Scaling for Robotics ✨ Excited to release 🤖 RoboMonkey, which characterizes test-time scaling laws for Vision-Language-Action (VLA) models and introduces a framework that significantly improves the generalization and robustness of VLAs! 🧵(1 / N) 🌐 Website:
