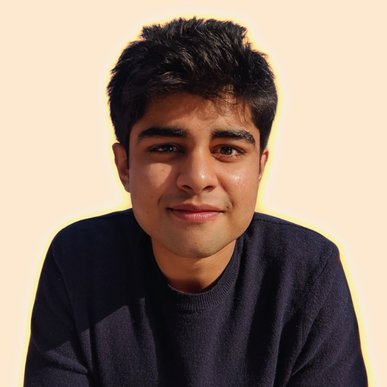

Dhruv Vemula

@dhruvtv

Followers: 623 · Following: 7K · Media: 412 · Statuses: 11K

I (re)post about tech, programming, music, movies, cricket. Engineer at @RobinhoodApp and podcaster at @telugubytes. Married to @divyaa.

San Francisco Bay Area

Joined August 2008

It’s super confusing that Anthropic calls CLAUDE dot md files “memory” files. Maybe call them “instructions” from now on? That aside, auto memory is awesome!

You can now think of Claude.MD as your instructions to Claude and Memory.MD as Claude's memory scratchpad it updates. If you ask Claude to remember something it will write it there.

This is.. not great, and in direct contrast with @trq212’s post from a few weeks ago.

Major Claude Code policy clear up from Anthropic: "Using OAuth tokens obtained through Claude Free, Pro, or Max accounts in any other product, tool, or service — including the Agent SDK — is not permitted"

We no longer have to write the software by hand. We write the test that we expect to pass and the computer generates. Well actually we don't write the test by hand. We write the spec and the computer generates the tests from that. Well actually we don't write the spec by hand. We

Turns out with claude code, my decades long strategy of NOT deeply learning:
- regexs
- sql
- nginx confs
- elaborate shell commands
- advanced shell scripting
- any javascript framework
- perf optimization
- webpack, cdns, bundlers
- 1000 other things

...was entirely correct.

Also, we tried video for first time, give it a watch and let us know if you want this content on youtube: Part 1: https://t.co/BCj8HbPFvV Part 2:

The AI tsunami hits the coastline first, and that's us. From ChatGPT to Claude Code, @dhruvtv and @gummadi break down what agentic AI means for software engineers. This one's not comfortable. https://t.co/6bKkxaaO2y

Opus 4.6 now takes the crown as the best long-context model. 🫡 As measured on MRCR v2 8-needle at 256k and 1M, and it beats GPT-5.2 on the 256k range. MRCR v2 (8-needle) is a long-context retrieval benchmark that checks whether a model can find and reproduce 8 specific facts

Anthropic just released Claude Opus 4.6, with major gains across coding, knowledge, and reasoning benchmarks. Claude Opus 4.6 builds on its predecessor’s coding skills and is better at planning, code review and debugging and operating reliably within large codebases. The model

Yet another Karpathy banger. I found myself nodding my head vigorously, as you would watching a rockstar perform. While pausing a few times to hit ‘Yes’ on Claude Code as it ran a deep analysis on 1000+ files, breaking down into Tasks, writing Python and bash scripts as usual.

A few random notes from claude coding quite a bit last few weeks. Coding workflow. Given the latest lift in LLM coding capability, like many others I rapidly went from about 80% manual+autocomplete coding and 20% agents in November to 80% agent coding and 20% edits+touchups in

If an agent can do 95% of a job:
- a human only needs to do 5% of it (high-value? yes, but still 5%)
- that frees them up to do 20x more; at worst 5x more
- if the work to be done is constant, you need 1/5th of the current workforce

@demishassabis just proved @DarioAmodei’s point.
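The arithmetic in the post above can be checked with a short sketch. The function names are hypothetical, and the model is deliberately naive: it assumes the human's remaining share scales linearly and that the total amount of work stays constant, as the post does.

```python
from fractions import Fraction


def capacity_multiplier(agent_share: Fraction) -> Fraction:
    """How many jobs one person can cover if an agent does `agent_share` of each."""
    human_share = 1 - agent_share  # e.g. 1 - 95/100 = 1/20
    return 1 / human_share


def workforce_fraction(multiplier: Fraction) -> Fraction:
    """Fraction of the current workforce needed if total work is constant."""
    return Fraction(1) / Fraction(multiplier)


# Upper bound from the post: the agent does 95% of the job.
best_case = capacity_multiplier(Fraction(95, 100))

# The post's conservative figure is a 5x multiplier, which is where 1/5th comes from.
conservative = workforce_fraction(Fraction(5))

print(best_case, conservative)
```

Exact fractions are used instead of floats so that the 5% share inverts to exactly 20 rather than a rounded approximation.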

Dario and Demis at Davos on AI jobs impact. Dario says we are headed to “unusual situation of high GDP growth and high unemployment” with 50% unemployment for entry level jobs within 5 years. Demis responds to Dario’s prediction and says he has a “longer timeline”. The models

I love how “tech” has become a euphemism for plastic clothing. I don’t get what tech pants are.

> Developer output up 76%, Team output up 89%

Interested to see this data normalized by engineering hours put in. Anecdotally, I've seen myself and everyone around me put in at least 1.5x more hours this year for various reasons. So, what percent can we attribute to gen AI alone?

Today we're releasing the first annual "State of AI Coding" report. At @greptile we review a billion lines of code a month written by every coding agent imaginable. This means we have a lot of data. In this report, we compiled some of the most interesting trends we observed

context engineering, succinctly put

We ran Claude 4.5 Sonnet on ~1200 vibe coding tasks and divided the trace into search and execution. Search accounts for >50% of the total tokens in the trace. Many of those tokens are irrelevant snippets of code from broad greps/file reads, and they cause context rot. Using a

I don’t like git worktrees, or how @cursor_ai handles them.
- My `git branch` output is a mess.
- I use Cursor’s multi-agent UI for throwaway queries, but the resulting worktrees hang around for days.
- Even after they are deleted, the branches still remain.
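One way to see which branches are left behind is to compare the local branch list with the output of `git worktree list --porcelain`. The sketch below is only an illustration: the helper names and the sample paths and branch names are made up, and it says nothing about how Cursor itself names or manages its worktrees. It parses the porcelain text and reports branches that no worktree currently has checked out.

```python
def parse_worktrees(porcelain: str) -> list[dict[str, str]]:
    """Parse `git worktree list --porcelain` text into one dict per worktree."""
    entries: list[dict[str, str]] = []
    current: dict[str, str] = {}
    for line in porcelain.splitlines():
        if not line.strip():  # a blank line separates worktree records
            if current:
                entries.append(current)
                current = {}
            continue
        key, _, value = line.partition(" ")  # e.g. "branch refs/heads/main"
        current[key] = value  # flag lines such as "detached" get an empty value
    if current:
        entries.append(current)
    return entries


def branches_without_worktree(local_branches: list[str],
                              entries: list[dict[str, str]]) -> list[str]:
    """Return local branches that are not checked out in any worktree."""
    checked_out = {
        e["branch"].removeprefix("refs/heads/") for e in entries if "branch" in e
    }
    return [b for b in local_branches if b not in checked_out]


# Hypothetical sample output; real paths and branch names will differ.
SAMPLE = """worktree /repo
HEAD 1111111111111111111111111111111111111111
branch refs/heads/main

worktree /tmp/agent-a
HEAD 2222222222222222222222222222222222222222
branch refs/heads/agent-a
"""

leftover = branches_without_worktree(
    ["main", "agent-a", "agent-b"], parse_worktrees(SAMPLE)
)
print(leftover)
```

The result is only a list of candidates, since ordinary feature branches also have no worktree attached. After reviewing it, `git worktree prune` clears stale worktree records and `git branch -d <name>` deletes a merged branch.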
