Ashish Vaswani

@ashVaswani

Followers: 19,470

Following: 1,567

Media: 6

Statuses: 70

Pinned Tweet

I'm thrilled to announce our company, @essential_ai. We believe that breakthroughs in AI will unlock the most profound tools for thought, advancing humanity's collective knowledge and capability.

105

117

2K

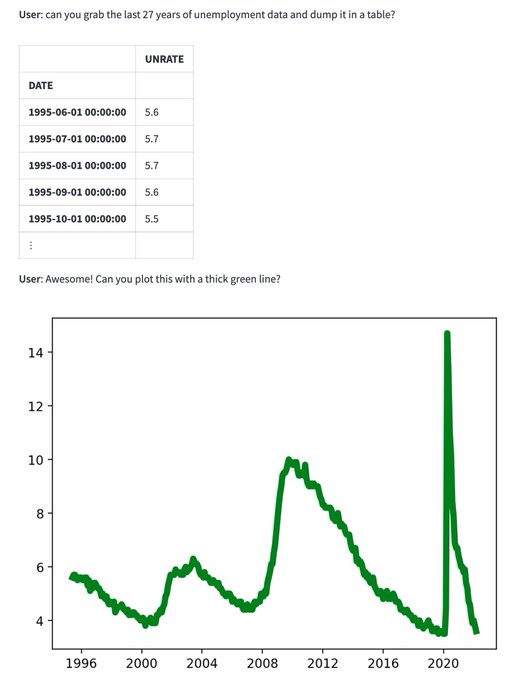

We've taught Transformers to take actions in the digital world!

19

62

698

We’re building the future of useful and intelligent machines. Our early neural networks can make plots, query databases, and fetch internet data! If you’re excited to work on fundamental research like learning from human feedback and building sample-efficient models, please apply!

18

30

434

Excited to be on this journey with @nikiparmar09 along with our incredible team @mrinal_iyer_01, @ag_i_2211, @samcwl, @andrewhojel and Varun Desai

3

1

48

We are thankful for the support from our angels: @amasad, @altcap, @w_conviction, @eladgil, @fdesouza, David H. Petraeus, @GSapoznik, @jwmontgomery, and Mei Zuo.

5

0

32

We are excited to partner with @MarchCPs, @ThriveCapital, with participation from @amd, Franklin Venture Partners (@FTI_US), Google, KB Investment, @nvidia.

1

0

20

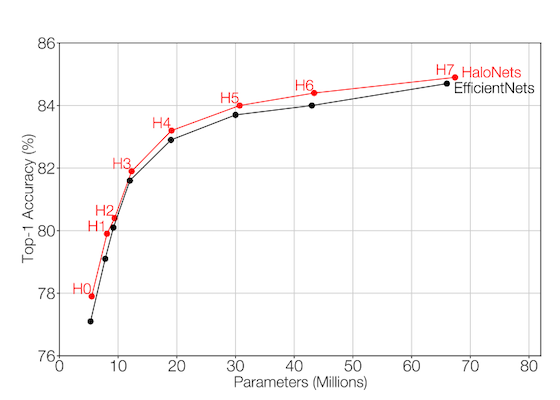

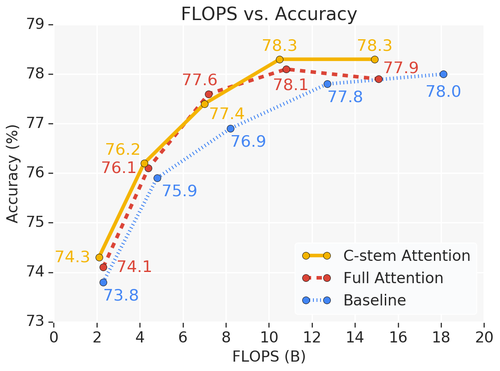

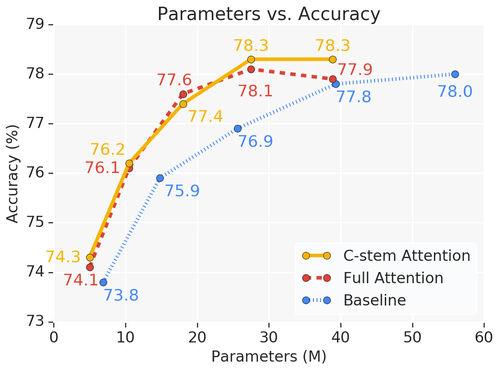

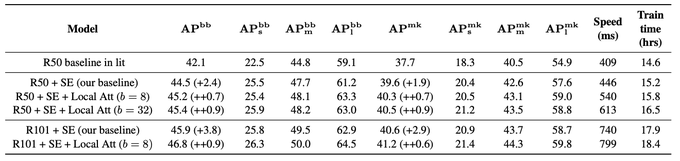

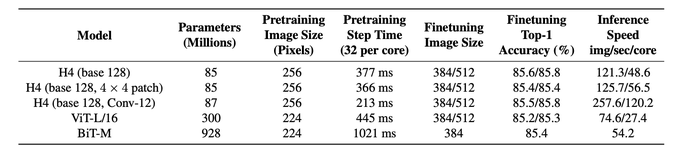

Pure content-based interactions are competitive for vision models. Lots of exciting work to be done in this research area.

1

1

20

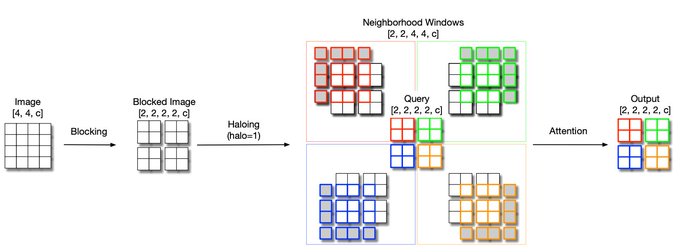

(5/5) Joint work with Prajit Ramachandran, @AravSrinivas, @nikiparmar09, @BlakeHechtman, and Jonathon Shlens.

1

1

13

@jekbradbury @arvind_io @nikiparmar09 Thanks! We haven't inspected the latents yet, but that is probably the most exciting thing to do at this point. It's definitely on our TODO.

0

1

3

@arvind2505 Can be photoshopped in? We can do this on Monday and pretend it was just post-NIPS.

1

0

1