Antoine Moulin

@antoine_mln

Followers

1K

Following

2K

Media

26

Statuses

259

doing a phd in RL/online learning on questions related to exploration and adaptivity

Joined August 2020

RT @arlet_workshop: We've extended the deadline for our workshop's calls for papers/ideas! Submit your work by August 29 AoE. Instructions….

0

5

0

RT @arlet_workshop: The OpenReview link for our calls (for papers and ideas) is available, submit here: We look fo….

0

7

0

last year's edition was so much fun I'm really looking forward to this one!! join us in San Diego :))

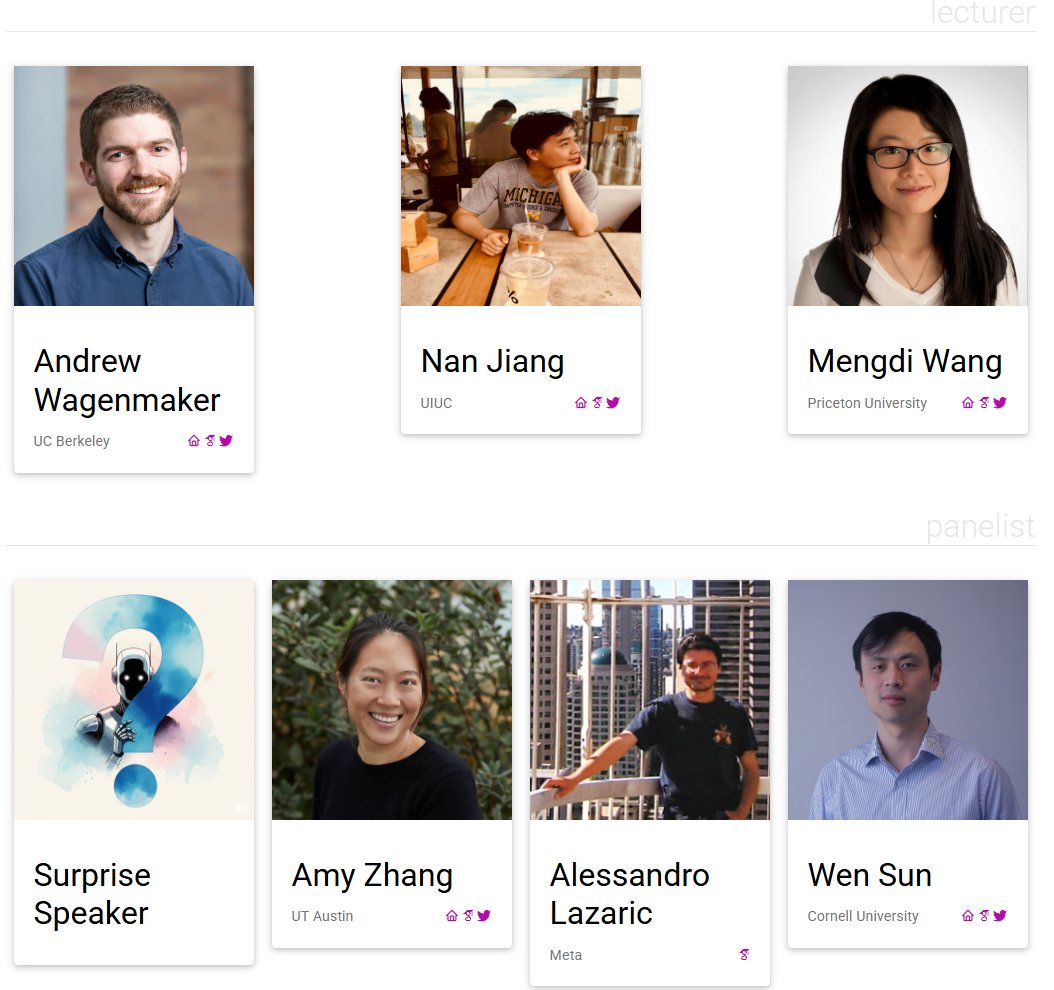

Delighted to announce that the 2nd edition of our workshop has been accepted to #NeurIPS2025! We have an amazing lineup of speakers: @WenSun1, @ajwagenmaker, @yayitsamyzhang, @MengdiWang10, @nanjiang_cs, Alessandro Lazaric, and a special guest!

2

6

26

RT @geneli0: like everyone else i am hopping on the blog post trend.

gene.ttic.edu

A personal website.

0

30

0

RT @KevinHanHuang1: Meanwhile, excited to be in #Lyon for #COLT2025, with a co-first author paper ( with the amazin….

arxiv.org

Over the last decade, a wave of research has characterized the exact asymptotic risk of many high-dimensional models in the proportional regime. Two foundational results have driven this progress:...

0

7

0

RT @RLtheory: Join us tomorrow for Dave's talk! He will present his recent work on randomised exploration, which received an outstanding pa….

0

3

0

RT @LucaViano4: Our new preprint is online! Structural assumptions on the MDP helps in imitation learning, even if offline :) Joint work w….

0

6

0

new preprint with the amazing @LucaViano4 and @neu_rips on offline imitation learning! when the expert is hard to represent but the environment is simple, estimating a Q-value rather than the expert directly may be beneficial. there are many open questions left though!

1

6

45

RT @canondetortugas: Dhruv Rohatgi will be giving a lecture on our recent work on comp-stat tradeoffs in next-token prediction at the RL Th….

0

2

0

RT @DimitriMeunier1: 🚨 New paper accepted at SIMODS! 🚨 “Nonlinear Meta-learning Can Guarantee Faster Rates”. When….

arxiv.org

Many recent theoretical works on meta-learning aim to achieve guarantees in leveraging similar representational structures from related tasks towards simplifying a target task. The main aim...

0

15

0

RT @neu_rips: new work on computing distances between stochastic processes **based on sample paths only**! we can now: - learn distances be….

0

8

0

RT @LucaViano4: Finally, we have expert sample complexity bounds in multi agent imitation learning! Joint work wi….

0

4

0

RT @DimitriMeunier1: Check out our new result on regression with heavy-tailed noise! I learned a lot on this project, thanks to @gaussian….

0

2

0

RT @gellertweisz: Super excited to have been part of the incredible journey with our team, bringing this to you all the way from research i….

0

5

0

RT @bodonoghue85: Excited to share what my team has been working on lately - Gemini diffusion! We bring diffusion to language modeling, yie….

0

260

0

RT @BlancaHuergo: Very excited to share what I have been working on. Having been part of the Gemini Diffusion team since day one, it is ama….

0

13

0

RT @Rahma_Chaa: It's been an incredible experience working on Gemini Diffusion. So much pride in what we've accomplished bringing this from….

0

12

0