Adhiraj Ghosh ✈️ ACL 2025

@adhiraj_ghosh98

Followers: 258 · Following: 371 · Media: 8 · Statuses: 57

ELLIS PhD @uni_tue | vision-language & data-centric ML @bethgelab 🦋: https://t.co/Q03vvJFIPw

Tübingen, Deutschland

Joined April 2024

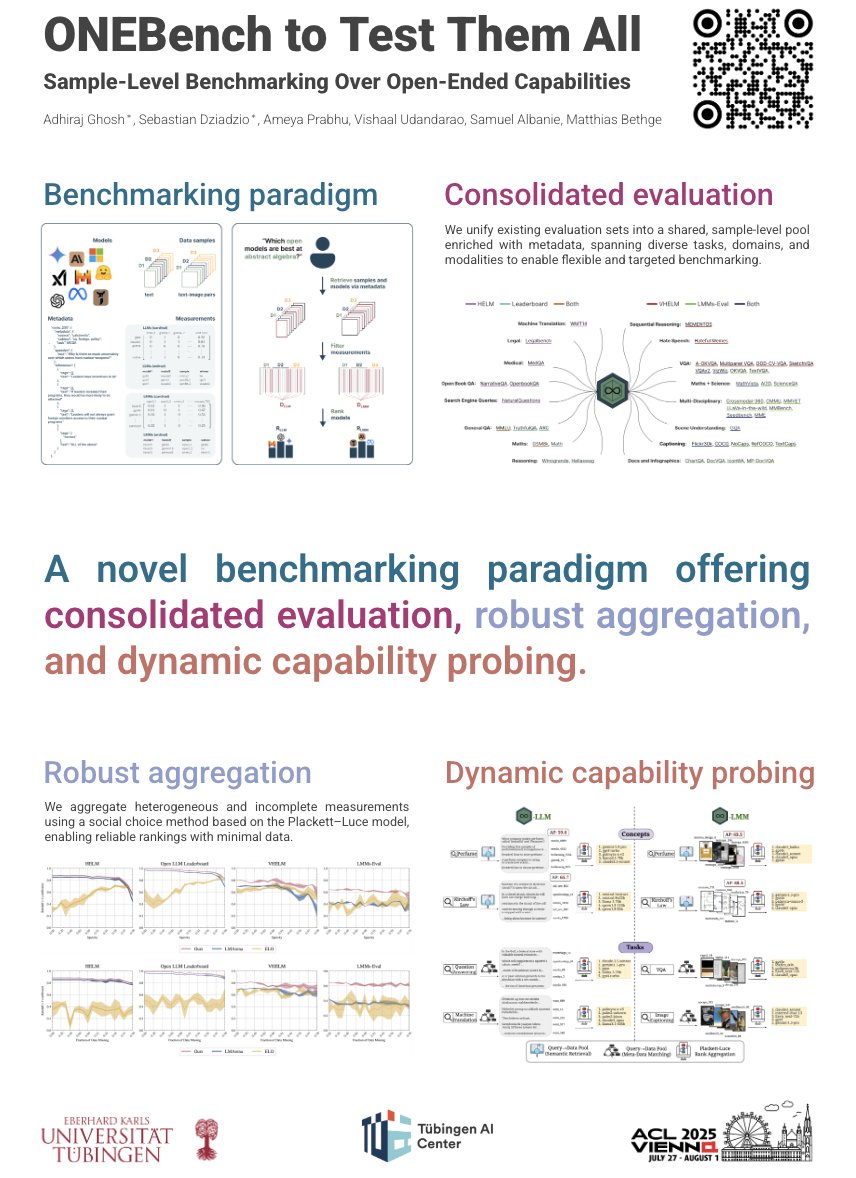

🏆 ONEBench accepted to ACL main! ✨ Stay tuned for the official leaderboard and real-time personalised benchmarking release! If you’re attending ACL or are generally interested in the future of foundation model benchmarking, happy to talk! #ACL2025NLP #ACL2025 @aclmeeting

🚨 Looking to test your foundation model on an arbitrary, open-ended set of capabilities not explicitly captured by static benchmarks? Check out ONEBench, part of my MS thesis, where we show how sample-level evaluation is the solution. 🔎 🧵👇

RT @benno_krojer: This is precisely why we released MVPBench to measure genuine video understanding with minimal video pairs. Otherwise we…

arxiv.org

Existing benchmarks for assessing the spatio-temporal understanding and reasoning abilities of video language models are susceptible to score inflation due to the presence of shortcut solutions...

RT @YungSungChuang: Scaling CLIP on English-only data is outdated now… 🌍 We built CLIP data curation pipeline for 300+ languages. 🇬🇧 We train…

RT @sbdzdz: If you're in Vienna for ACL, @adhiraj_ghosh98 and I will be presenting our work on benchmarking language and vision-language mo…

bethgelab.github.io

ONEBench: a new paradigm for open-ended benchmarking and evaluation of foundation models, aggregating sample-level tests across datasets.

RT @KarelDoostrlnck: The fine folks at HuggingFace used our APO-zero algorithm to train a very efficient reasoning LM. Awesome 🙌!

RT @thao_nguyen26: Web data, the “fossil fuel of AI”, is being exhausted. What’s next? 🤔 We propose Recycling the Web to break the data wall…

RT @AmyPrb: 🚨 New paper! Exciting progress in GRPO variants, smarter training strategies, and curated datasets showing impressive improvem…

RT @debsarkar_sayan: 🏆 CrossOver is accepted as a 𝗛𝗶𝗴𝗵𝗹𝗶𝗴𝗵𝘁 at @CVPR #CVPR2025! ✨ 💻 Fully open-sourced code with all pre-trained checkpoint…

github.com

[CVPR 2025, Highlight] CrossOver: 3D Scene Cross-Modal Alignment - GradientSpaces/CrossOver

RT @fededagos: 🚨 New paper alert! 🚨 We’ve just launched openretina, an open-source framework for collaborative retina modeling across datas…

RT @AmyPrb: LMs excel at solving problems (~48% success) but falter at debunking them (<9% counterexample rate)! Could form an AI Brandol…

If you needed a reason to visit Nashville in June 🔥

🎉 Excited to share our latest work, CrossOver: 3D Scene Cross-Modal Alignment, accepted to #CVPR2025 🌐✨. We learn a unified, modality-agnostic embedding space, enabling seamless scene-level alignment across multiple modalities — no semantic annotations needed!🚀

Valuable multimodal training data contribution and very thorough experimentation 🎉 Interesting that fine-tuning the visual encoder doesn’t help in such tasks; this seems aligned with common wisdom in the LMM world.

[1/11] Many recent studies have shown that current multimodal LLMs (MLLMs) struggle with low-level visual perception (LLVP) — the ability to precisely describe the fine-grained/geometric details of an image. How can we do better? Introducing Euclid, our first study at improving

RT @AmyPrb: 🚨 Looking for open problems machine unlearning for AI safety? We provide a deep dive into the nuances of removing harmful know…

RT @JieyuZhang20: Excited to share my intern project at Salesforce Research! Huge thanks to everyone on the team!!

Used LMMs-Eval to build ONEBench (: A fantastic team who have diligently helped me with queries and access to data over the past 6 months. Glad to see it gain so much traction!

arxiv.org

Traditional fixed test sets fall short in evaluating open-ended capabilities of foundation models. To address this, we propose ONEBench (OpeN-Ended Benchmarking), a new testing paradigm that...

🚀LMMs-Eval🚀 has reached 2.2K stars with 60+ contributors from the community!
- Repo:
- Dataset @huggingface:
Join us to build a standardized evaluation toolkit for large multimodal models (image, video and audio) 🤩

RT @BoLi68567011: Also recommend the open-source code for SAE in multimodal models.

github.com

[ICCV 2025] Auto Interpretation Pipeline and many other functionalities for Multimodal SAE Analysis. - EvolvingLMMs-Lab/multimodal-sae
