Denis Rossiev ᯅ/acc

@Enuriru

Followers

13,906

Following

575

Media

883

Statuses

5,363

Building performing AR products · Meta/Snap/TikTok AR Ambassador · Clients: Tiffany, Elie Saab, Burberry, Forbes, JLo

Dubai

Joined January 2010

New experiment: real-time magic with floor destruction in #AR: no baked animations, 100% dynamic control by hand gestures. #AugmentedReality

13

67

458

#AugmentedReality Dr. Strange portal I made early this year. Fully controllable by hand tracking.

25

41

358

I keep working on #AR portals: added some subtle ripple. Still seamless passing between worlds.

19

35

284

Just FYI, I made Minecraft inside an Instagram filter: infinite digging, flowing water, more than 50 types of blocks, and even a save & load feature. Hey @Minecraft, what do you think?

10

40

244

bitch wtf. so to make games for #visionOS you have to buy:

- $3500 vision pro

- $2000+ macbook pro

- $2040 unity license

- $100 apple dev account

31

32

229

Dropping a new #AR experience! All the stuff works in real-time. No post-production. Link in the thread! #AugmentedReality

11

30

217

Even though it looks cool, no one will actually want this visual garbage persistently floating in front of their eyes. That’s why such concepts will never become real.

53

16

203

Have you seen this beautiful #AppleEvent particle animation?

I made something similar in #AR a while ago.

7

27

199

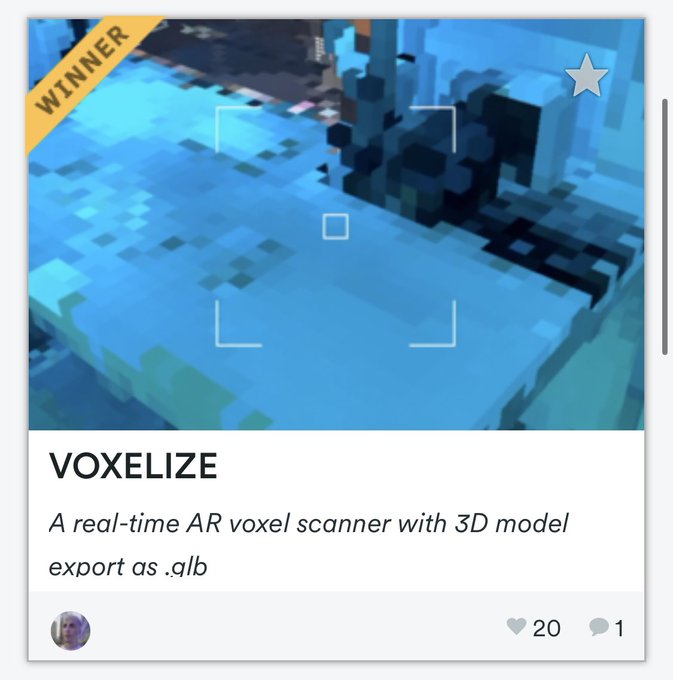

I won the @SnapAR hackathon! 😱 First Place is really surprising! Thanks, team, for appreciating my work! Read more about the project: #AR #AugmentedReality

27

4

190

This is the upcoming version of human duplicator: 2.0. Even more weird. #snapAR @SnapAR #AugmentedReality

3

32

188

That's how the illusion runs inside MetaSpark: here I manually move the virtual camera (in reality it's connected to the user's eye position).

The main difference from #ARkit is that in Spark I have to manually modify the camera view and projection matrices in shaders.

8

15

174
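The tweet above mentions manually modifying the camera view and projection matrices so the virtual camera follows the user's eye. One common way to get that kind of window illusion is an off-axis (asymmetric) perspective projection. The sketch below is not the author's Spark shader code; it is a plain-Python illustration under stated assumptions: OpenGL-style clip space, a screen rectangle centered at the origin in the z = 0 plane, and hypothetical function names (`off_axis_projection`, `project_to_ndc`).

```python
def off_axis_projection(eye, screen_w, screen_h, near, far):
    """Row-major 4x4 OpenGL-style projection for an eye at `eye` (x, y, z)
    looking through a screen rectangle centered at the origin in z = 0.
    Assumption: the eye sits in front of the screen, i.e. z > 0."""
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("eye must be in front of the screen (z > 0)")
    # Project the screen edges onto the near plane as seen from the eye.
    scale = near / ez
    left = (-screen_w / 2 - ex) * scale
    right = (screen_w / 2 - ex) * scale
    bottom = (-screen_h / 2 - ey) * scale
    top = (screen_h / 2 - ey) * scale
    return [
        [2 * near / (right - left), 0.0, (right + left) / (right - left), 0.0],
        [0.0, 2 * near / (top - bottom), (top + bottom) / (top - bottom), 0.0],
        [0.0, 0.0, -(far + near) / (far - near), -2 * far * near / (far - near)],
        [0.0, 0.0, -1.0, 0.0],
    ]


def project_to_ndc(point, eye, screen_w, screen_h, near=0.1, far=100.0):
    """Apply the view translation (world -> eye space) and the off-axis
    projection, then divide by w to get normalized device coordinates."""
    proj = off_axis_projection(eye, screen_w, screen_h, near, far)
    # View matrix here is a pure translation by -eye (no rotation assumed).
    view_pt = [point[0] - eye[0], point[1] - eye[1], point[2] - eye[2], 1.0]
    clip = [sum(proj[r][c] * view_pt[c] for c in range(4)) for r in range(4)]
    return [clip[0] / clip[3], clip[1] / clip[3], clip[2] / clip[3]]
```

With this setup, the physical screen edges always land on the edges of the rendered image regardless of where the eye moves, which is what keeps the illusion anchored to the display.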

1/4 Super excited to share that my work was featured by @Meta in the Top-10 #AR effects of 2022, across millions of effects! #AugmentedReality

7

26

171

Made with GPU particles in #AugmentedReality. Optical flow + particles sampling body color + dynamic attractor!

9

13

124
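The "dynamic attractor" mentioned in the particle tweet above can be illustrated with a small CPU-side sketch. This is not the author's GPU implementation; it assumes a 2D softened inverse-square pull toward a single attractor with explicit Euler integration, and the function name `step_particles` and all parameter values are hypothetical.

```python
import math


def step_particles(positions, velocities, attractor,
                   strength=1.0, damping=0.98, dt=1 / 60, softening=0.05):
    """Advance 2D particles one time step toward `attractor`.
    Returns new (positions, velocities) lists; inputs are not mutated."""
    new_positions, new_velocities = [], []
    for (px, py), (vx, vy) in zip(positions, velocities):
        dx, dy = attractor[0] - px, attractor[1] - py
        # Softening keeps the force finite when a particle reaches the attractor.
        dist_sq = dx * dx + dy * dy + softening * softening
        inv = strength / (dist_sq * math.sqrt(dist_sq))
        # Euler step: accelerate toward the attractor, then damp the velocity.
        vx = (vx + dx * inv * dt) * damping
        vy = (vy + dy * inv * dt) * damping
        new_positions.append((px + vx * dt, py + vy * dt))
        new_velocities.append((vx, vy))
    return new_positions, new_velocities
```

Moving `attractor` every frame (for example, to a tracked hand position) is what would make it "dynamic"; on a GPU the same update would typically run once per particle in parallel.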

Made a real-time painting technique on a 3D model in #AugmentedReality. How would you use it?

12

6

122

8. That's a wrap.

Follow @Enuriru for more expert insights about AI and AR. I don't post bullshit like "how to create AI influencer in two minutes".

Retweet the original tweet if you like it:

16

7

115

My #AR portal implementation merges two isolated 3D scenes with a 100% seamless passing experience. No switching. No transitions.

Portals are double-sided: if there is something in front of or behind the portal, you can see it from the corresponding side.

Multiple portals supported.

5

11

109

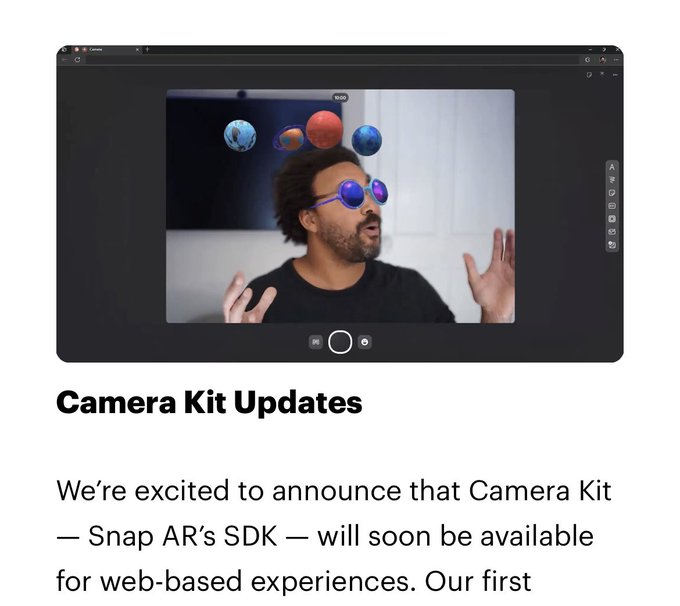

We just launched a new #AR experience for @coachella × @BLACKPINK! Give it a try and let me know what you think:

7

11

108

@_akhaliq they've already implemented a simplified version of it in TikTok app (in closed beta for AR developers). Works in real-time. I made a simple world effect with it:

One more turn with this #AR: added underwater transition. All real-time, based on #ML scene depth estimation. Built in @effecthouse.

3

14

104

4

8

108

We’ve made an #AR package for @coachella! Everyone who gets a welcome box will be able to try!

5

15

107

At least we can do simple Fresnel-based reflections #VisionPro

0

3

98
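For readers unfamiliar with the Fresnel-based reflections mentioned in the tweet above, a common real-time shortcut is Schlick's approximation, which makes surfaces more reflective at grazing angles. The sketch below is an illustration only, not the author's Vision Pro material; the function names and the default base reflectance of 0.04 (a typical value for non-metals) are assumptions.

```python
def fresnel_schlick(cos_theta, f0=0.04):
    """Schlick's approximation of Fresnel reflectance.
    cos_theta: cosine of the angle between view direction and surface normal.
    f0: reflectance when looking straight at the surface (assumed 0.04)."""
    cos_theta = max(0.0, min(1.0, cos_theta))  # clamp to the valid range
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5


def shade(base_color, reflection_color, cos_theta, f0=0.04):
    """Blend a base color with a reflection color by the Fresnel term."""
    k = fresnel_schlick(cos_theta, f0)
    return tuple(b * (1.0 - k) + r * k for b, r in zip(base_color, reflection_color))
```

Looking straight at the surface (`cos_theta = 1`) yields only the base reflectance, while a grazing view (`cos_theta = 0`) yields full reflection.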

1/5. How you can make a real-time #AugmentedReality garment with #AI in just 5 minutes. Thread!

4

20

95

About a year ago I spent weeks implementing custom physics for @SnapAR. Here is the video! #AugmentedReality

But now they made it native. So cool!

3

9

95

Today's experiment: measure tool using LiDAR on iPhone, built in #LensStudio. #SnapAR @SnapAR

Also working to bring this to @Spectacles

6

14

87

Stop using #AugmentedReality to stick low-poly 3D models to the floor. We can see them on Sketchfab even without AR. Make it interactive instead.

2

5

78