Aditya Yedetore

@AdityaYedetore

Followers

126

Following

60

Media

10

Statuses

24

Graduate student at Boston University in the Linguistics Department. Studying language acquisition, syntax, and semantics.

Joined September 2015

RT @yulu_qin: Does vision training change how language is represented and used in meaningful ways?🤔 The answer is a nuanced yes! Comparing…

0

23

0

RT @najoungkim: Here's work where we try to take analogies quite seriously, plus our takes about LLM experiments serving to raise the bar f…

0

5

0

I will be at #EMNLP2024 with some awesome tinlab people. Contact me if you would like to chat (esp. about 1. the similarities and differences between Connectionist/neural and Classical/symbolic computation, and 2. what compositionality, productivity, and systematicity are).

tinlab will be at #EMNLP2024! A few highlights:
* Presentations from @AdityaYedetore and @HayleyRossLing on neural network generalizations!
* I'm giving a keynote at @GenBench & organizing @BlackboxNLP
* Ask me about our faculty hiring & PhD/postdoc positions!
Details👇🧵

0

1

6

RT @najoungkim: tinlab at Boston University (with a new logo! 🪄) is recruiting PhD students for F25 and/or a postdoc! Our interests include…

0

21

0

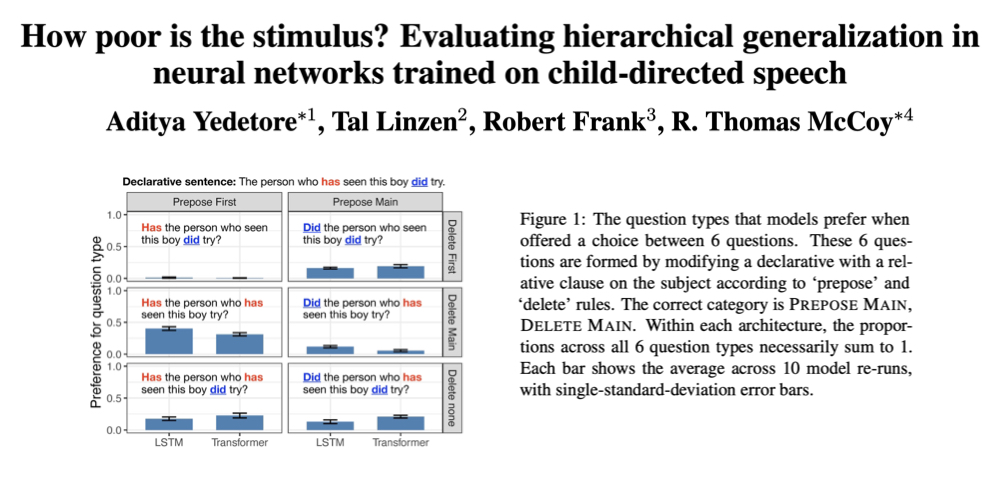

Prior work (and also my computational work with @RTomMcCoy @tallinzen and @bob_frank!) suggests that hierarchical generalization requires stronger inductive biases than modern neural networks have (e.g., a hierarchical bias).

aclanthology.org

Aditya Yedetore, Tal Linzen, Robert Frank, R. Thomas McCoy. Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). 2023.

1

0

4

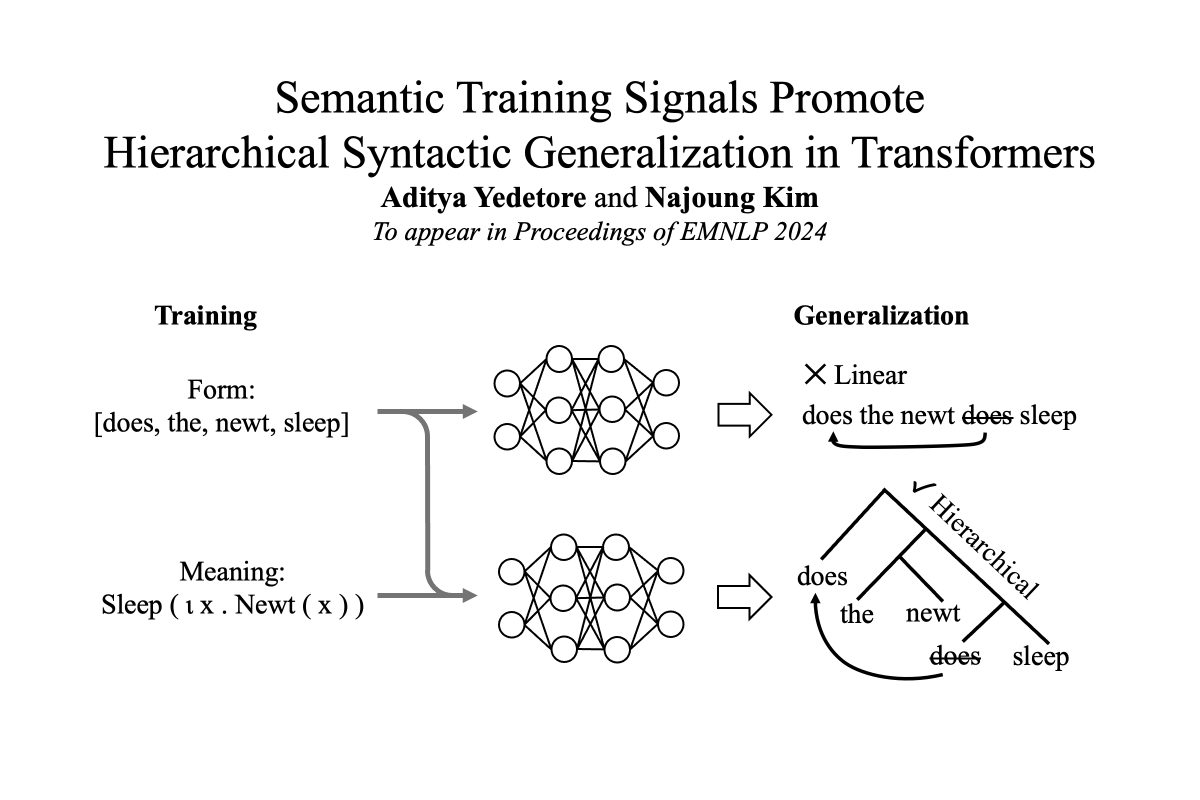

NEW PAPER! We (@najoungkim and I) find that training on mapping from form to meaning leads to improved hierarchical generalization.

2

20

84

RT @RTomMcCoy: 🌲Interested in language acquisition and/or neural networks? Check out our poster today at #acl2023nlp! Session 4 posters, 11…

0

5

0