In this project led by

@leeley18

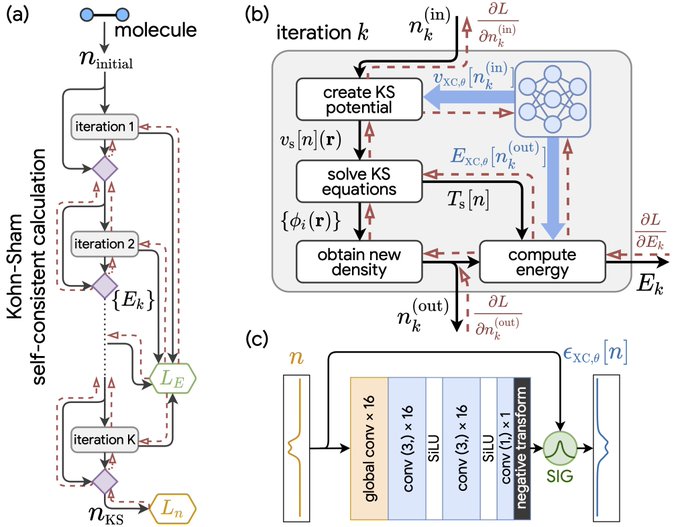

, we show that end-to-end training with differentiable physics results in extremely effective hybrid physics/ML models for density functional theory.


Replies

This paper shows that we can use neural nets to improve* Kohn-Sham DFT, one of the most popular methods of computational chemistry!

* We still have lots of work to do to make this practical, e.g., the paper only models 1D systems.
