Maneesh Agrawala

@magrawala

Followers: 3K
Following: 3K
Media: 120
Statuses: 1K

Forest Baskett Professor of Computer Science and Director of the Brown Institute for Media Innovation at Stanford.

Joined November 2009

Generative AI tools are amazing and also incredibly frustrating to use. Why? I finally wrote down a few thoughts on this: .

RT @jama1017: We introduce MoVer, a Motion Verification DSL that automatically checks if AI-generated motion graphics animations match you…

RT @raoanyi: You cannot miss this AIGC technical workshop in #SIGGRAPH2025. Thursday, August 14, 2025, 9:00am - 12:15pm PDT, Vancouver, Can…

RT @raoanyi: 🔥 Announcing the Creative Visual Content Generation, Editing & Understanding Workshop at #SIGGRAPH2025! For the first time in…

RT @YVinker: I'm very excited to announce our #SIGGRAPH2025 workshop: Drawing & Sketching: Art, Psychology, and Computer Graphics 🎨🧠🫖 🔗 ht…

RT @StanfordJourn: In the course, students learn the core principles of narrative journalism — how to utilize ingredients often found in fi…

journalism.stanford.edu

In the course, students learn the core principles of narrative journalism and apply them to nonfiction.

RT @raoanyi: Great experience at the opening remarks at CVM and HKUST AI Film Festival by @magrawala

RT @UofTDSI: Get your tickets and join us + @UofTMScAC for an upcoming #DataSciencesSpeakerSeries talk: "Unpredictable Black Boxes are Terr…

RT @Taesung: Excited to come out of stealth at @reveimage! Today's text-to-image/video models, in contrast to LLMs, lack logic. Images seem…

RT @raoanyi: Prof. Maneesh Agrawala @magrawala will give a keynote speech at the Computational Visual Media Conference 2025 and AMC AI Fil…

RT @wobbrockjo: Thank you so much to the #UIST2024 community for recognizing our $1 gesture recognizer work from 2007 with the Lasting Impa…

RT @Miamiamia0103: Happy to have shared artistic insights to help bring our new sketch-to-image generation tool to life 🧑‍🎨🎨! Check out o…

And if you are at #uist2024, come to our talk, poster, and demo and say hello! @VSarukkai and @Miamiamia0103 are here.

Be sure to try the working demo of our new sketch to image generation tool. The demo code and tutorial video are available from the paper website: .

I am presenting Block and Detail: Scaffolding Sketch-to-Image Generation at @ACMUIST today, 3-4PM EST, in the New Visualizations paper session! Paper: Website: Work with Lu Yuan @Miamiamia0103 @magrawala @kayvonf.

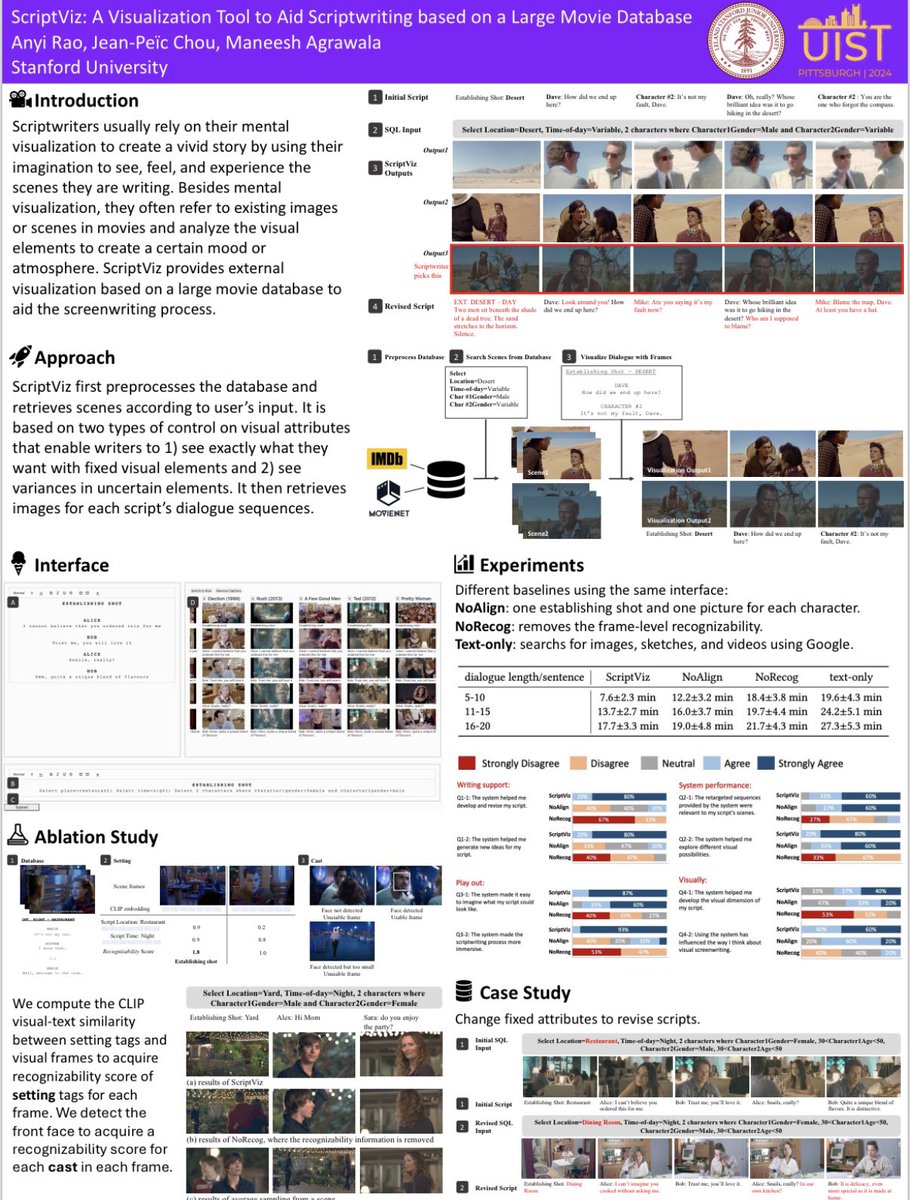

RT @raoanyi: I will present ScriptViz, A Visualization Tool to Aid ScriptWriting based on a Large Movie Database at Mon, 14 Oct | 1:55 PM -…

SparseCtrl is a technique for controlling text-to-video generation that builds on AnimateDiff and ControlNet. @GuoywGuo will present the technique at #ECCV2024 on Wed Oct 2.

Excited to present our new work, SparseCtrl. It's about boosting the controllability of existing T2V models in an efficient and practical way, and it's compatible with AnimateDiff. 🥳 arXiv report: · project page: · code: coming soon

We just released the code for FlashTex: text-to-relightable texture generation for 3D geometry. @kangle_deng will present this work tomorrow at #ECCV2024.

flashtex.github.io

[1/2] 📢 I'll present "FlashTex: Fast Relightable Mesh Texturing with LightControlNet" at #ECCV2024's Oral Session tomorrow! 🎉 Join me to explore how our generated textures can be properly relit in various lighting environments. ⚡ 📅 Oral: Tue, Oct 1st, 2 PM. 📍 Poster: #159
