Ihor Stepanov

@ihor_step

Followers: 167

Following: 2K

Media: 11

Statuses: 323

I am the CEO and co-founder of Knowledgator. We are advancing the #information_extraction field with #opensource #AI models.

Ukraine

Joined July 2014

RT @Cohere_Labs: Don't forget to tune in tomorrow, August 26th as @ihor_step discusses Zero-Shot Named Entity Recognition with GLiNeR. Lea…

RT @Cohere_Labs: Join us for a deep dive into Zero-Shot Named Entity Recognition with GLiNeR presented by @ihor_step on Tuesday, August 26t…

RT @knowledgator: 🚀 GLiNER x SmolLM: a new joint encoder-decoder architecture 🚀 We are excited to release a new kind of GLiNER model built…

RT @knowledgator: 🚀 Our largest study on zero-shot text classification is out! 📄 We surpass cross-encoders while b…

RT @ClementDelangue: Every tech company can and should train their own deepseek R1, Llama or GPT5, just like every tech company writes thei…

RT @gm8xx8: GLiClass-V3: A family of encoder-only models that match or exceed DeBERTa-v3-Large in zero-shot accuracy, while delivering up t…

RT @knowledgator: 🚀 Introducing GLiClass‑V3 – a leap forward in zero-shot classification! Matches or beats cross-encoder accuracy, while b…

RT @GoogleDeepMind: An advanced version of Gemini with Deep Think has officially achieved gold medal-level performance at the International…

RT @knowledgator: 🚀 Introducing GLiNER-X: Breaking Language Barriers in Multilingual Zero-Shot NER! 🌍 We’re excited to roll out our latest…

RT @knowledgator: 🚀 GLiNER just reached 2K stars on GitHub, and it’s now 3x faster! We’re thrilled to see this project grow into a core t…

RT @knowledgator: 🎉 Thanks to @GoogleStartups Ukraine Support Fund, we are receiving funding + long-term mentorship from @Google to grow ou…

RT @ClementDelangue: Biased but in my opinion ZeroGPU is one of the most impressive pieces of infra in AI that no one is talking about. Po…

RT @Dorialexander: Breaking: @pleiasfr releases a new generation of small reasoning models for RAG and source synthesis. Pleias-RAG-350M an…

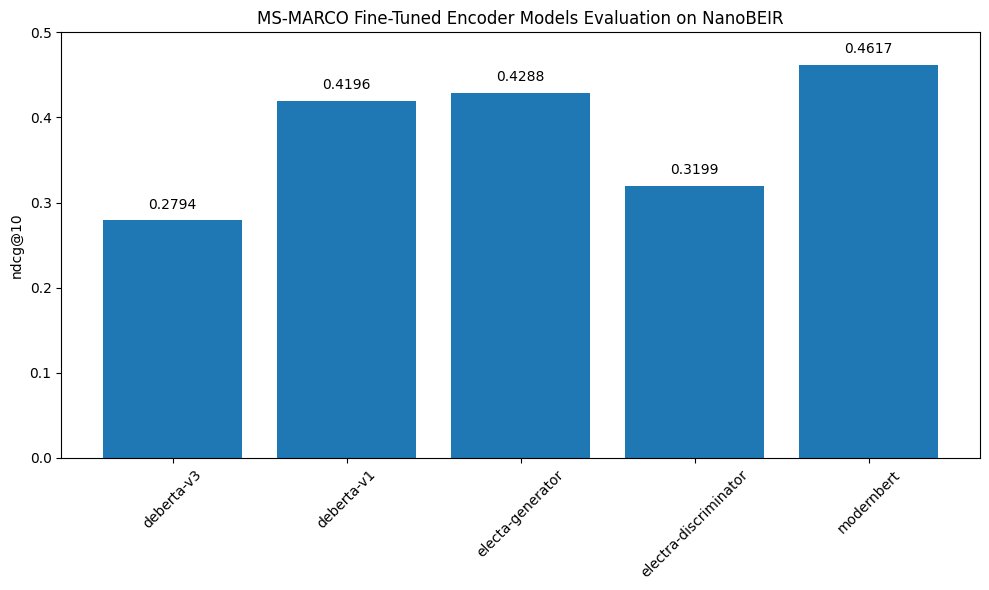

It has become popular to think that architecture no longer matters: that we have reached a local minimum in architectural design and that data is now the limiting factor. This work shows empirically that this is not the case; these aspects are more interesting and run deeper than that.

ModernBERT or DeBERTaV3? What's driving performance: architecture or data? To find out, we pretrained ModernBERT on the same dataset as CamemBERTaV2 (a DeBERTaV3 model) to isolate architecture effects. Here are our findings:

RT @gm8xx8: ModernBERT vs DeBERTaV3. To isolate architecture from pretraining effects, ModernBERT is trained on the same dataset as CamemBE…

RT @knowledgator: ⚡ Introducing truly Flash DeBERTa implementation ⚡ DeBERTa remains a top-performing model in Named Entity Recognition, T…

Knowledgator/FlashDeBERTa (github.com): Truly flash implementation of the DeBERTa disentangled attention mechanism.

RT @sijun_tan: Hey @sama, we know you're planning to open-source your reasoning model, but we couldn’t wait. Introducing DeepCoder-14B-Prev…
