Joshua Gu

@astrogu_

Followers

34

Following

24

Media

2

Statuses

30

CS PhD student @MIT, @MIT_CSAIL, @MITEECS 👨💻 | @LMCache Lab | Previous: BS @UChicago. Research on AI Systems

Chicago, IL

Joined December 2023

Excited to share our latest work, 𝗠𝗘𝗧𝗜𝗦, at #SOSP2025. This one’s special as it’s my first full CS project from start to finish: from early brainstorming and iterating on ideas to running experiments and writing the paper. Learned a ton, and perseverance finally paid off! 🚀

With RAG and agents becoming ubiquitous in LLM systems, tuning quality and performance JOINTLY is essential to achieve the best LLM quality-of-experience. Our paper at SOSP this year addresses this exact tradeoff! 🔥

0

3

7

🔥 Tencent x @lmcache.

🚀 𝗧𝗲𝗻𝗰𝗲𝗻𝘁 x 𝗟𝗠𝗖𝗮𝗰𝗵𝗲 Collaboration: Integrating 𝗠𝗼𝗼𝗻𝗰𝗮𝗸𝗲 Store for Enhanced LLM Inference Caching! 🥮🥮 Excited to share insights from a powerful collaboration between Tencent engineers and the LMCache Lab team! 🎉 With the help from Tencent engineers,

0

0

0

RT @lmcache: 🏆 Exciting news from #EuroSys2025: Our work on CacheBlend won Best Paper! 🚀 CacheBlend delivers the first-ever speedup for RA…

0

8

0

FAST!!! 🚀 Thrilled to see all the hard work pay off!

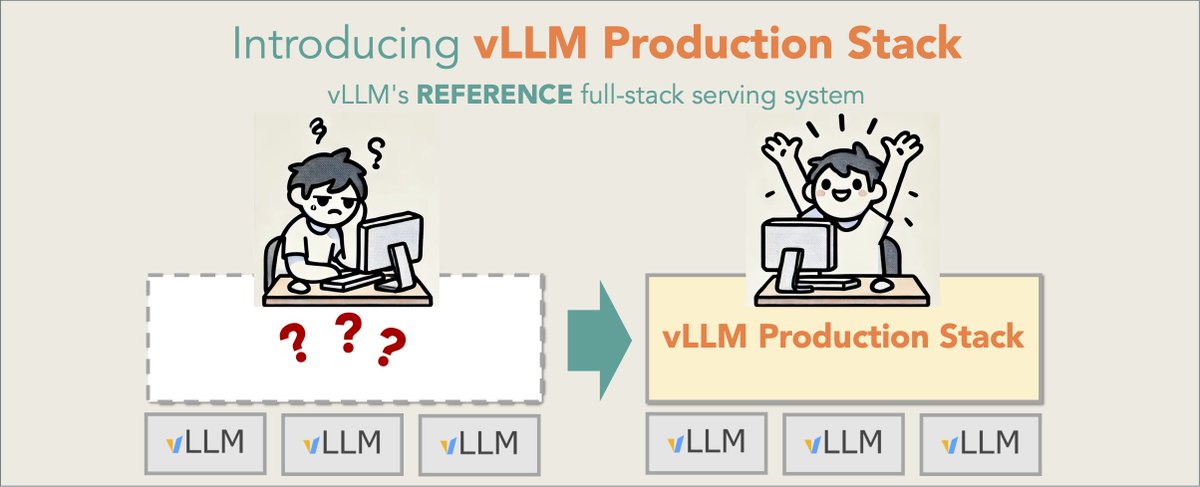

Our open-source LLM cluster deployment solution is 10x faster than the SOTA OSS solution. Check out the vLLM Production Stack! 🤩🤩🤩 Since Jan 2025, vLLM Production Stack has been the reference open-source vLLM inference cluster solution with advanced KV cache offloading and K8s

0

0

1

🚀 vLLM Production Stack is here!

🚀 We're thrilled to announce vLLM Production Stack, an open-source, enterprise-grade LLM inference solution that is now an official first-party ecosystem project under vLLM! Why does this matter? A handful of companies focus on LLM training, but millions of apps and businesses

0

0

2