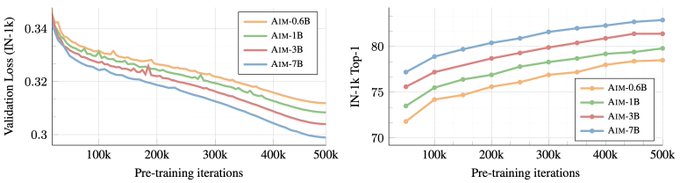

Our work on learning visual features with an LLM approach is finally out. All the scaling observations made on LLMs transfer to images! It was a pleasure to work under @alaaelnouby's leadership on this project, and this concludes my fun (but short) time at Apple! 1/n


This work is another hint confirming the intuition that we are converging across modalities and that a single model may emerge as a form of AGI. I don't know how far we are, but I am very bullish that efforts like Gemini or GPT may get us across the line. 2/n
