Jonas Beck

@__jnsbck__

Followers

102

Following

190

Media

12

Statuses

52

Probabilistic Inference in Mechanistic Models. PhD student with @CellTypist, @uni_tue.

Tübingen, Deutschland

Joined January 2022

RT @sbi_devs: 🚀 Join the 4th SBI Hackathon! 🚀 The last hackathon was a fantastic milestone in forming a collaborative open-source communit…

RT @KadhimKyra: Looking forward to the #Bernsteinconference tomorrow where I'll be presenting work developing a preliminary model of the mo…

Thrilled to be at #BernsteinConference next week to present my most recent work ⬇️ on how probabilistic numerical methods can help with parameter inference in Hodgkin-Huxley models. If you’re curious or want to chat about fitting biophysical models, come by Poster 10 on Tue 2:15.

Interested in reliable parameter inference for ODEs with probabilistic solvers? I am excited to present my latest work w. @nathanaelbosch, @deismic_, @KadhimKyra, @jakhmack, @PhilippHennig5, @CellTypist, @ml4science at #ICML2024 this year. 1/n

We built a simulator for biophysical neuron models that is
- all Python
- accelerated
- fully differentiable
- capable of morphological detail
- scalable to >1M params

Very proud to be part of this! Much respect to @deismic_, who put most of it together. See what it can do ⬇️

How can we train biophysical neuron models on data or tasks? We built Jaxley, a differentiable, GPU-based biophysics simulator, which makes this possible even when models have thousands of parameters! Led by @deismic_, collab with @CellTypist @ppjgoncalves

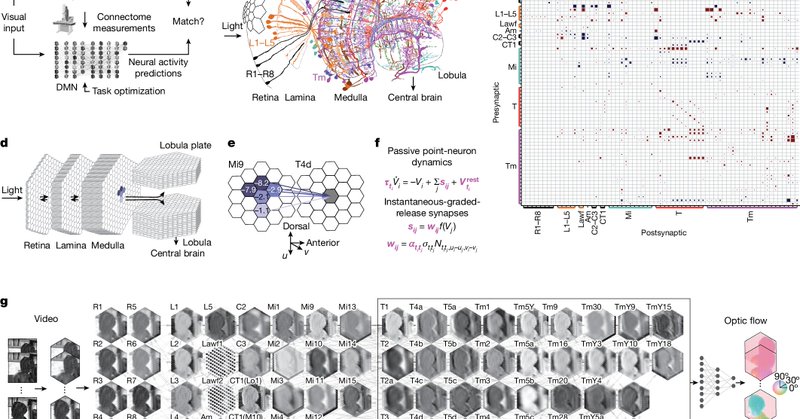

RT @lappalainenjk: Biggest joy and honour leading this project at the intersection of visual neuroscience and ML to a successful finish! Pa…

nature.com

Nature - A study demonstrates how experimental measurements of only the connectivity of a biological neural network can be used to predict neural responses across the fly visual system at...

RT @sbi_devs: We just released a new version of `sbi`, and this one has _a ton_ of new features! Many of these features are thanks to more…

RT @mackelab: @gloecklermanuel and @deismic_ are at ICML to present our work on `All-in-one simulation-based inference`. Join us at ORAL 6F…

RT @alfcnz: It’s 2020. @samgreydanus pushes on @arXiv a remarkable unpublished paper. 4 years later, @hippopedoid helps getting it publishe…

If you want to find out more, check out our code and paper or come say hi at #ICML2024 (Hall C 4-9 #1313 Tuesday 11:30) if you want to talk PN or ML + Neuro. @deismic_ and I will be there. 12/12.

github.com

Contribute to berenslab/DiffusionTempering development by creating an account on GitHub.
