Arthur Holland Michel

@WriteArthur

Followers

5K

Following

2K

Media

223

Statuses

4K

Writer. Friendly reminders about spooky futures.

Joined August 2013

This is probably not the smartest time to fly a bunch of unusual aircraft over a densely populated area in the middle of the night.

On Wednesday into Thursday, there will be a night training exercise over the D.C. area involving multiple aircraft, @NORADCommand announces. Expect these to be flying around! >>

1

3

4

Not so long ago, the biggest topic in defense tech was Silicon Valley’s reluctance to work with the Pentagon. The total reversal of that dynamic has been nothing short of mind-blowing. https://t.co/MIoSyBbhqL

1

0

3

today in “no we swear it’s not a killer robot”: artillery shells that coordinate with each other to take out multiple targets in a single barrage. https://t.co/tuSWtfRO1G

twz.com

The baseline 155mm XM1180 anti-armor round will offer Army artillery units a valuable new way to hit enemy tanks, even if they are moving.

0

0

2

🚨 SCOOP 🚨 Apple ran a conference for cops, hosting at Cupertino. Called the Apple Global Police Summit, it welcomed cops from seven countries to talk about how they use Apple tech, from iPhones to CarPlay to Vision Pro. And yes, for surveillance apps. https://t.co/gQVSLYSyly

forbes.com

At Apple’s secretive Global Police Summit, cops from 7 countries learned how to use Apple products like the iPhone, Vision Pro and CarPlay for surveillance and policing.

17

425

837

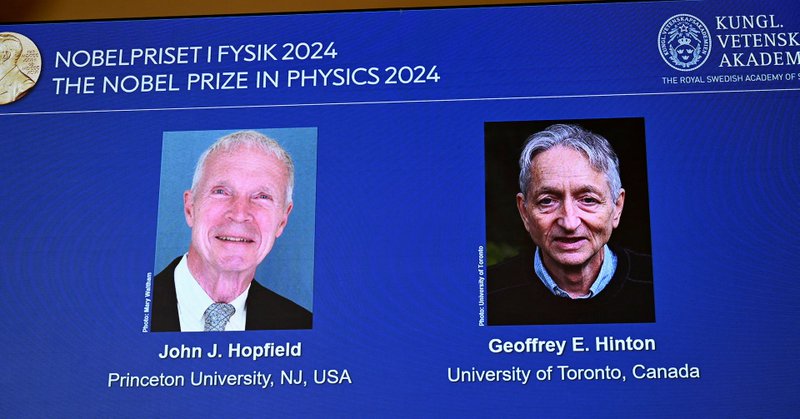

If machine learning can win the physics Nobel, where do we draw the line? Everything is physics if you squint hard enough

2

2

11

Last year Geoffrey Hinton made global headlines for warning about the “existential risk” posed to humanity by AI. Now he’s getting a Nobel Prize for pioneering the technology.

1

0

0

Well, there it is. The first Nobel Prize for modern AI—awarded to Hinton and Hopfield in physics. https://t.co/IWne29K0wH

reuters.com

John Hopfield and Geoffrey Hinton won for discoveries that paved the way for the AI boom.

0

0

1

NEW: License plate readers are enabling private investigators and police to track vehicles with political bumper stickers and homes with lawn signs w/ @mattburgess1

https://t.co/Dz59ocKYuf

4

73

123

This points to a very frightening and under-explored risk with autonomous mobility. Vehicles can be easily blocked and passengers are powerless to get themselves out of trouble.

🚨Warning to women in SF 🚨 I love Waymo but this was scary 😣 2 men stopped in front of my car and demanded that I give my number. It left me stuck as the car was stalled in the street. Thankfully, it only lasted a few minutes... Ladies please be aware of this

0

0

1

New from 404 Media: Google is serving AI images of mushrooms when users search for some species. Very risky, potentially fatal error for foragers who are trying to find what mushrooms are safe to eat. Could have "devastating consequences," one expert said.

404media.co

AI-generated images of mushrooms that look nothing like the real species could spread misleading and dangerous information.

27

434

1K

It has always bugged me that the debate on military AI portrays autonomy and accountability as binary conditions: is this weapon making a kill decision on its own, and can someone be held accountable if that decision turns out to be wrong? That's a bad way to frame the issue.

3

1

4

I’ll stop here for now. There’s a lot more detail in the paper. But also, this is very much just the seed of the idea. So I’m eager for any feedback or thoughts!

0

0

0

My hope is that this metaphor could lead to discussions—and ultimately to answers—that are more practical, more specific, and more grounded in realistic solutions.

1

0

0

For any military AI system, we can ask: Will the use of this technology expand or contract the degree to which anyone in the organization will be held accountable for any harms resulting from any decisions in which it plays a role?

1

0

2

This metaphor (which draws from the cybersecurity concept known as the "attack surface") drives a new set of questions that go beyond the tired old binaries...

1

0

1

To wit: the accountability surface is the total degree to which humans in a military organization can be appropriately held accountable for harms arising from any decision in that organization.

1

0

2

We need a different approach. In this paper for @CIGIonline, I proposed a new way of conceptualizing military accountability in our era of increasingly automated decisions on the use of force, using a metaphor I call "the accountability surface." https://t.co/50eJ9PdMXq

cigionline.org

There are many people, decisions and technologies involved in the process leading to the use of force in military operations. Each of these touch points is a locus for applying — or failing to apply...

1

0

2

So treating these things as binaries fails to capture the many different varieties of military automation, and misrepresents the sociotechnical facts of automating warfare.

1

0

1