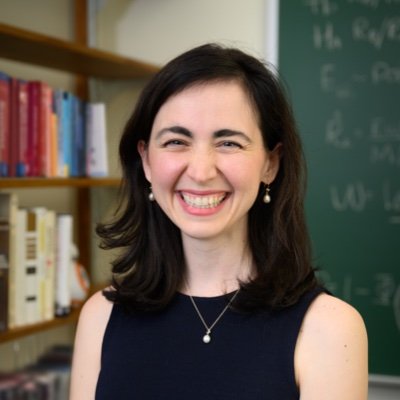

Lucy D’Agostino McGowan

@LucyStats

Followers: 15K • Following: 8K • Media: 159 • Statuses: 749

Biostatistician • Assistant Prof @WakeForestStats • Postdoc @jhubiostat • PhD @vandy_biostat • SoMe Associate Editor @AmjEpi 🎙 @casualinfer • @WomeninStat

Joined September 2013

📈 I taught neural networks this week, so BEHOLD, an ode to @daniela_witten in the form of a shiny application: "it's just a linear model" neural nets edition. #rstats

7

58

317

📣 July 22-25 I’ll be teaching an online short course on how to read and understand statistics commonly found in medical literature — tell your clinician friends! @StatHorizons

0

1

12

RT @WakeForestStats: 🖐️ There are five days left to apply to our M.S. in Statistics program at @WakeForest! Our teacher-scholar faculty wo…

0

4

0

#WSDS2024 @SarahLotspeich teaching us about missing and misclassified wells! 👏 👏

@SarahLotspeich @LucyStats @ashley__mullan @vandy_biostat 🧪 @SarahLotspeich (Assistant Prof), "Missing and Misclassified Wells: Challenges in Quantifying the HIV Reservoir from Dilution Assays"

0

0

13

RT @SarahLotspeich: I was slow to post, but @CarlyLBrantner and @LucyStats followed up with more awesome research!

0

2

0

RT @WakeForestStats: Day 3️⃣ of Wake Stats at @AmstatNews #WSDS2024! Three of our faculty and former students will be speaking, panel-ing,…

0

2

0

Baby’s first conference 🥰 #wsds2024. Shout out to the lovely audience member who held him for the last bit of my talk when he started getting chatty! And to @SarahLotspeich & @MAshStat for being our fearless roadtrip buddies!

3

2

90

RT @dleannlong: Excited to hear from my @wakeforestmed colleague Kathy Lancaster (@prof_klancaster) at #WSDS2024 about time-varying effect…

0

2

0

RT @WakeForestStats: Day 2️⃣ of Wake Stats at #WSDS2024! Four of our faculty and current and former students will be speaking, panel-ing, a…

0

1

0

RT @WakeForestStats: 🆕📄 Starting the week with a new faculty publication! Dr. @LucyStats (Assistant Prof) has a new paper out this week in…

0

3

0

RT @WakeForestStats: 👏 @LucyStats is at it again, and @StatStaci5 (Associate Prof and Associate Chair) is here with her! Join them and fell…

0

1

0

⏰ Session 48 "Infection Insights: Women's Contributions to Infectious Disease Modeling" is starting now for #IDWSDS2024! We have four incredible speakers from @umichsph, @WakeForestStats, and @EmoryRollins.

1

0

10

RT @WakeForestStats: 🏃‍♀️ And we're off and running! @LucyStats (Assistant Prof) is the first of many Wake Stats faculty and alums who will…

0

1

0

RT @WakeForestStats: 🏆 Wake Stats took home first place yesterday at "Hit the Bricks!" They ran the most laps and raised over $960 for canc…

0

4

0

RT @edwardhkennedy: PSA - the main ideas behind “causal ML” and “double machine learning” go back at least 40 years. Here is an estimator f…

0

24

0

RT @ashley___mullan: 🗓️ Only two more weeks until International Day of Women in Statistics and Data Science (IDWSDS) organized by @cwstat!…

0

2

0