Hao Sun - RL

@HolarisSun

Followers: 890 · Following: 537 · Media: 36 · Statuses: 166

4th-year PhD student at @Cambridge_Uni. IRL x LLMs. Superhuman intelligence needs RL, and LLMs help humans learn from machine intelligence.

Cambridge, UK

Joined October 2022

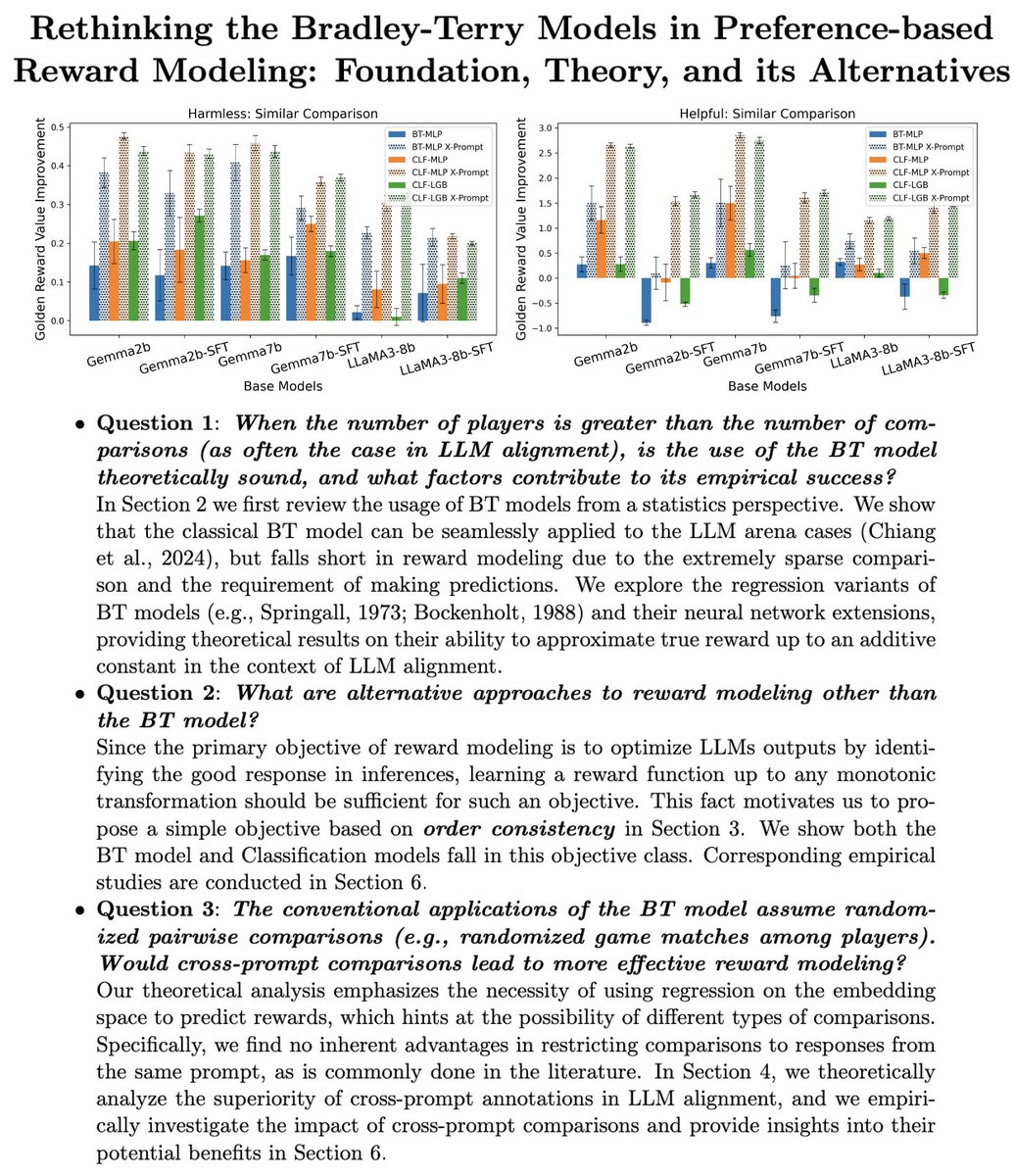

I have been working on reward modeling (and inverse RL) for LLMs for the past 1.5 years. We built reward models (RMs) for prompting, dense RMs to improve credit assignment, and RMs learned from SFT data. However, many questions remained unclear to me until this paper was finished. 🧵


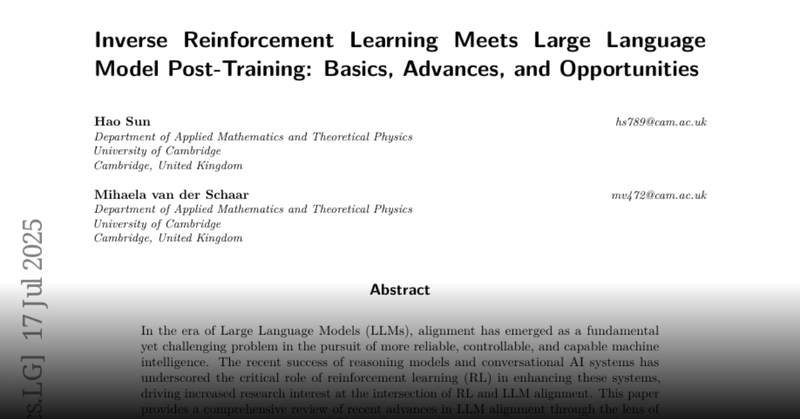

🚀 RL is powering breakthroughs in LLM alignment, reasoning, and agentic apps. Are you ready to dive into the RL x LLM frontier? Join us at the @aclmeeting ACL'25 tutorial "Inverse RL Meets LLM Alignment" this Sunday in Vienna 🇦🇹 (Jul 27th, 9am). 📄 Preprint at huggingface.co


If you're interested in RLHF and reward modeling, check it out, and feel free to chat with Jef at #ICML2025! 📄 🔗 🤝 Joint work with @ShenRaphael and @jeanfrancois287.

github.com

Active reward modeling with last layer Fisher Information (ICML'25) - YunyiShen/ARM-FI


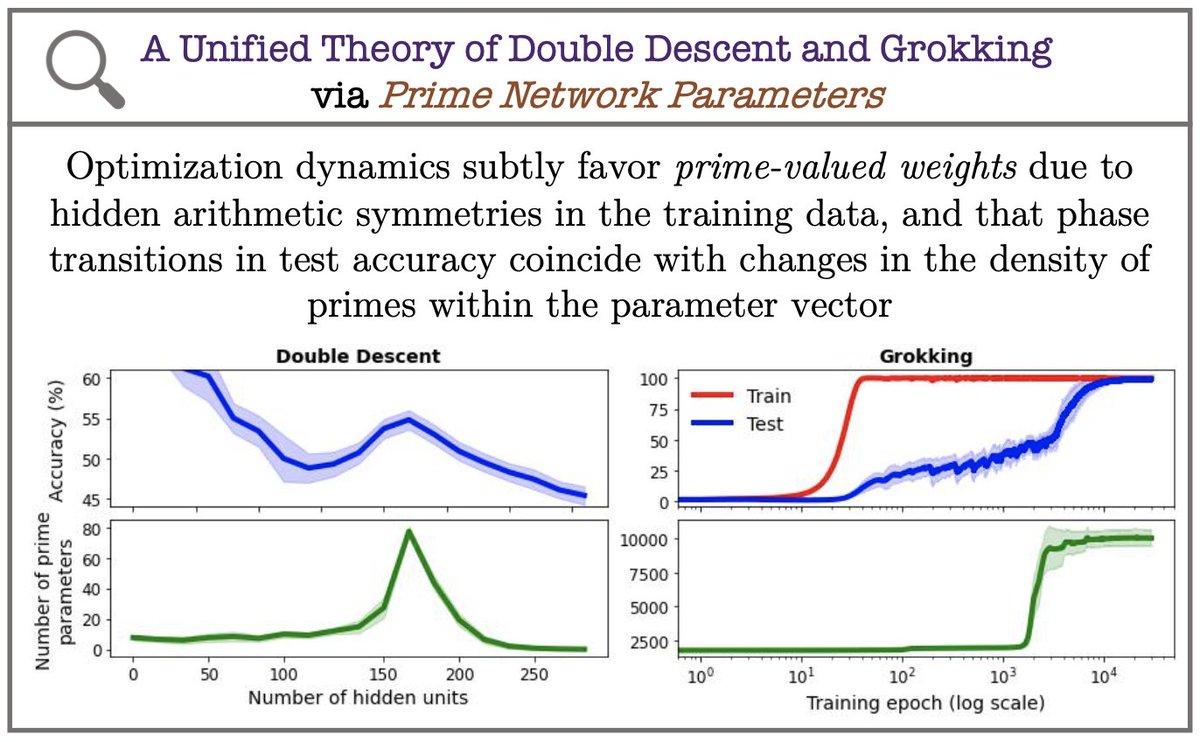

Unfortunately, I won't be able to attend #ICML2025 because of a long-pending Canadian visa application: submitted in Oct 2023, still pending after 625 days 🙂↔️. That said, I'm excited to share our paper on Active Preference Learning & Understanding Reward Models 🧵👇


RT @jeanfrancois287: 📢 New Paper on Process Reward Modelling 📢 Ever wondered about the pathologies of existing PRMs and how they could be r…


RT @jeanfrancois287: Happy to share that our paper on "Active Reward Modeling" has been accepted to ICML 2025! #ICML2025 The part I like…


OpenReview Justice!

I'm honestly a bit surprised, but whatever! Worth celebrating. Here is our arXived paper, with @HolarisSun and @jeanfrancois287.
